All pages and tutorials about Microsoft Azure.

Microsoft Azure

- Useful Azure links/tools

- Create HTTPS 301 redirects with Azure Front Door

- Everything you need to know about Azure Bastion

- I tried running Active Directory DNS on Azure Private DNS

- ARM templates and Azure VM + Script deployment

- Automatic Azure Boot diagnostics monitoring with Azure Policy

- Wordpress on Azure

- New: Azure Service Groups

- In-Place upgrade to Windows Server 2025 on Azure

- Installing Windows Updates through PowerShell (script)

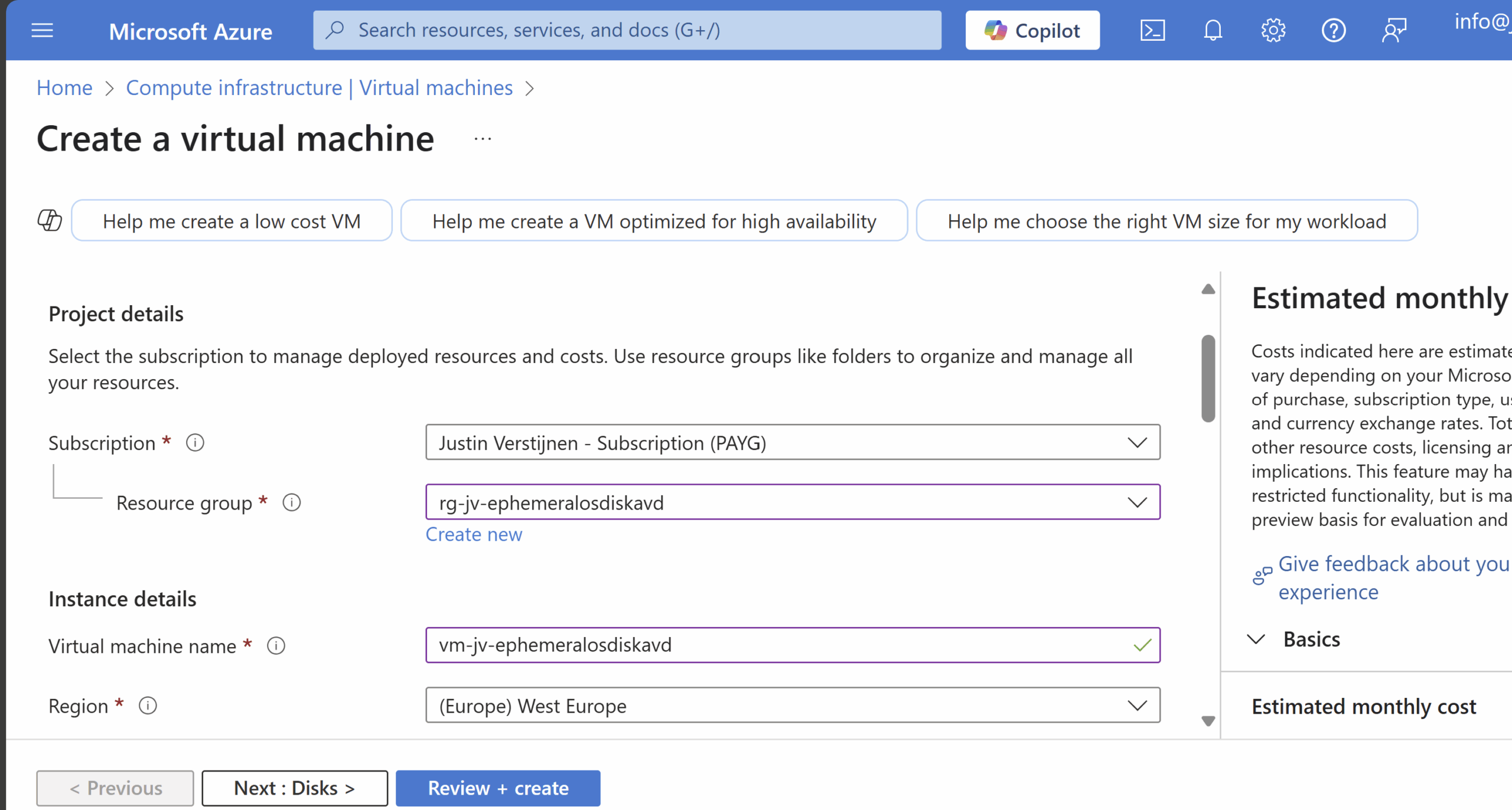

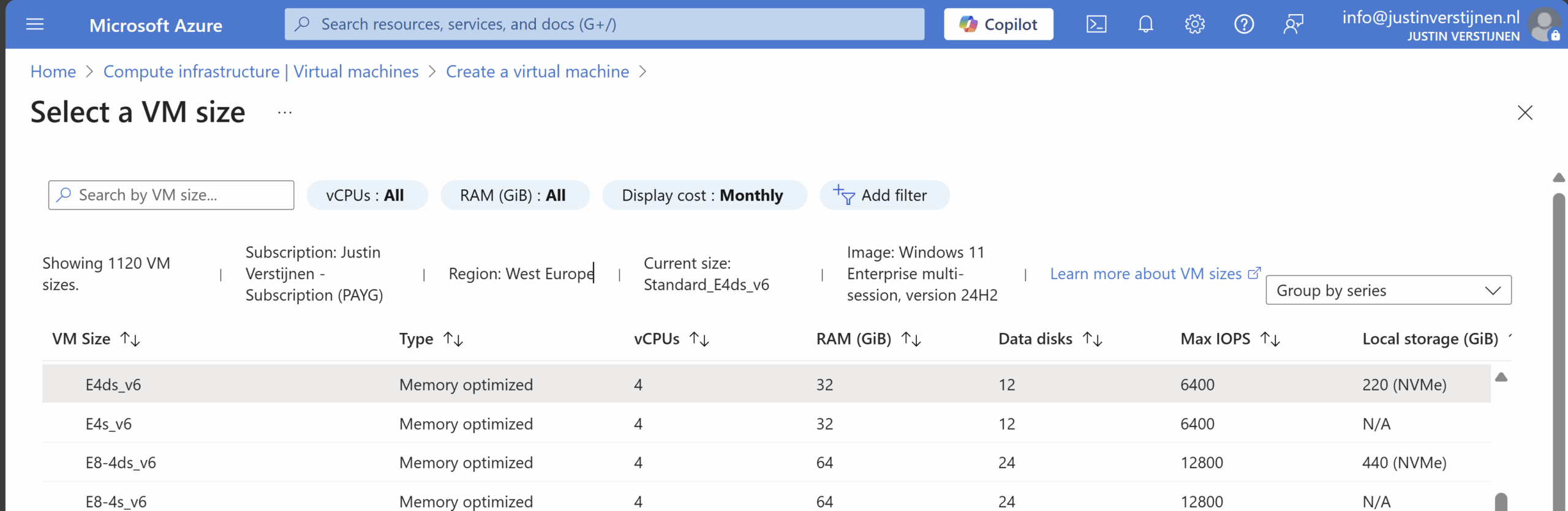

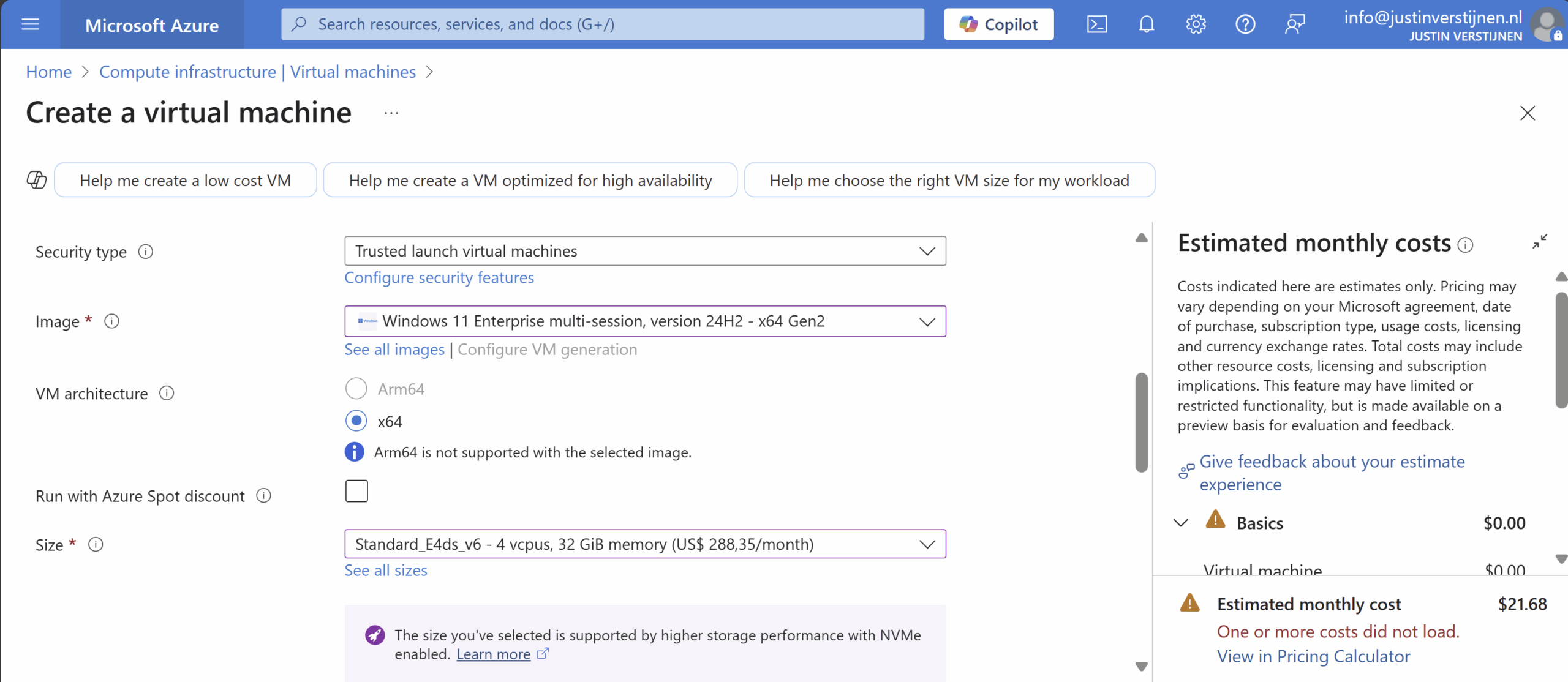

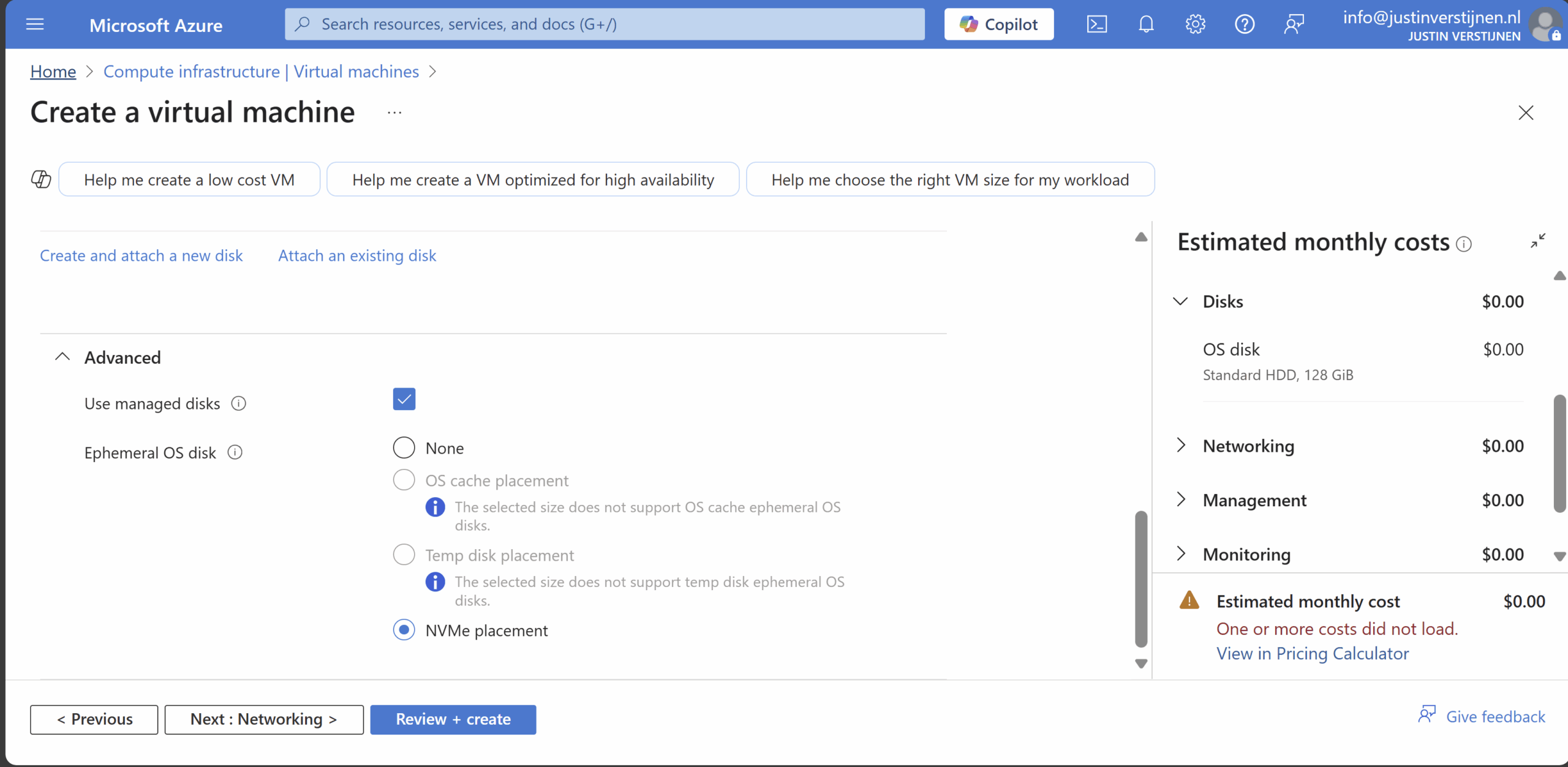

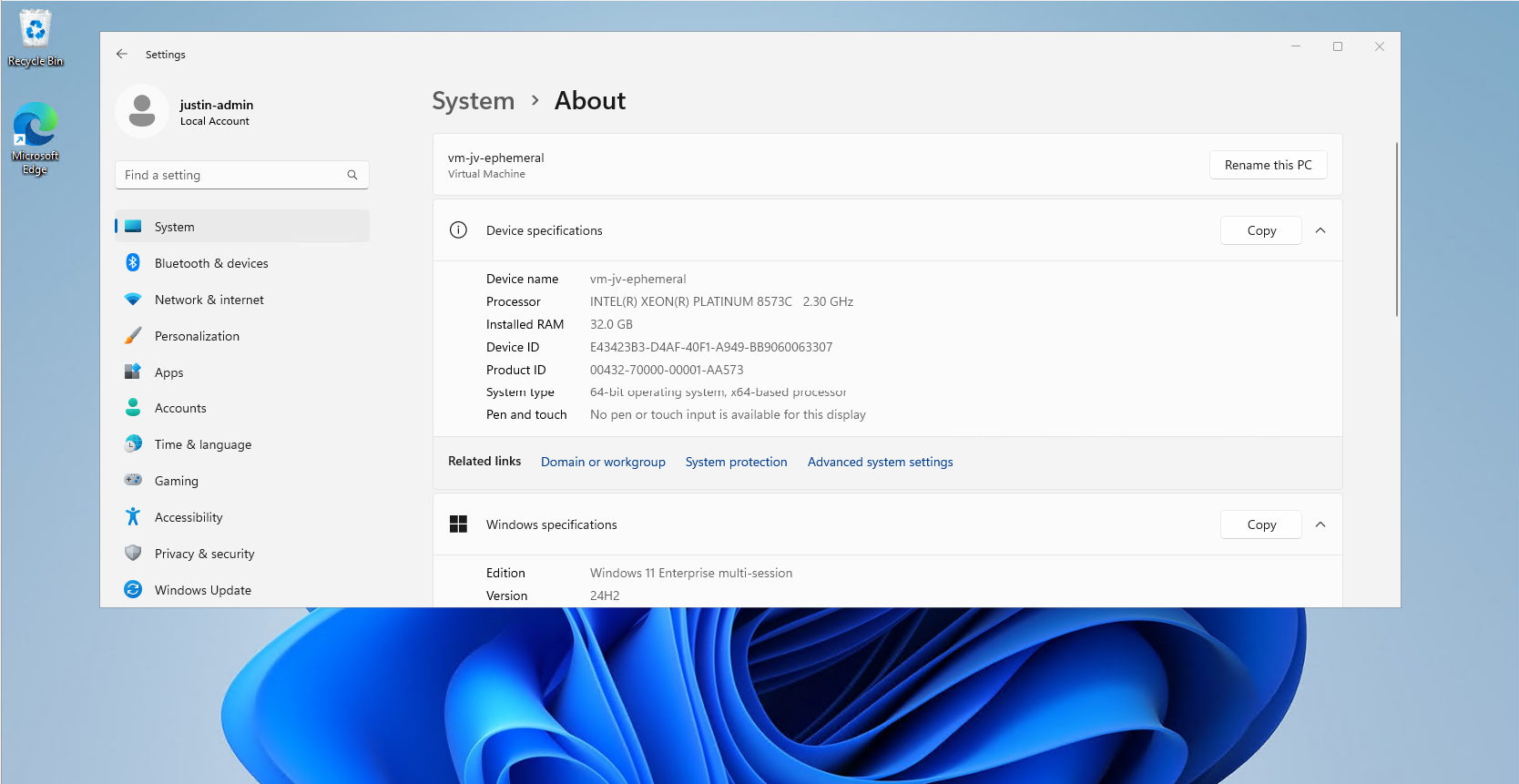

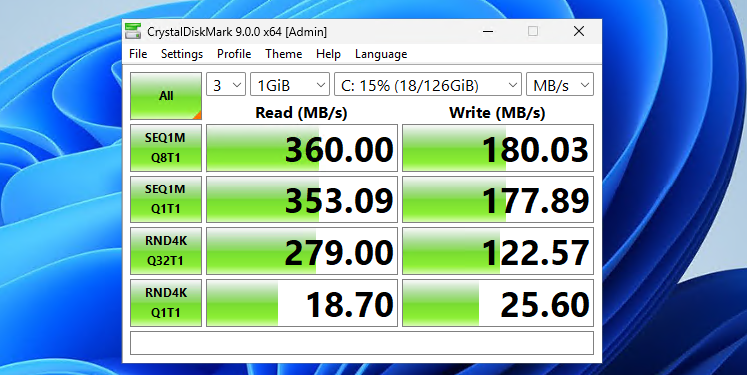

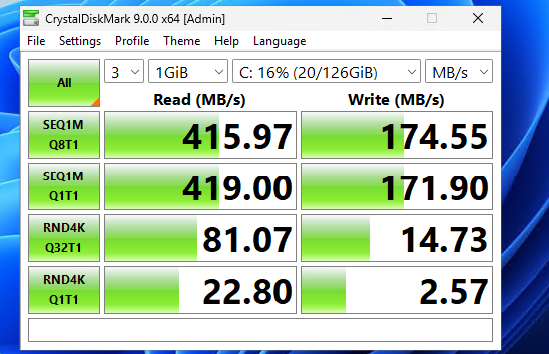

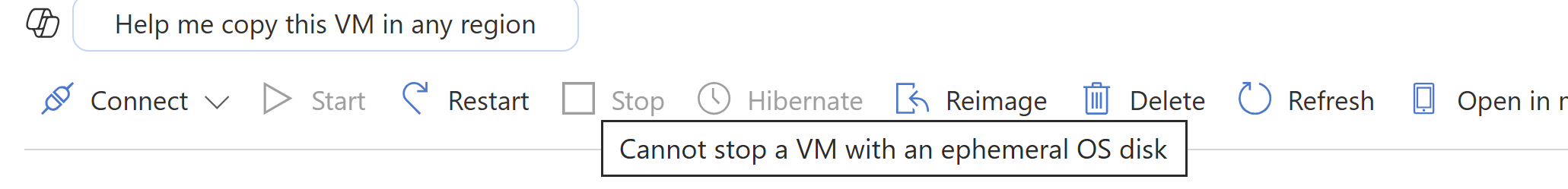

- Use Ephemeral OS Disks in Azure

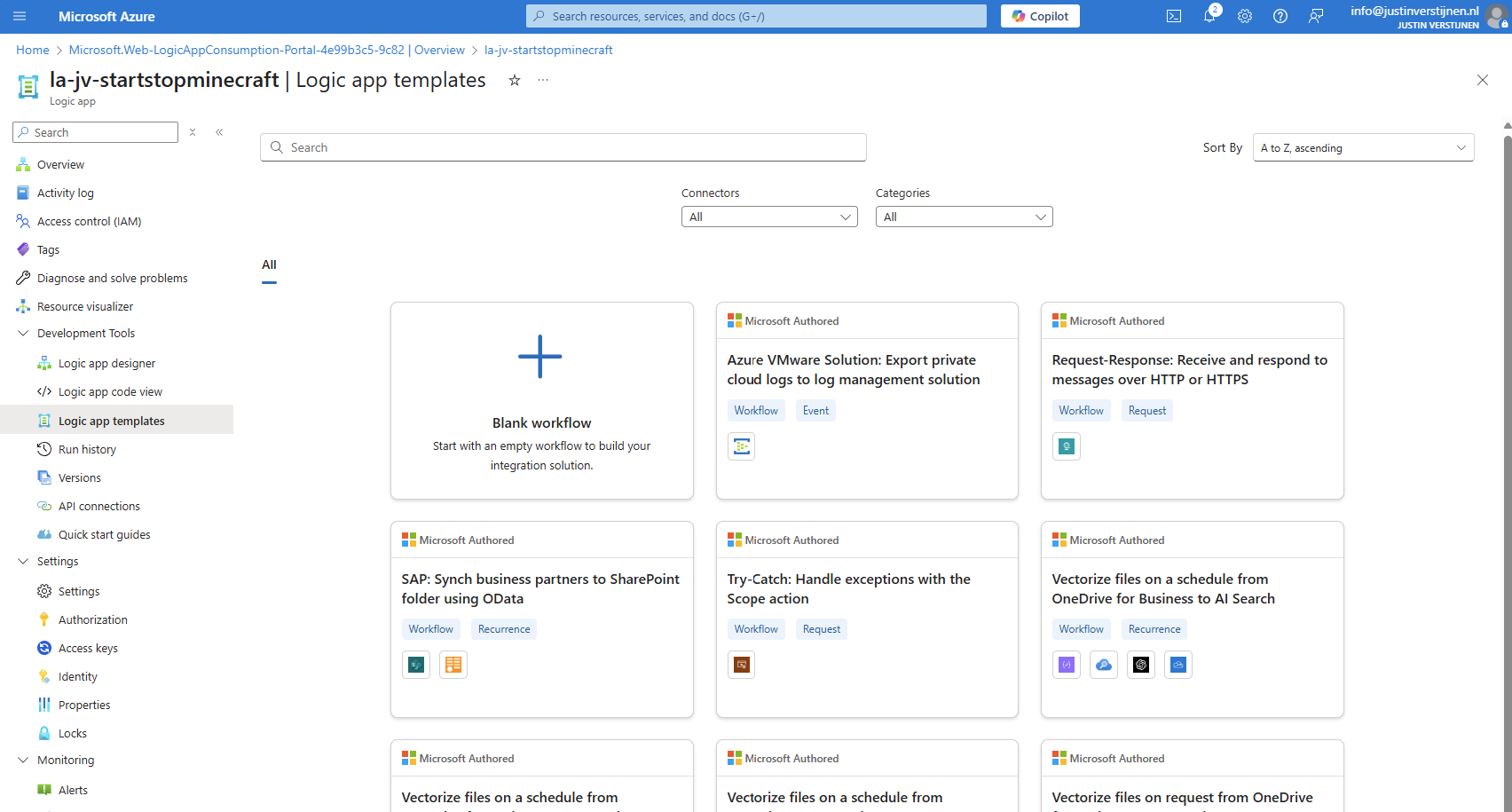

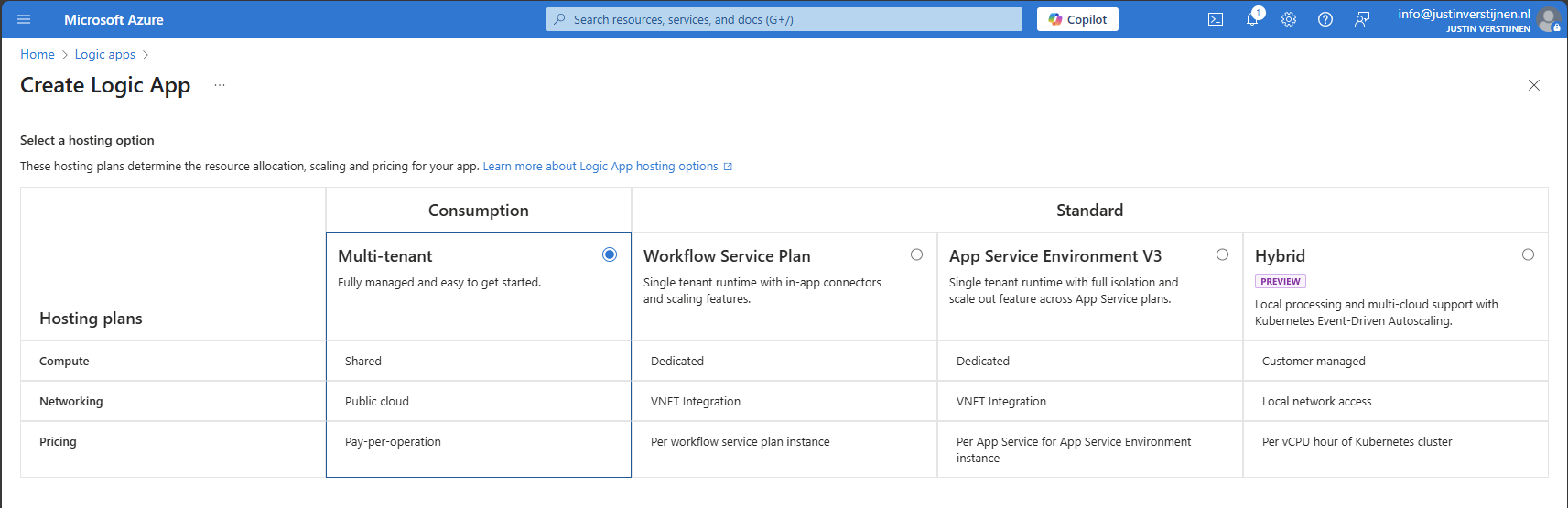

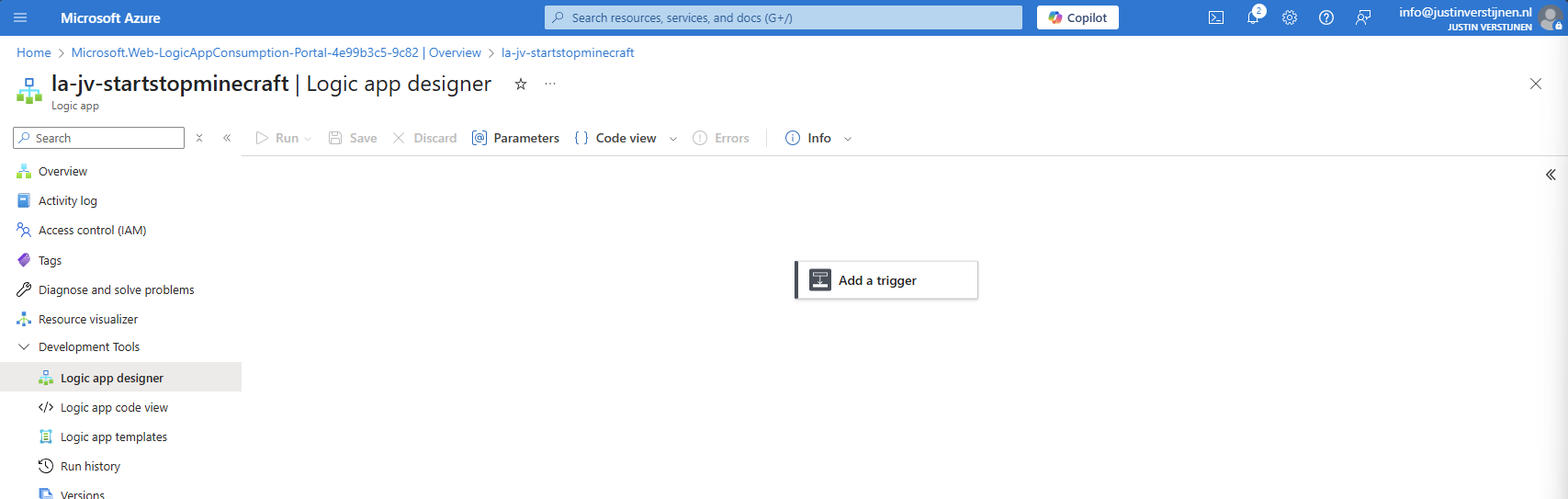

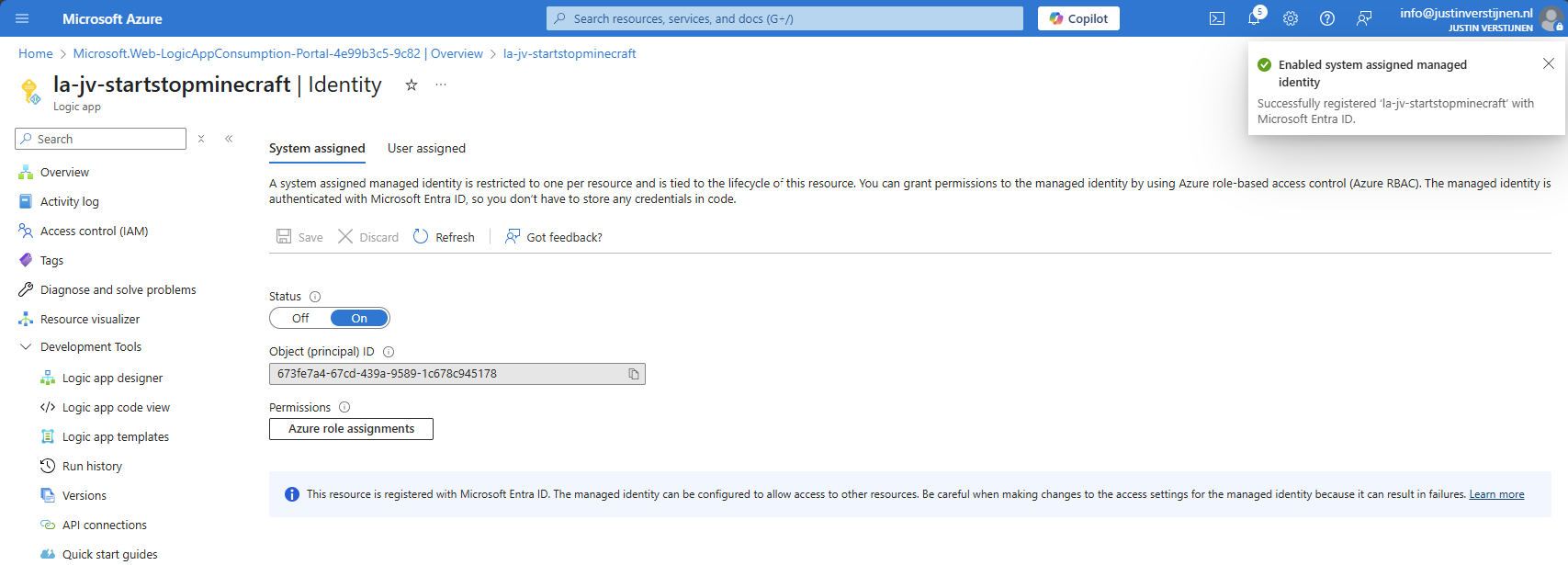

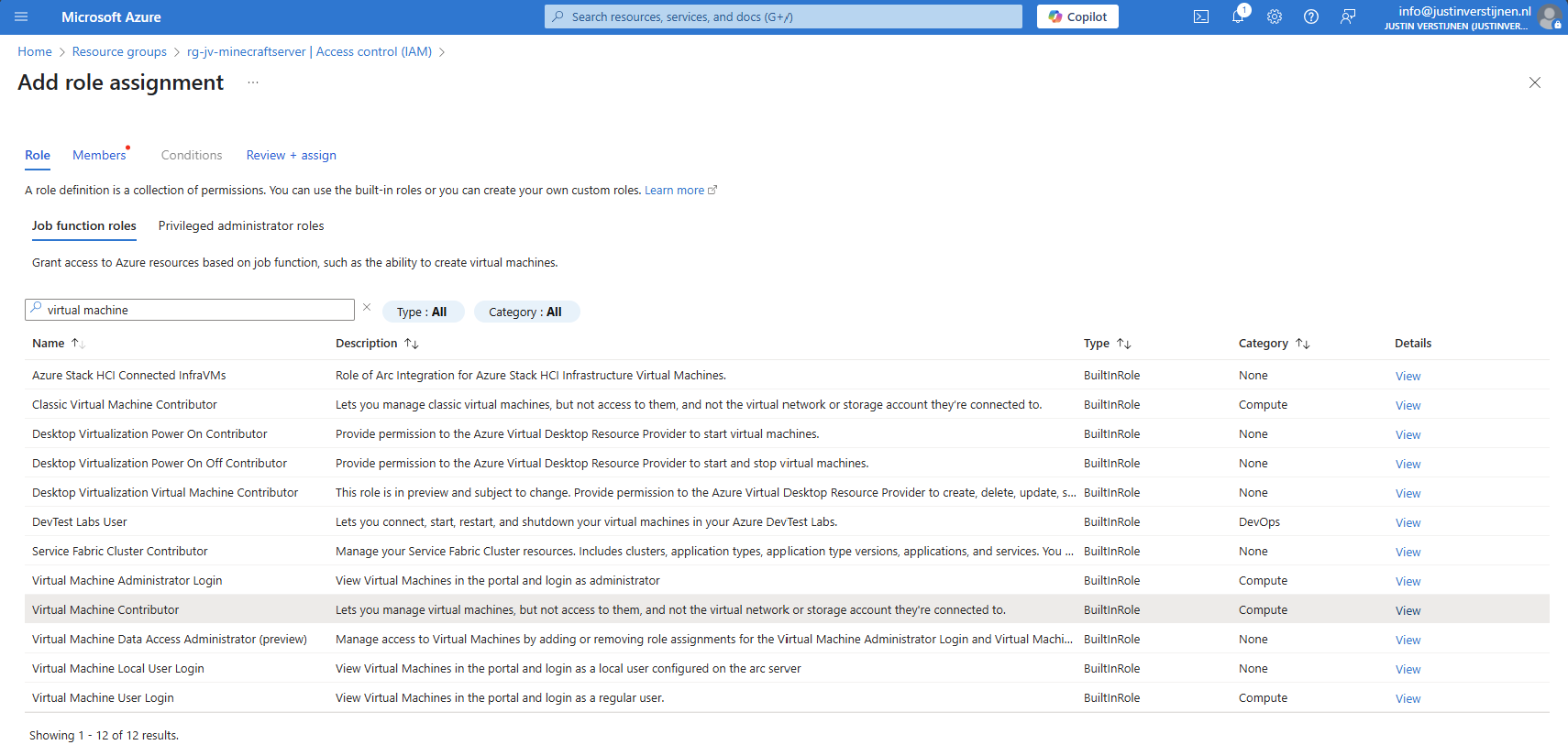

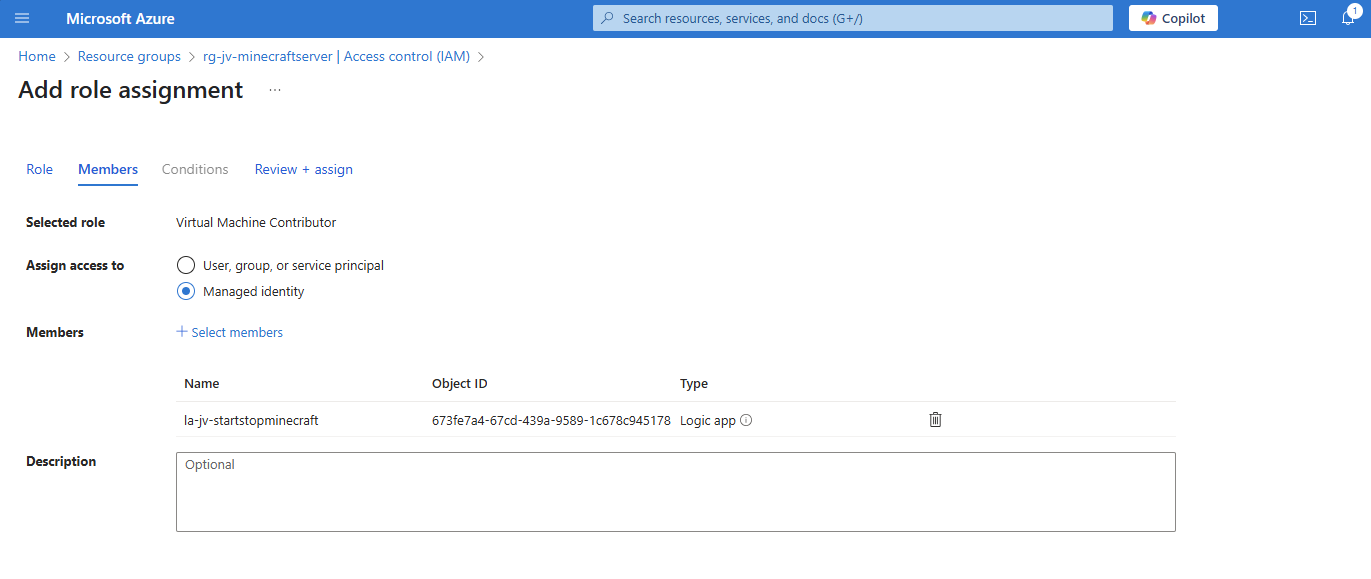

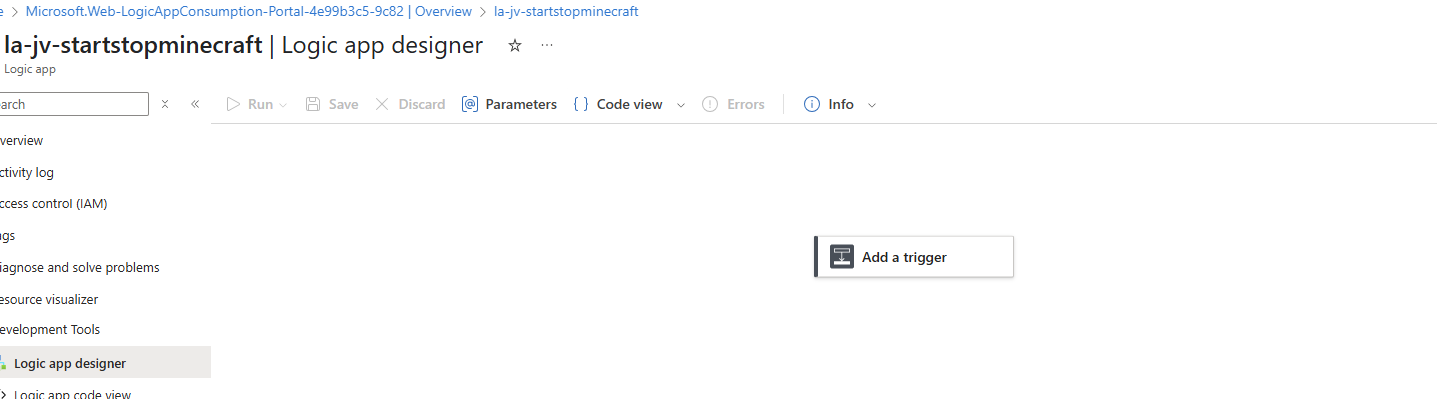

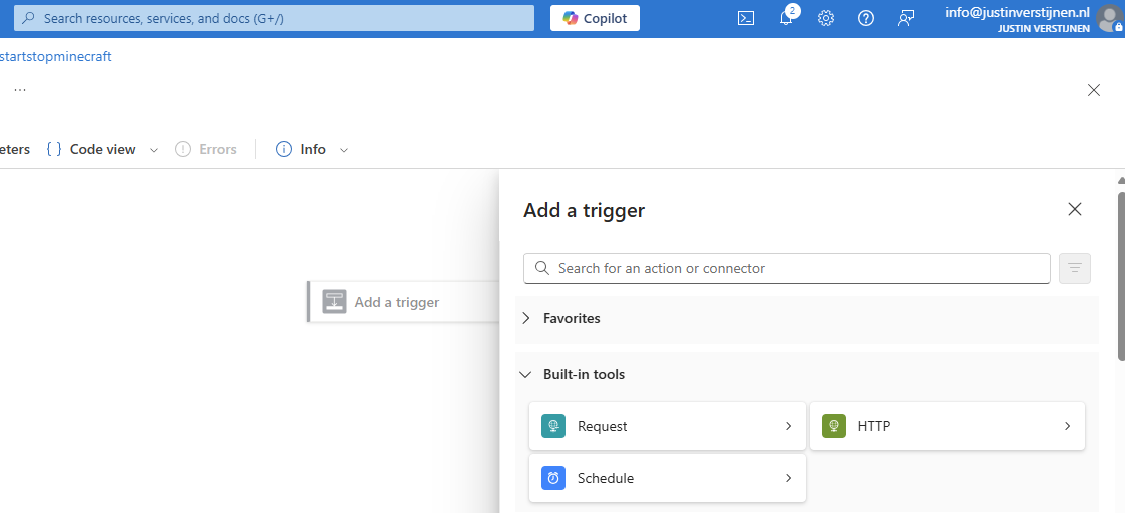

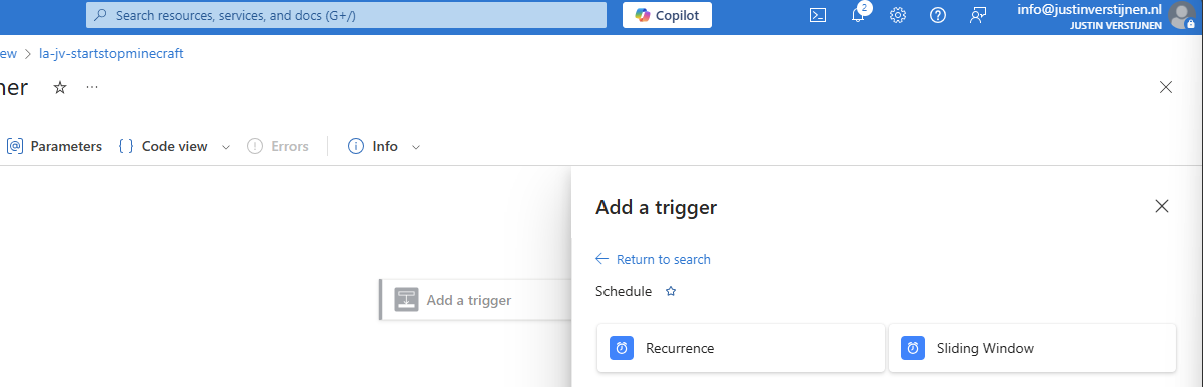

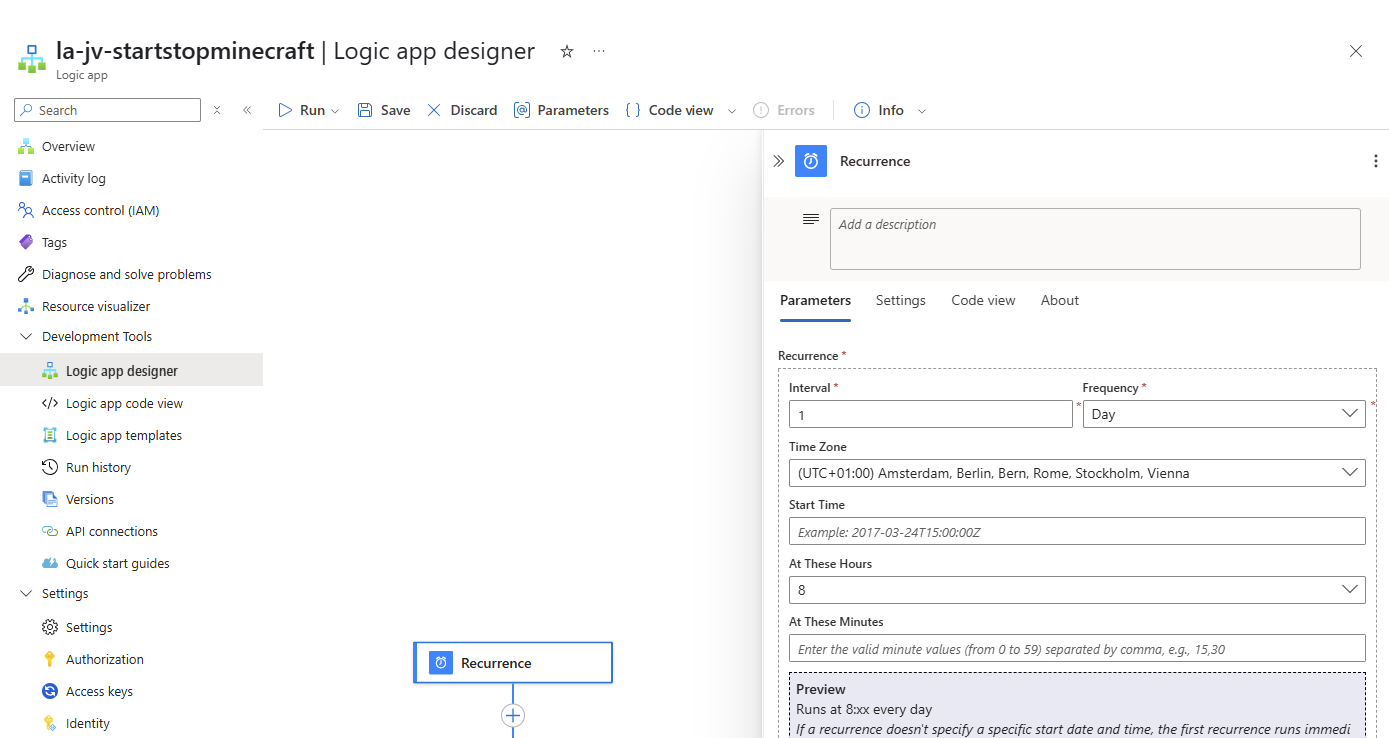

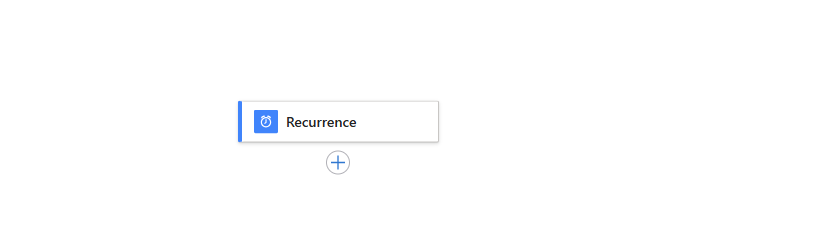

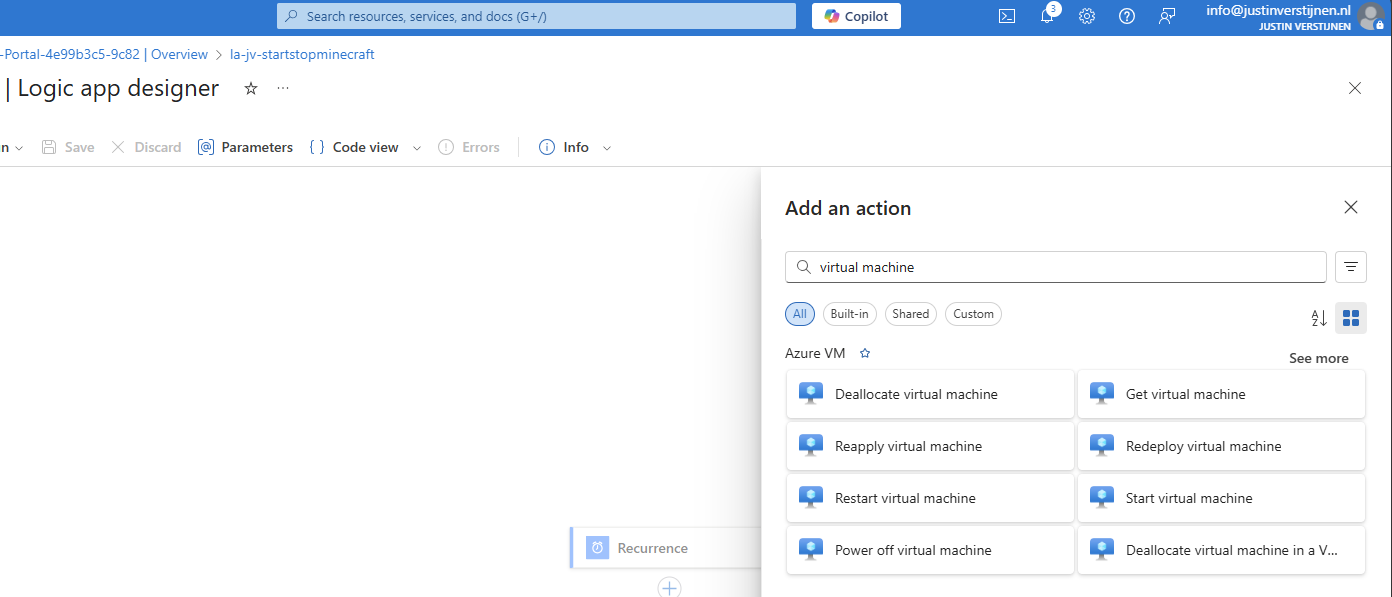

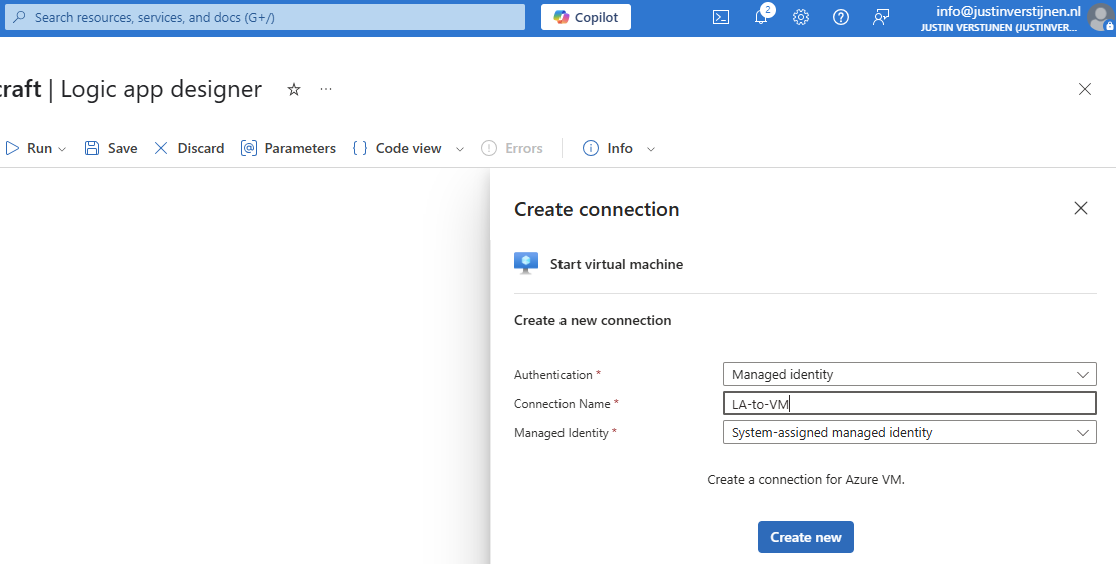

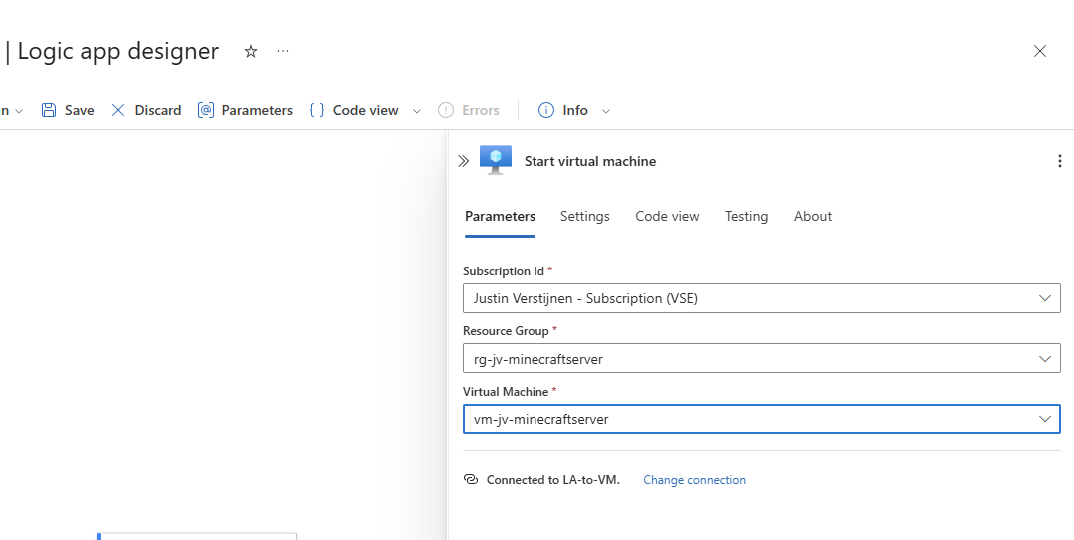

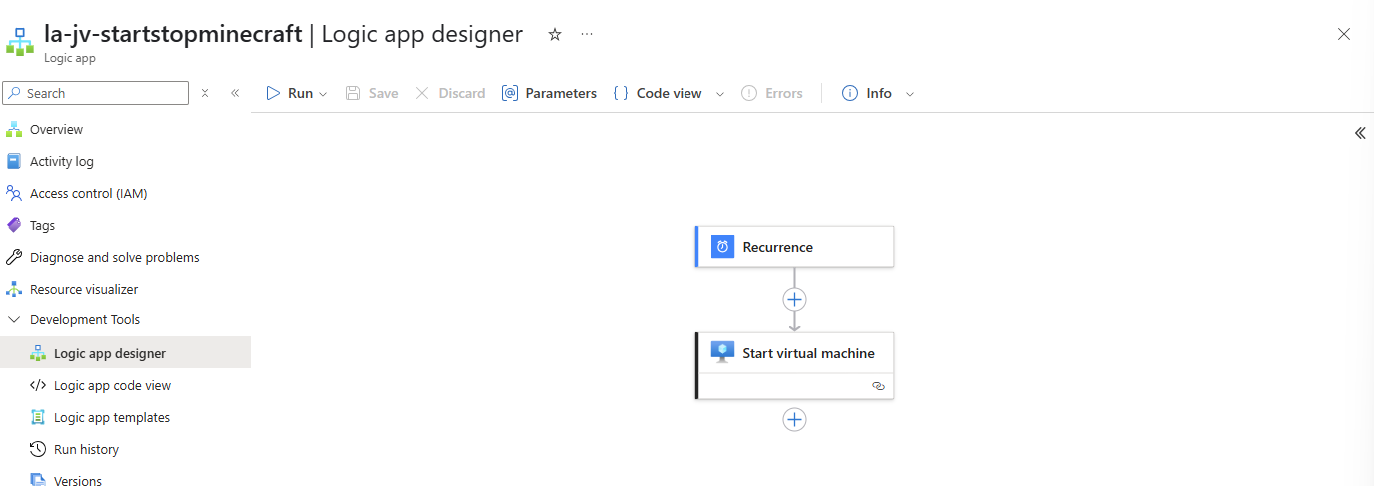

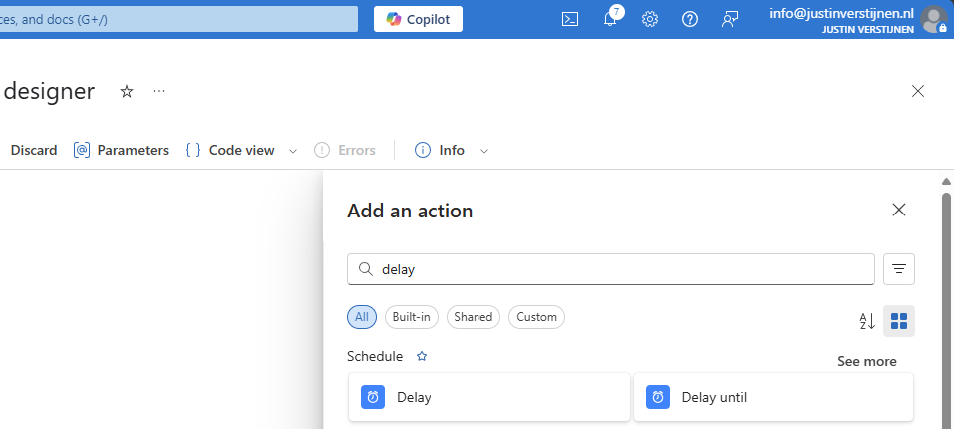

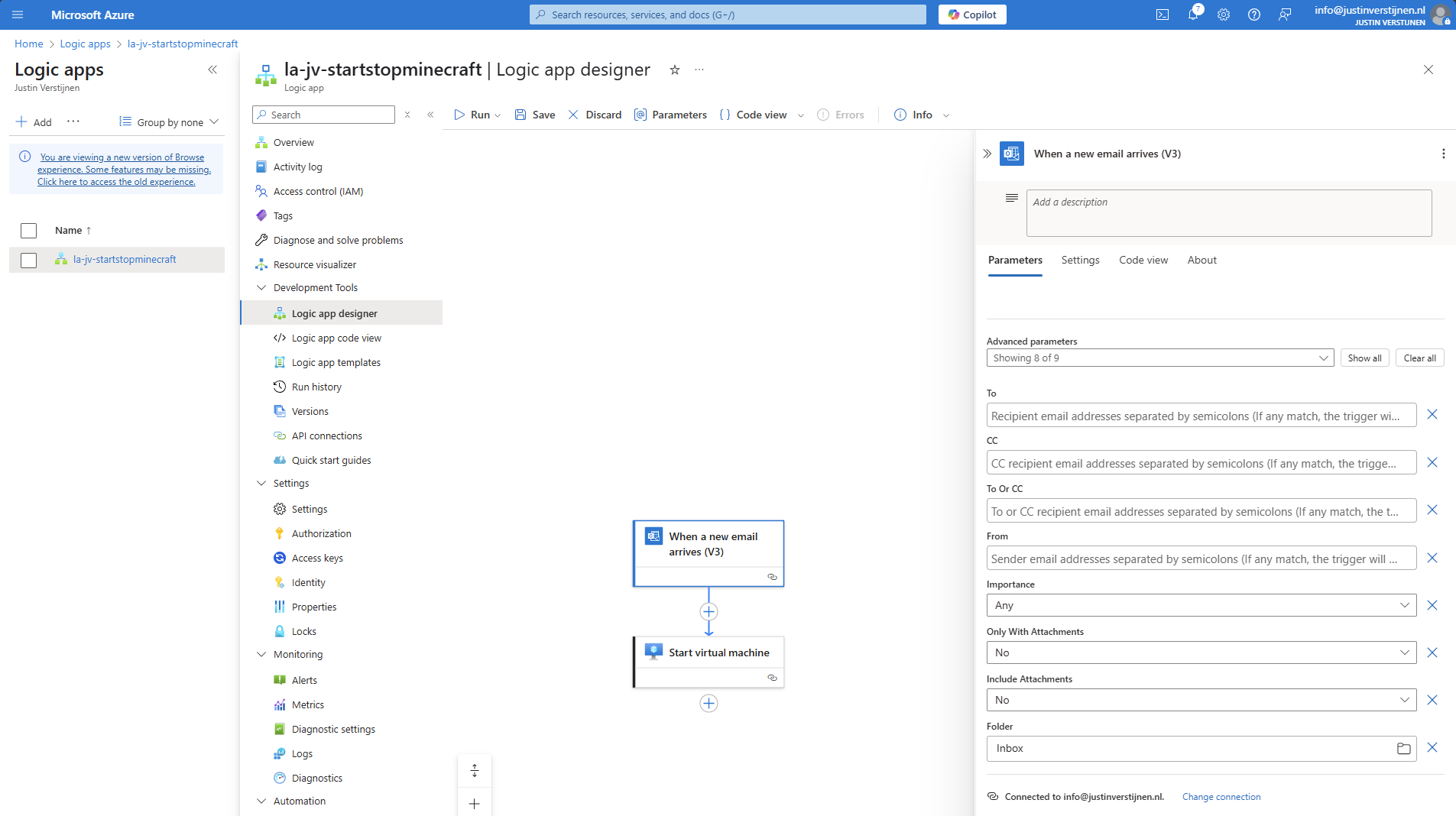

- Use Azure Logic Apps to automatically start and stop VMs

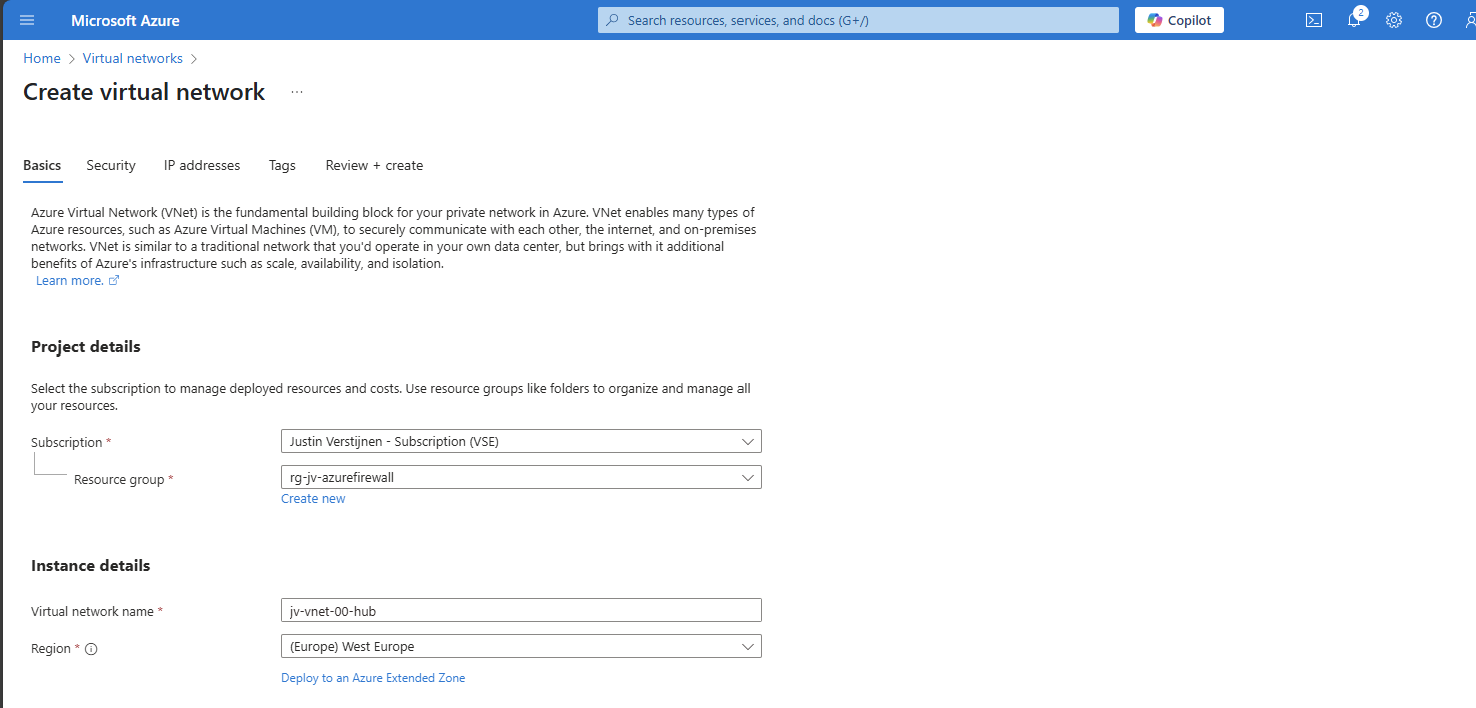

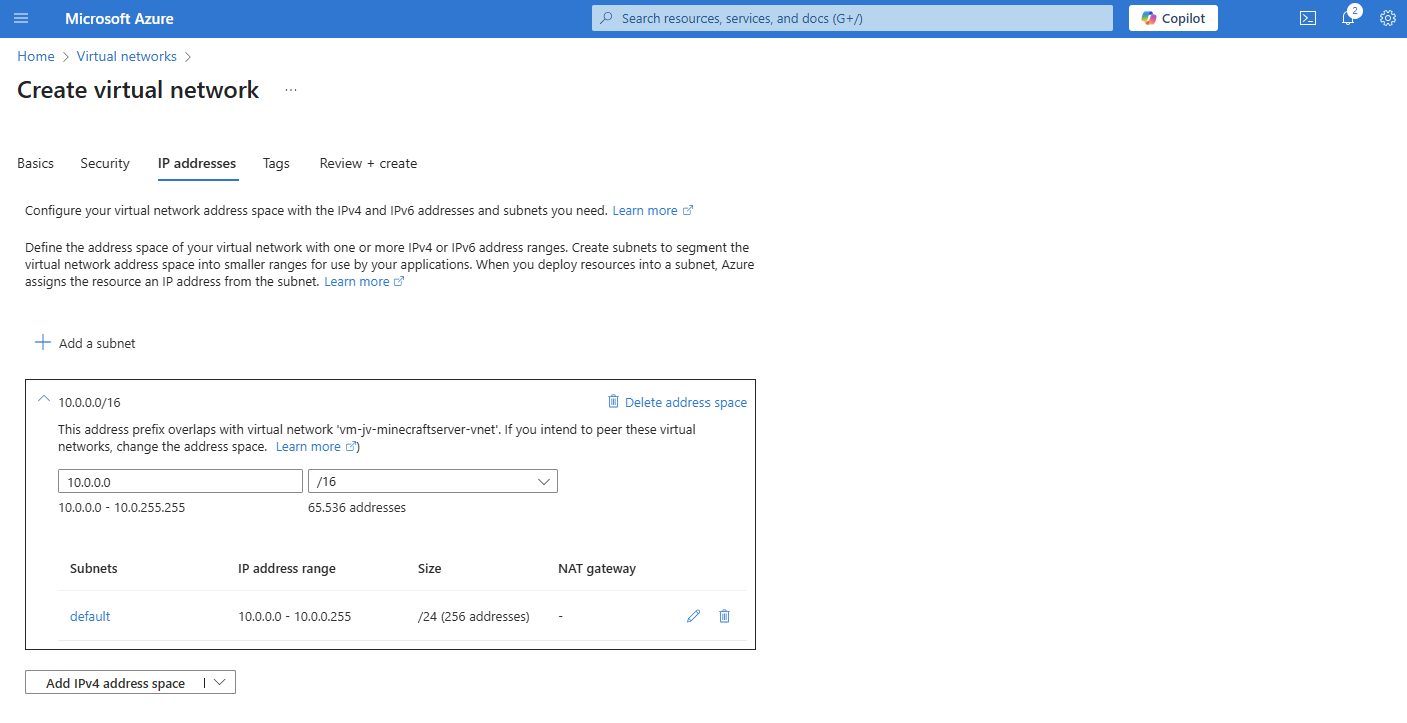

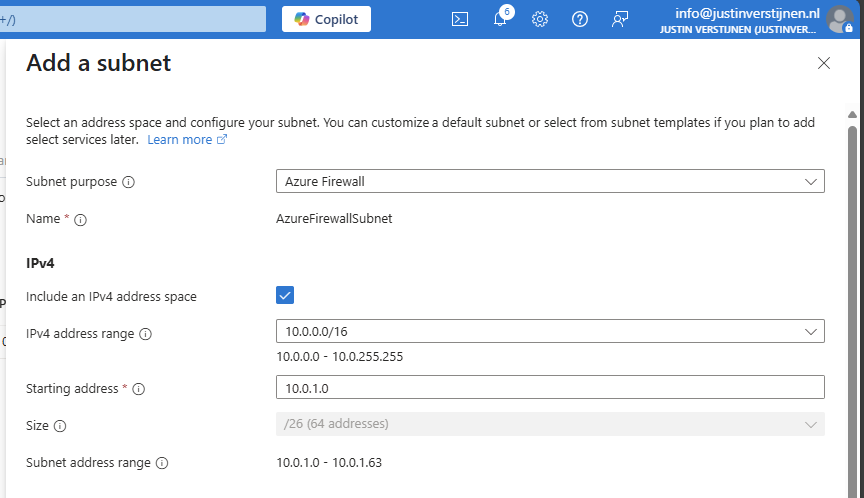

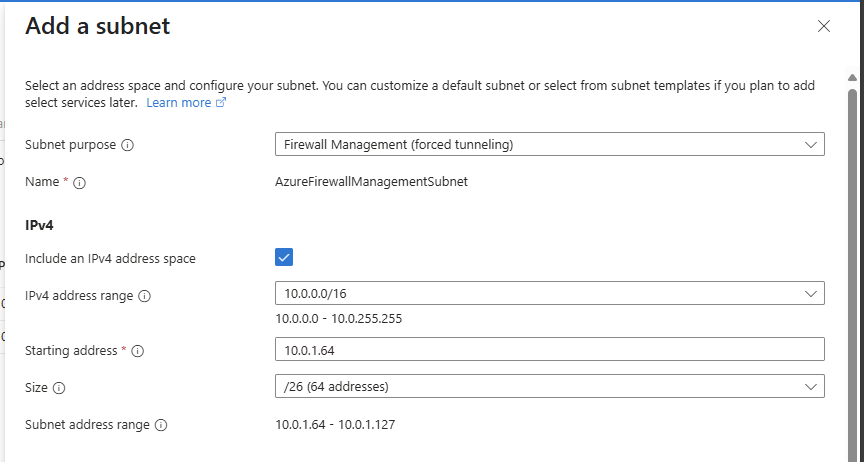

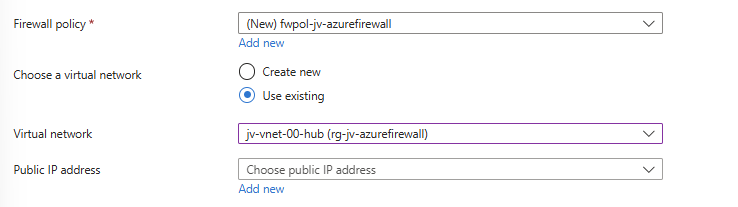

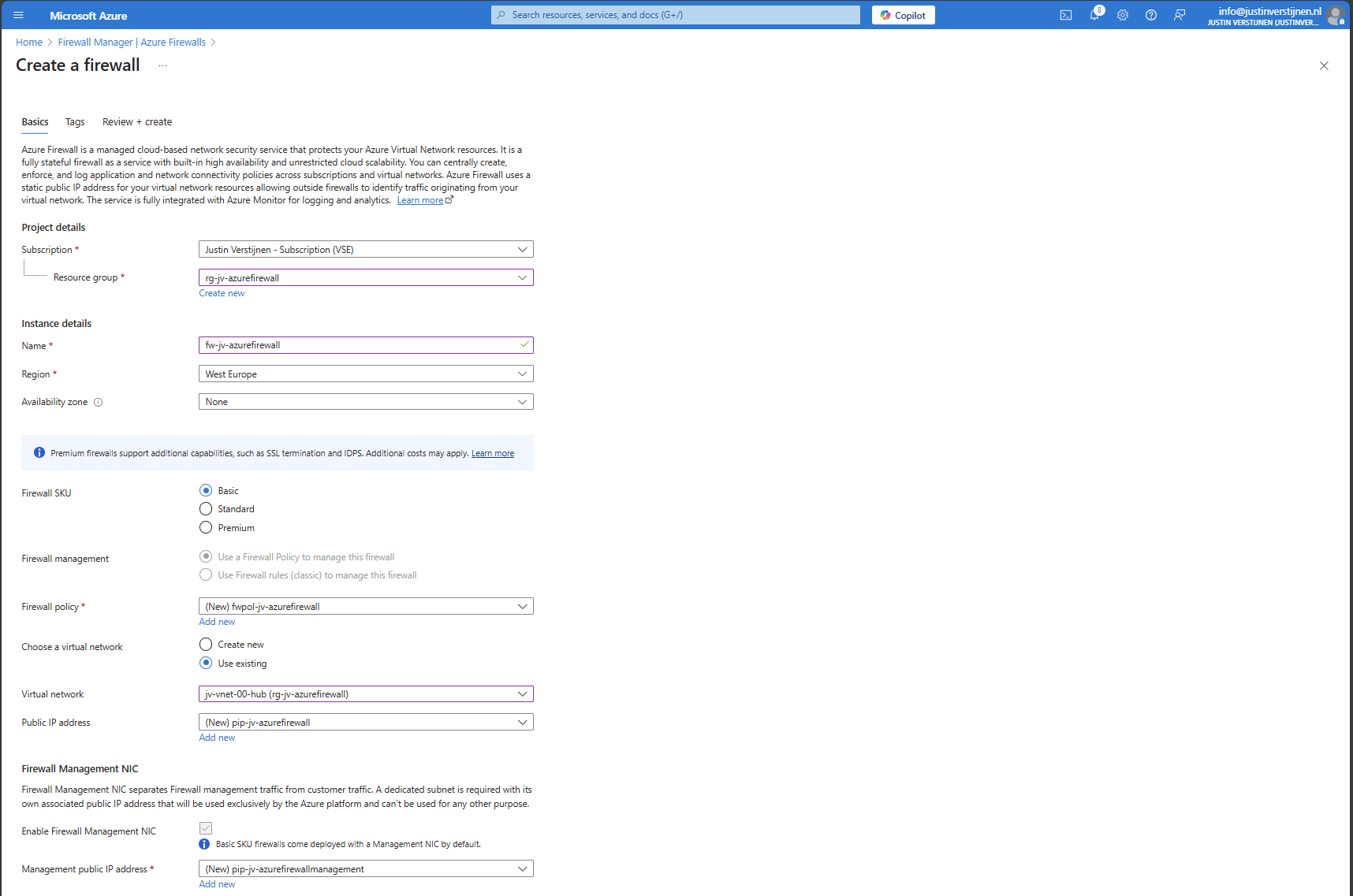

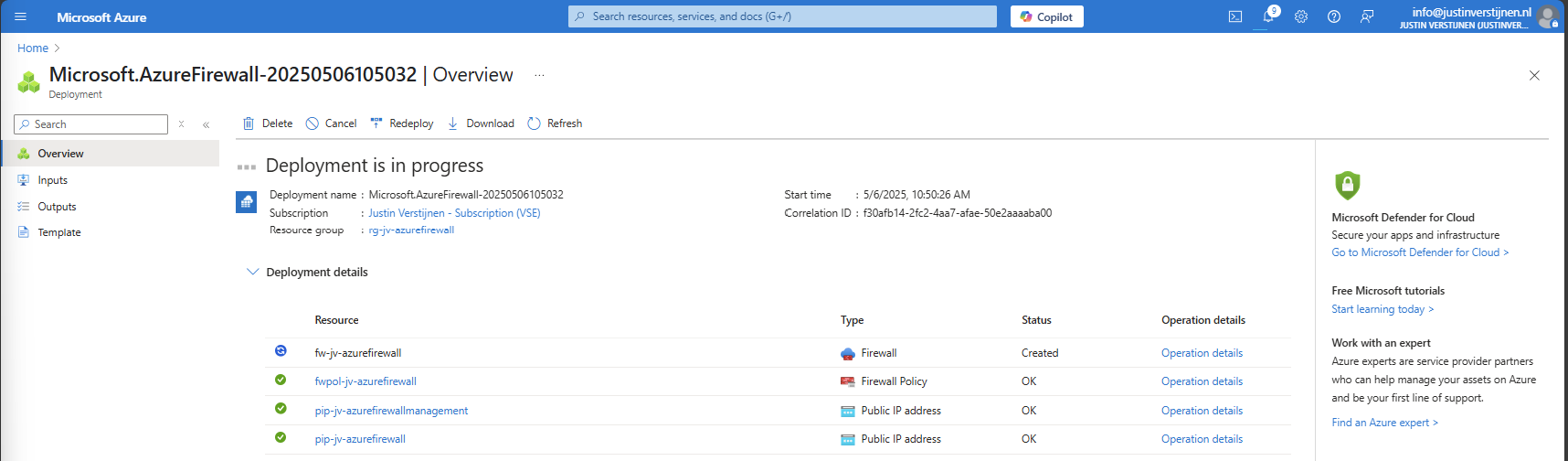

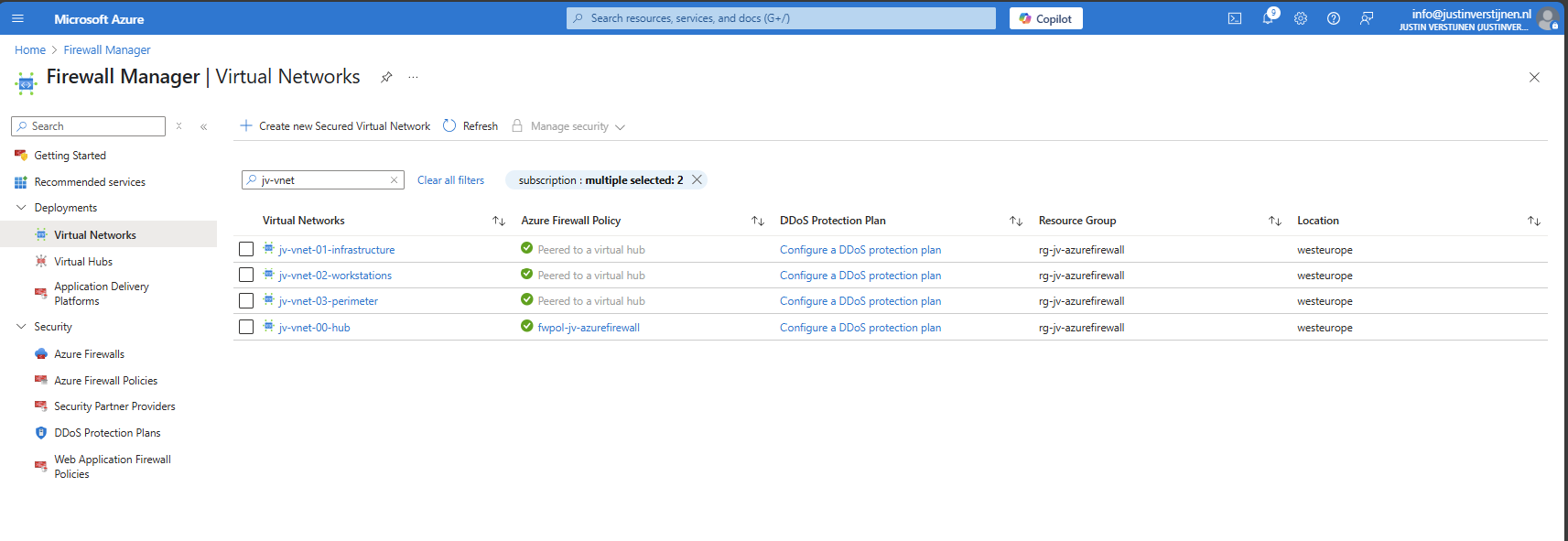

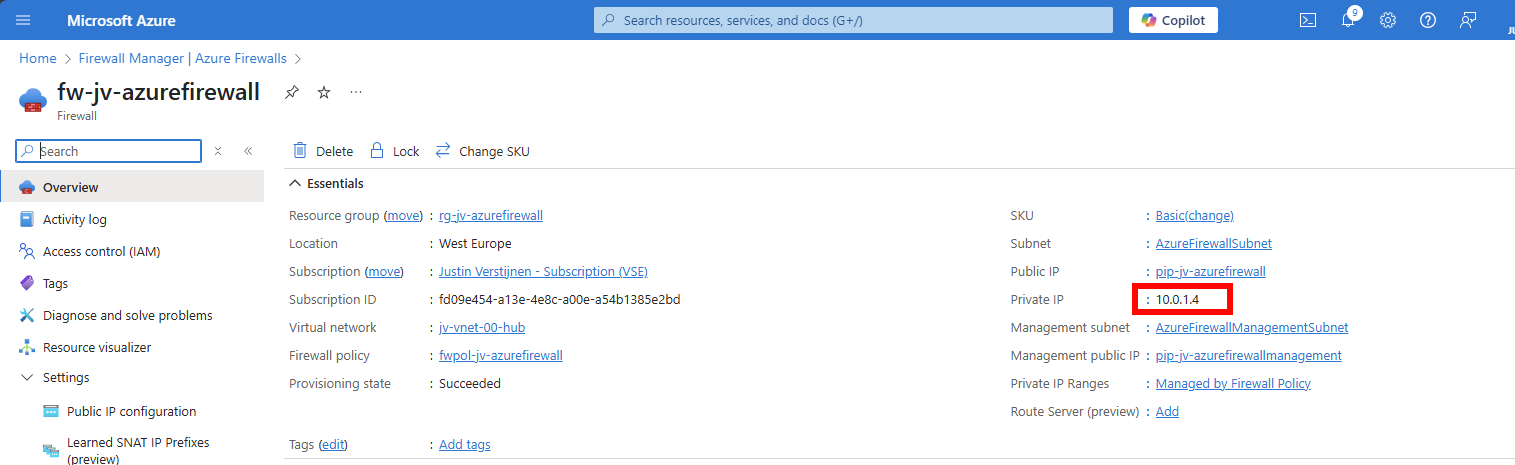

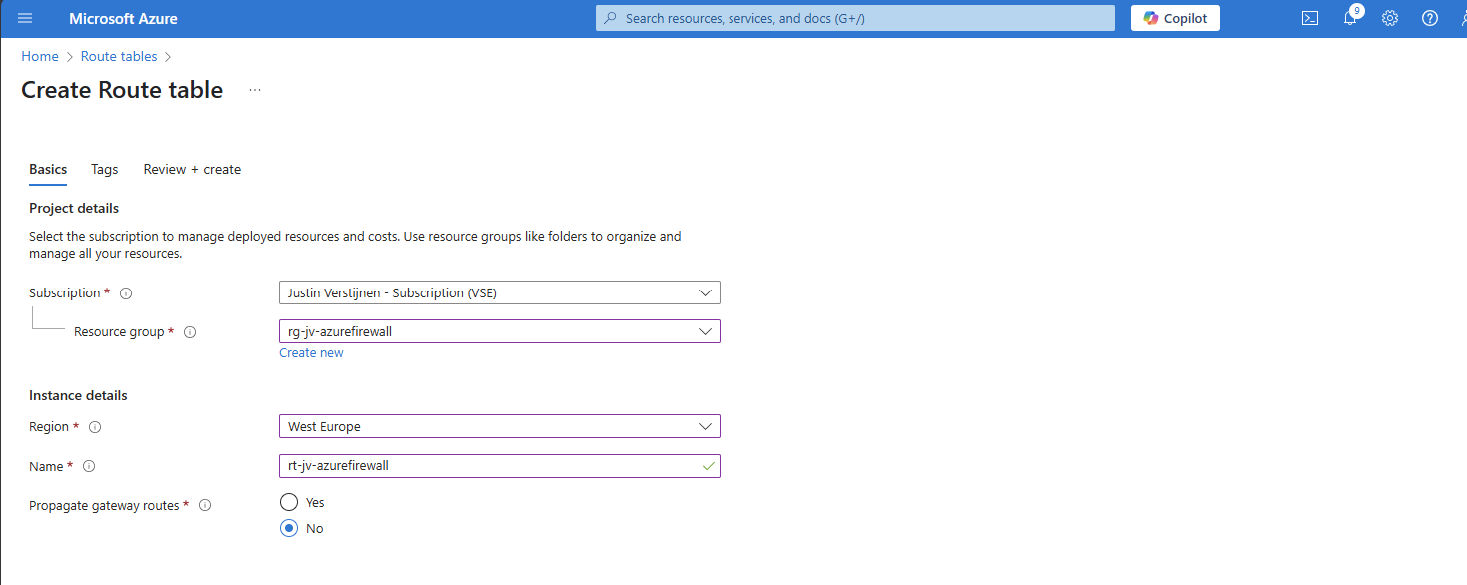

- How to implement Azure Firewall to secure your Azure environment

- What is Azure Firewall?

- Azure Default VM Outbound access deprecated

- Microsoft Azure certifications for Developers

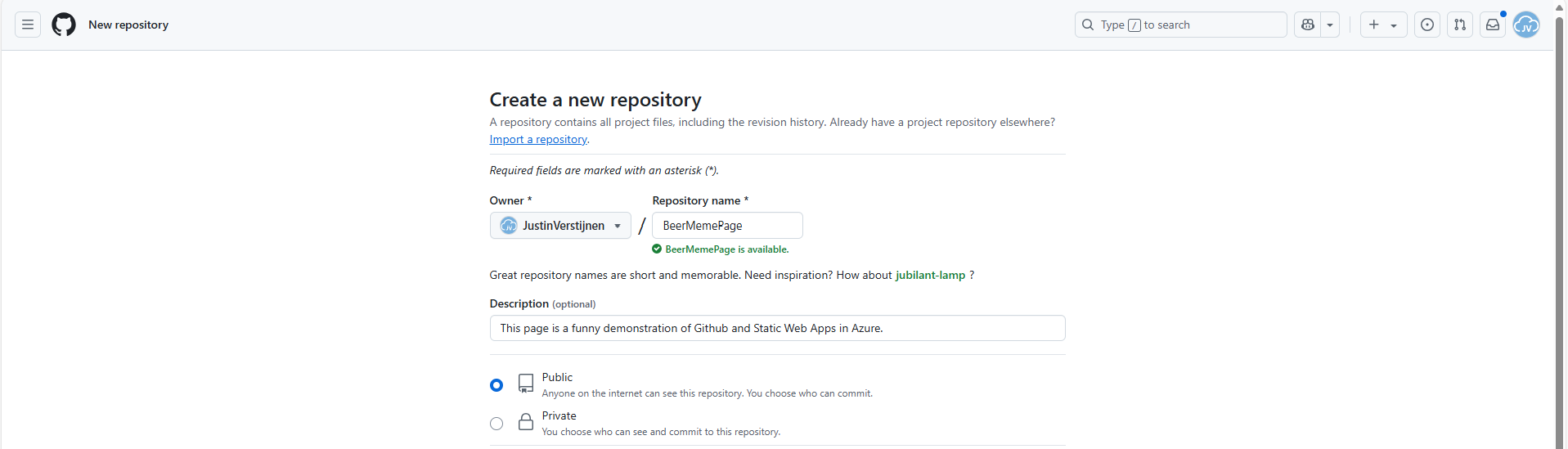

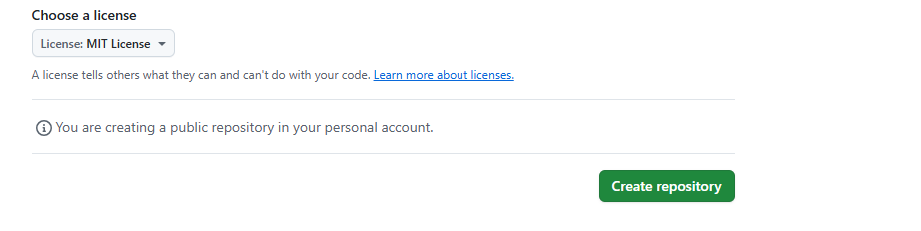

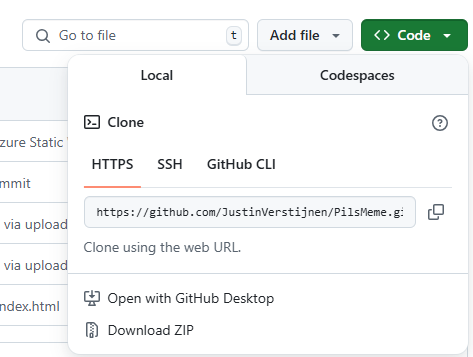

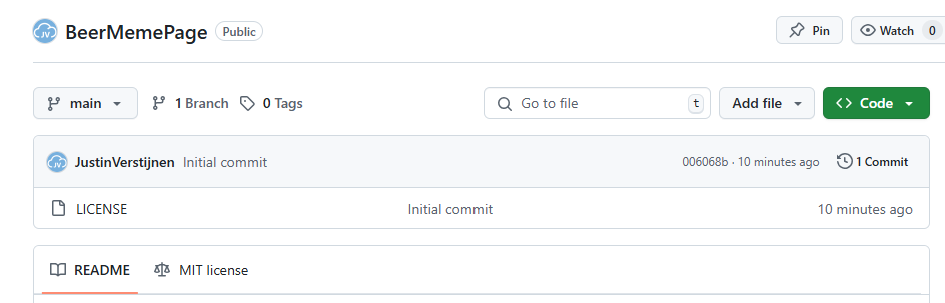

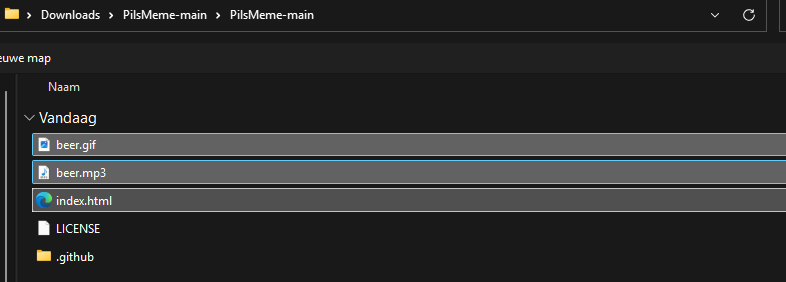

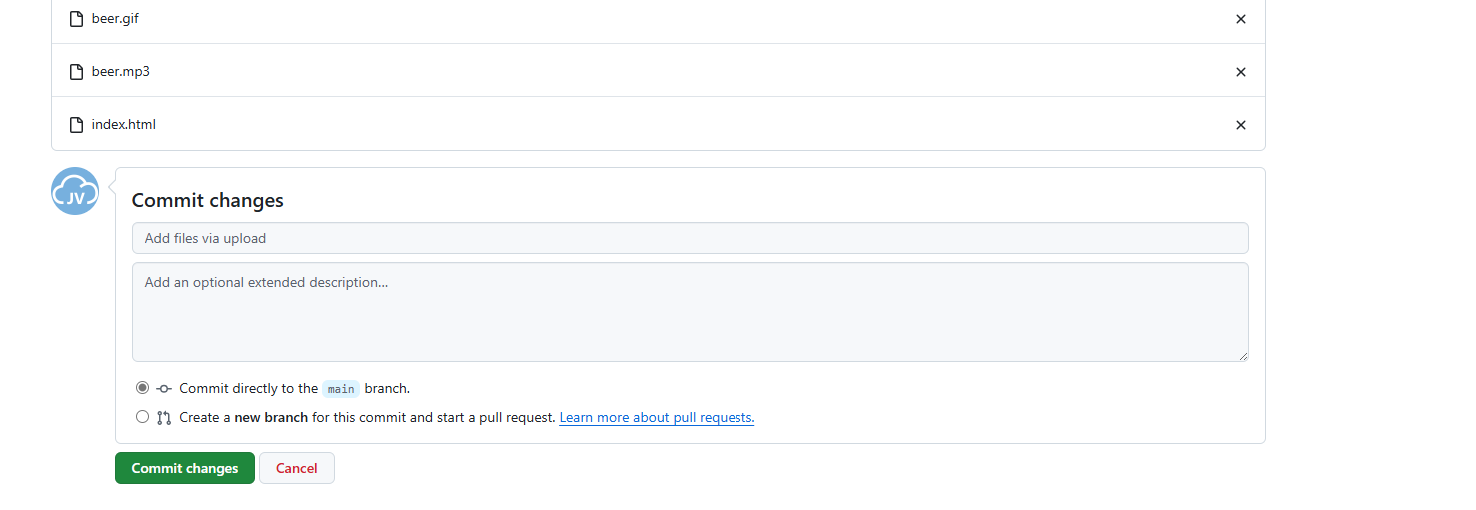

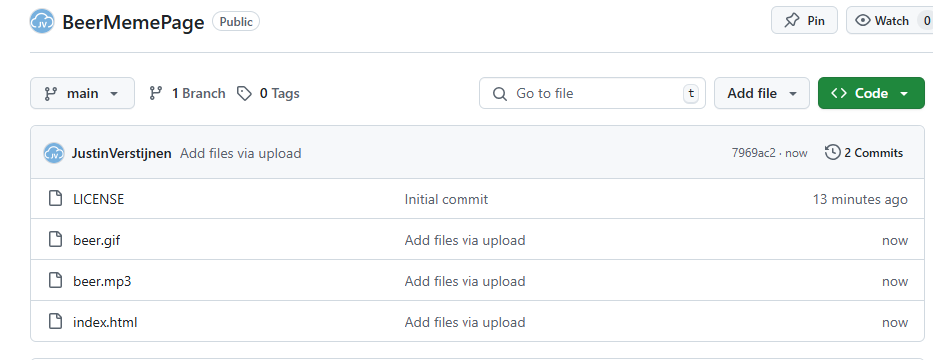

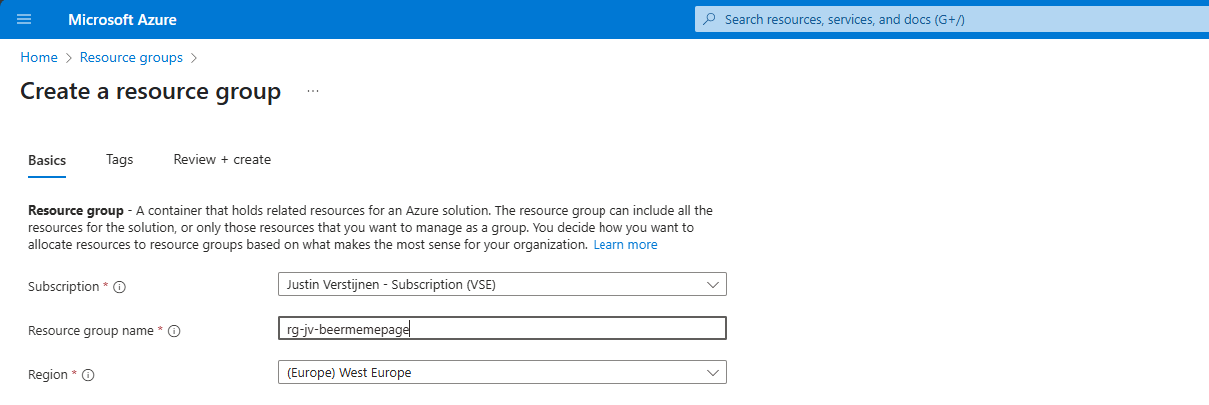

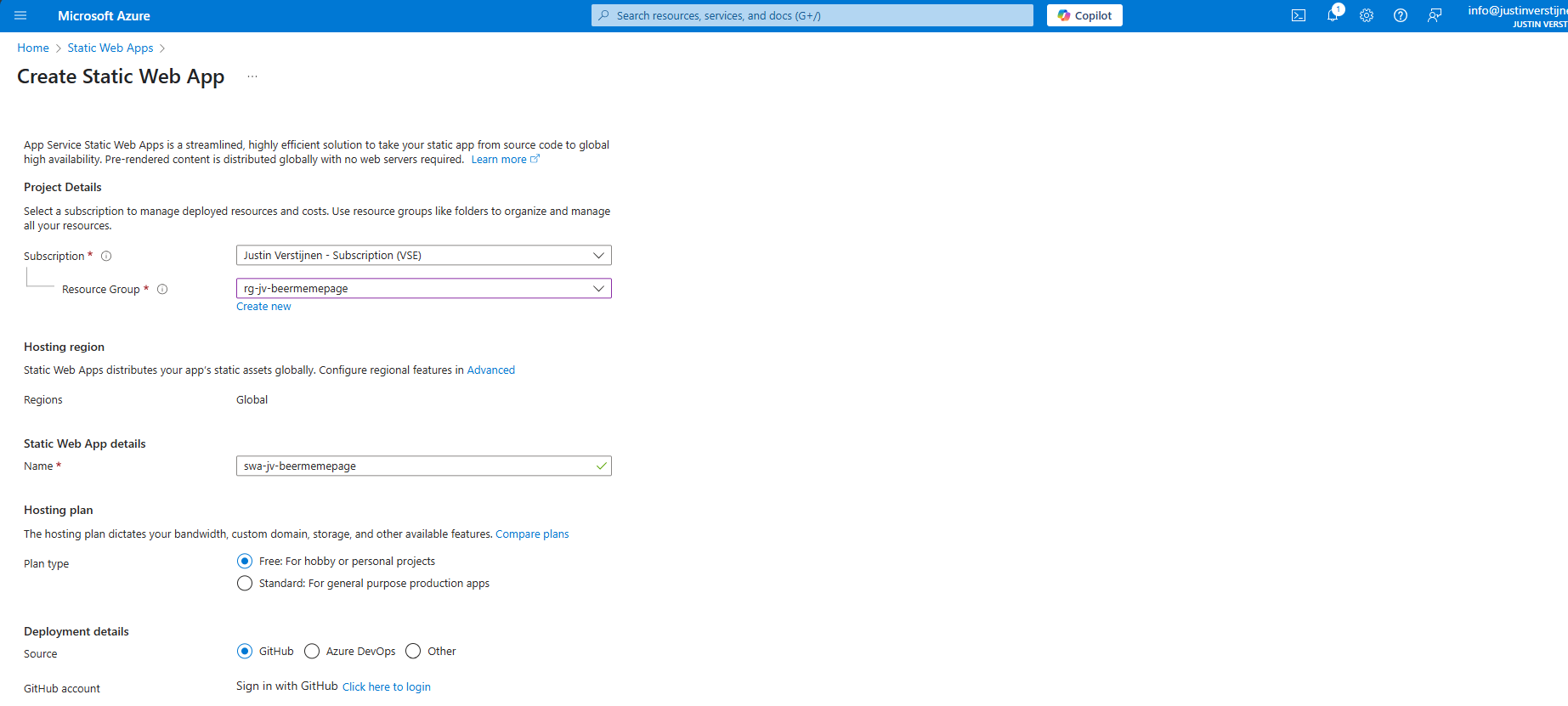

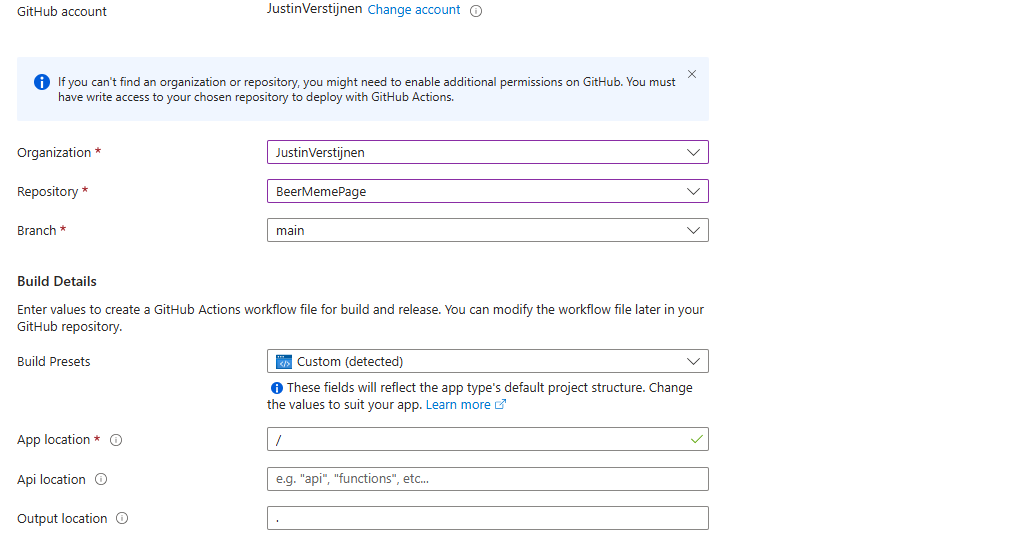

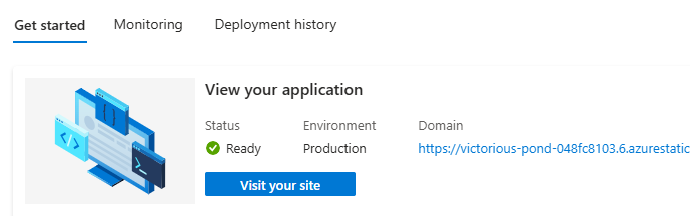

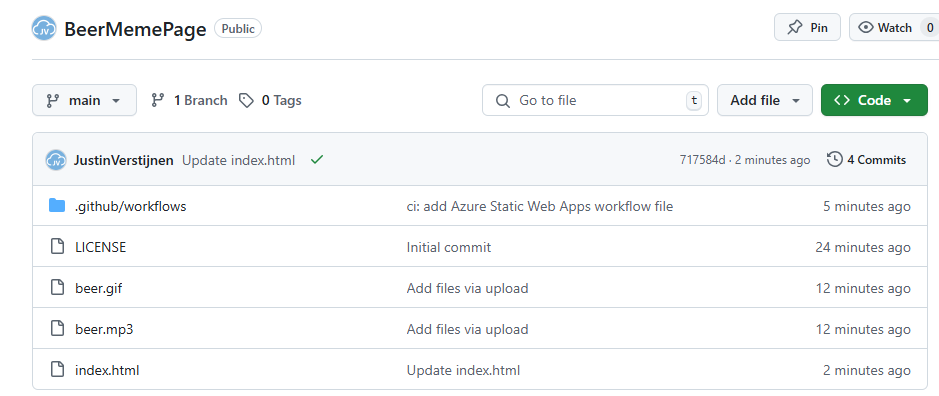

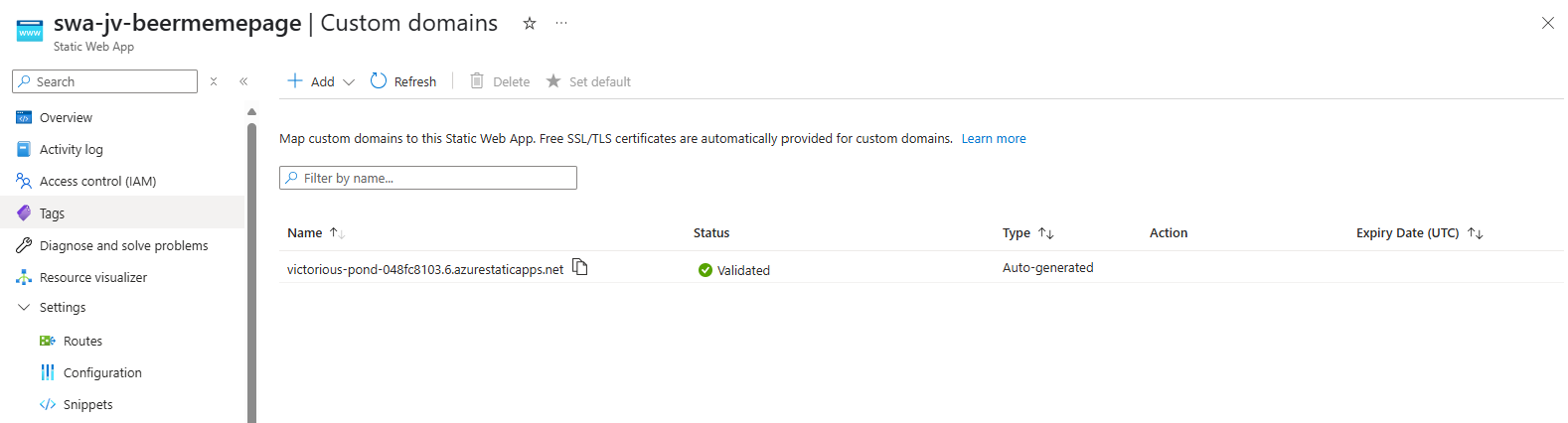

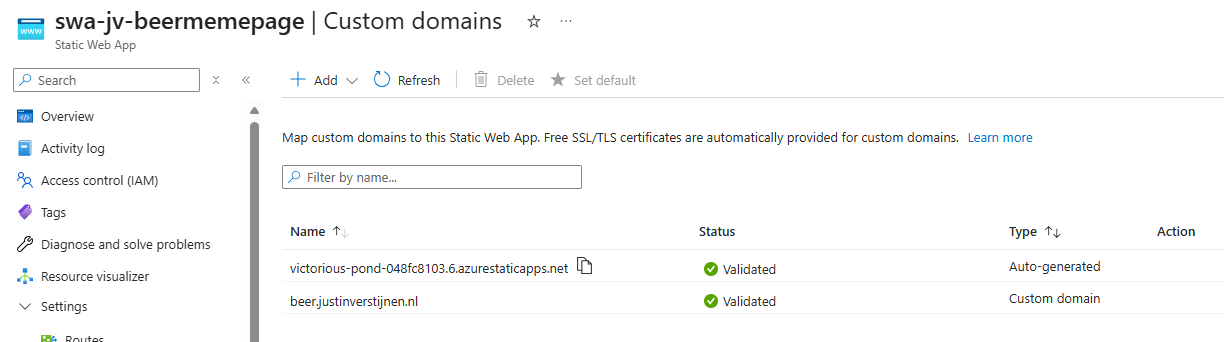

- Creating Static Web Apps on Azure the easy way

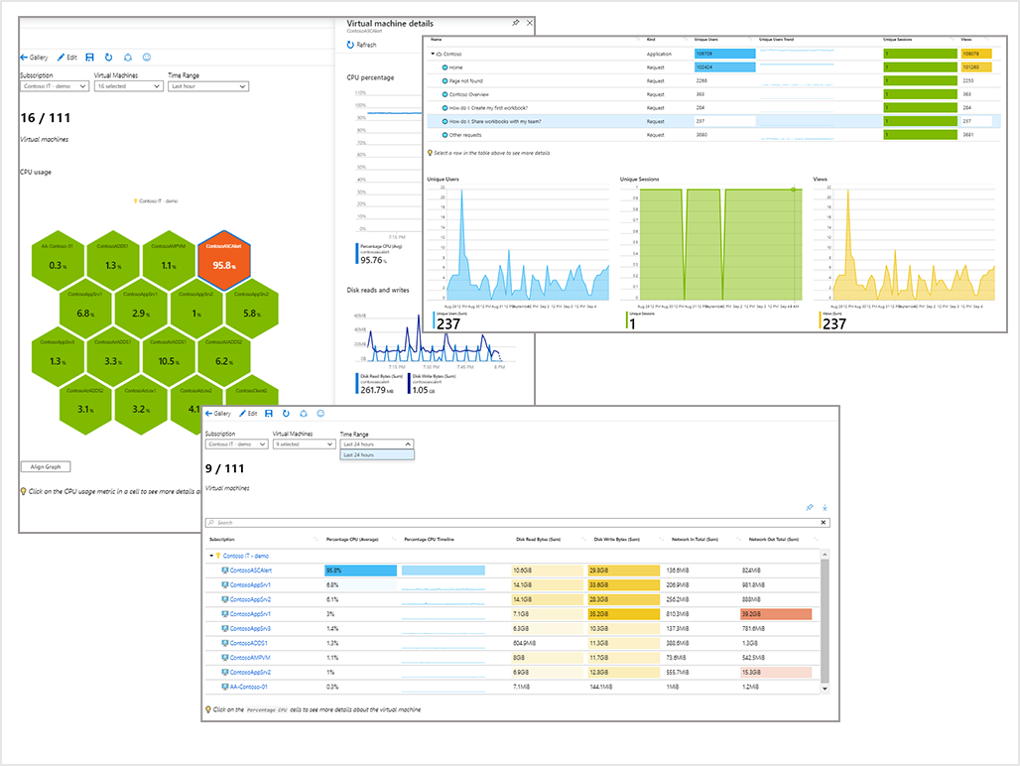

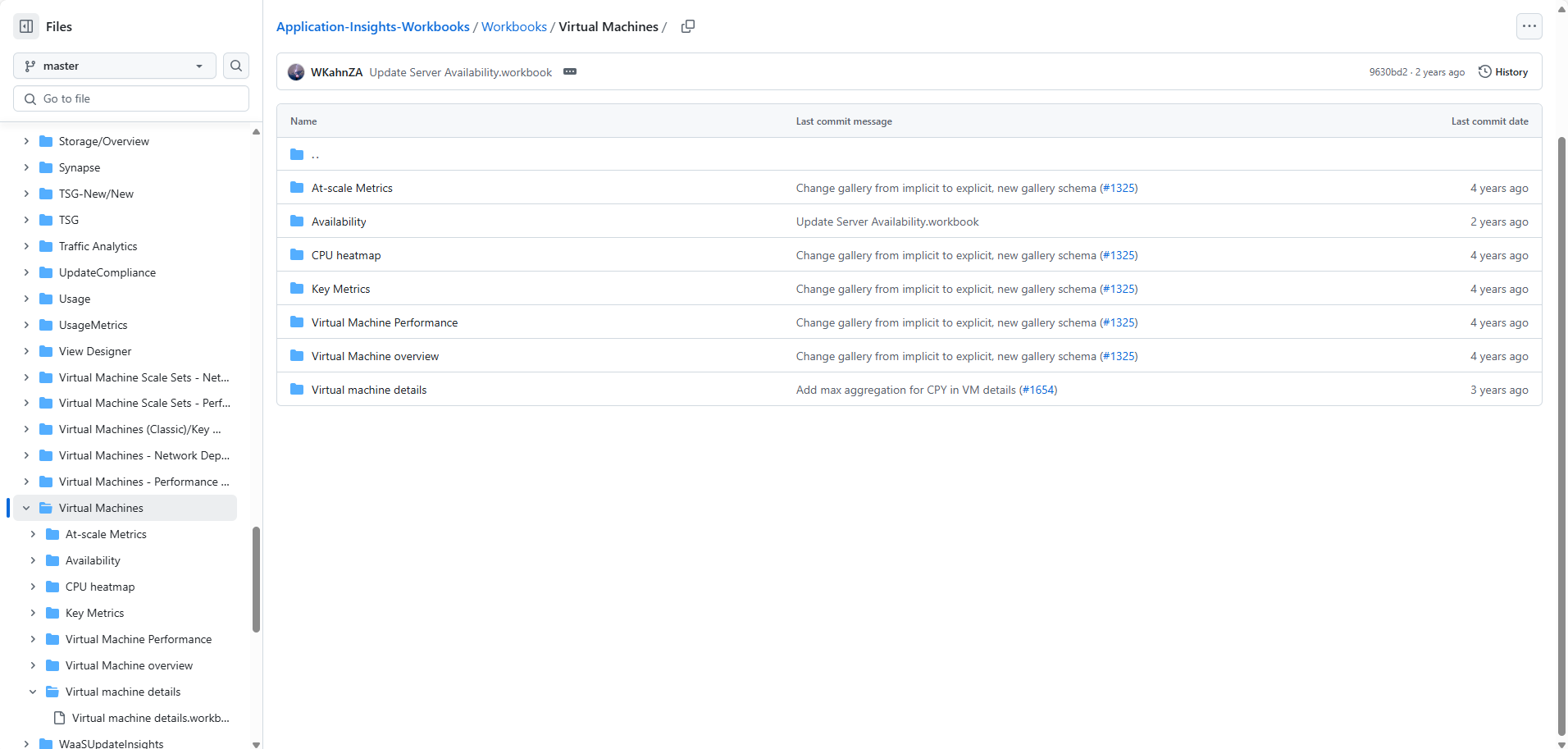

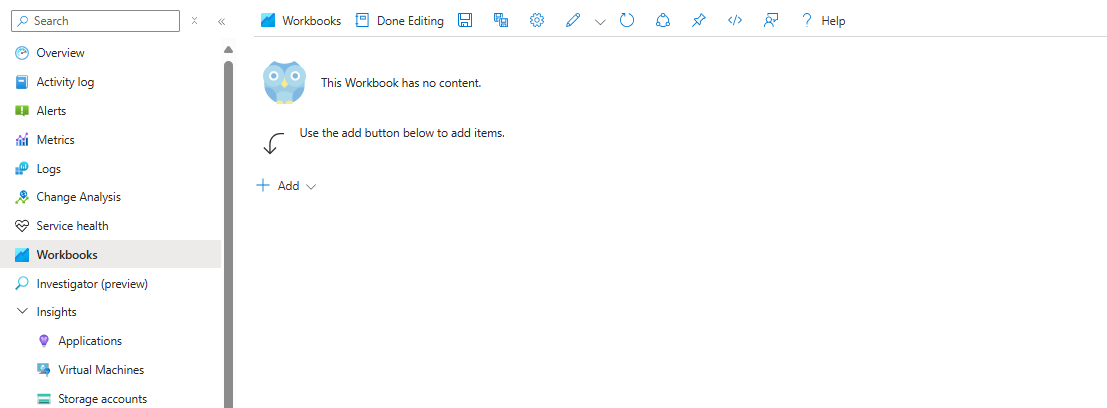

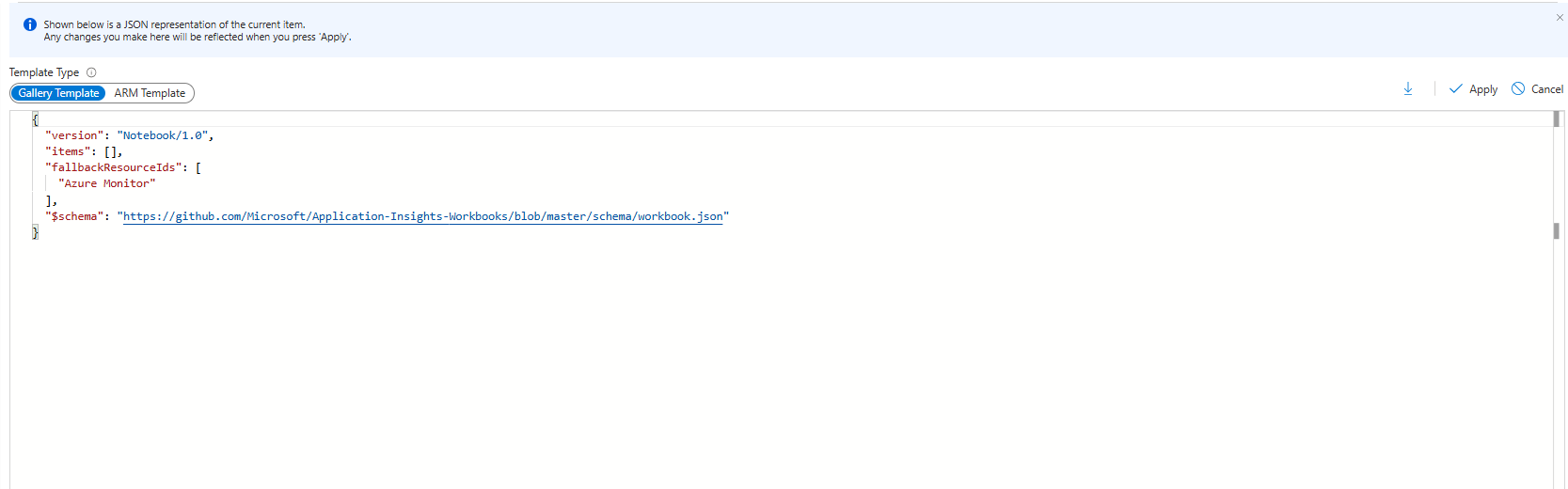

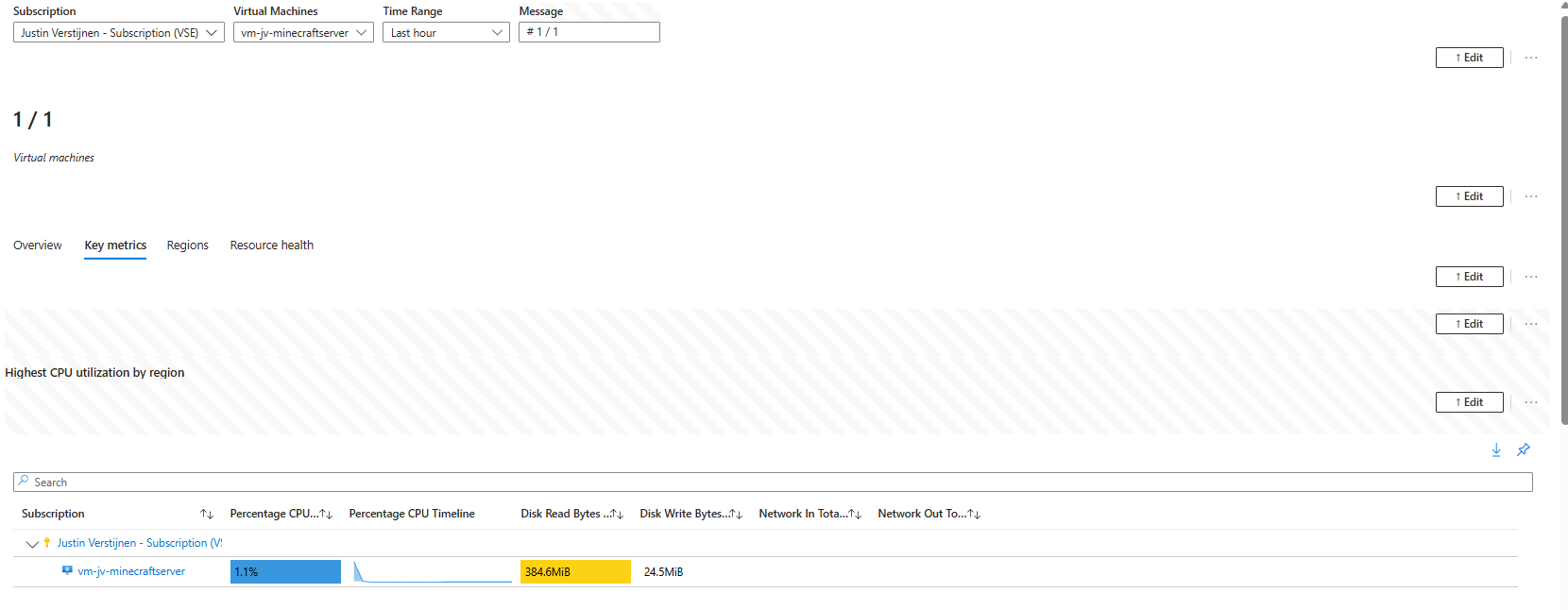

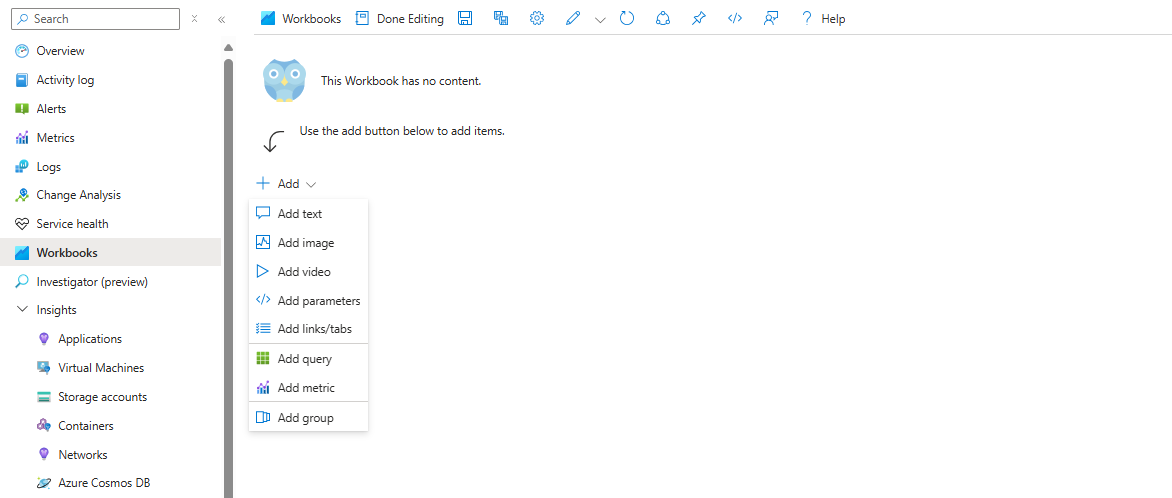

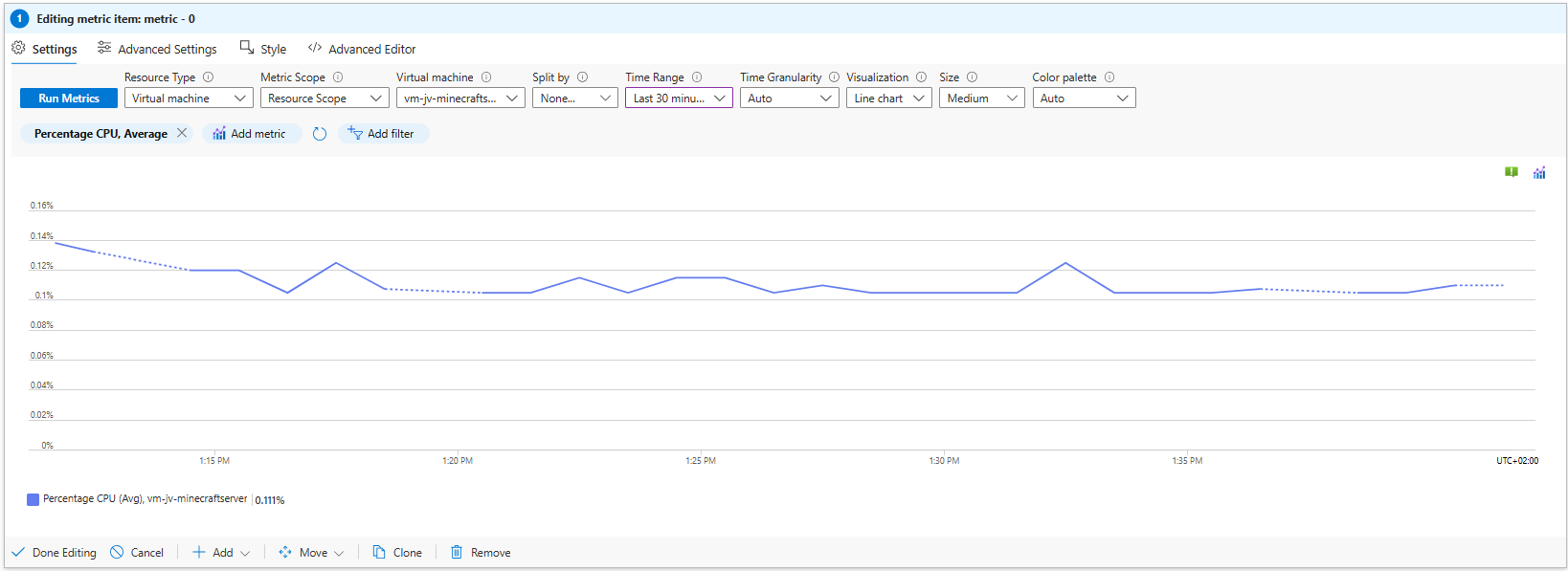

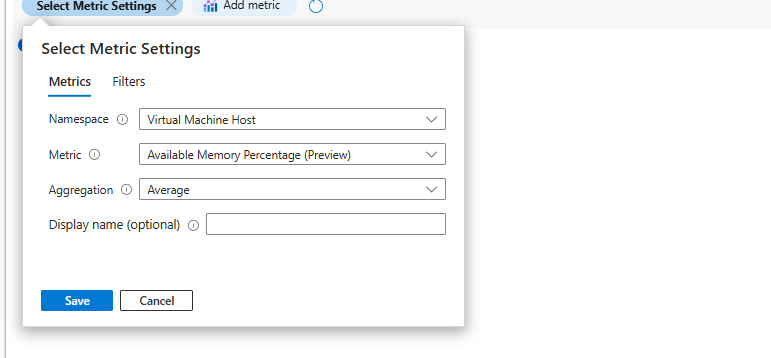

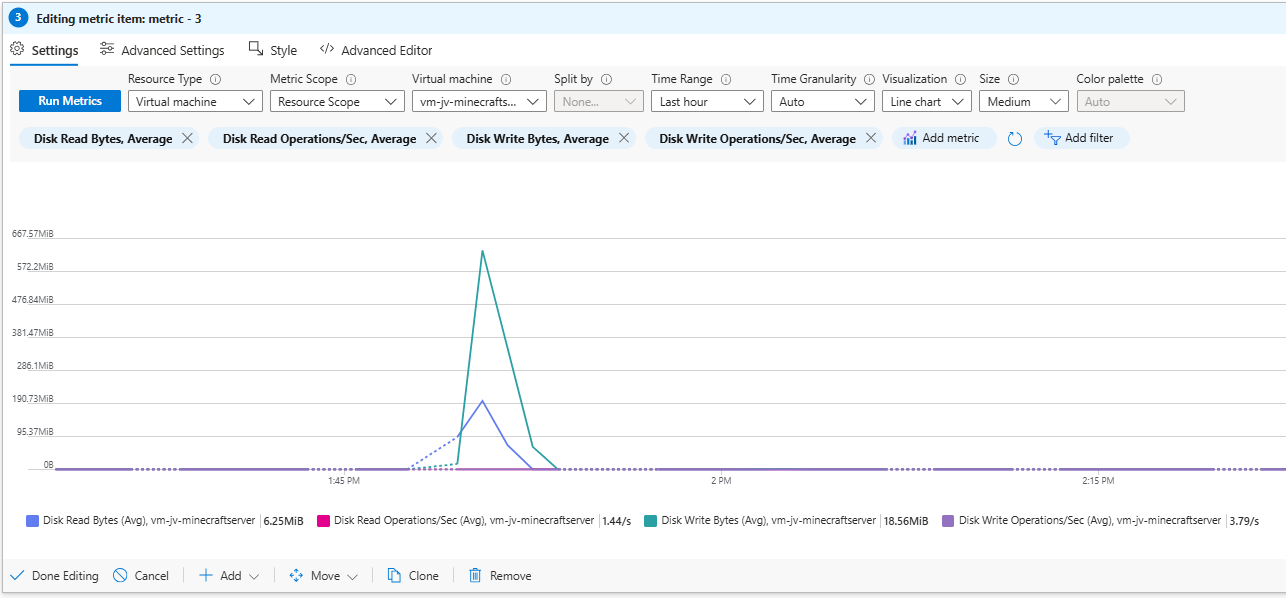

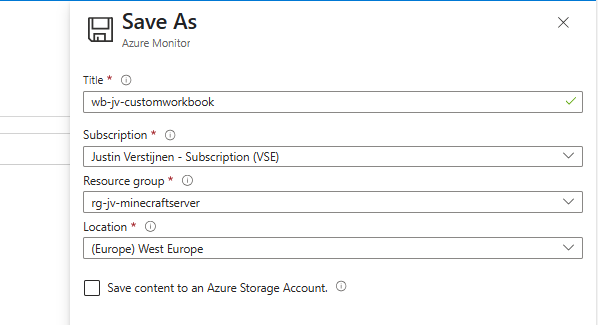

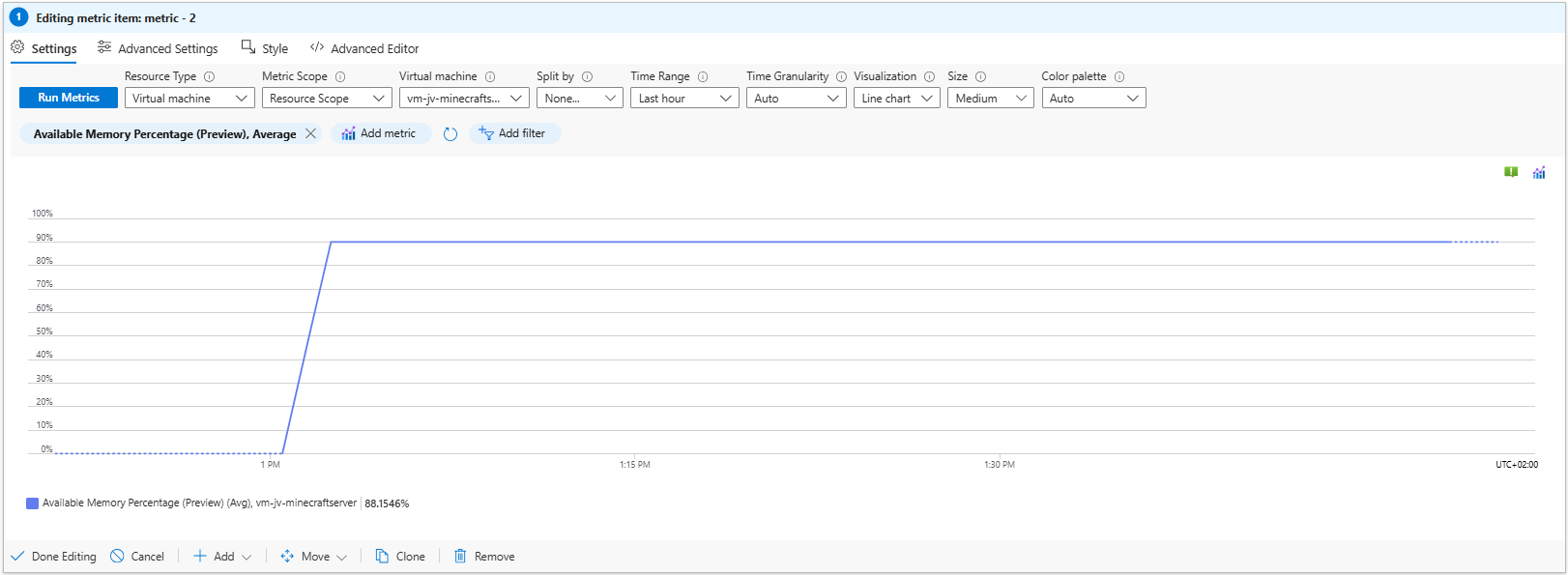

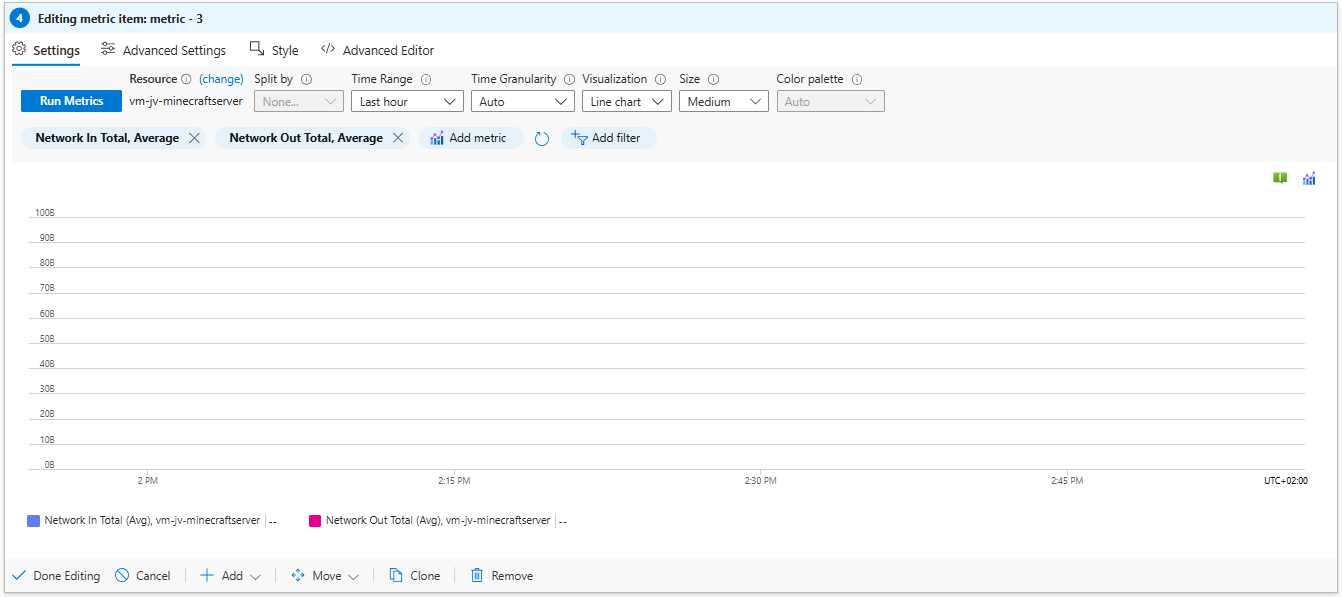

- Create custom Azure Workbooks for detailed monitoring

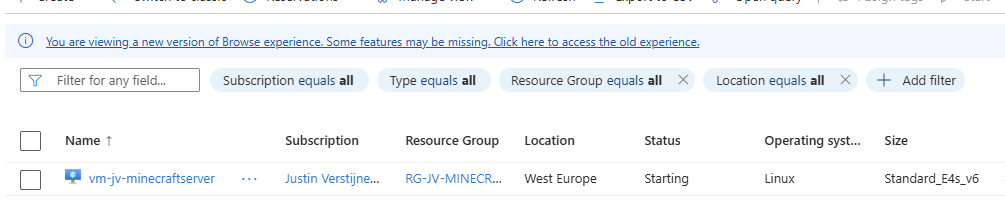

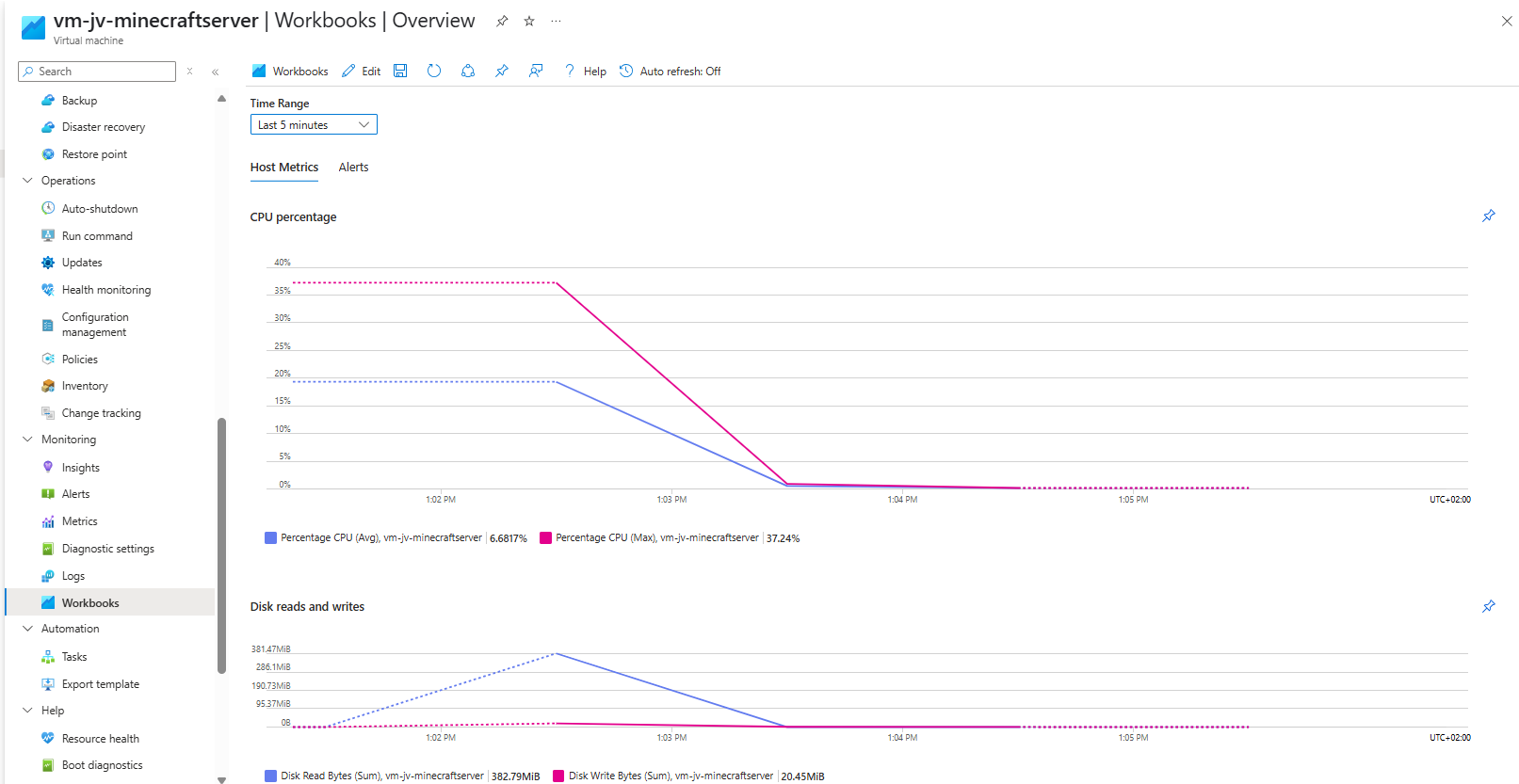

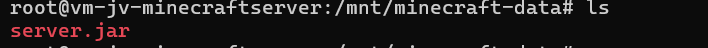

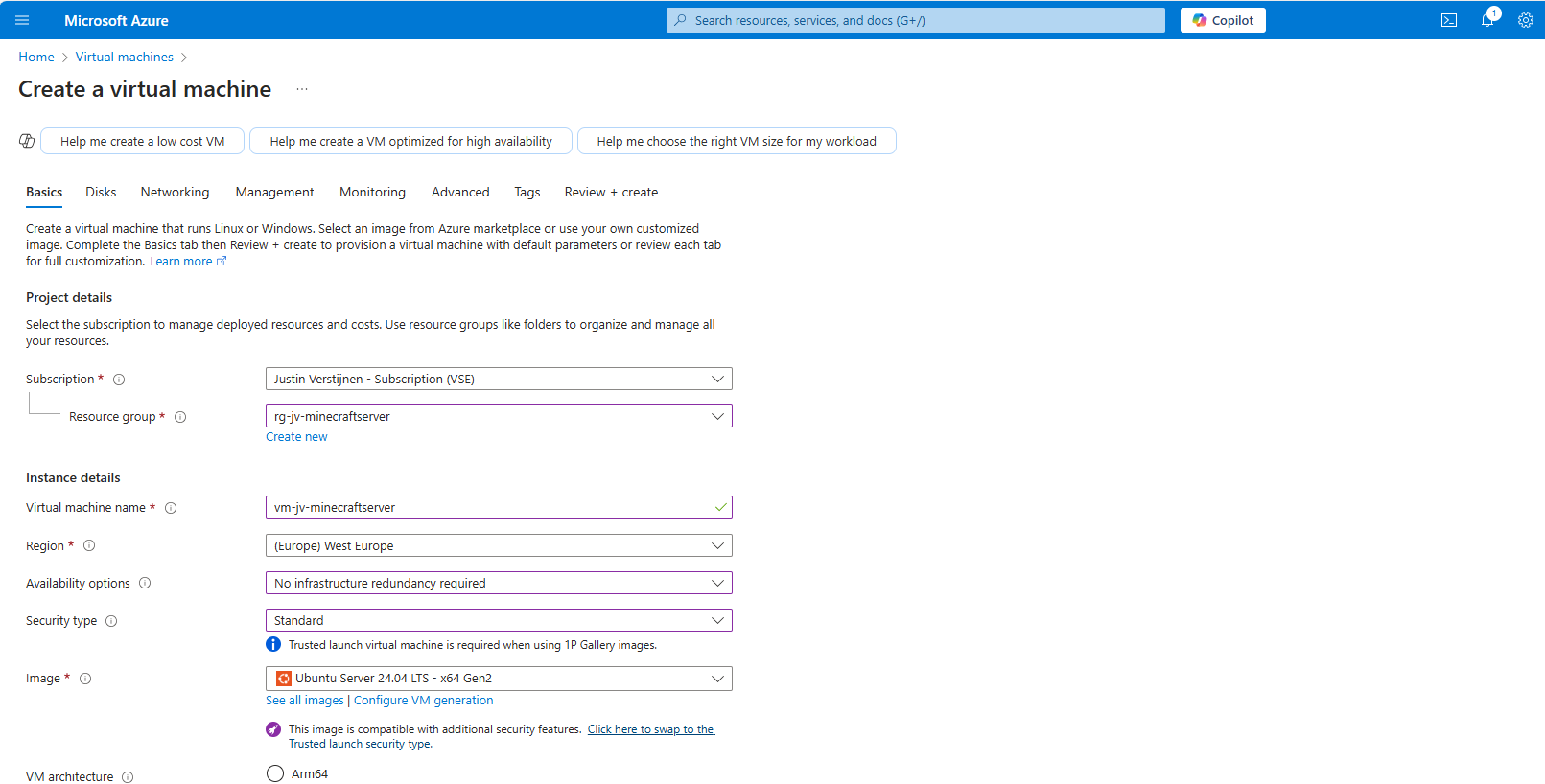

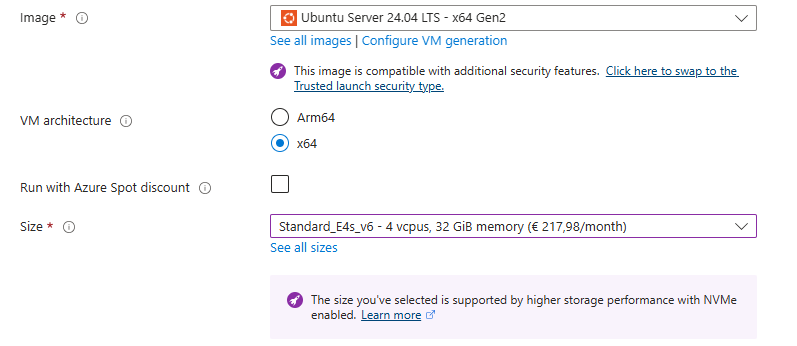

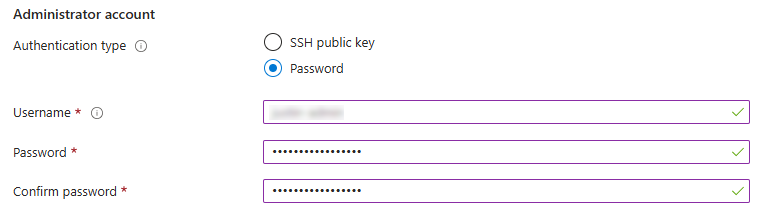

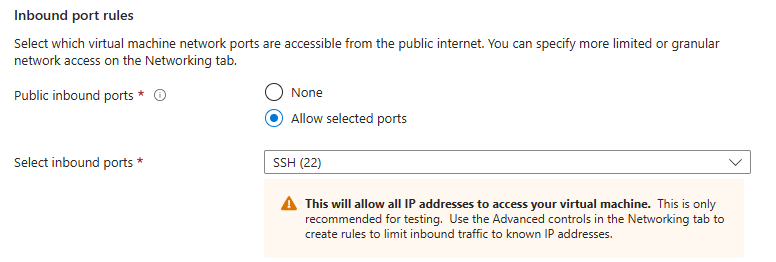

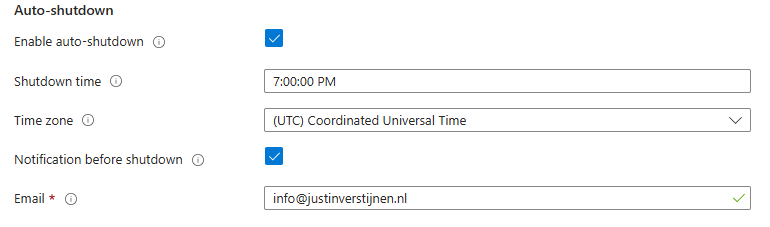

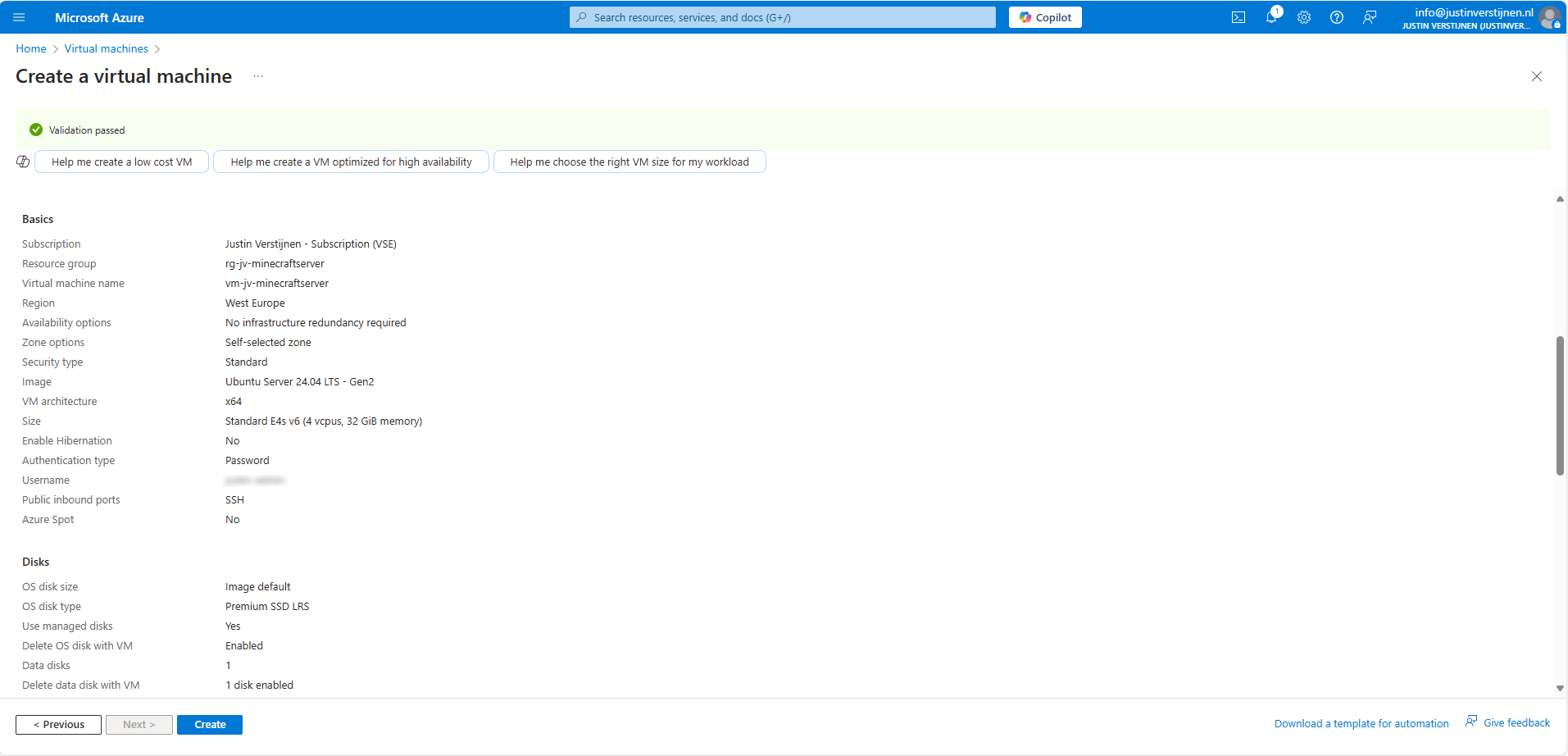

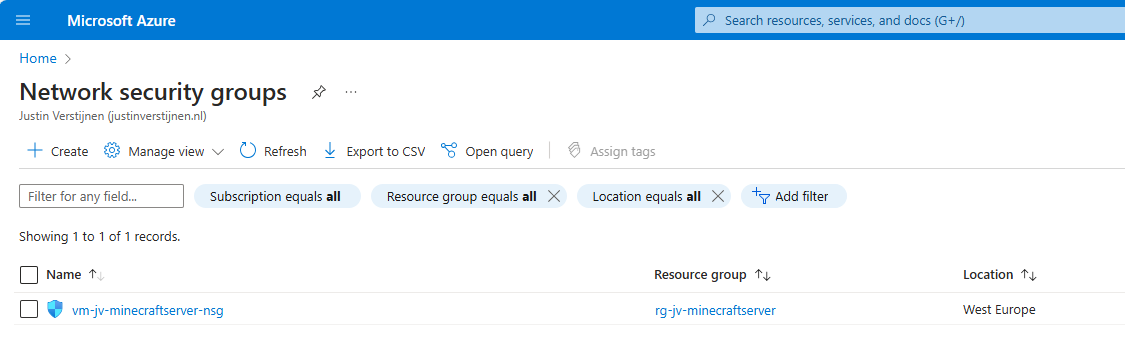

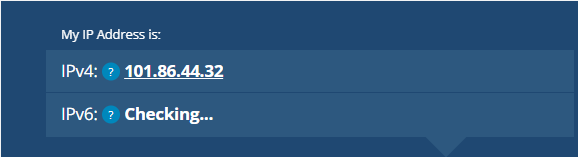

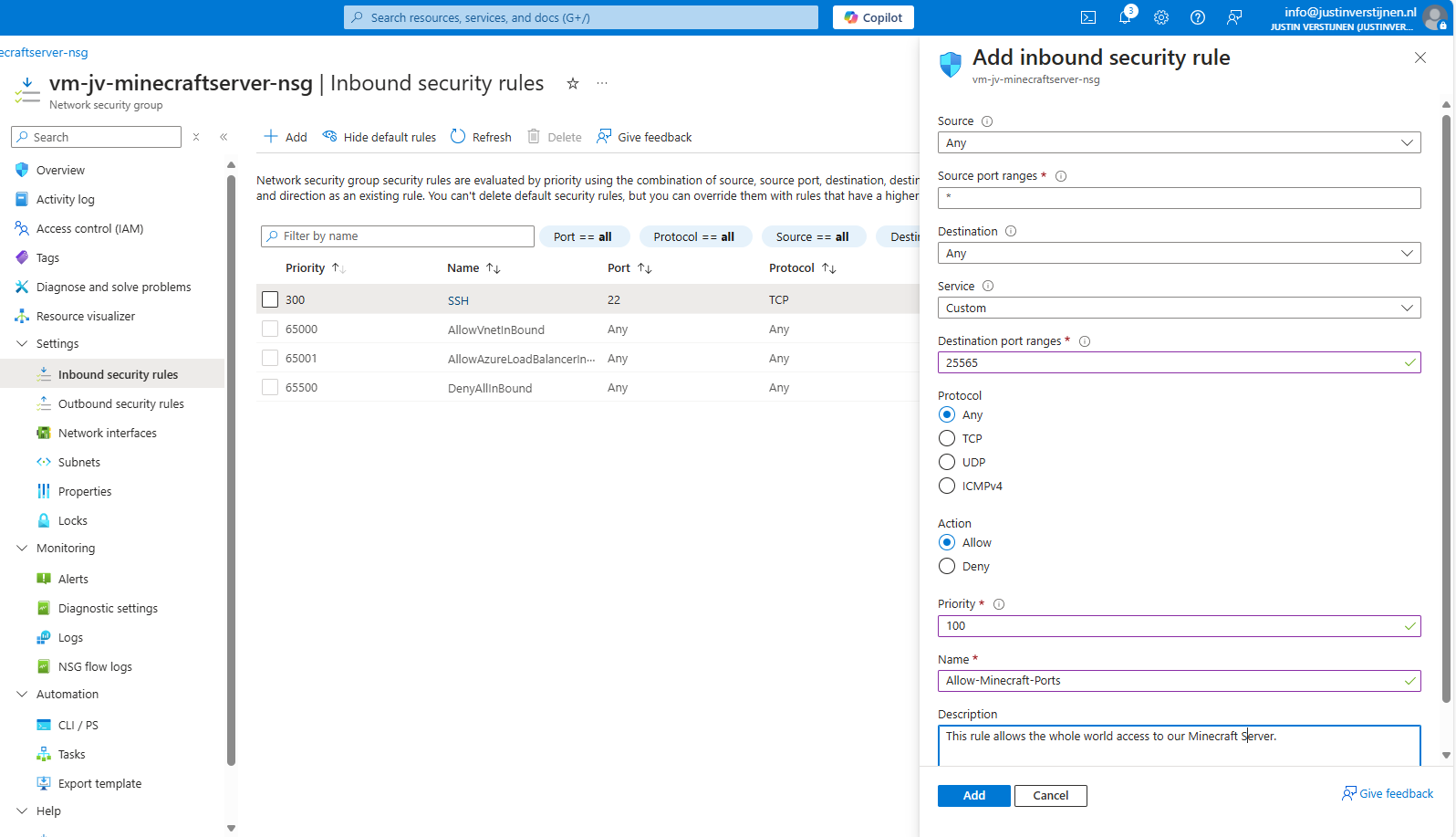

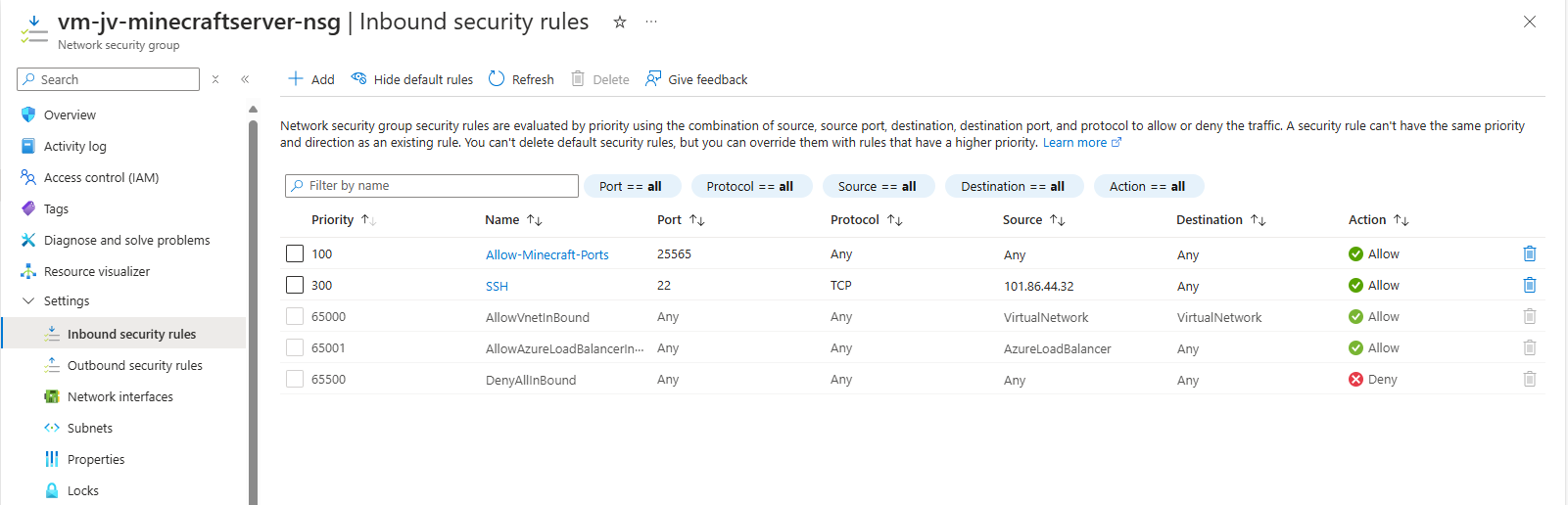

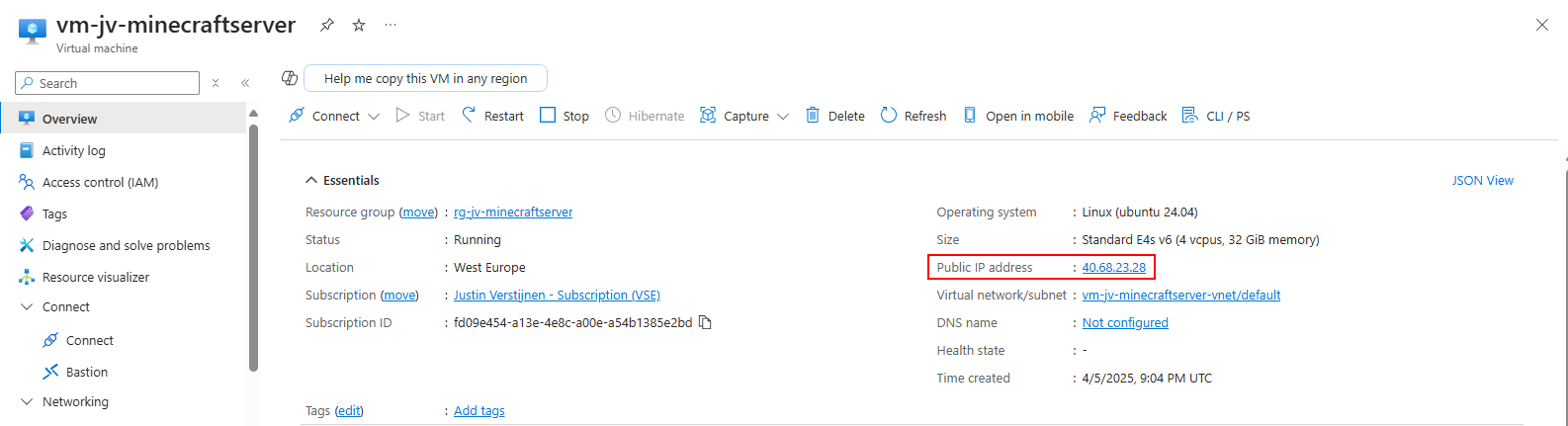

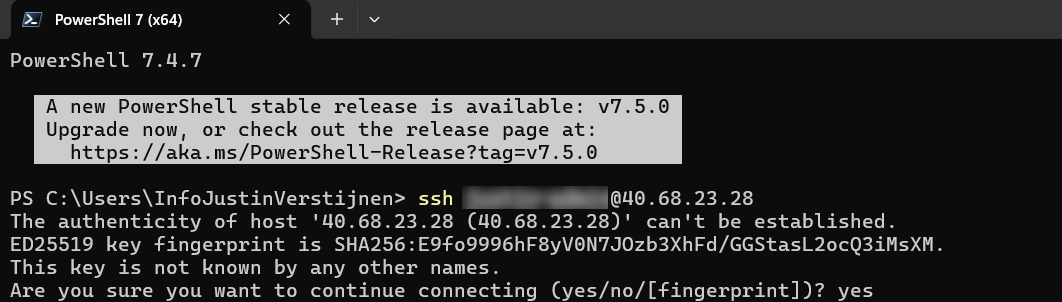

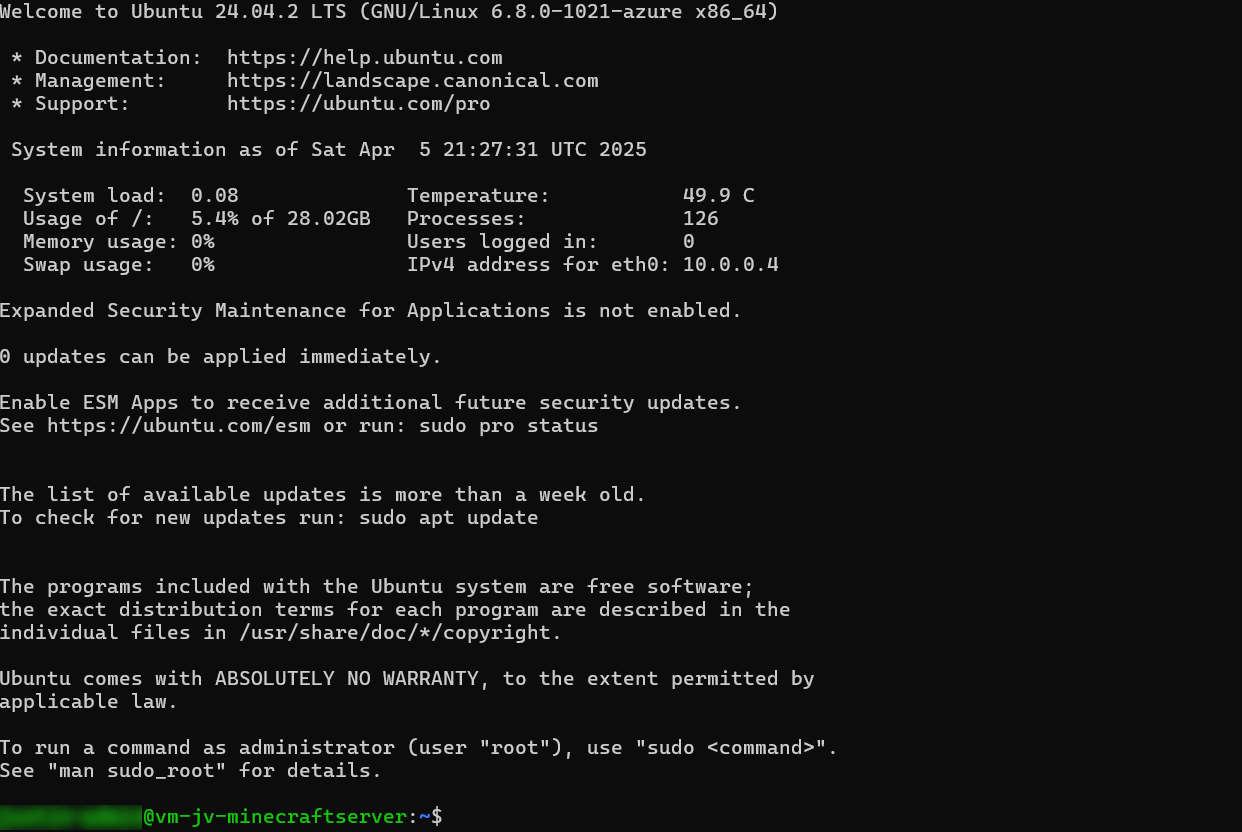

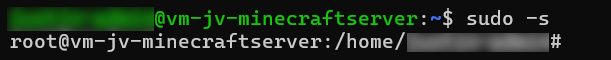

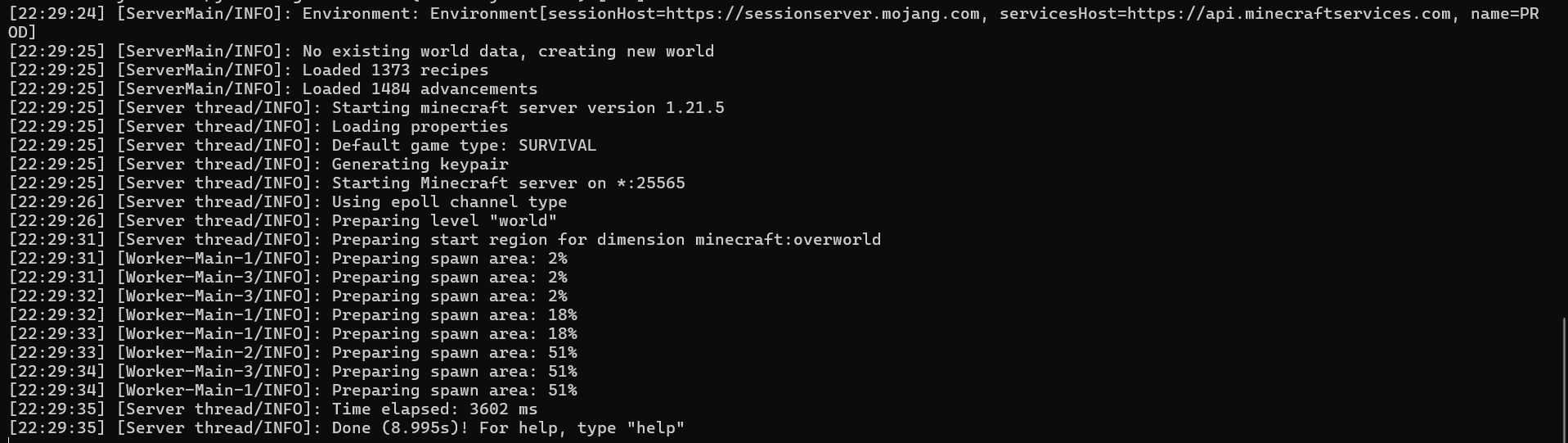

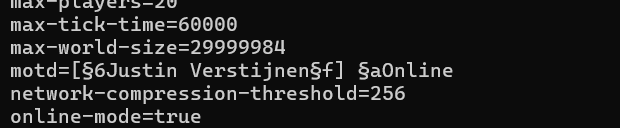

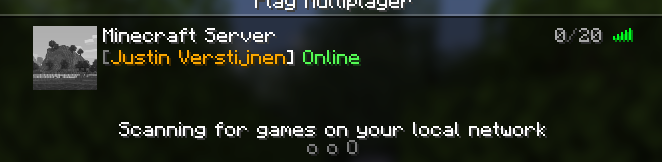

- Setup a Minecraft server on Azure

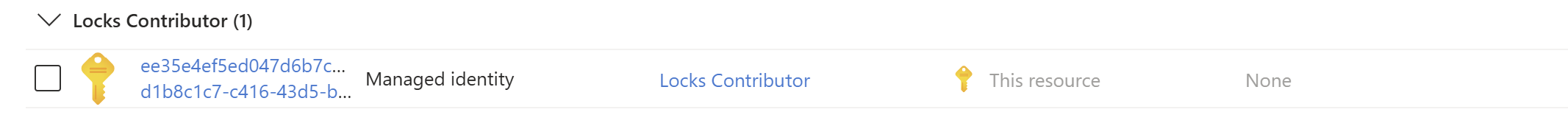

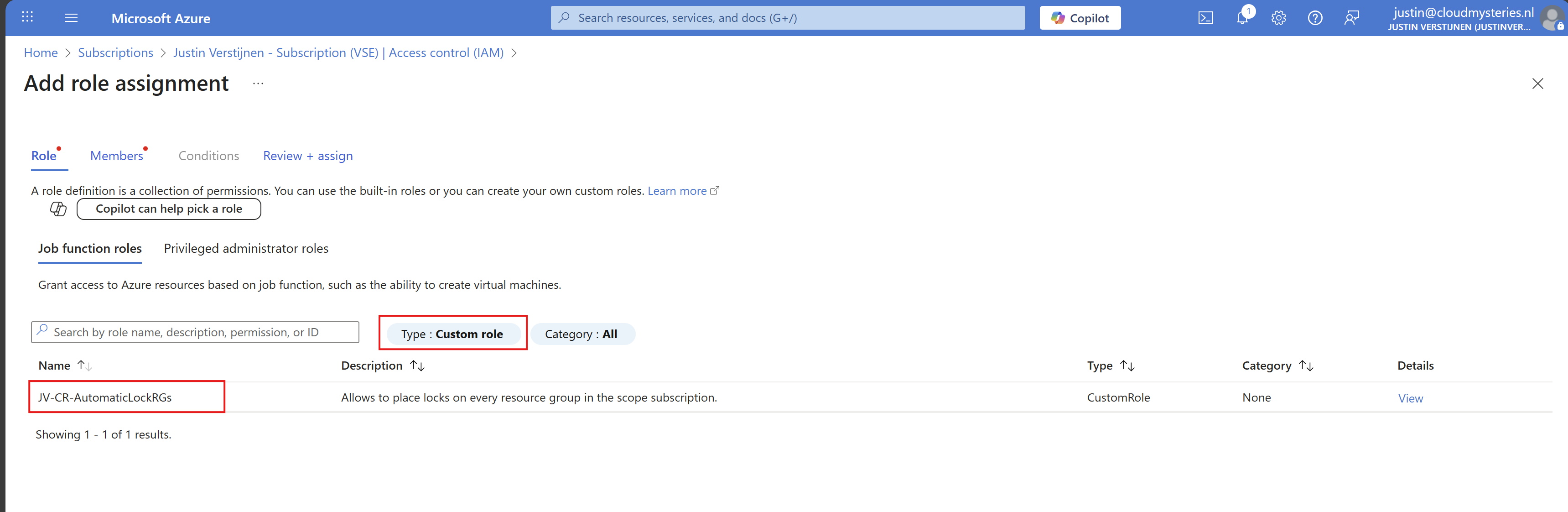

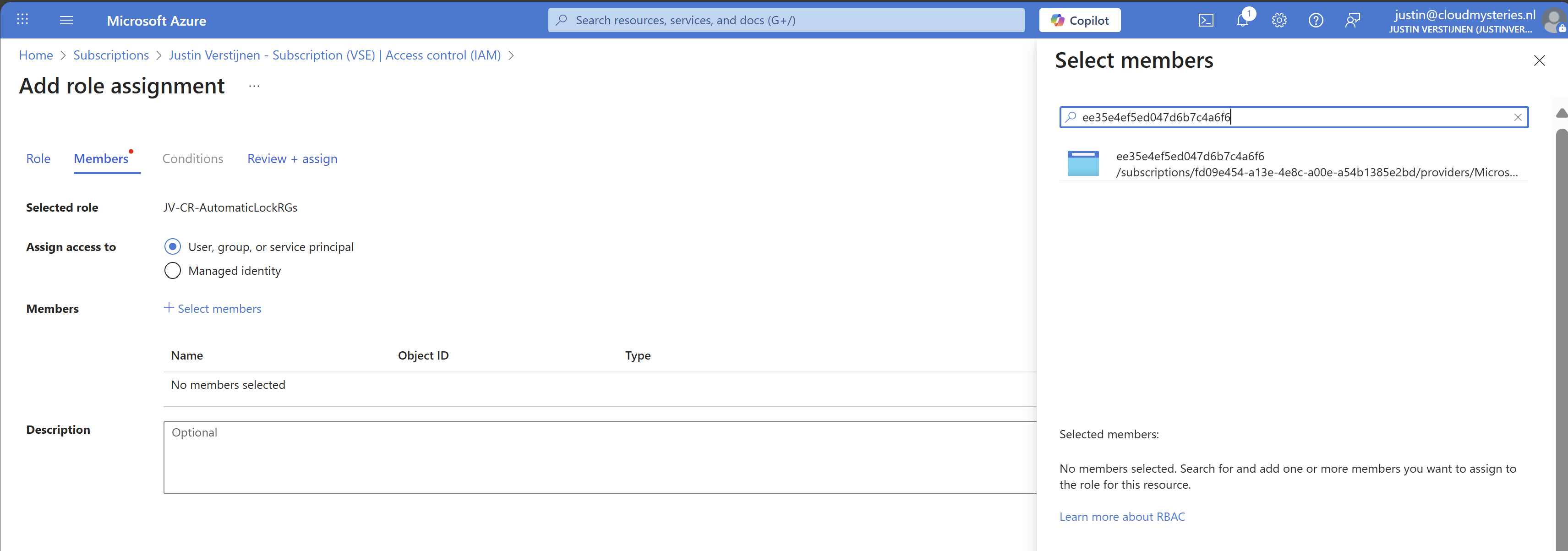

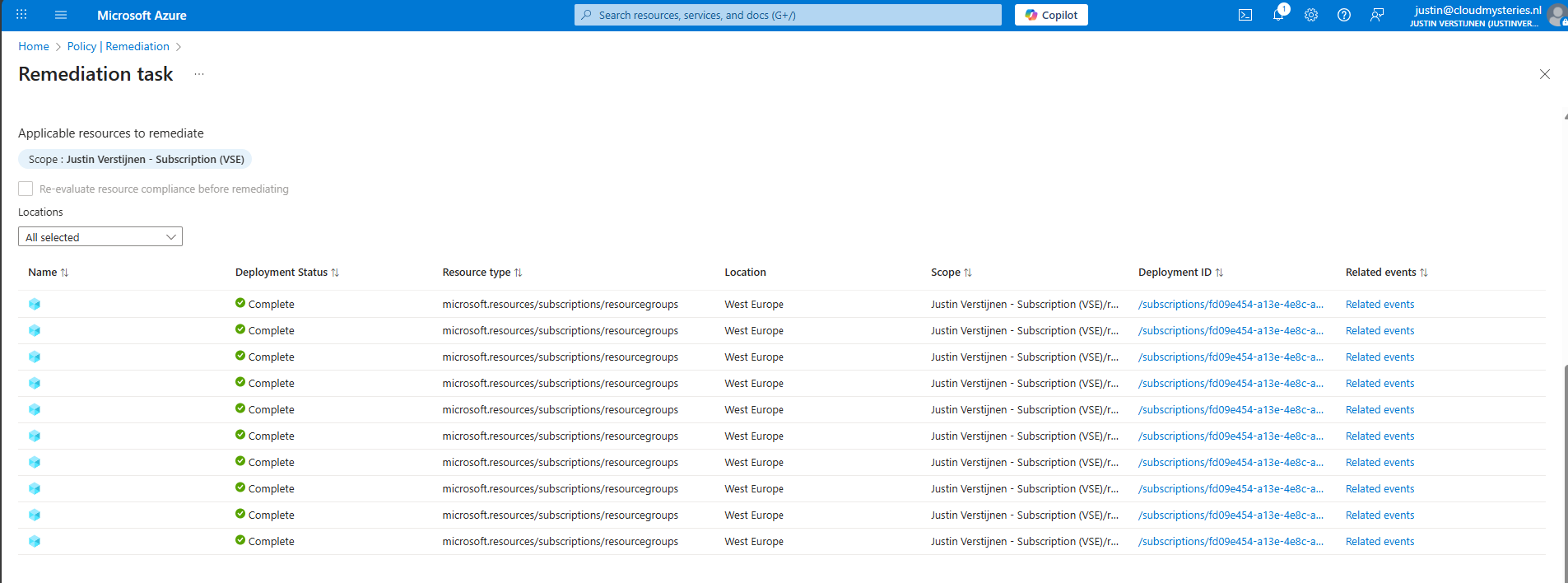

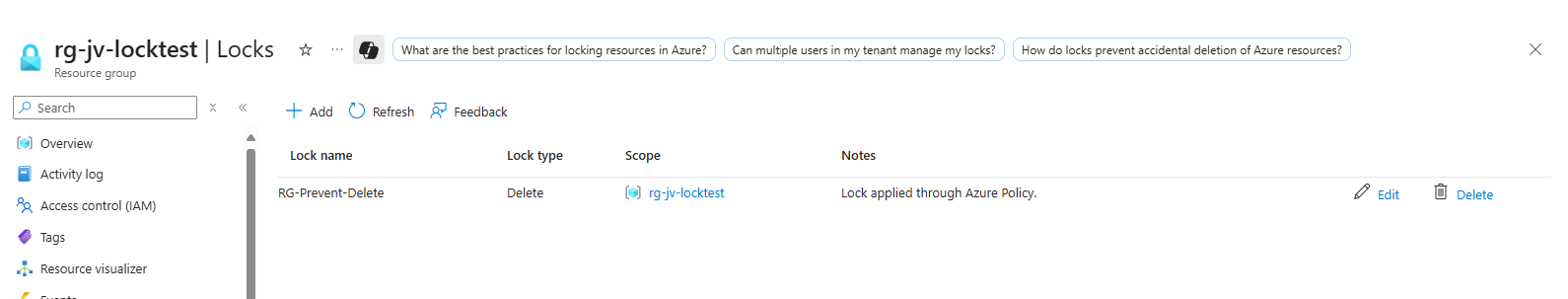

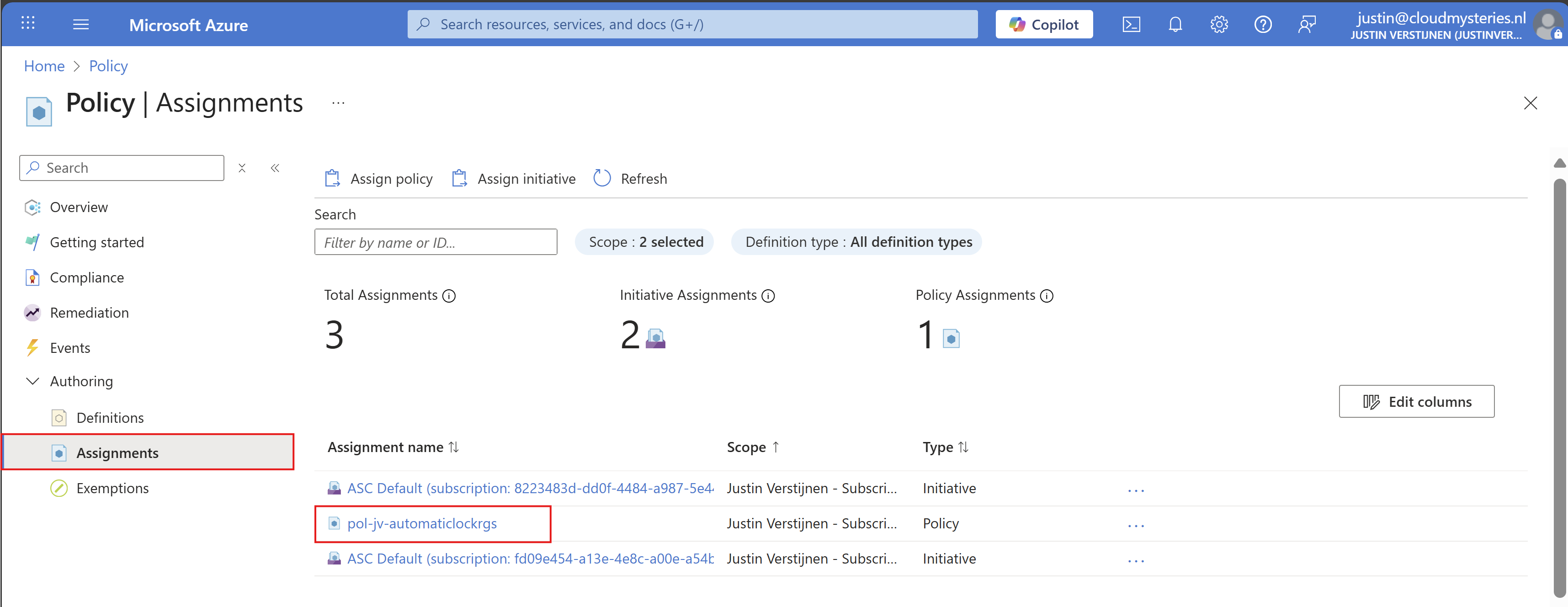

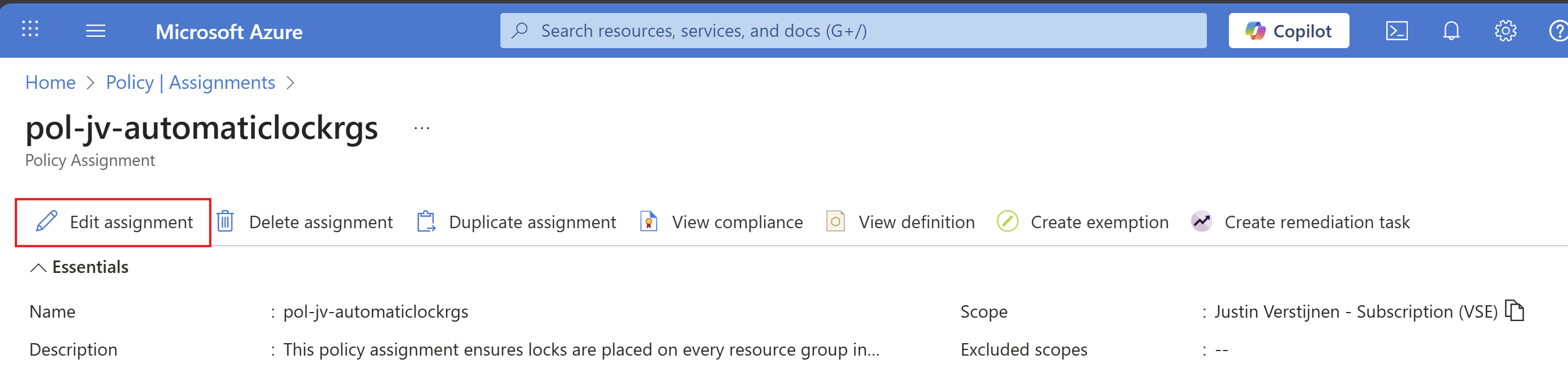

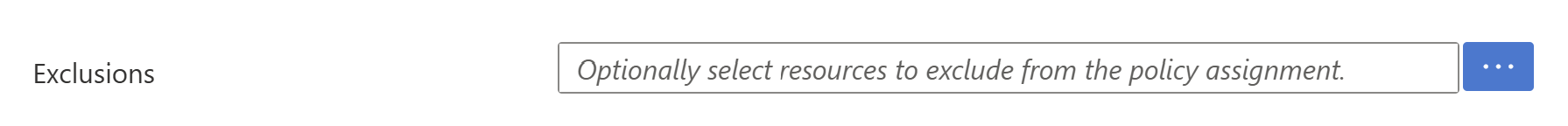

- Deploy Resource Group locks automatically with Azure Policy

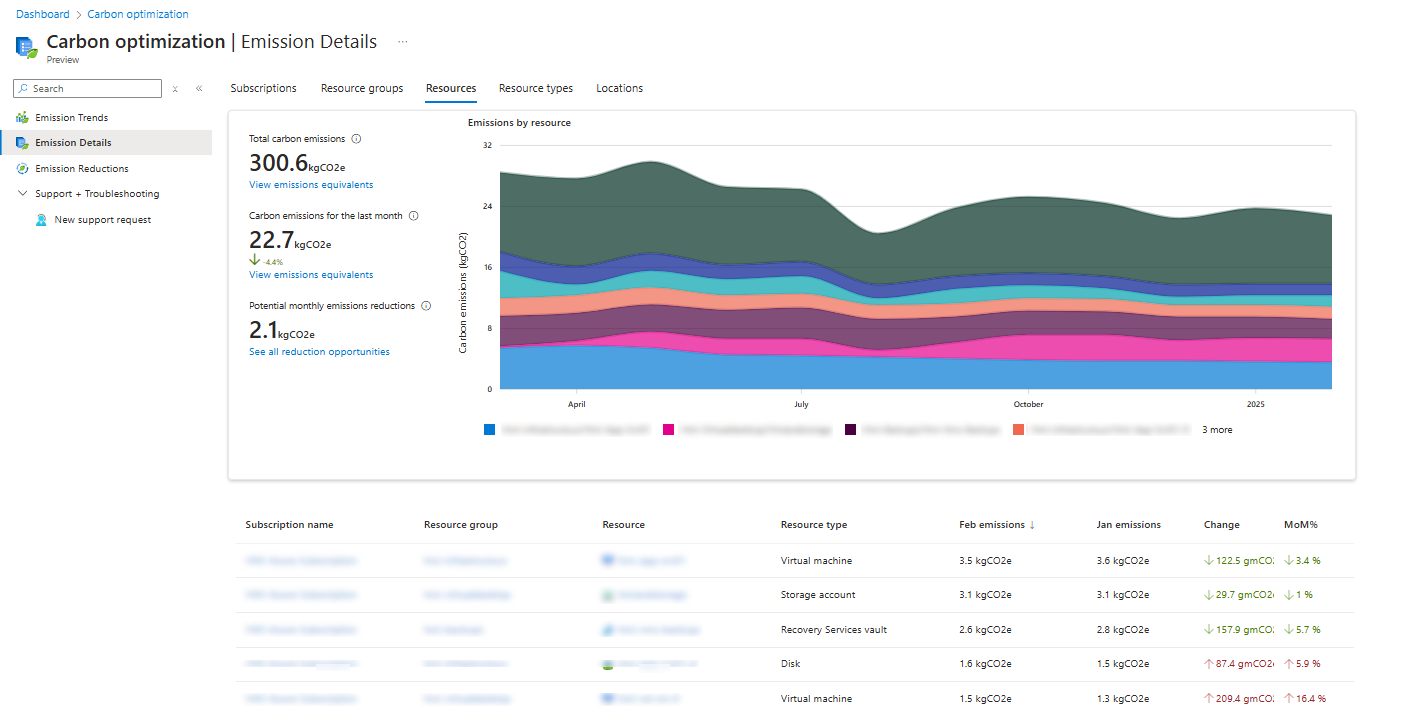

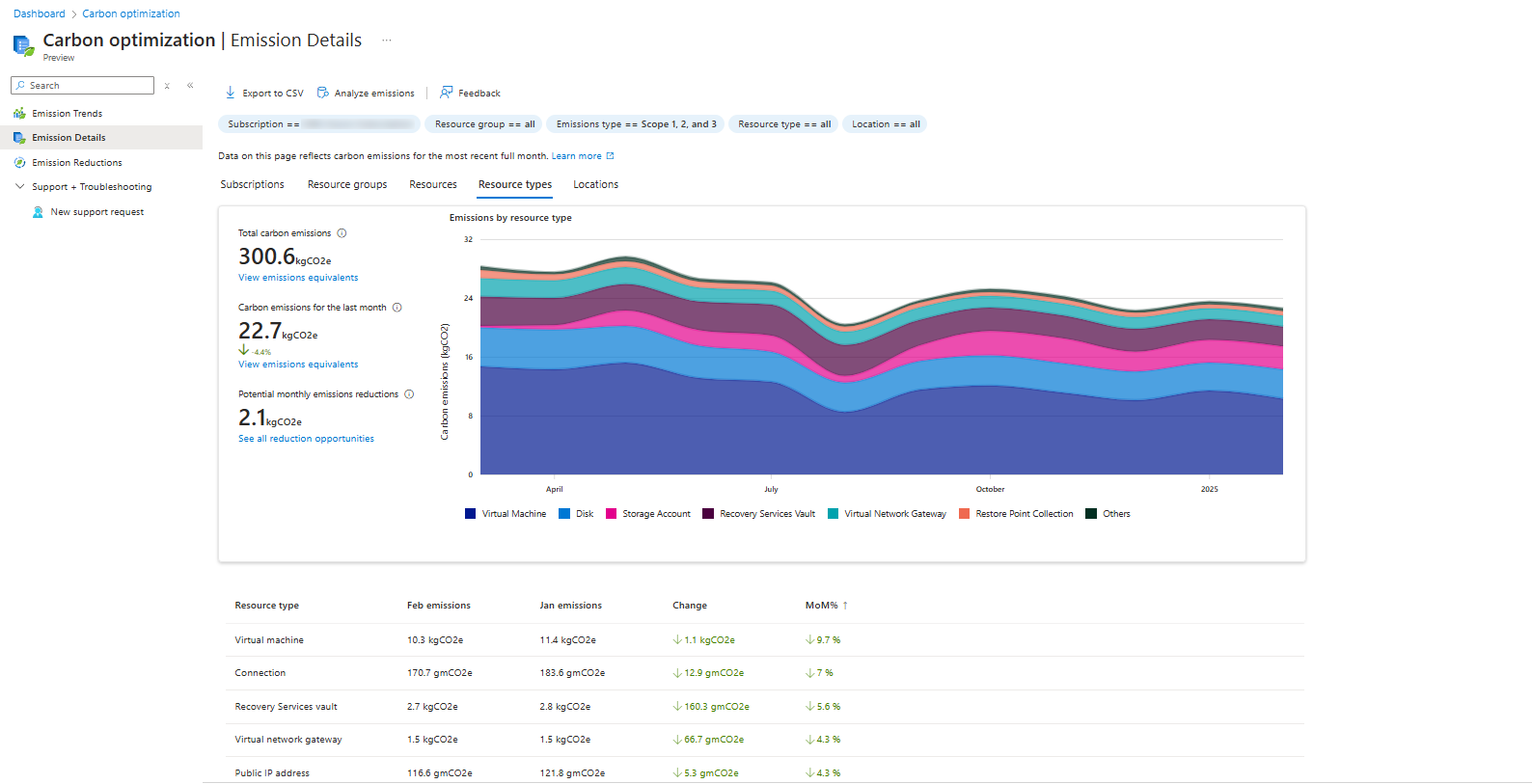

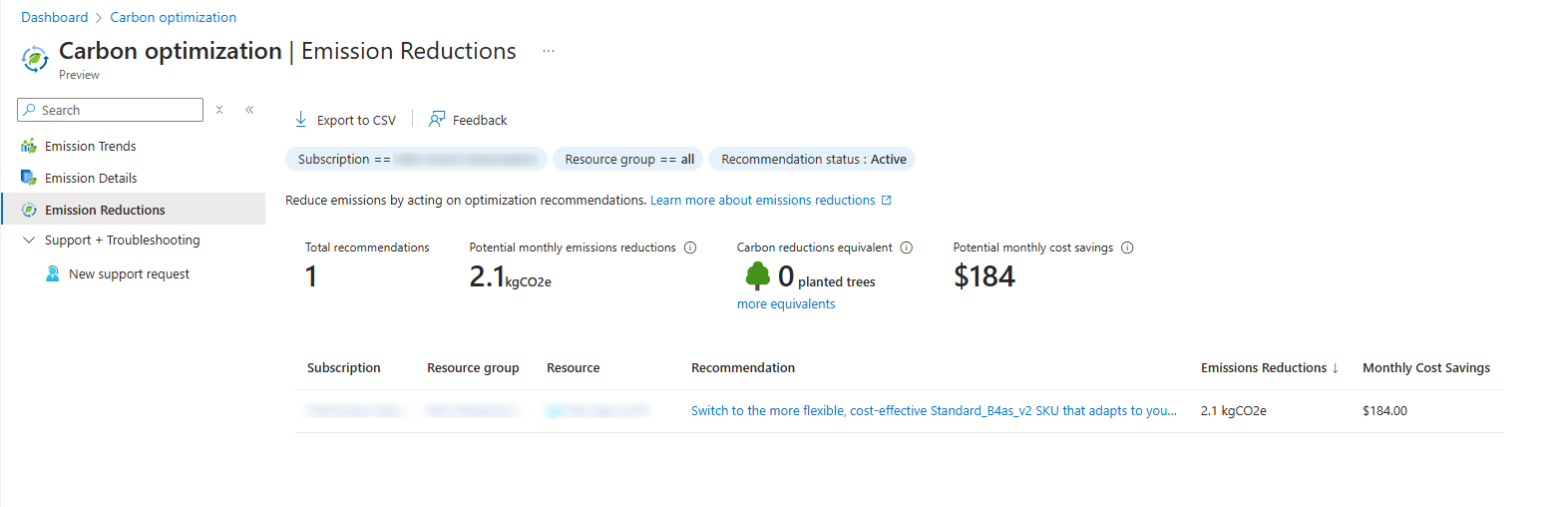

- Monitor and reduce carbon emissions (CO2) in Azure

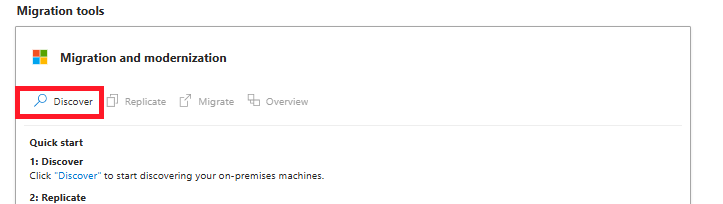

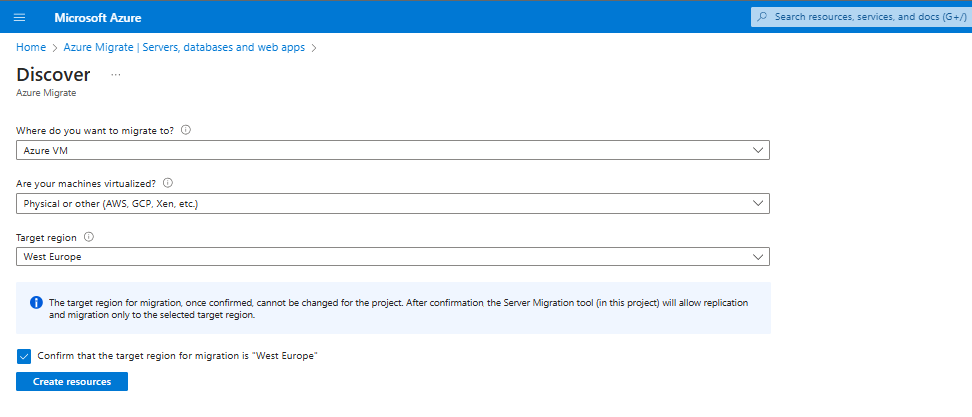

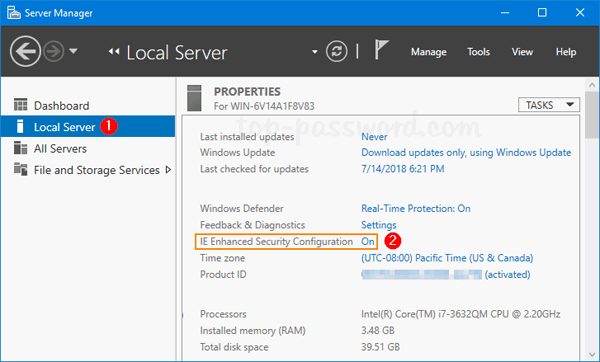

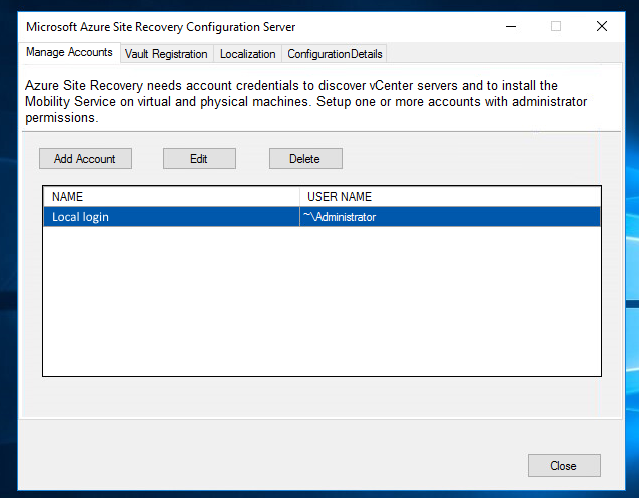

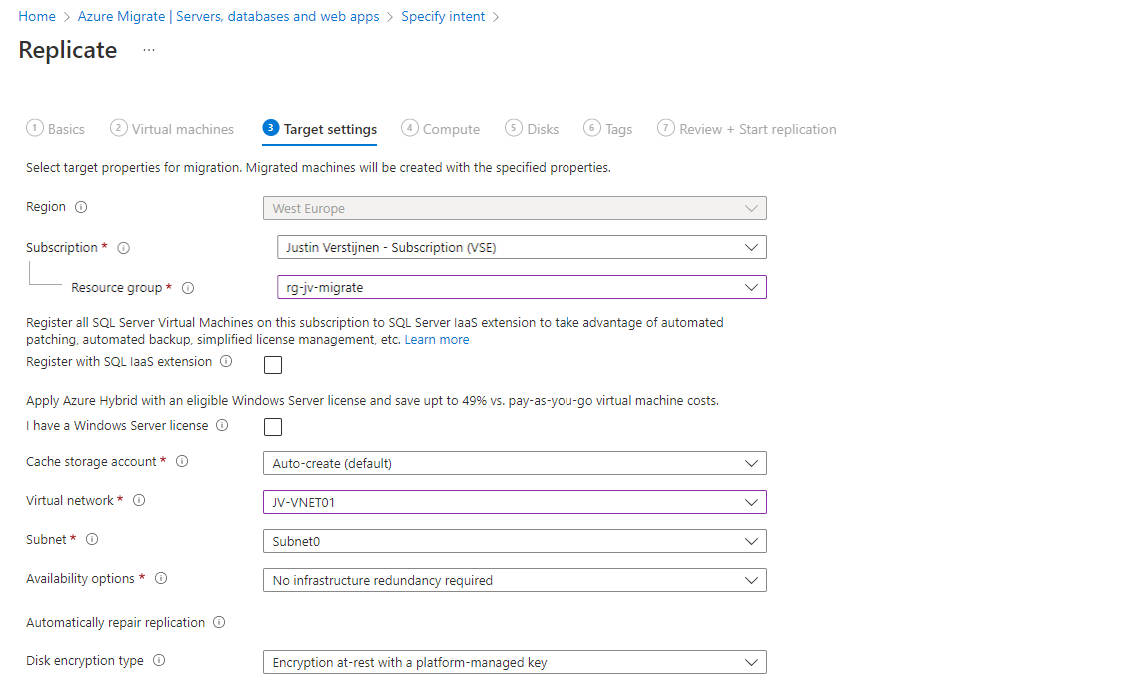

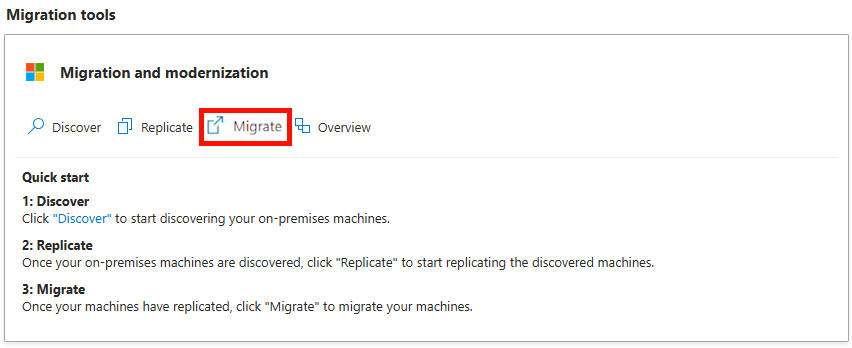

- Migrate servers with Azure Migrate in 7 steps

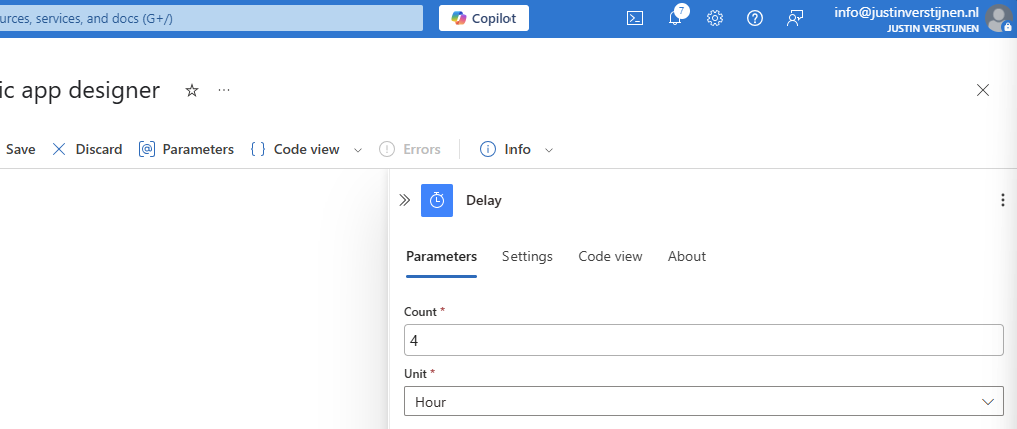

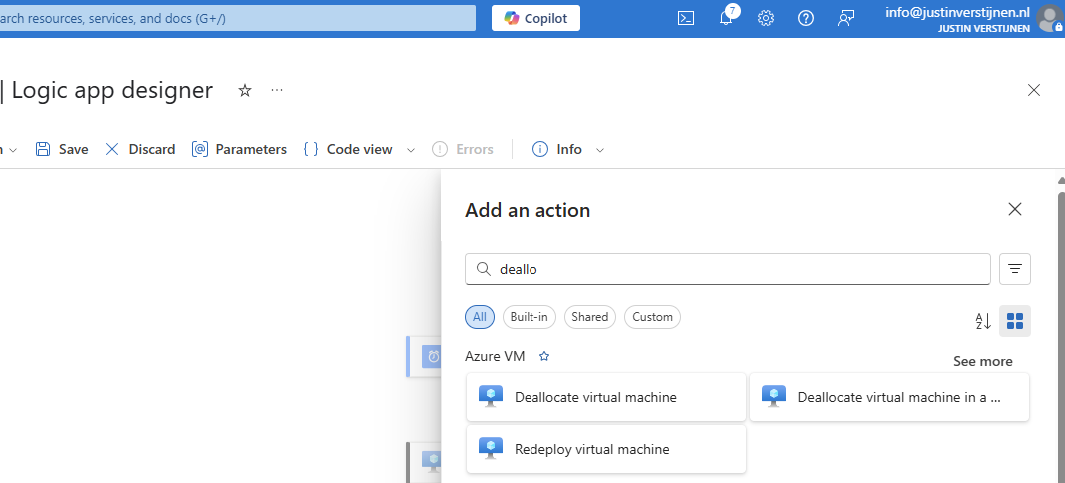

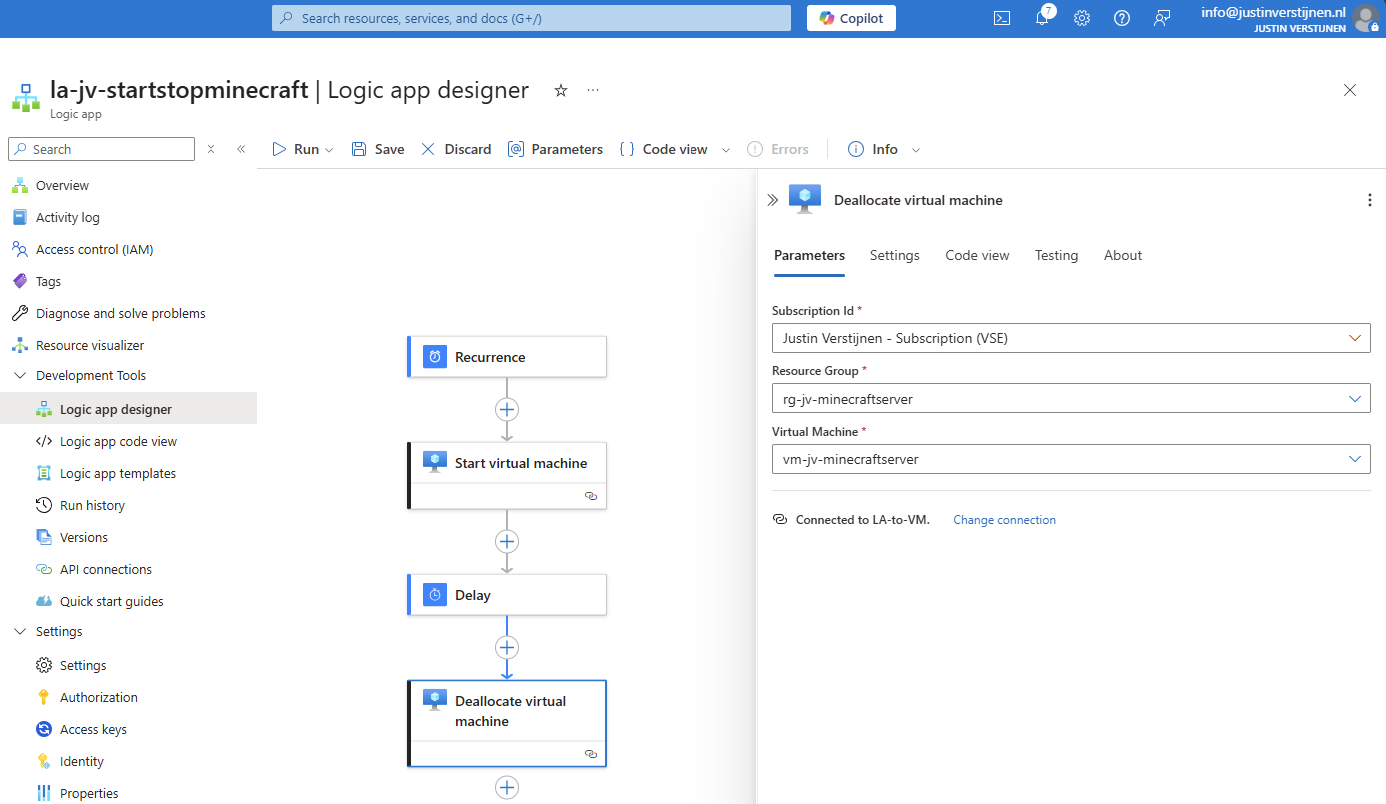

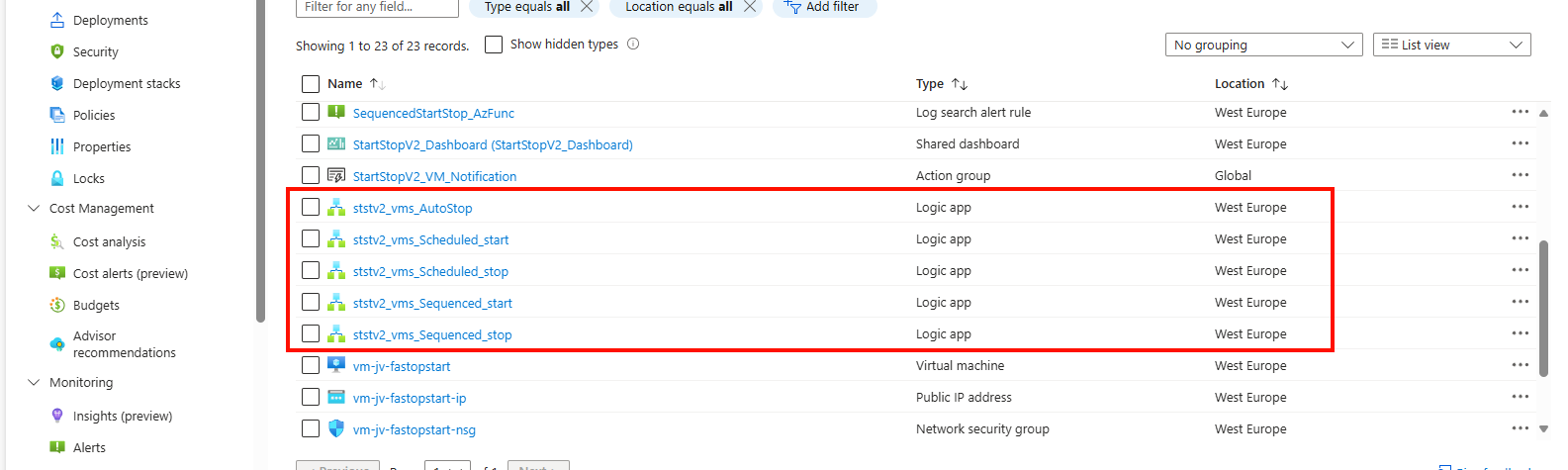

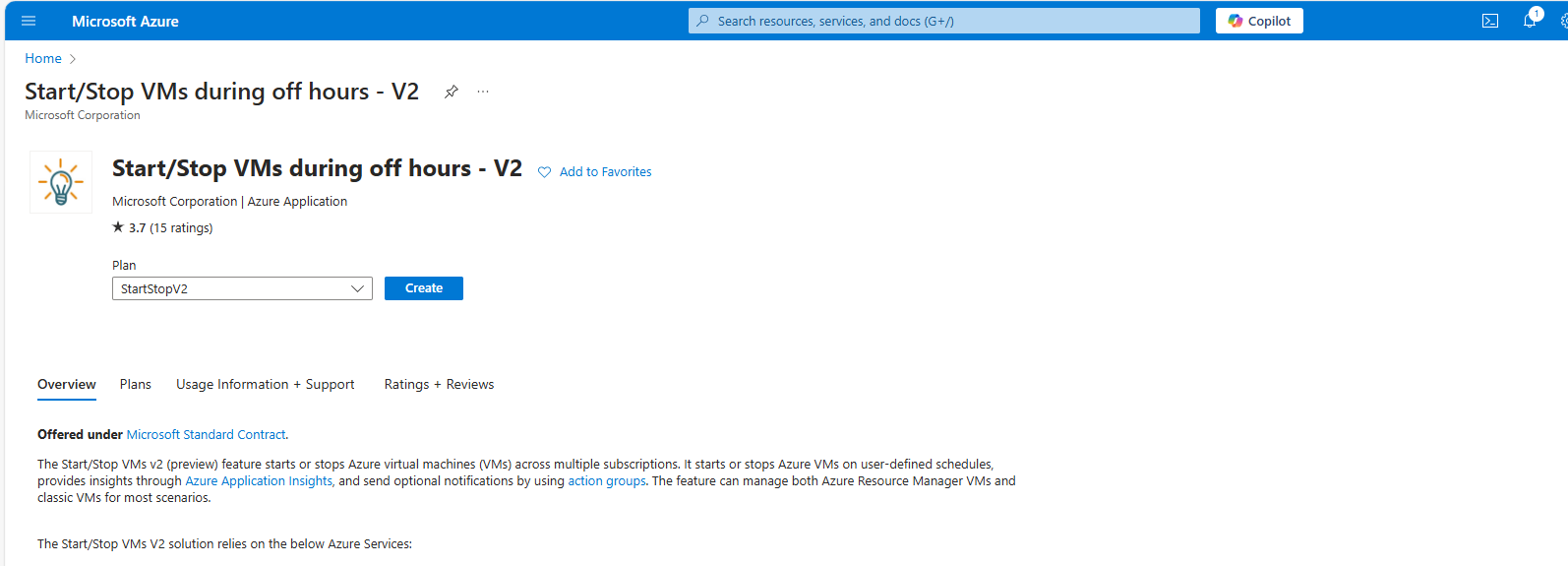

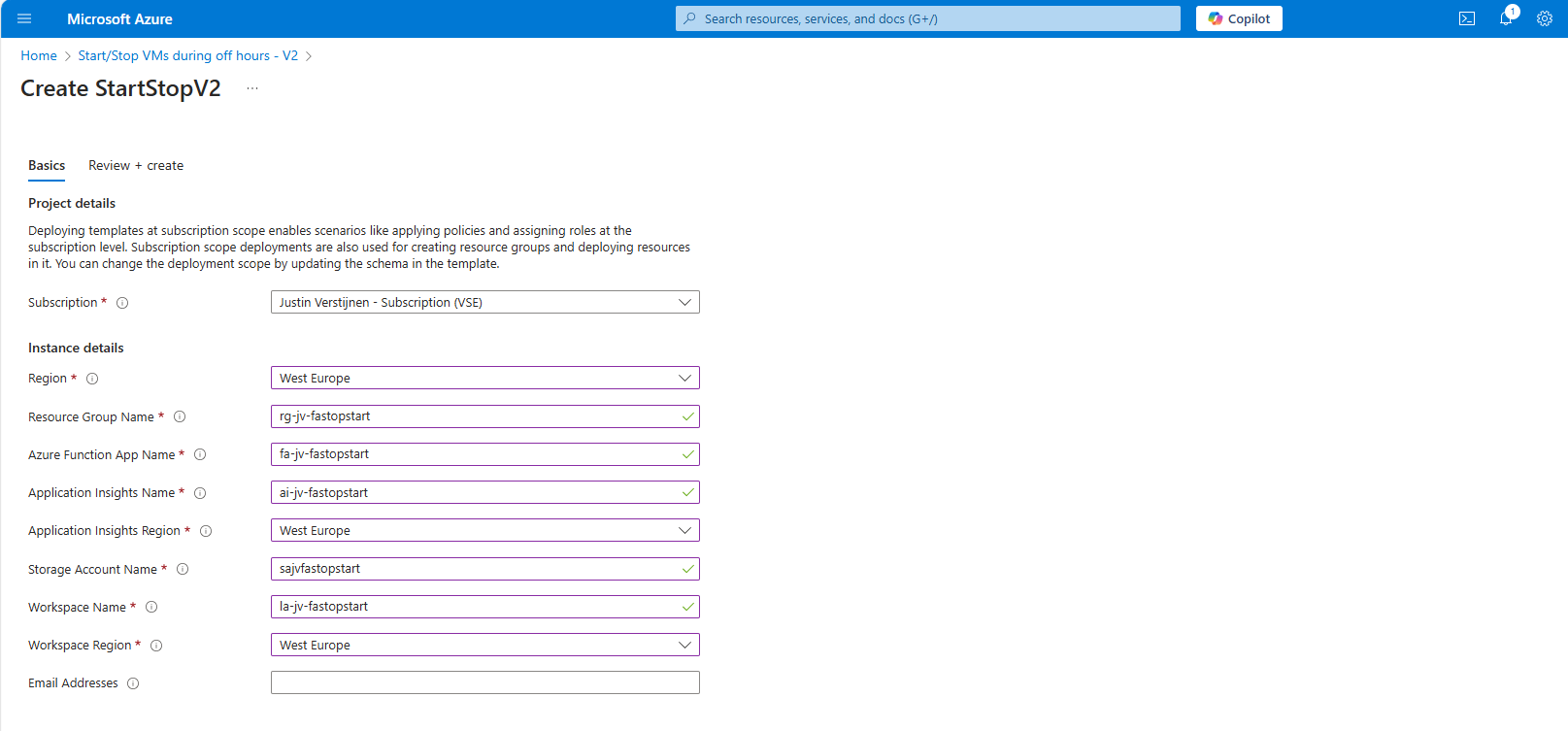

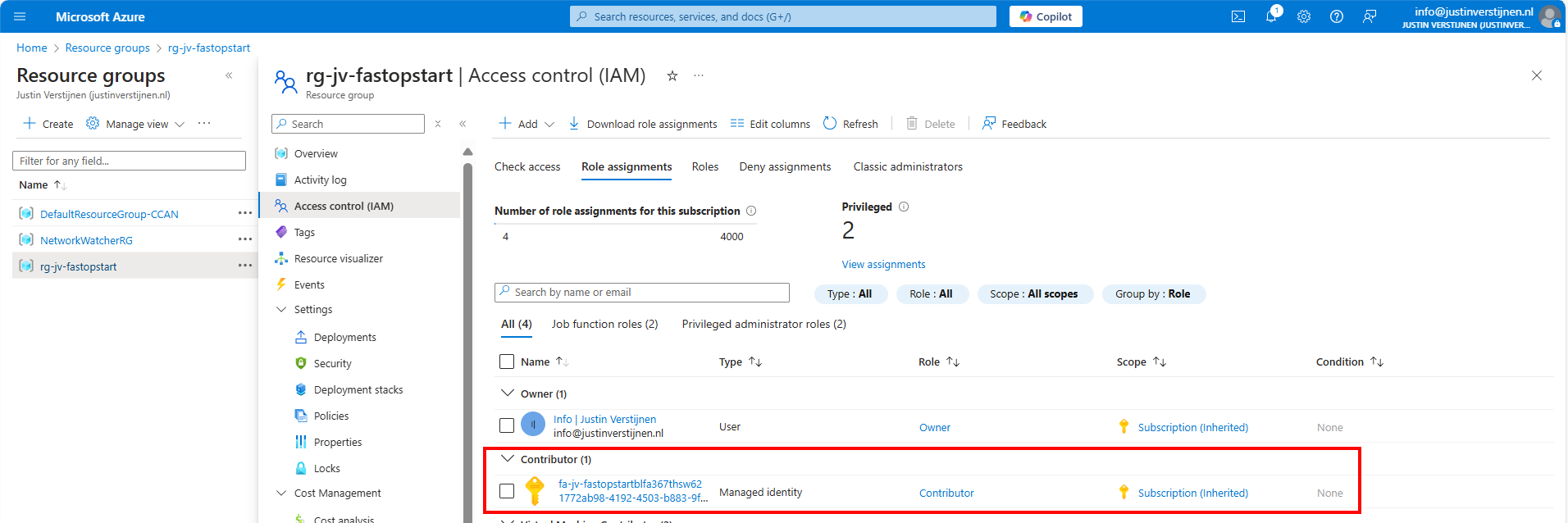

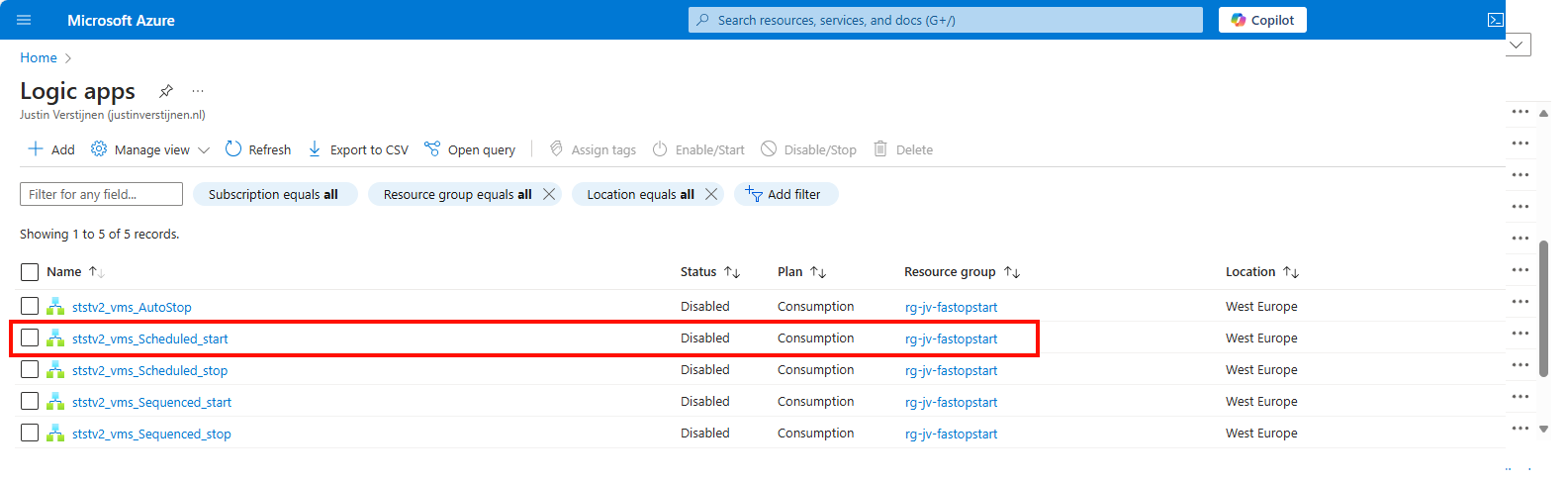

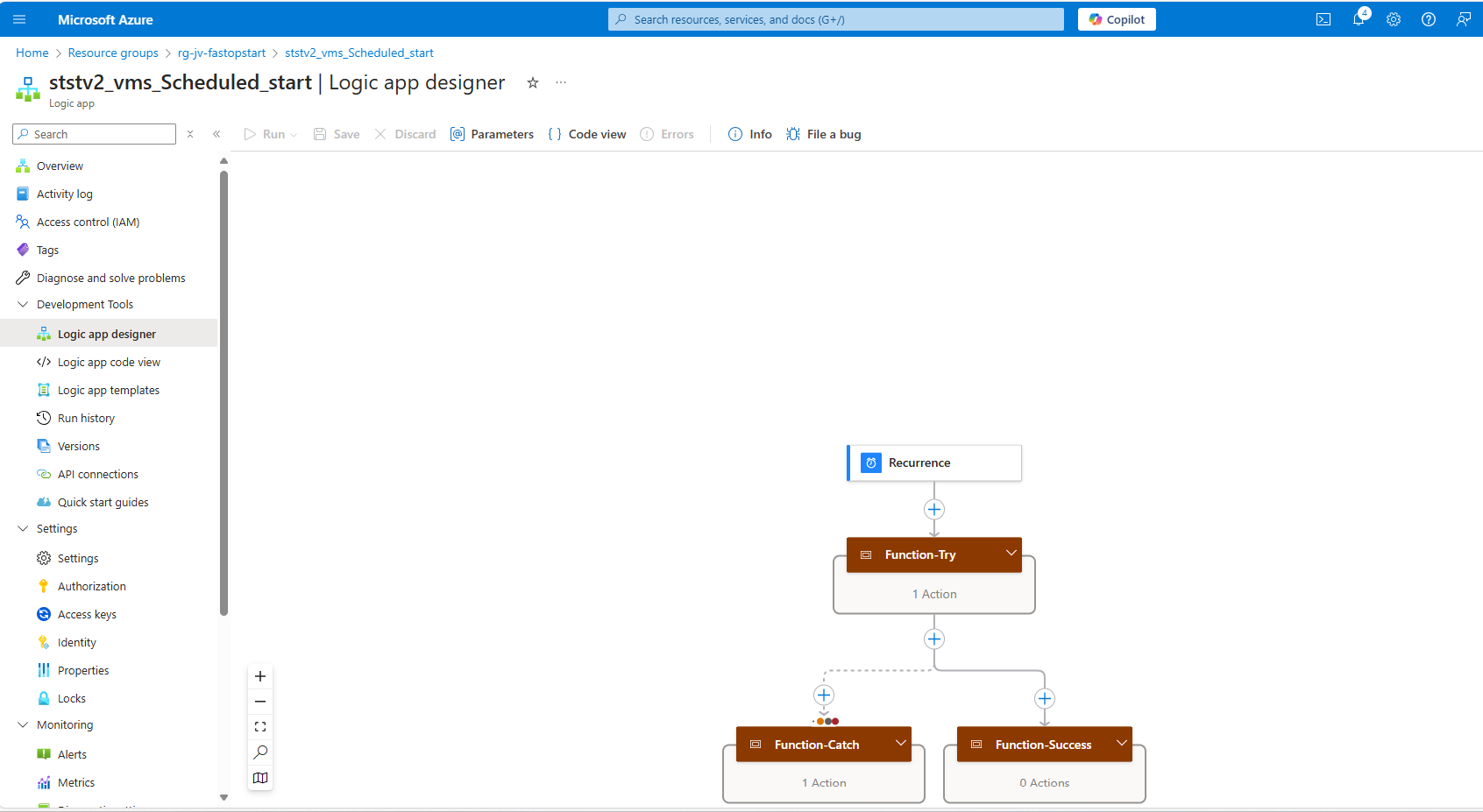

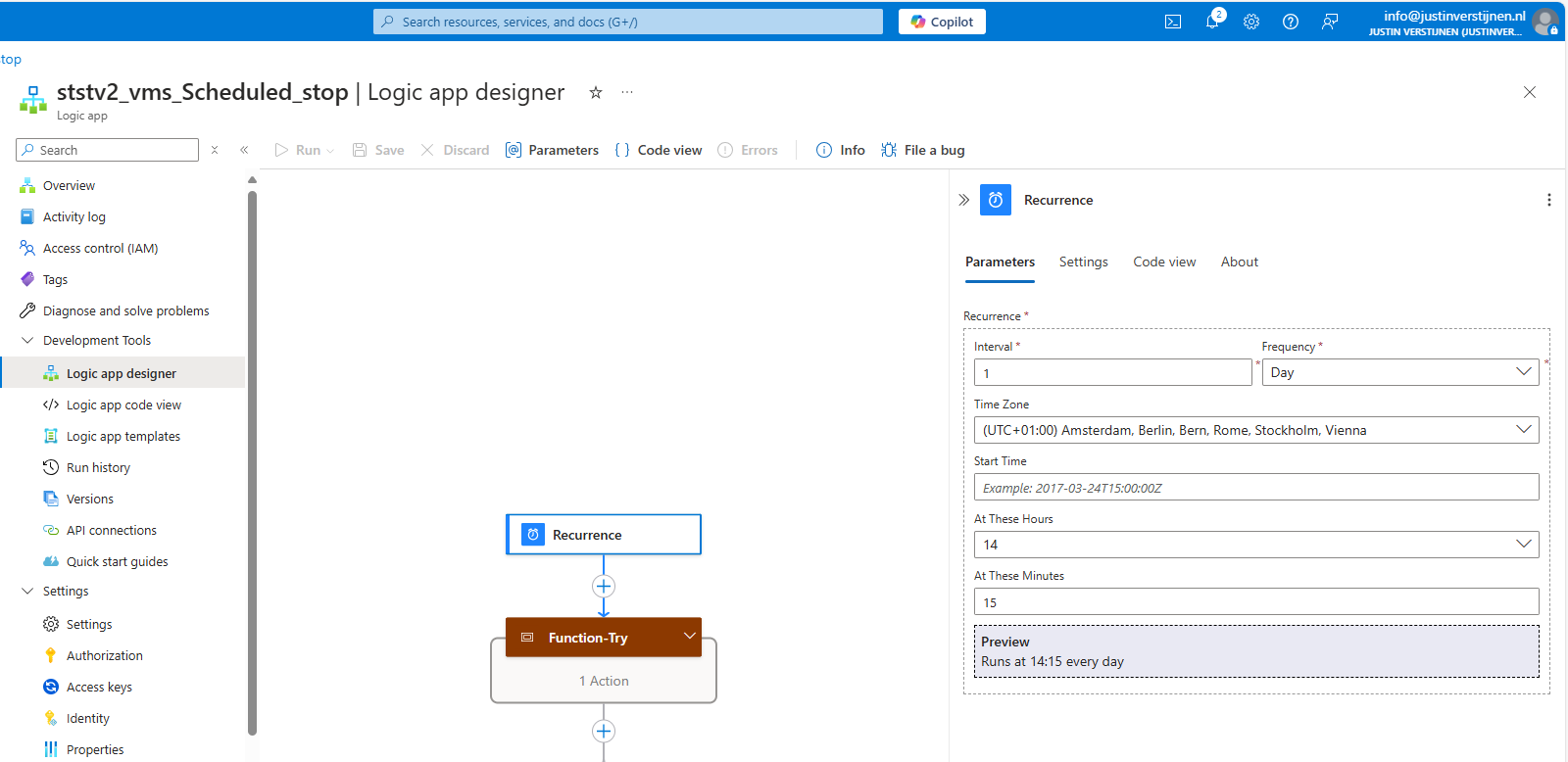

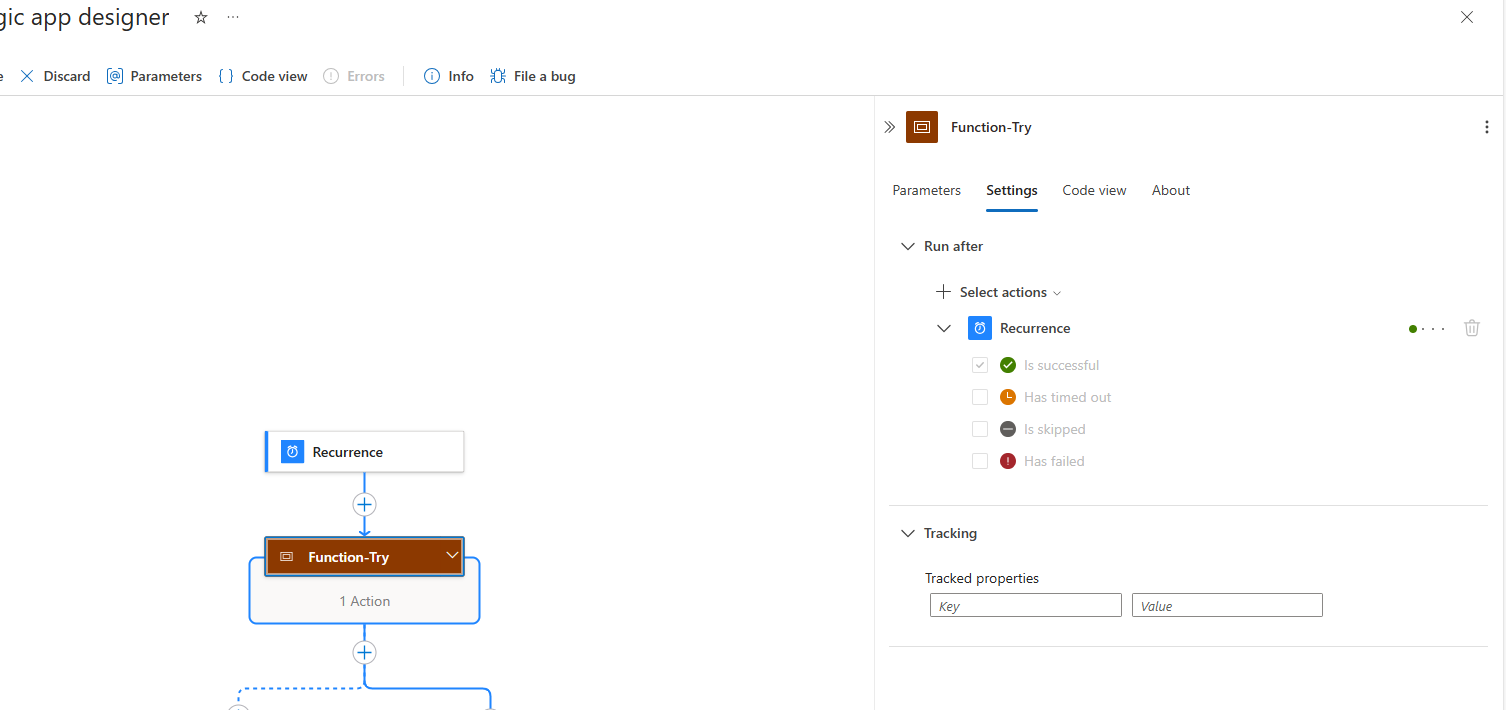

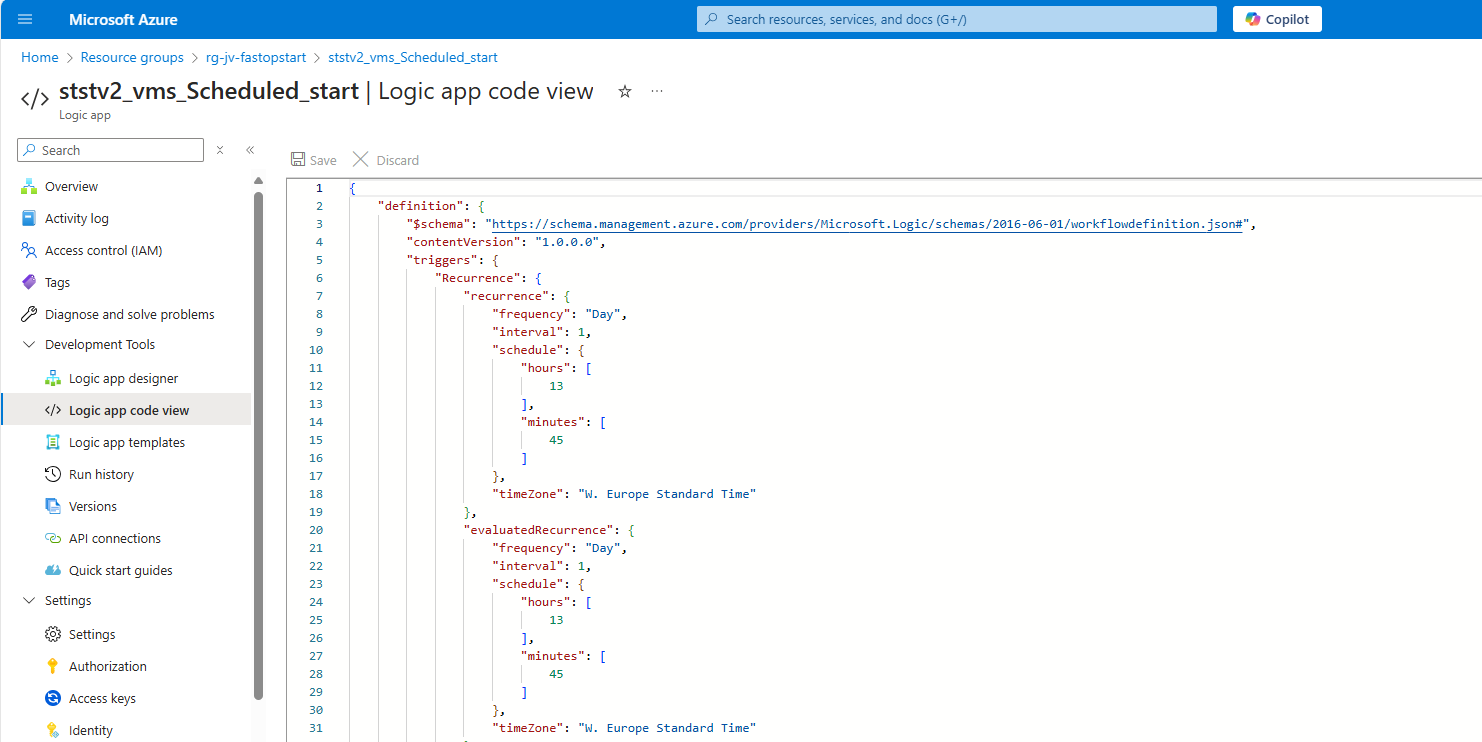

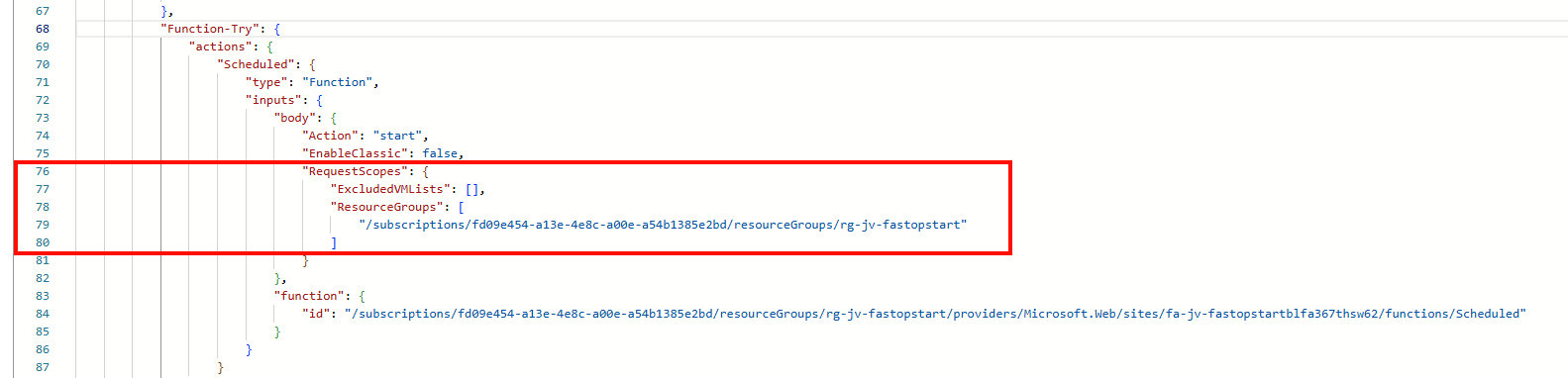

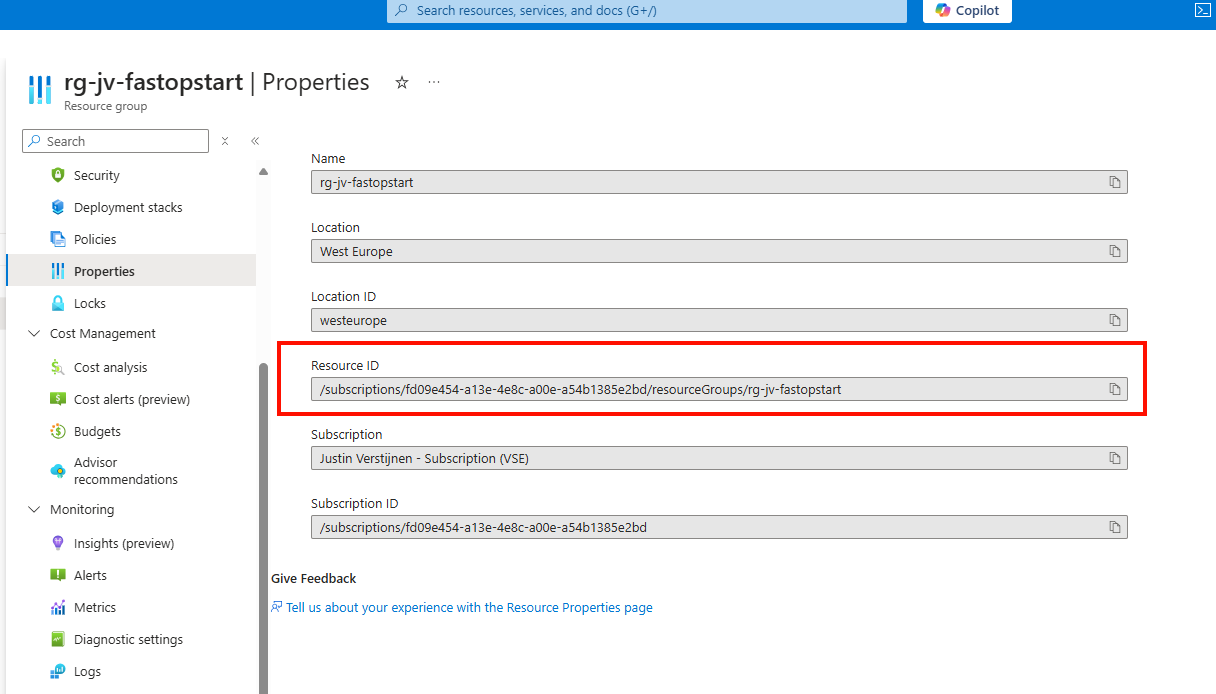

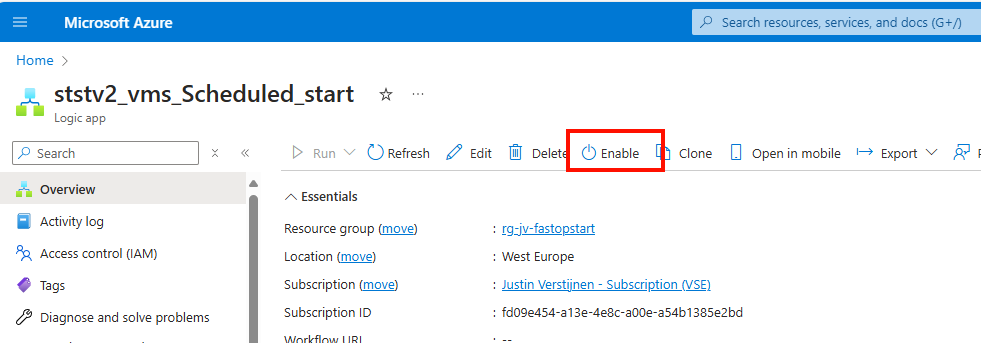

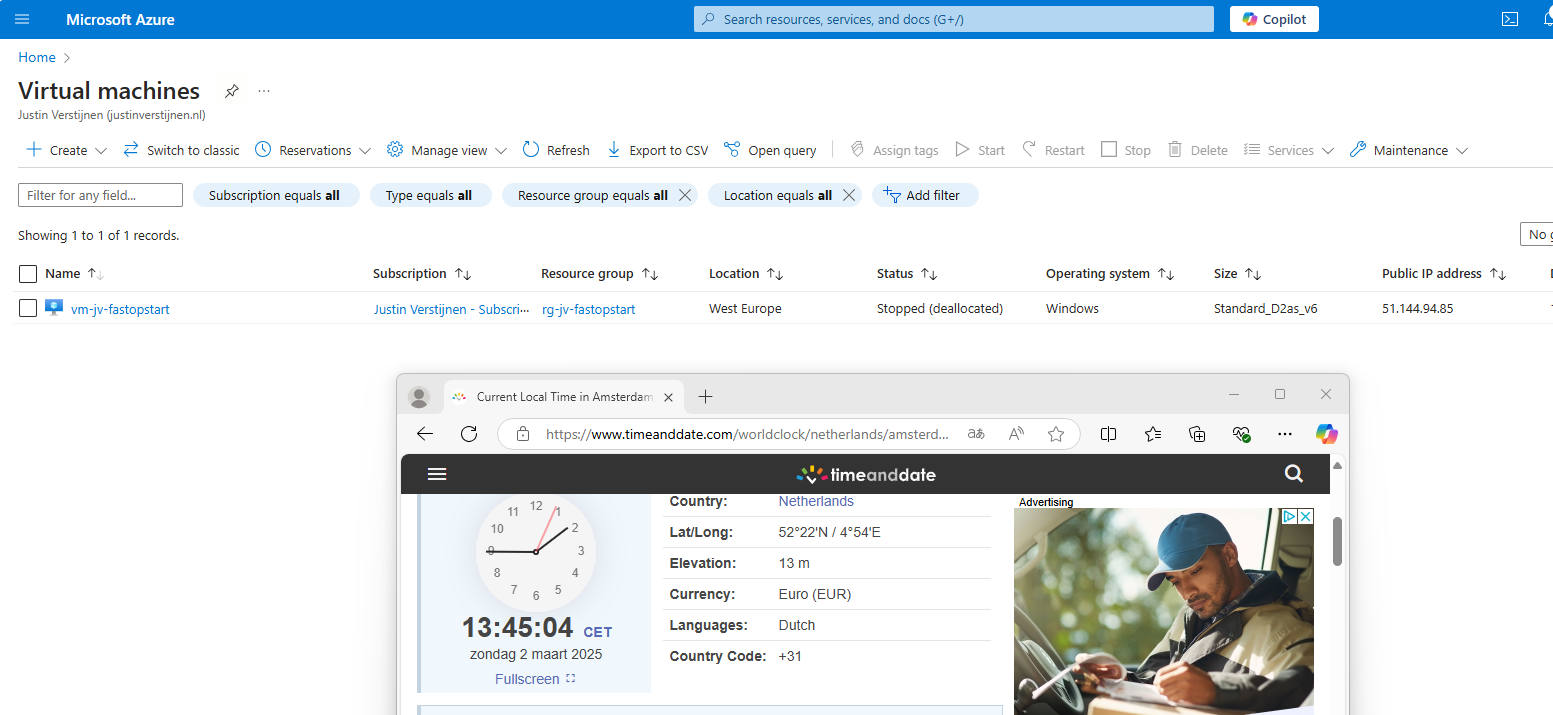

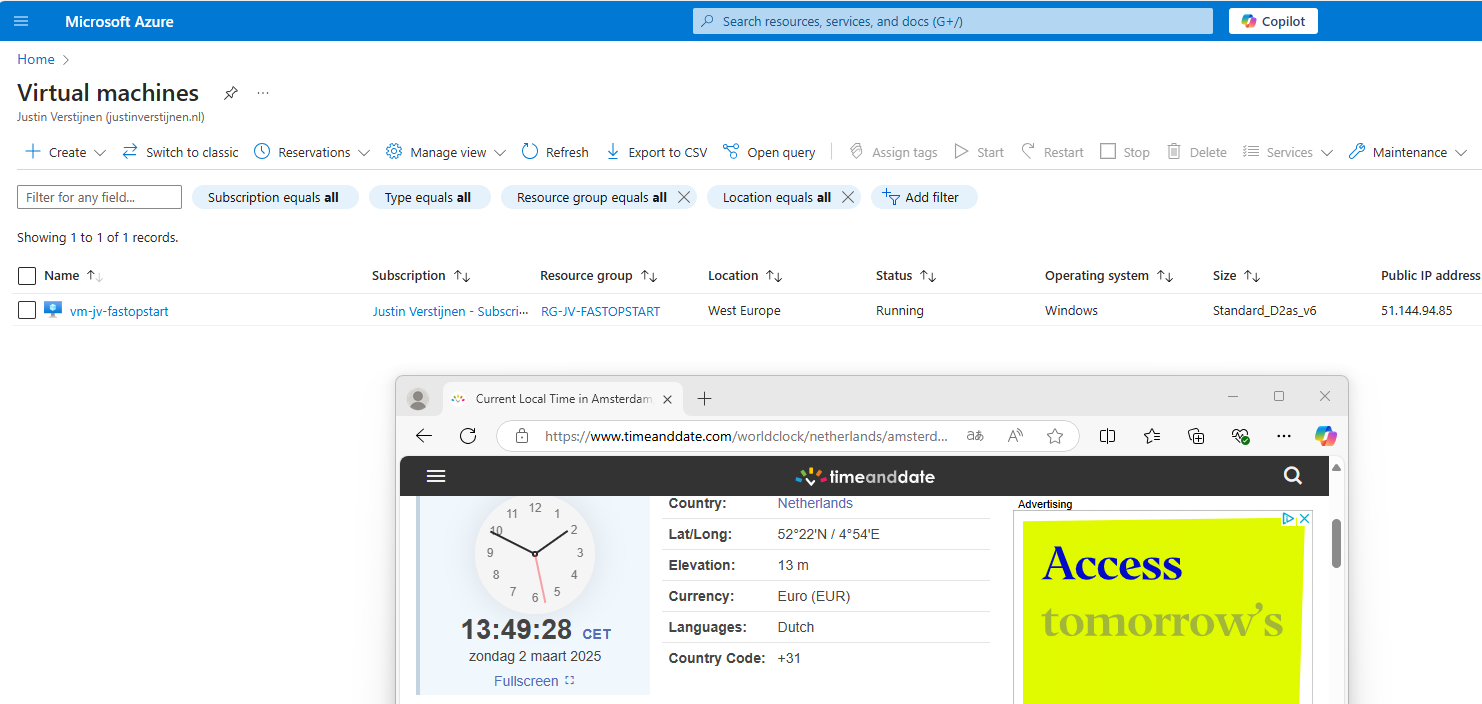

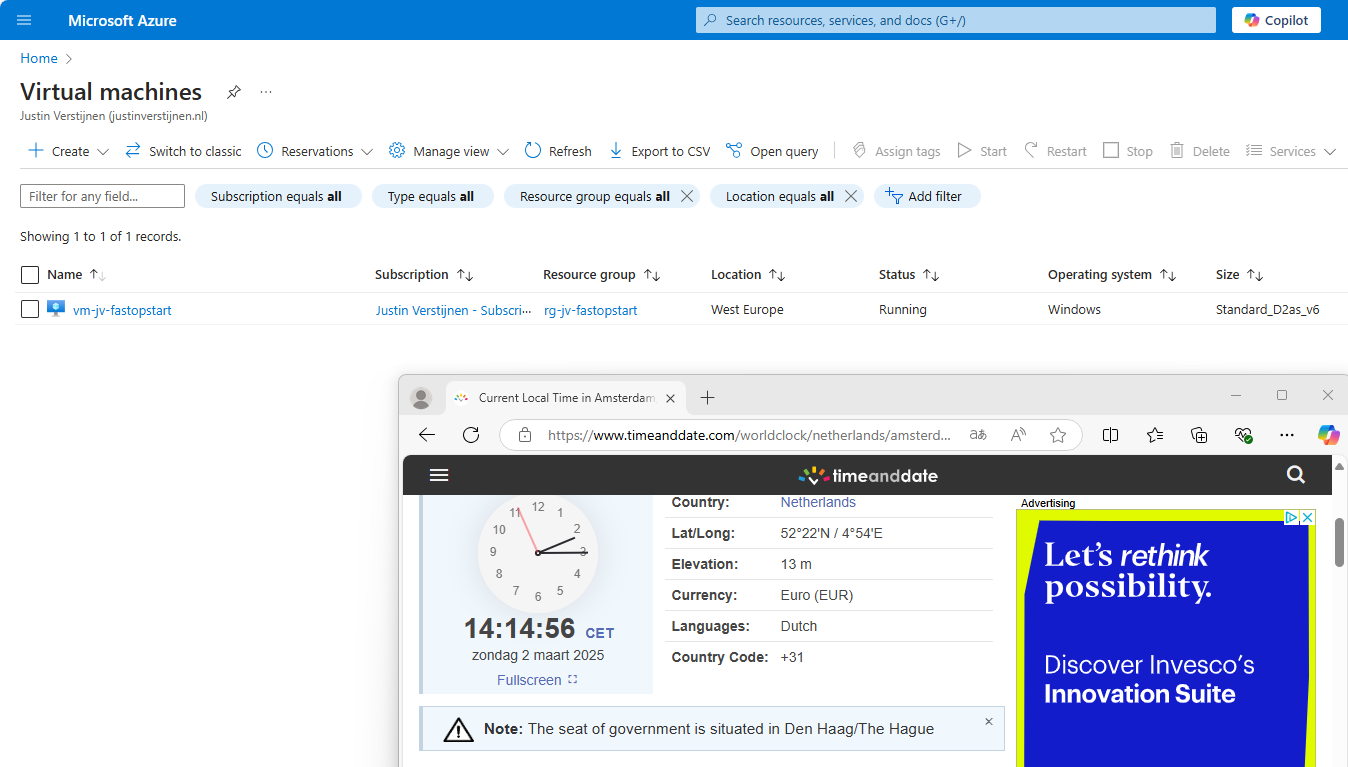

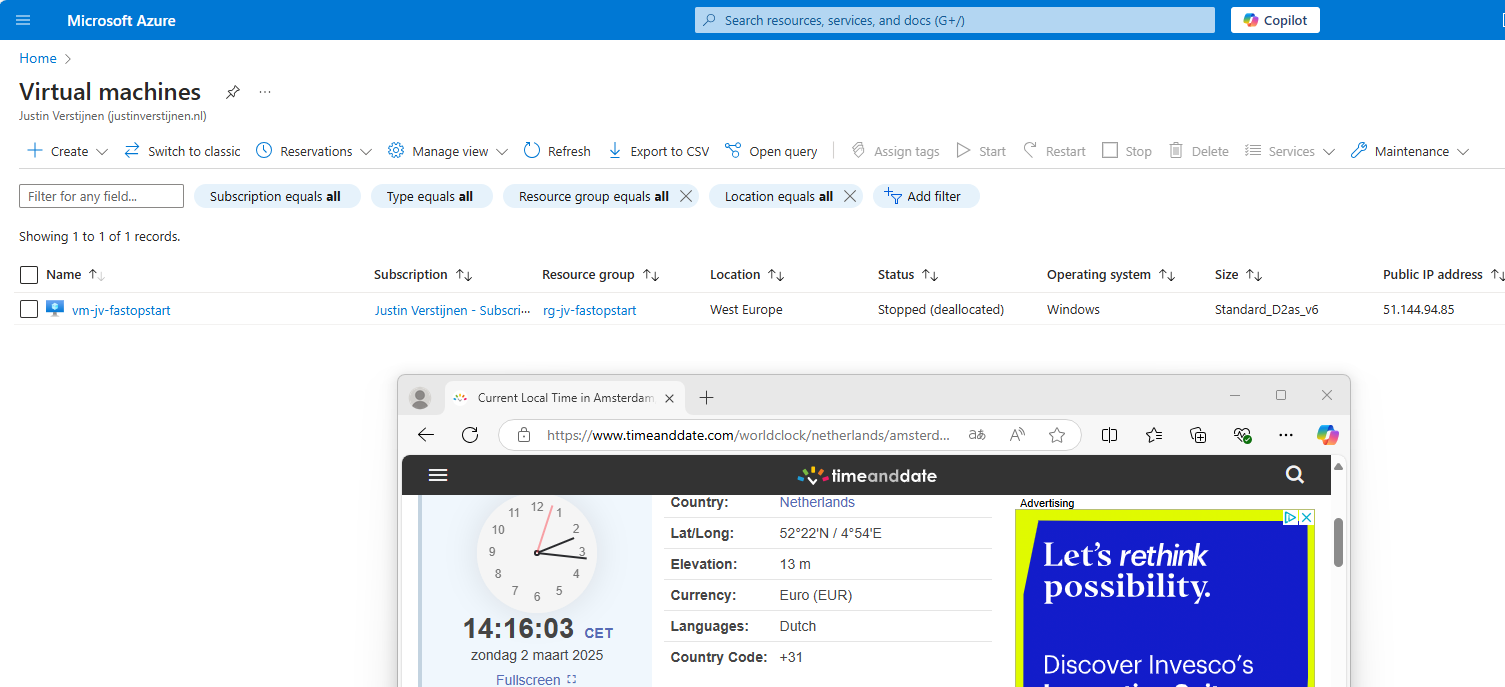

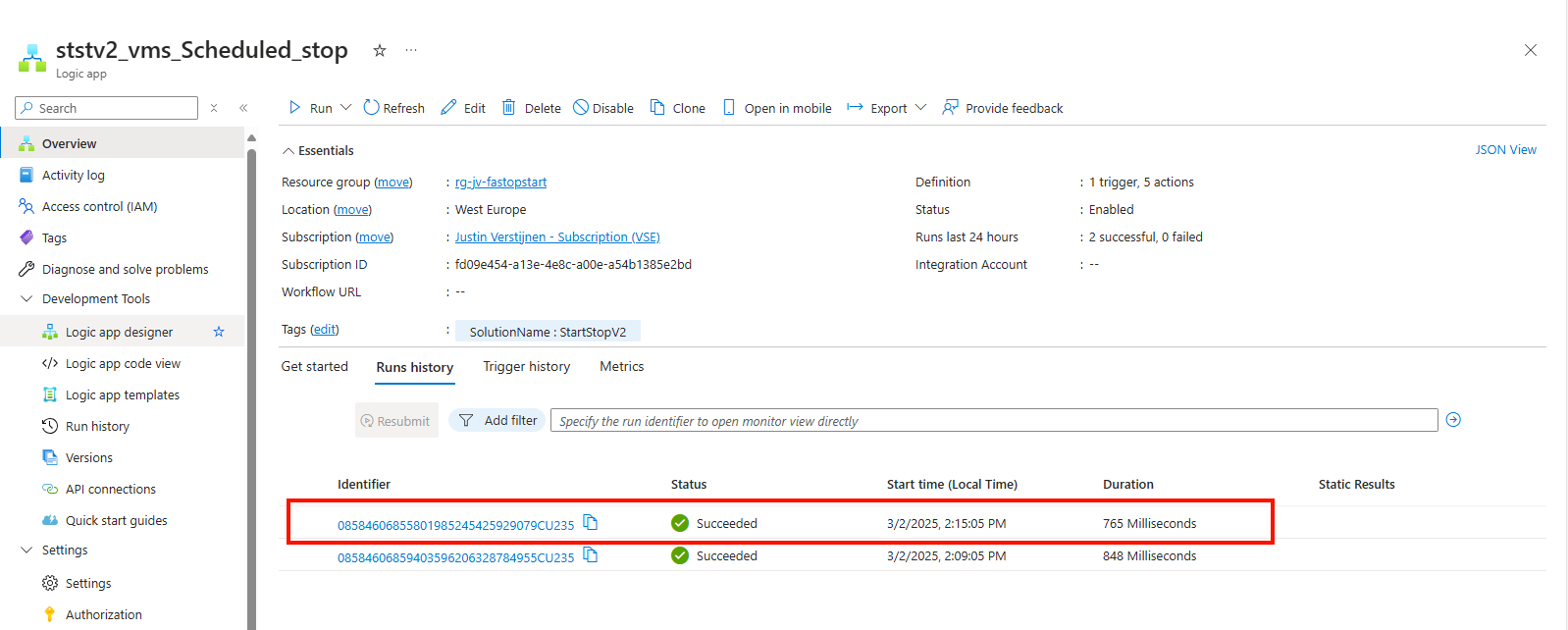

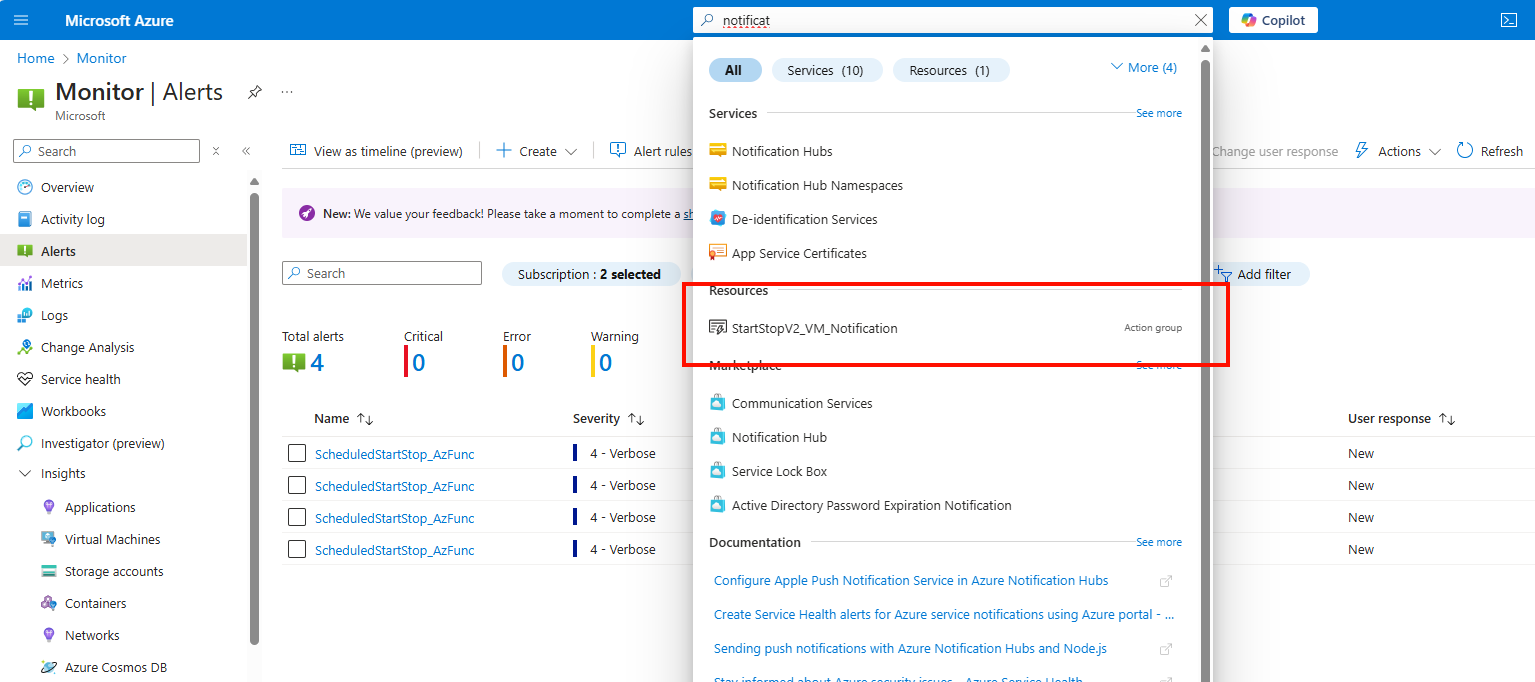

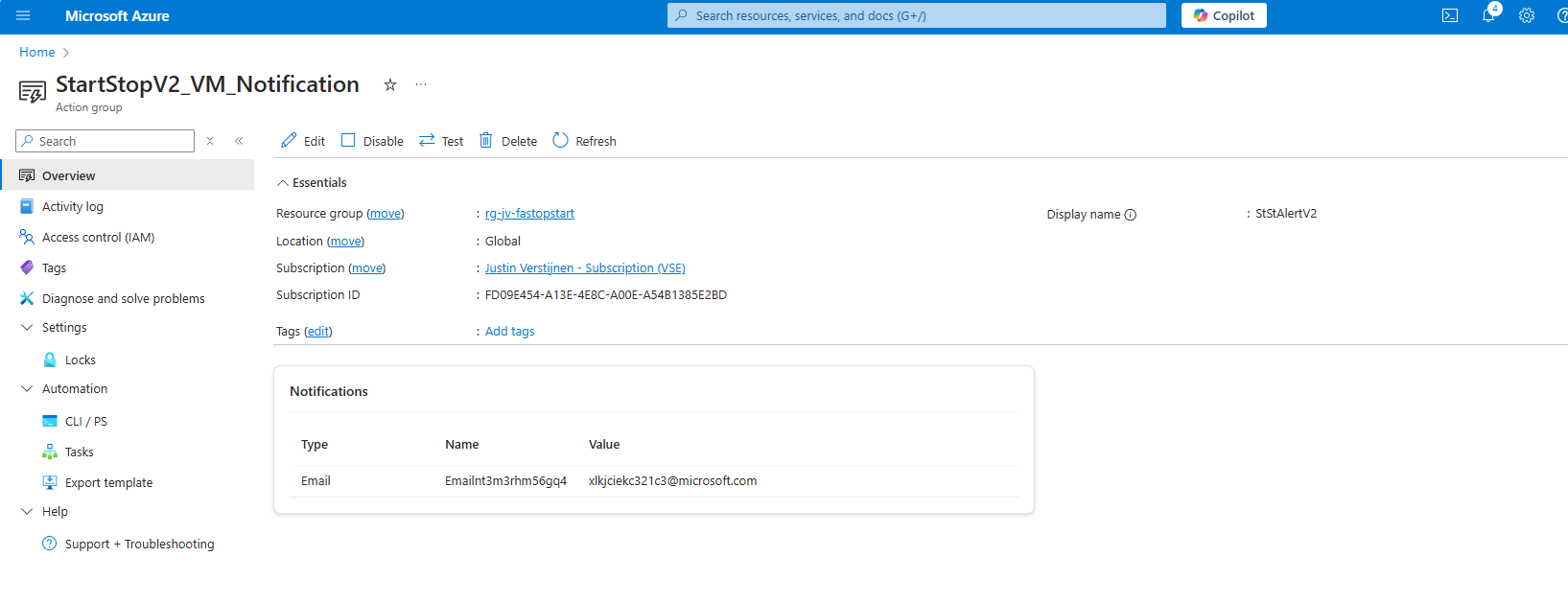

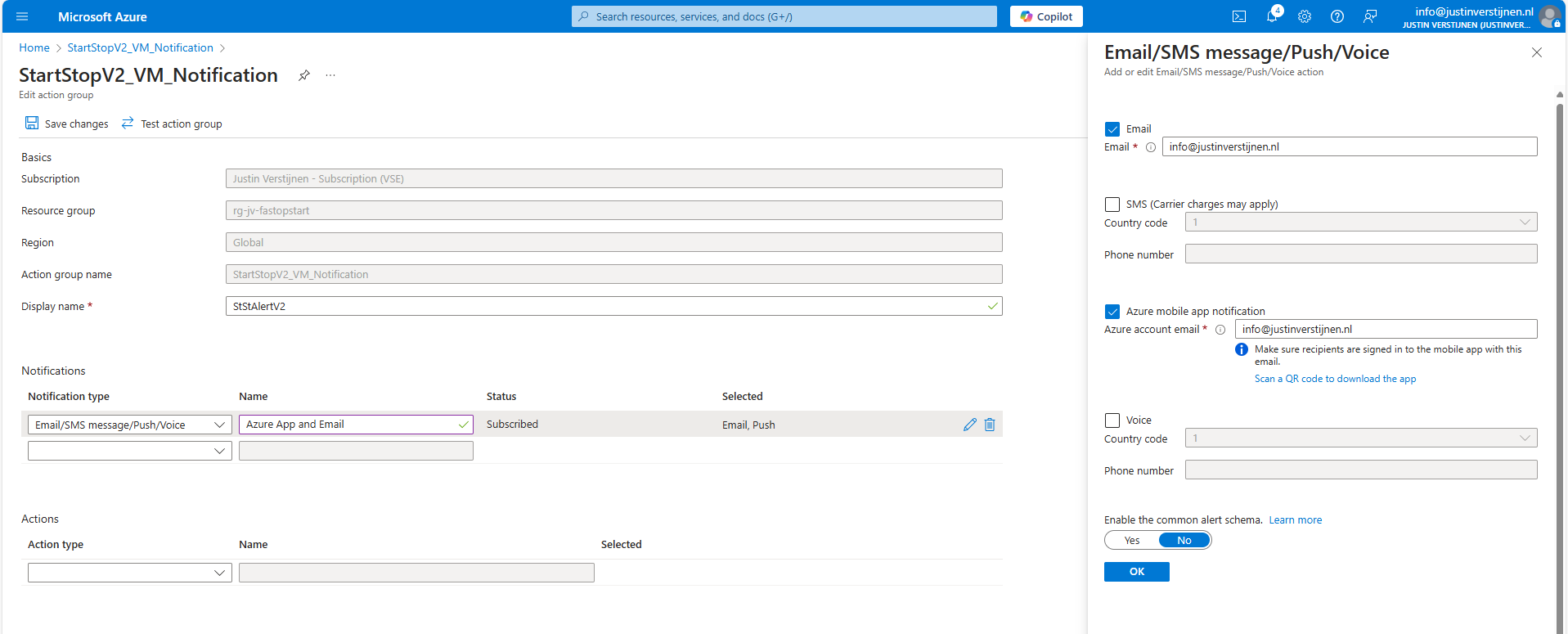

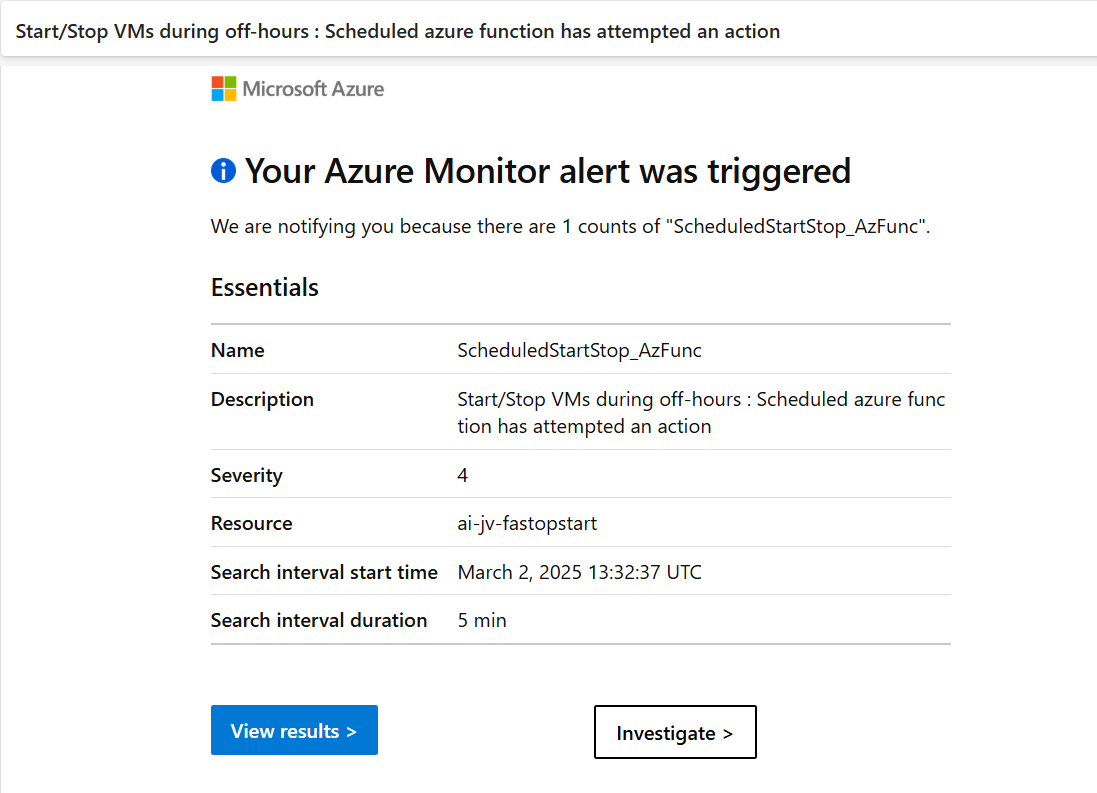

- Save Azure costs on Virtual Machines with Start/Stop

- Deep dive into IPv6 with Microsoft Azure

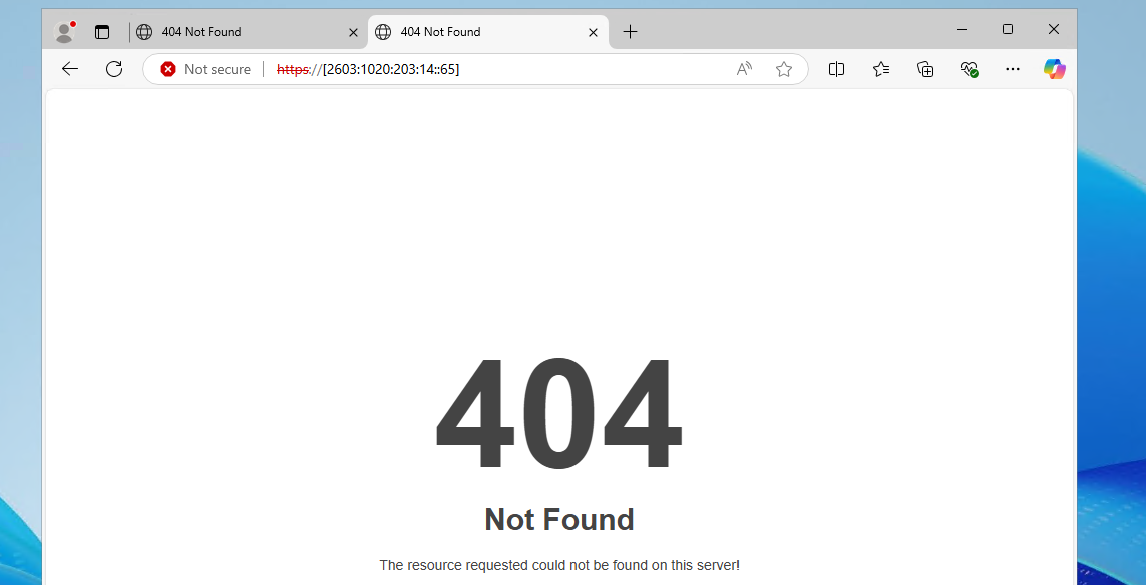

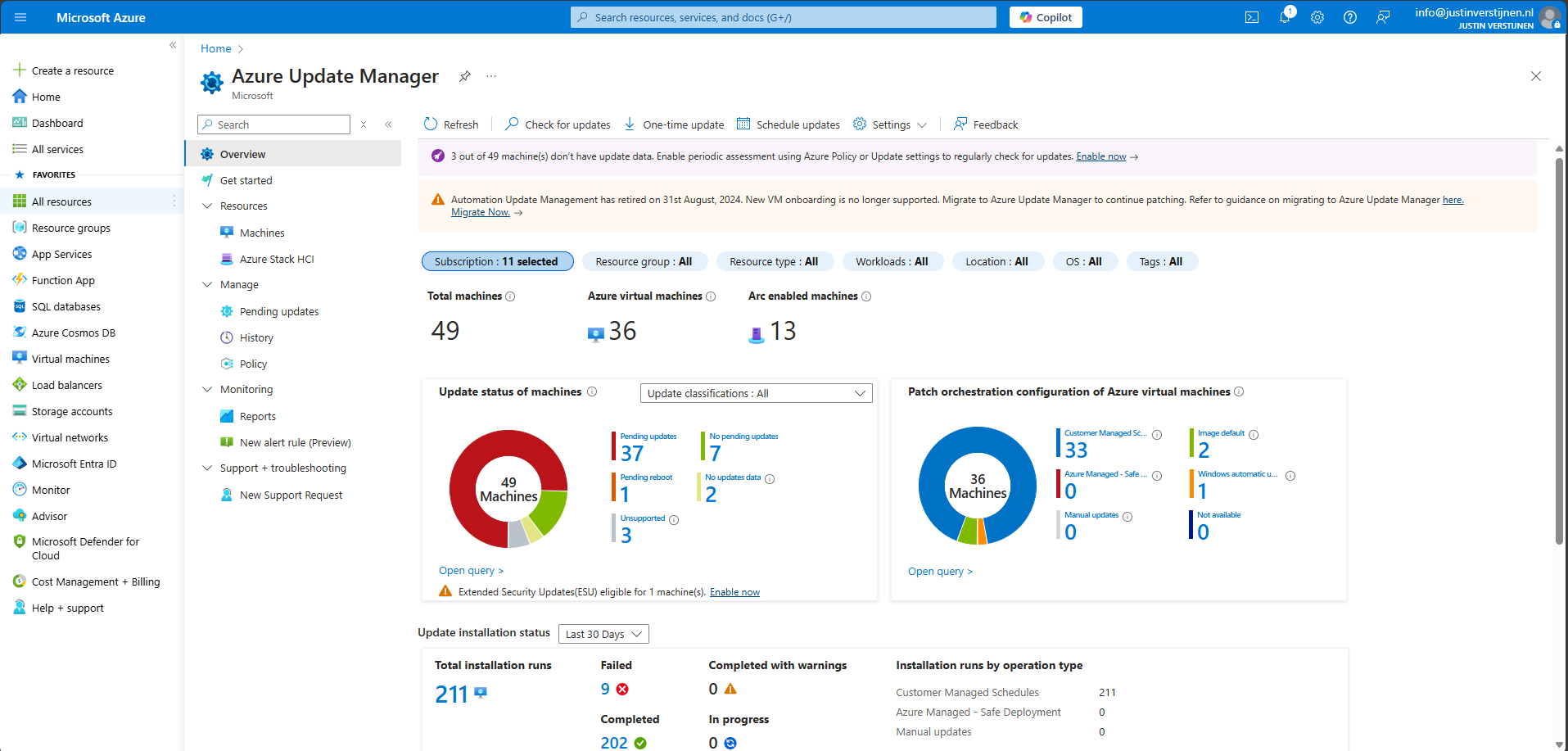

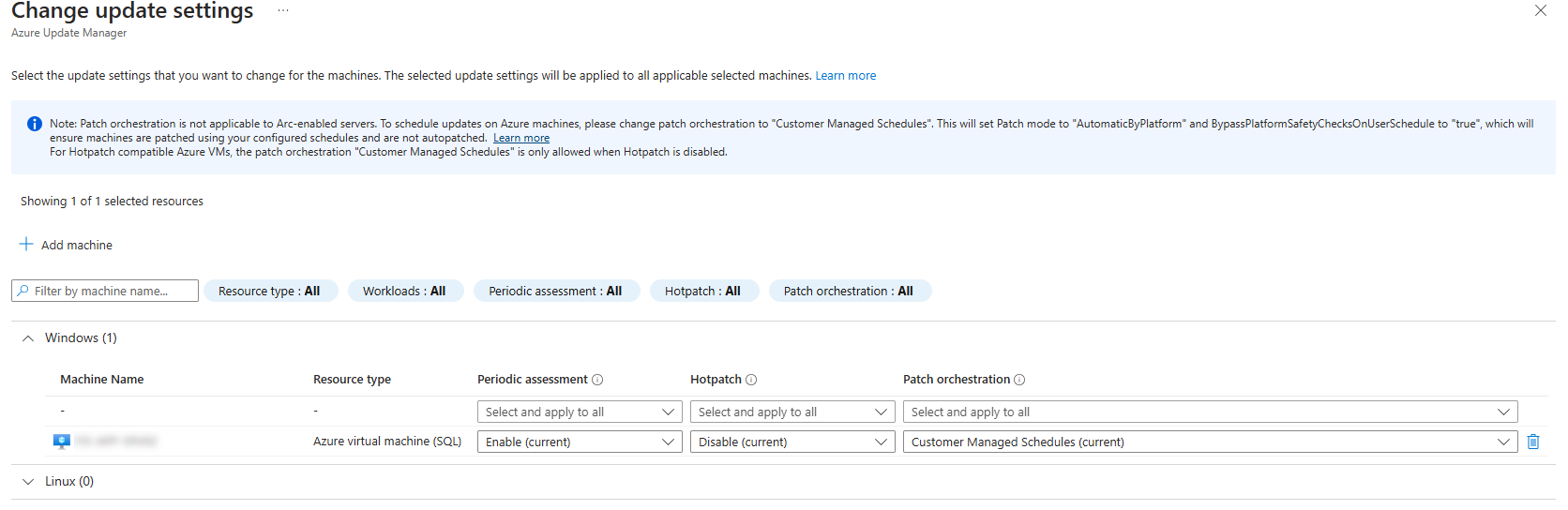

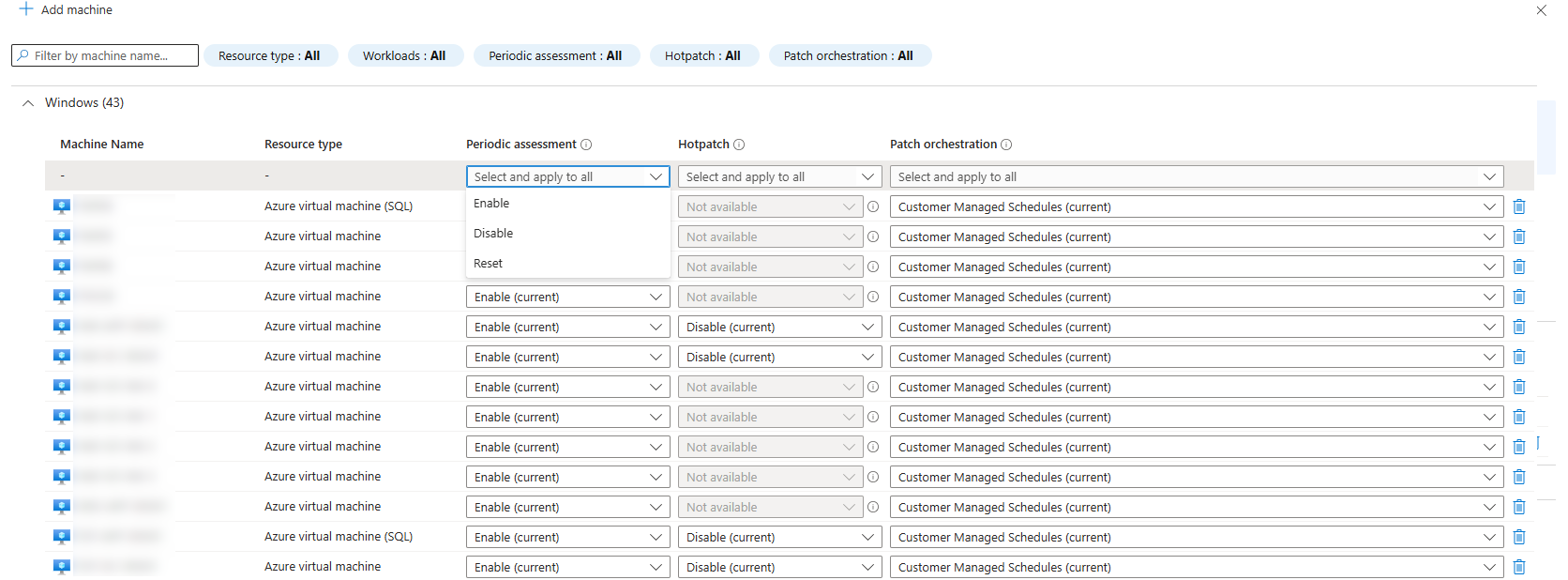

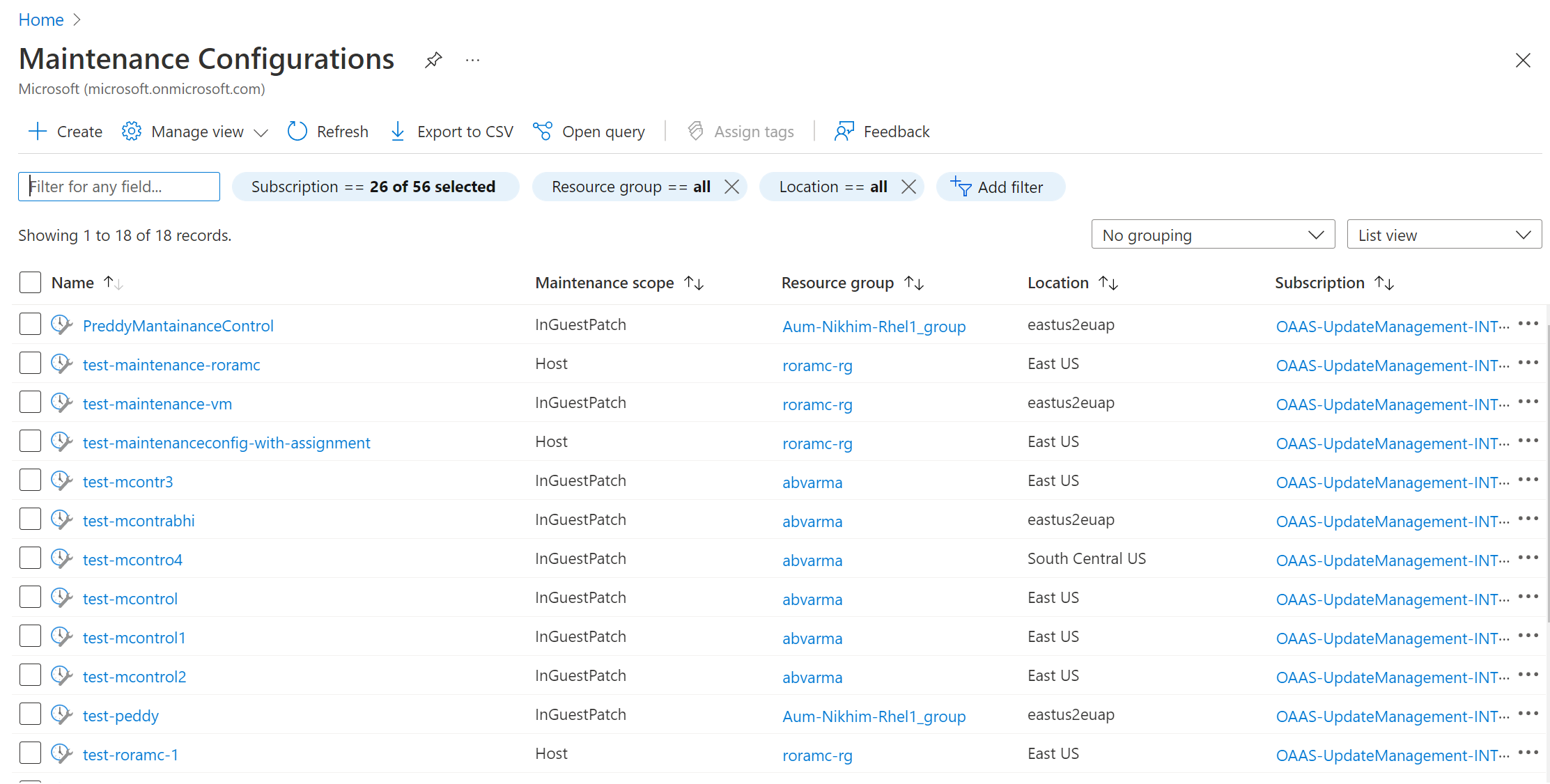

- Using Azure Update Manager to manage updates at scale

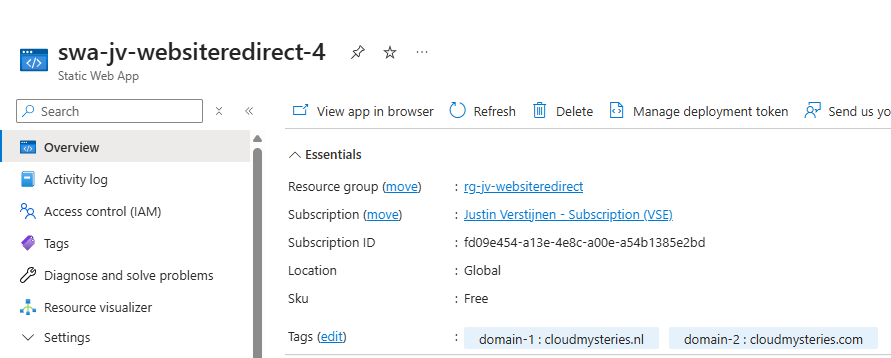

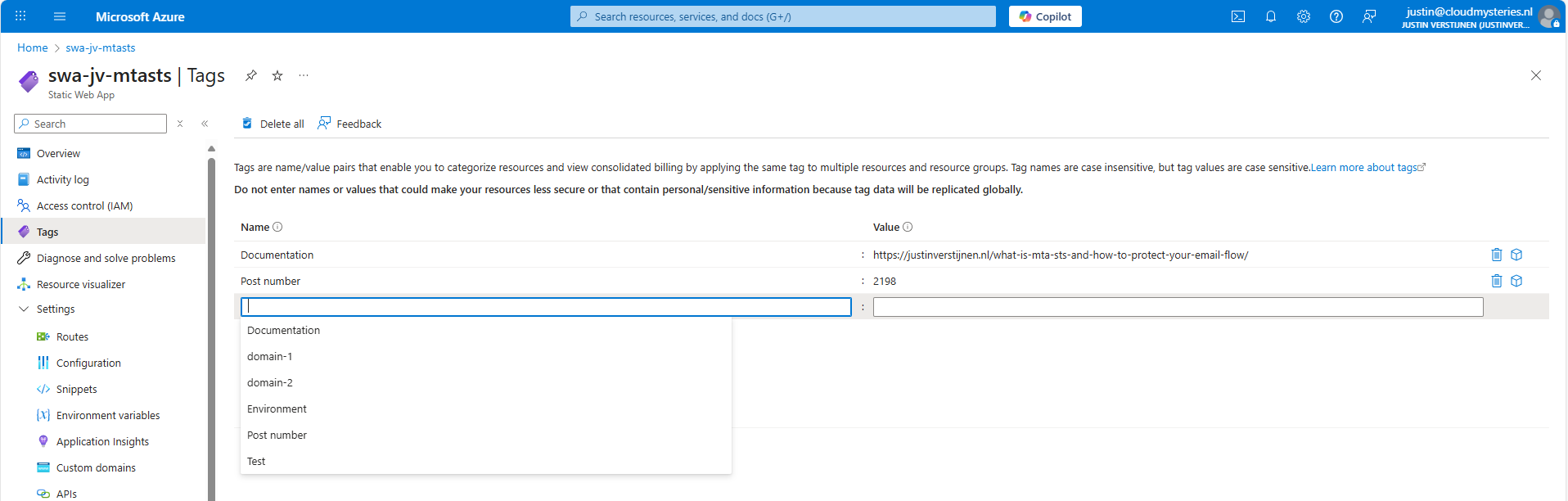

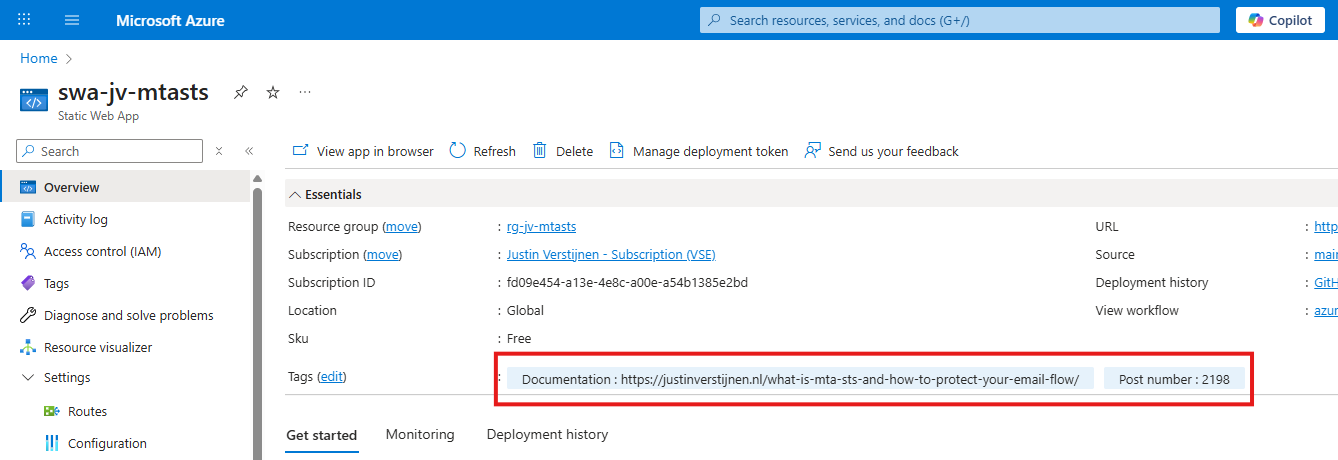

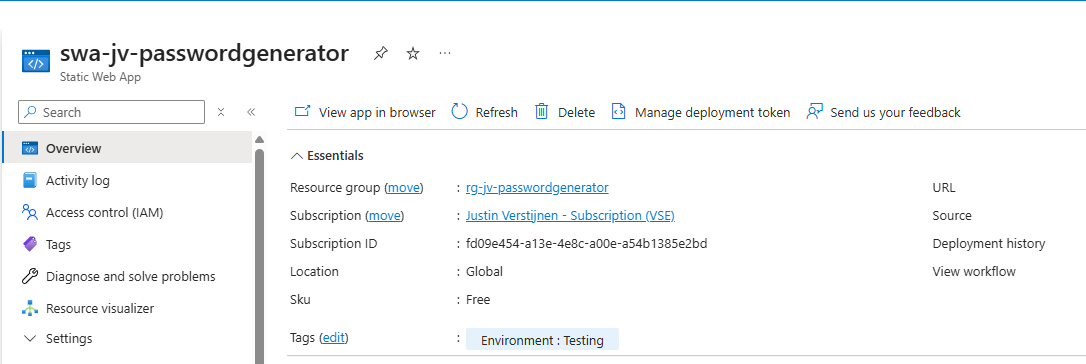

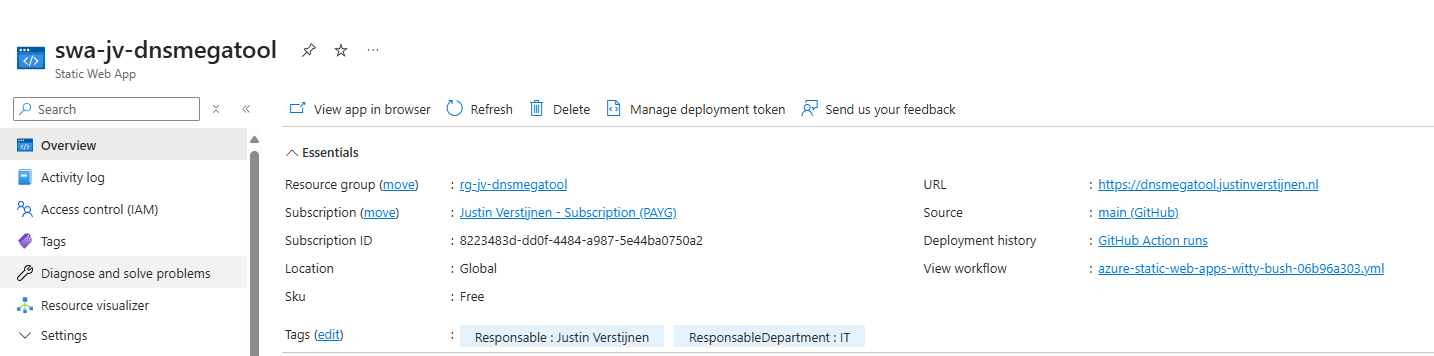

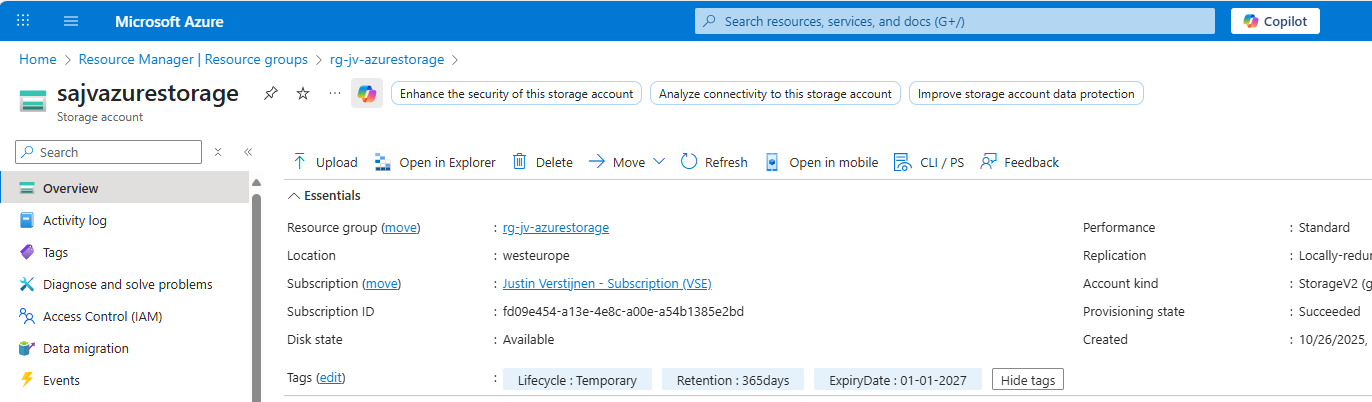

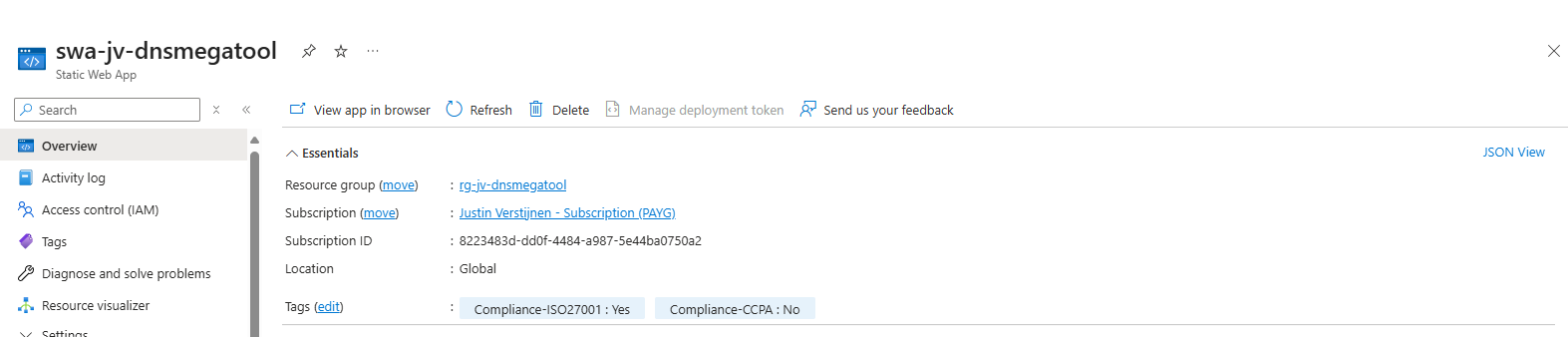

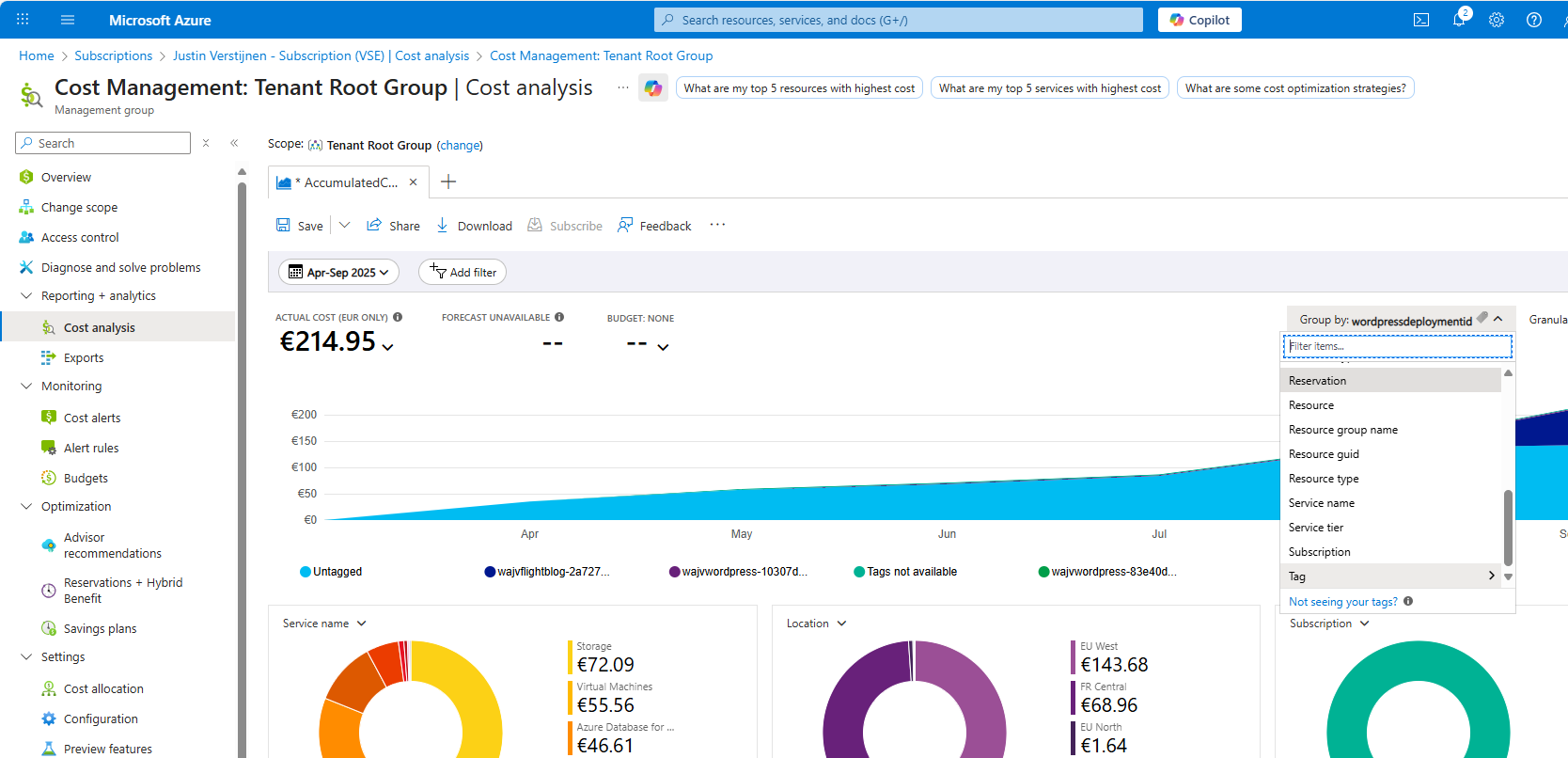

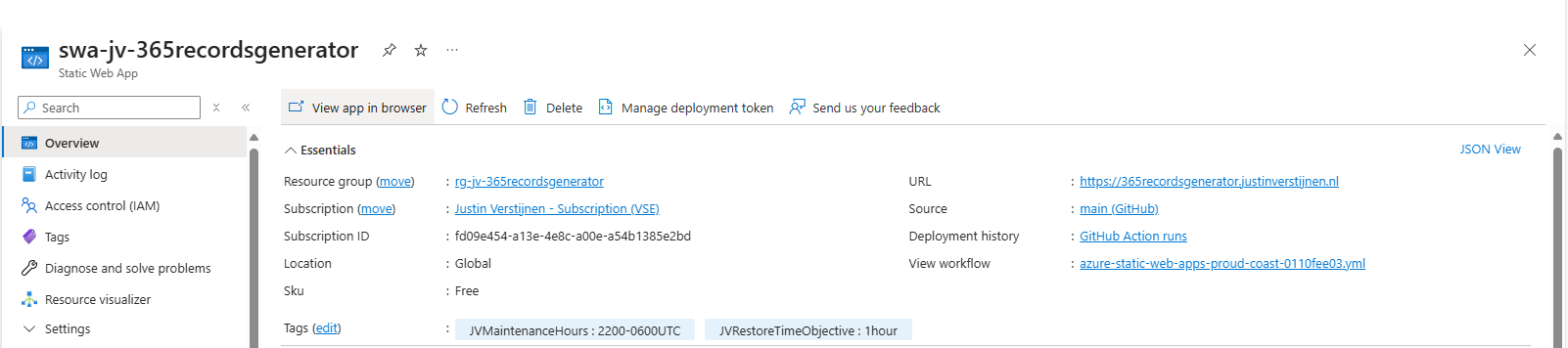

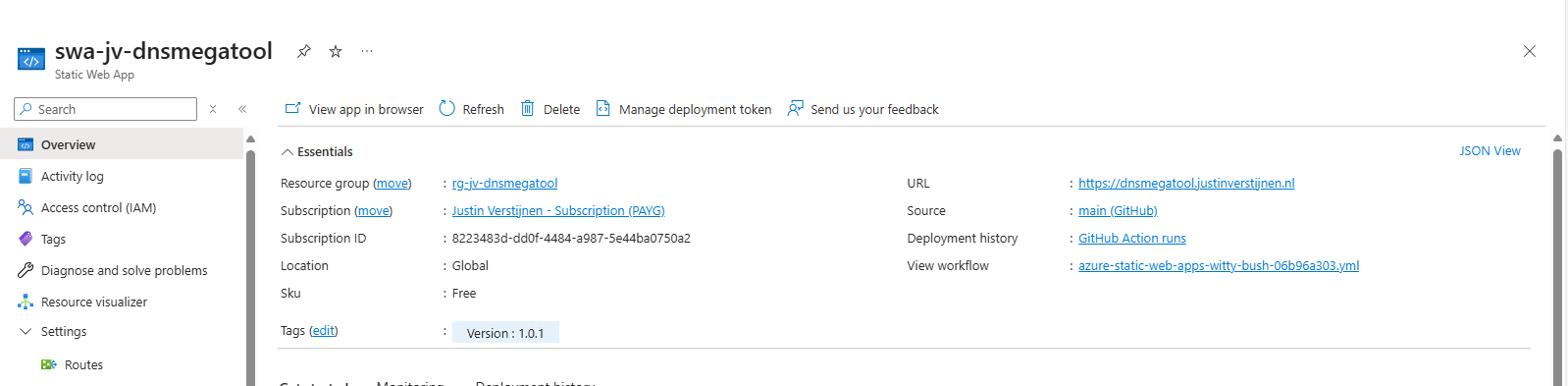

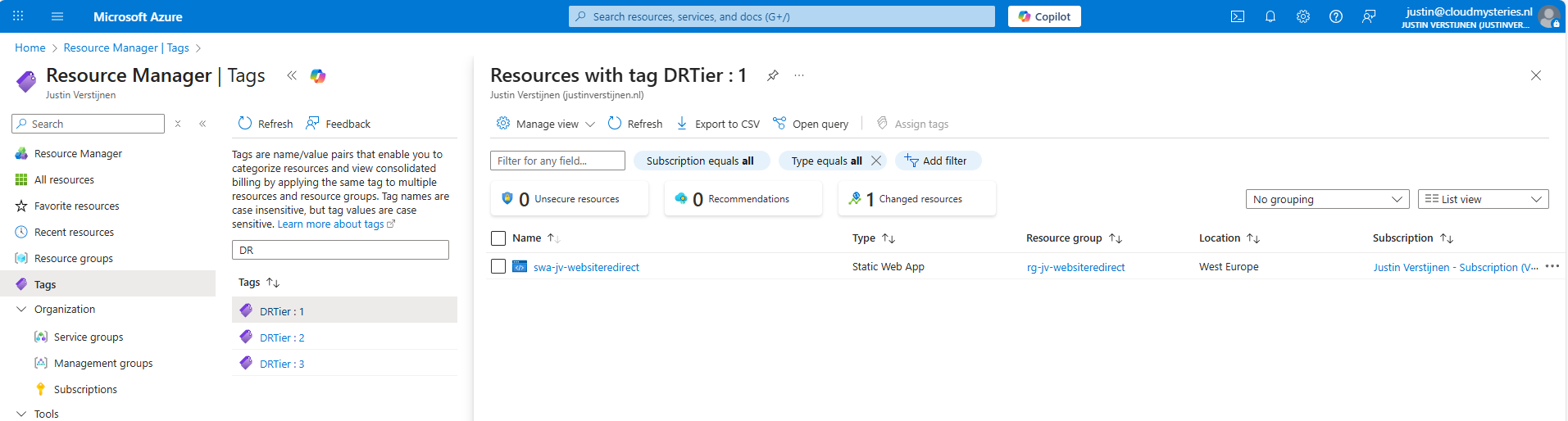

- 10 ways to use tags in Microsoft Azure

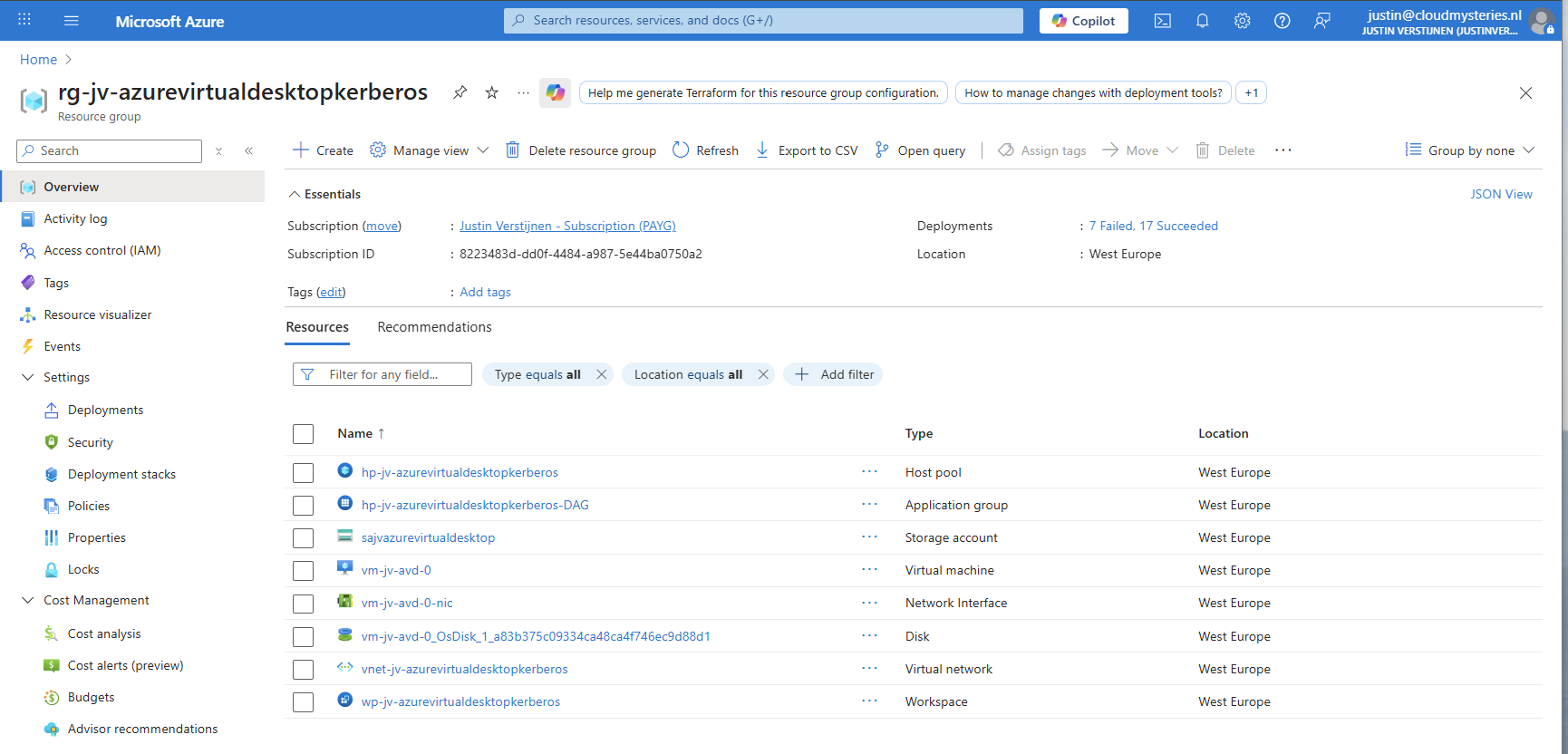

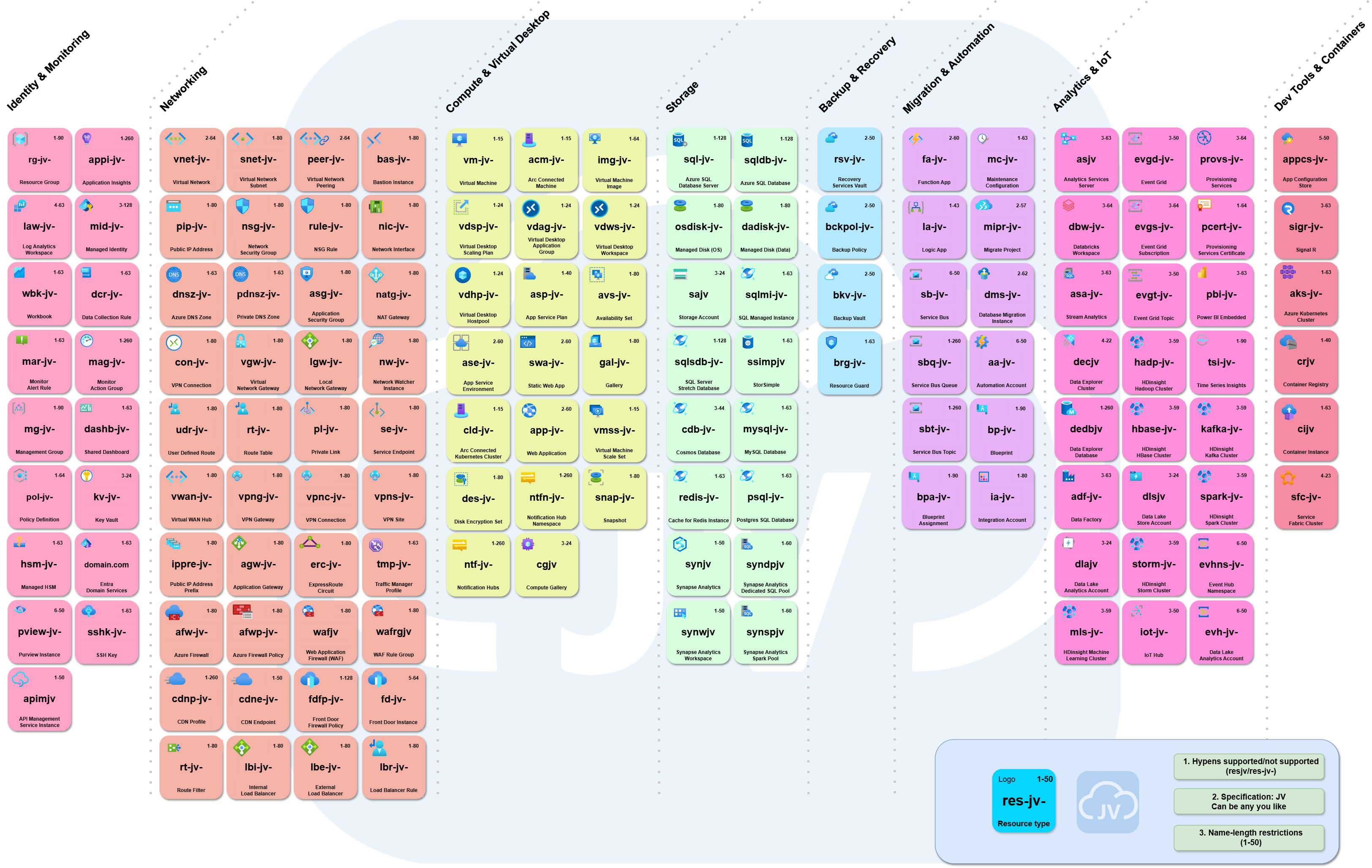

- My Azure Naming Framework

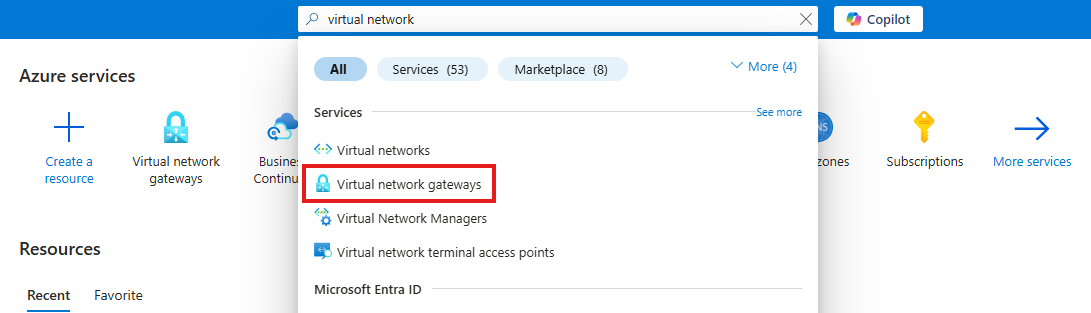

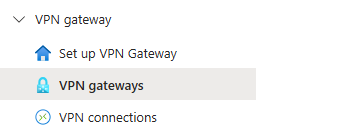

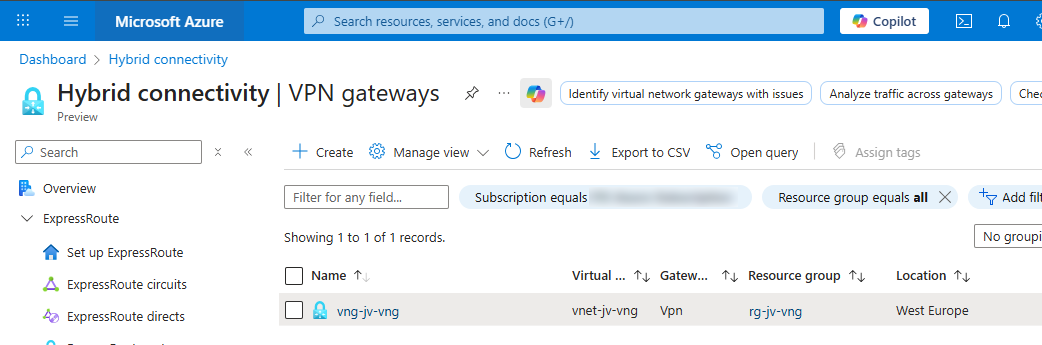

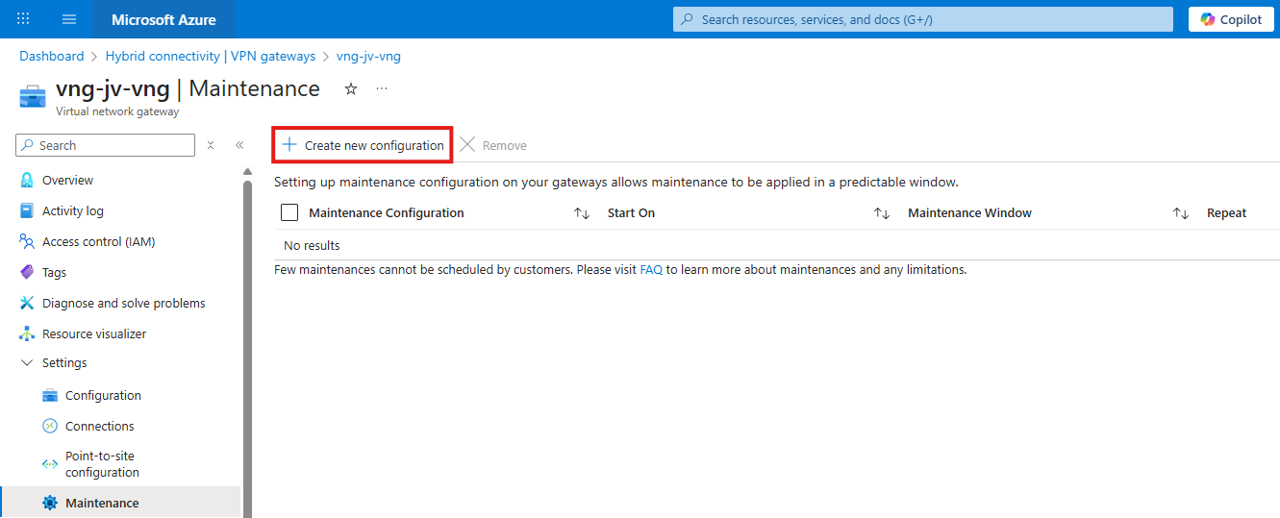

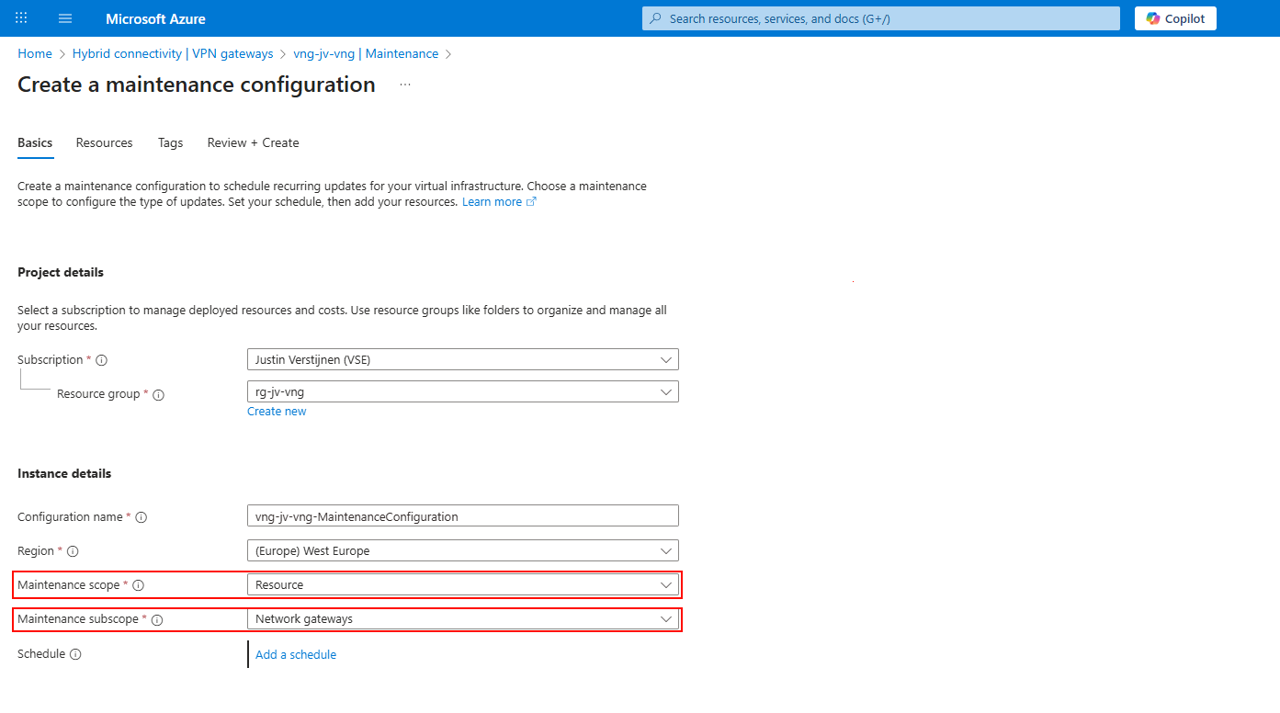

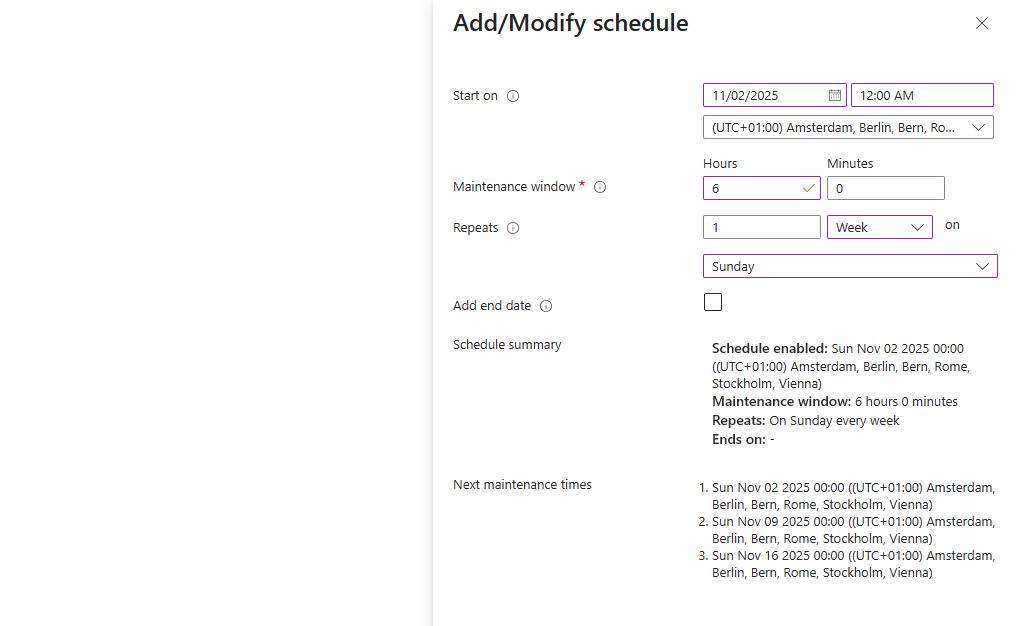

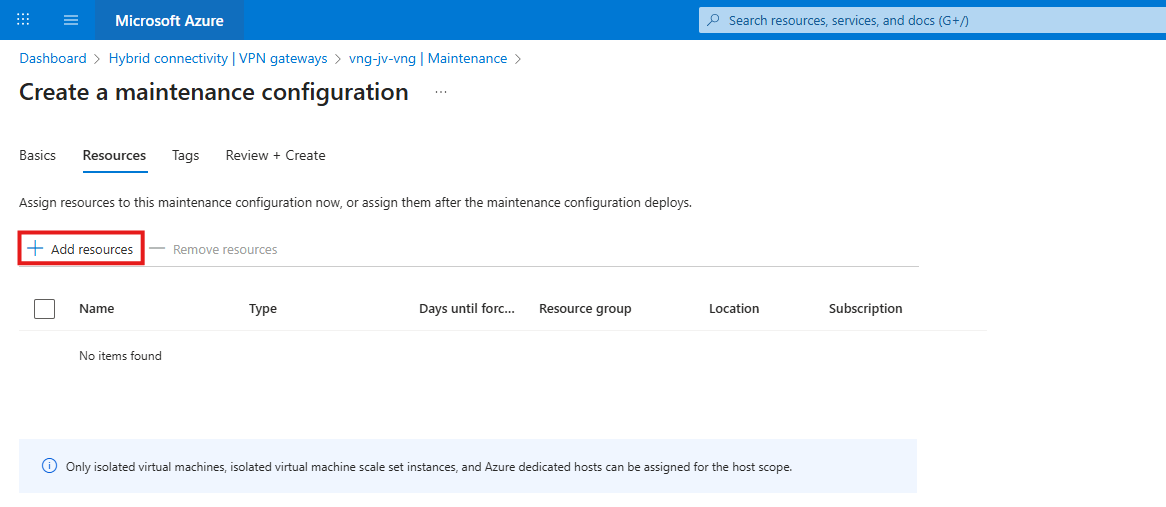

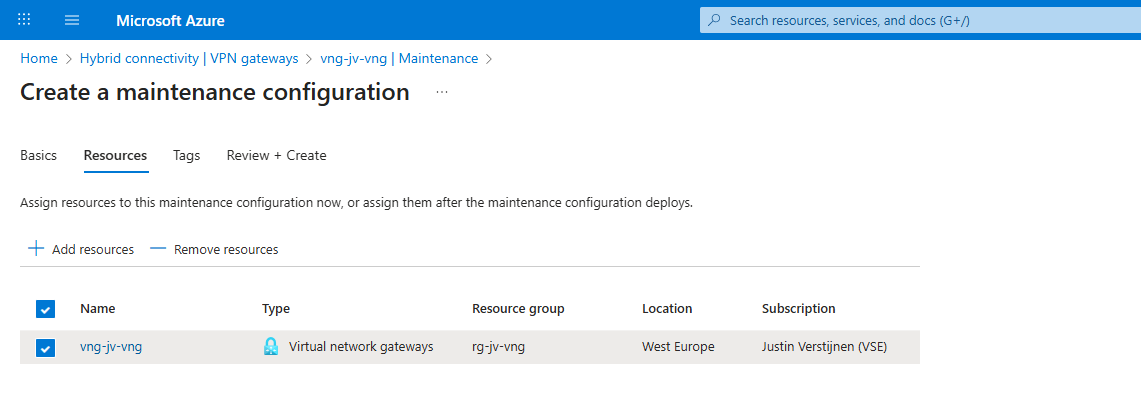

- Azure VPN Gateway Maintenance - How to configure

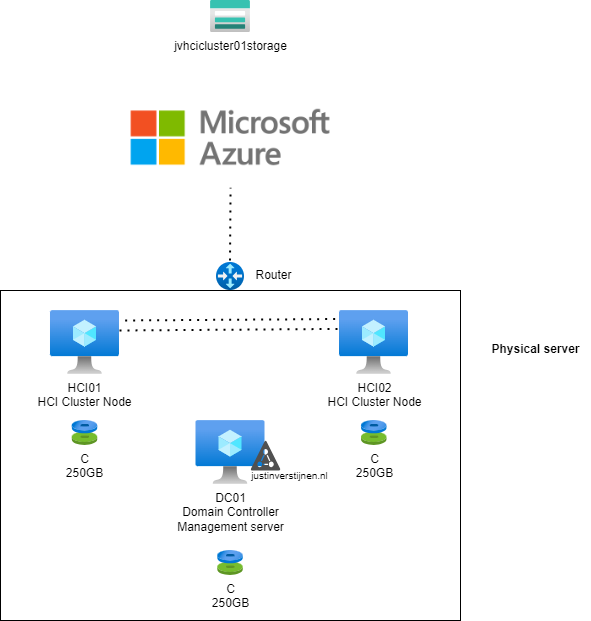

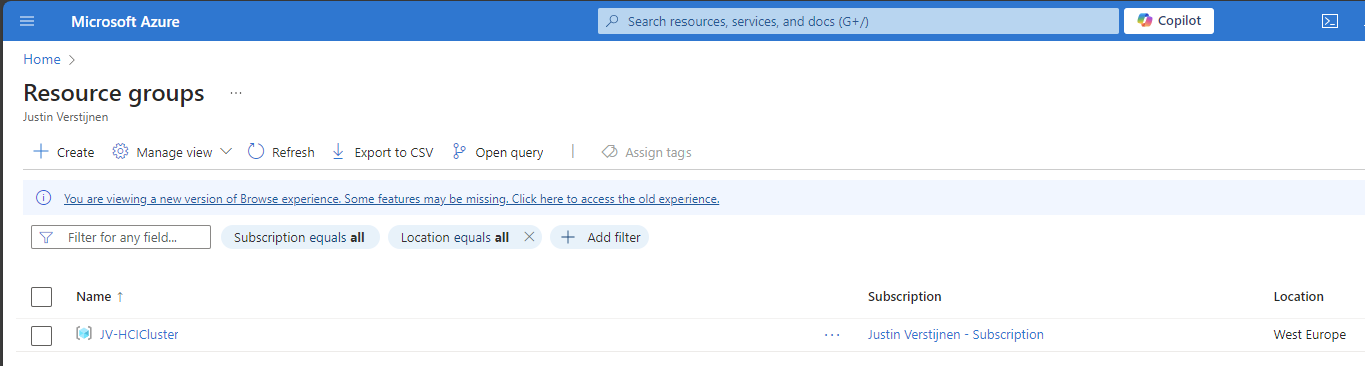

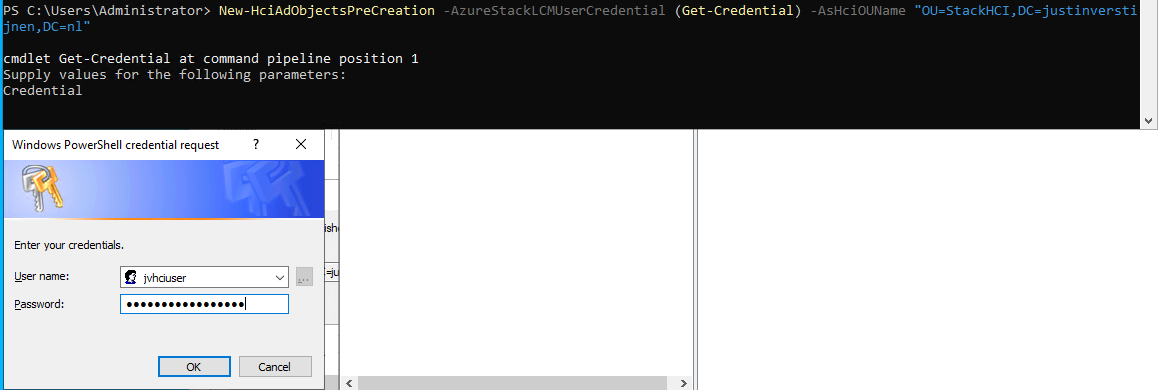

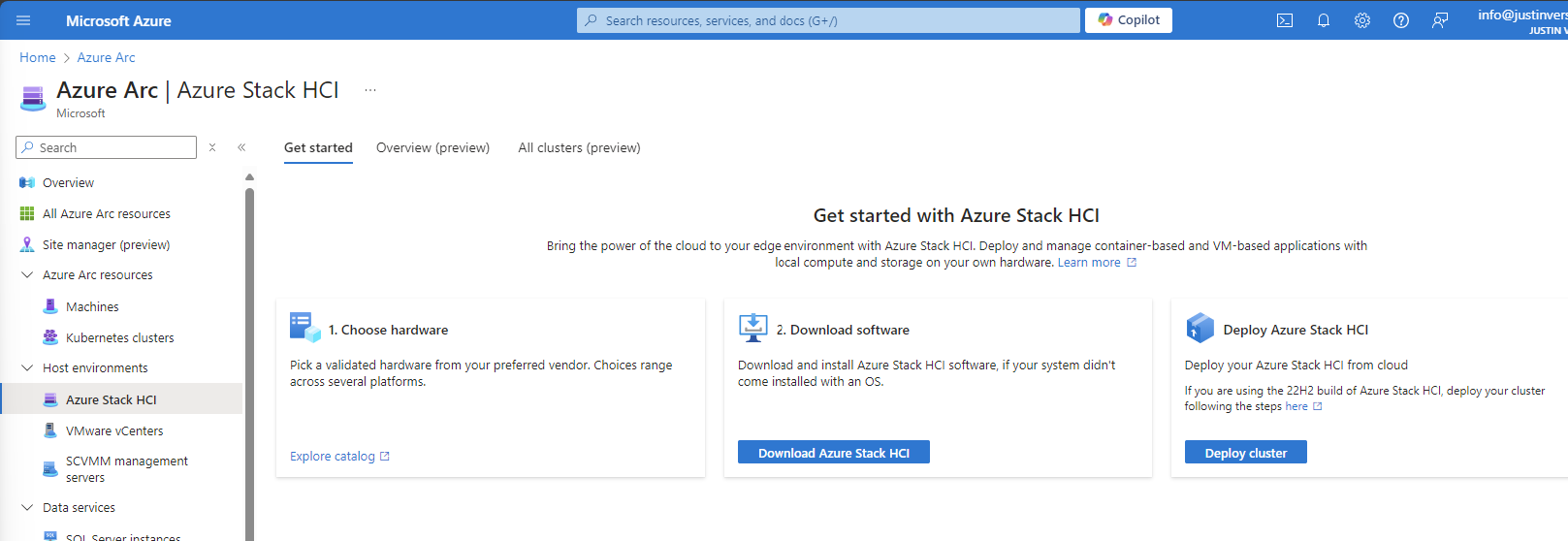

- Azure Stack HCI - Host your Virtual Desktops locally

- How to learn Azure - My learning resources

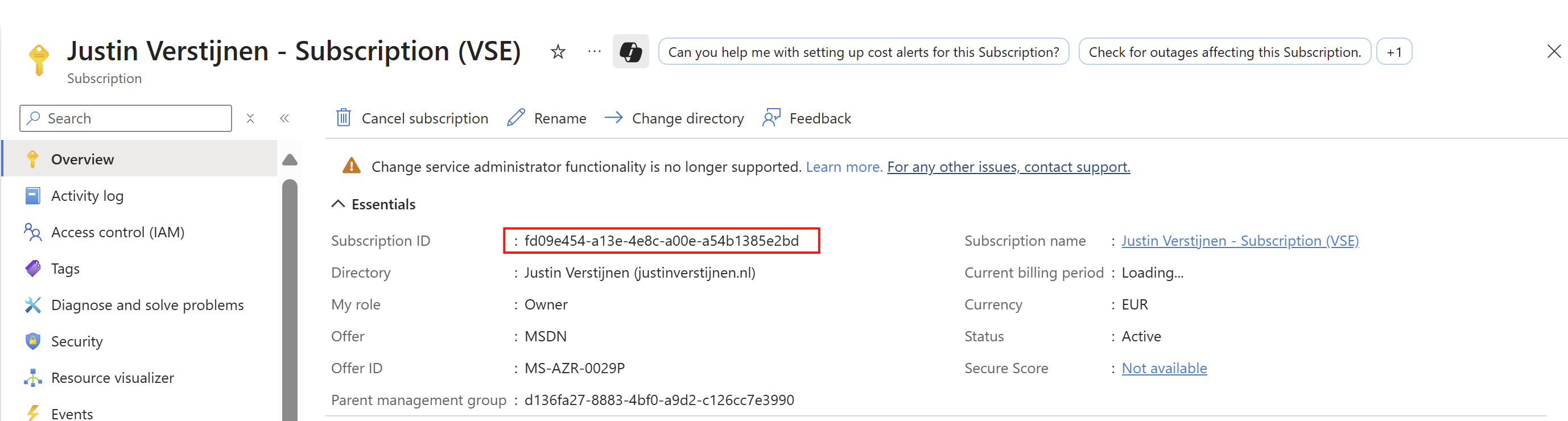

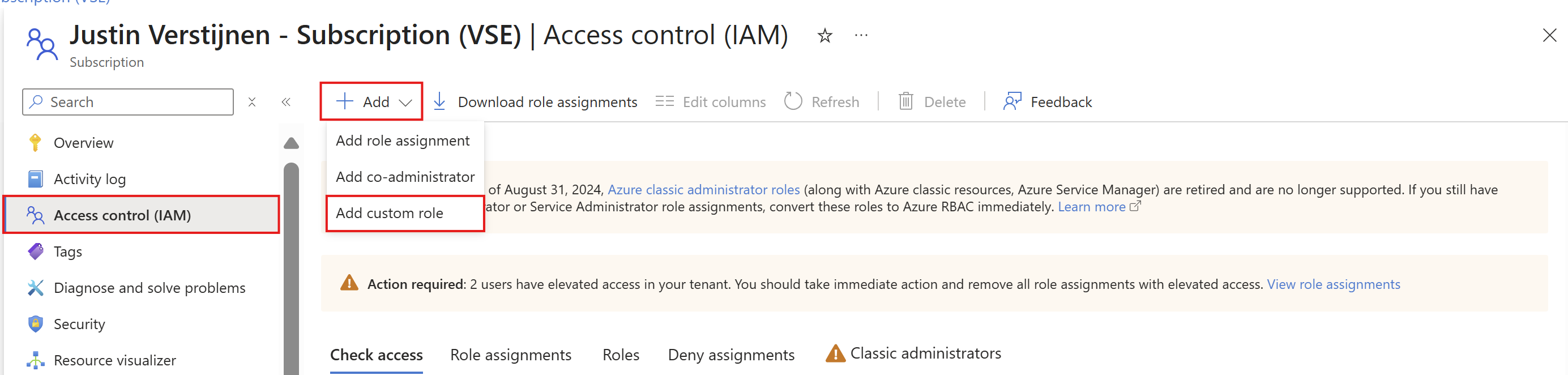

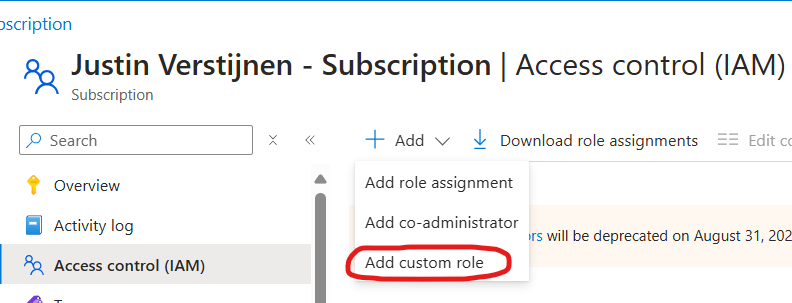

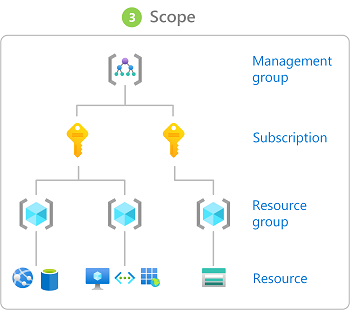

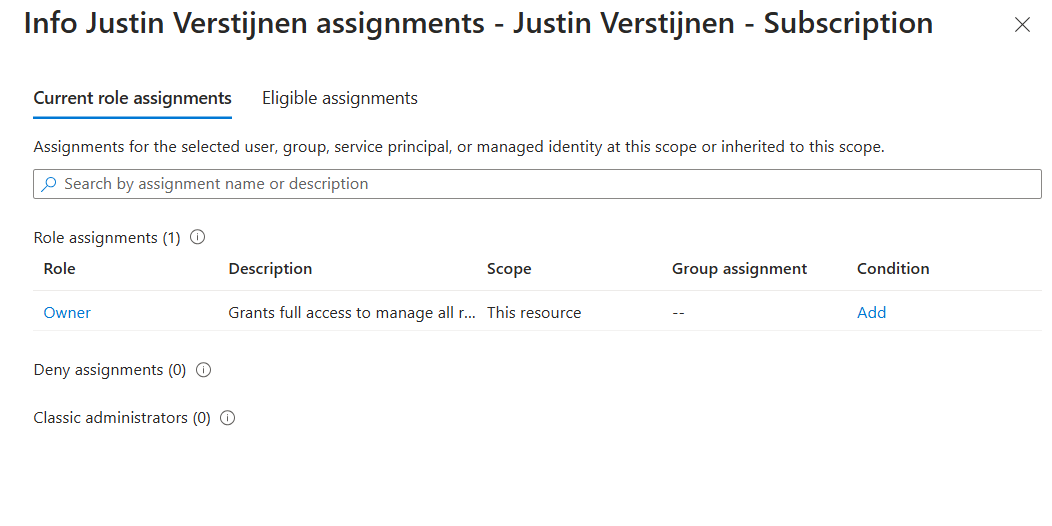

- Introduction to Azure roles and permissions (RBAC/IAM)

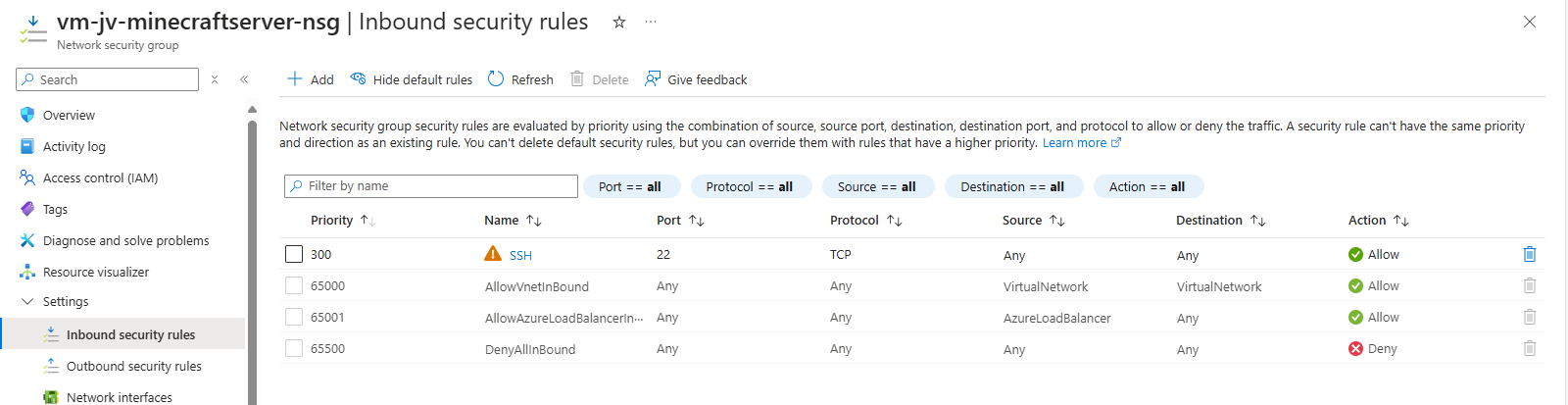

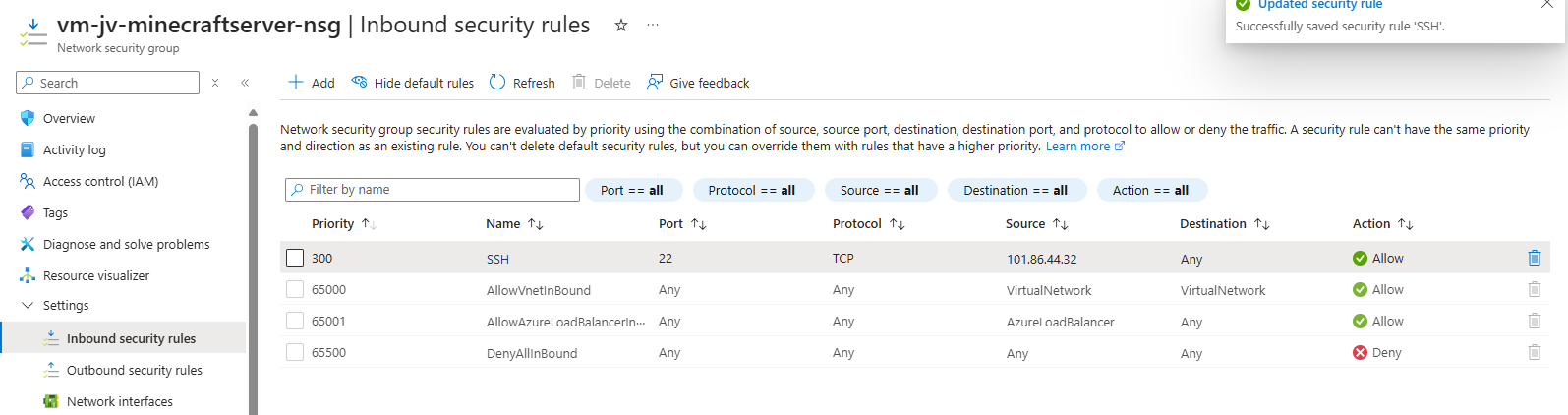

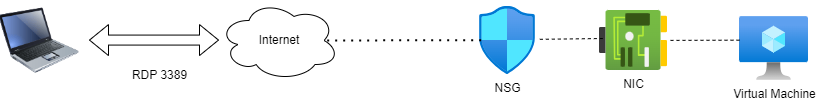

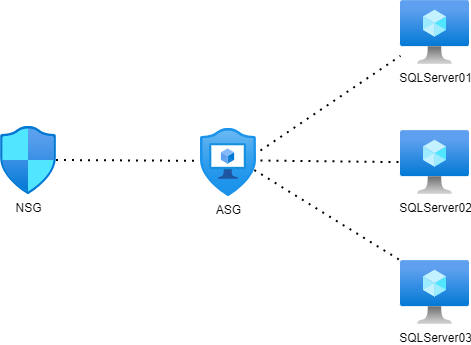

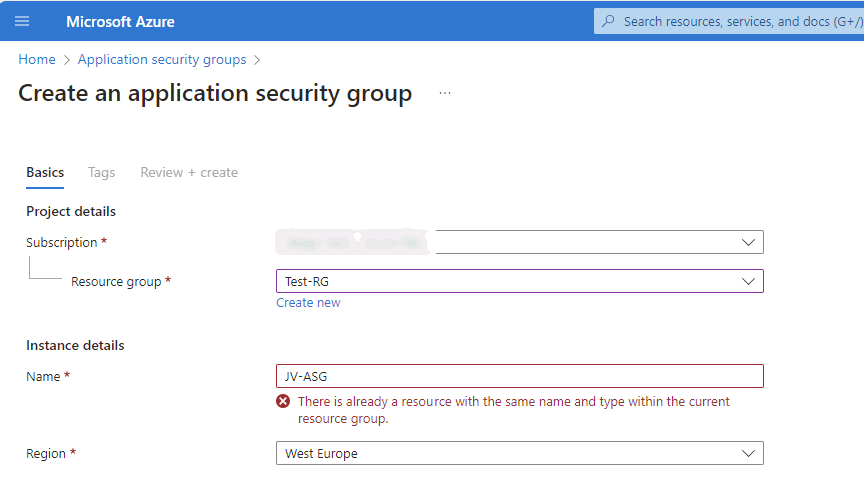

- Network security in Azure with NSG and ASG

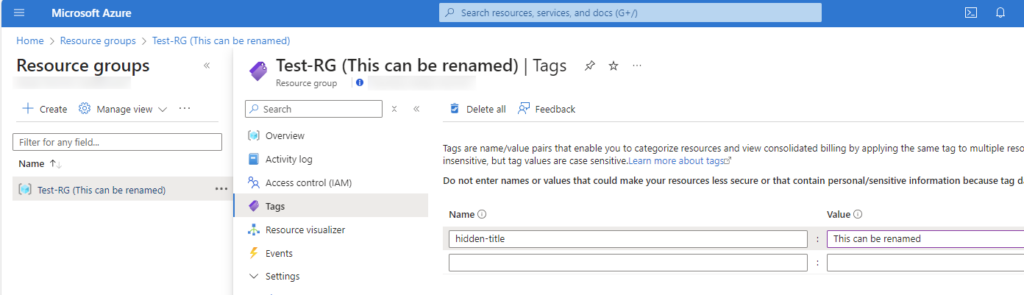

- Rename name-tags to resource groups and resources

Useful Azure links/tools

Introduction to page and tools

I use these tools regularly to check the latest Azure updates, watch service health, calculate costs, build diagrams, create documentation, run commands easily, learn new skills, and manage resources better.

On this page, I therefore did not focus on a single category but selected tools across multiple categories.

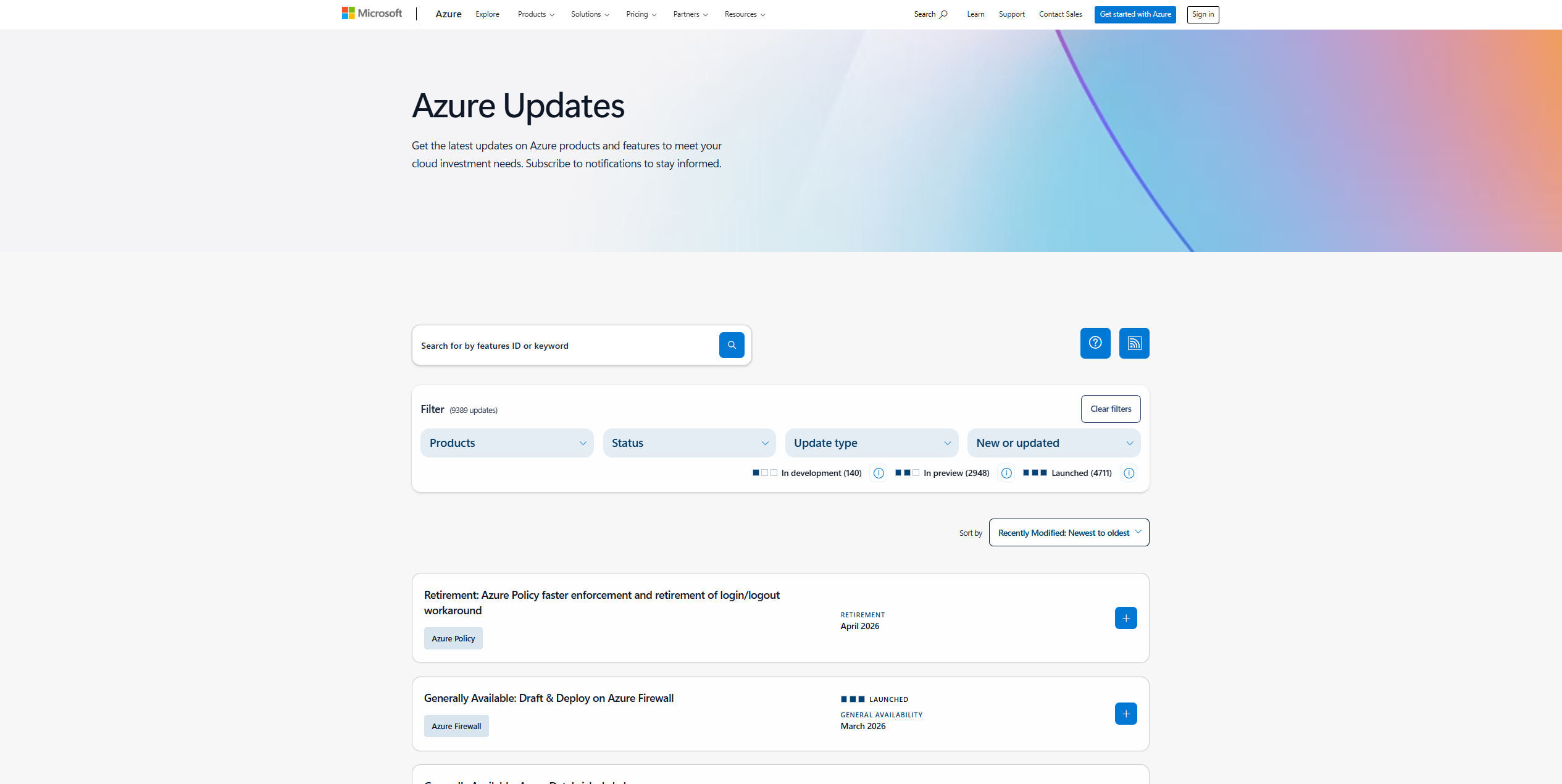

1. Azure Updates

This website shows all new features, fixes, and announcements from Azure. It helps you stay informed about important changes, like retiring services or previews transitioning to generally available options.

https://azure.microsoft.com/en-us/updates

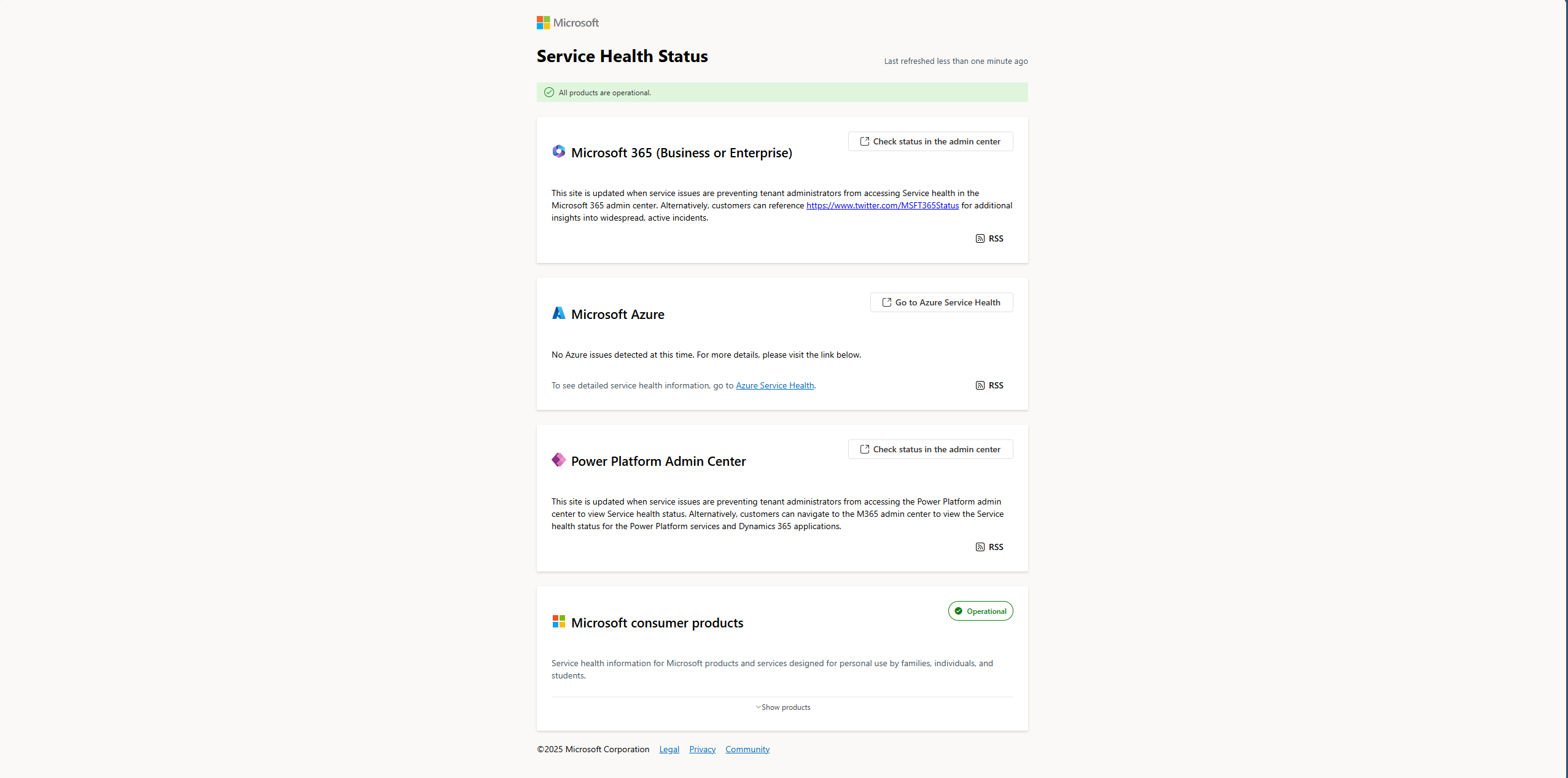

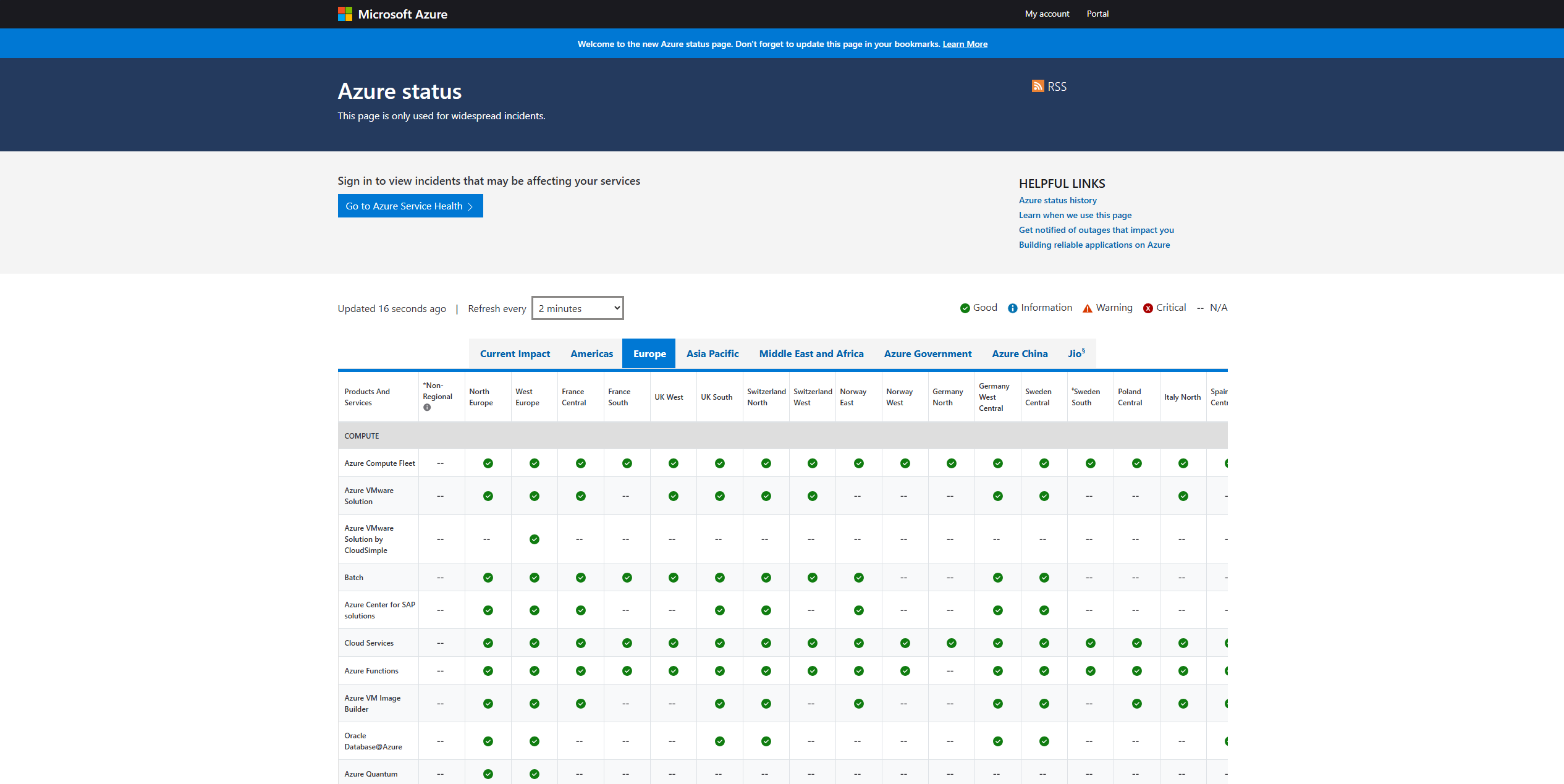

2. Microsoft Cloud Service Status (Overview/Azure)

These two sites, status.cloud.microsoft.com and status.azure.com, show the current health of Microsoft cloud services. You can use the first site to get an overview of all Microsoft Services, and the Azure Status page for only Azure services.

https://status.cloud.microsoft.com

https://status.azure.com

You can also check in Azure Service Health whether any issues impact your environment through this link:

https://portal.azure.com/#view/Microsoft_Azure_Health/AzureHealthBrowseBlade/~/serviceIssues

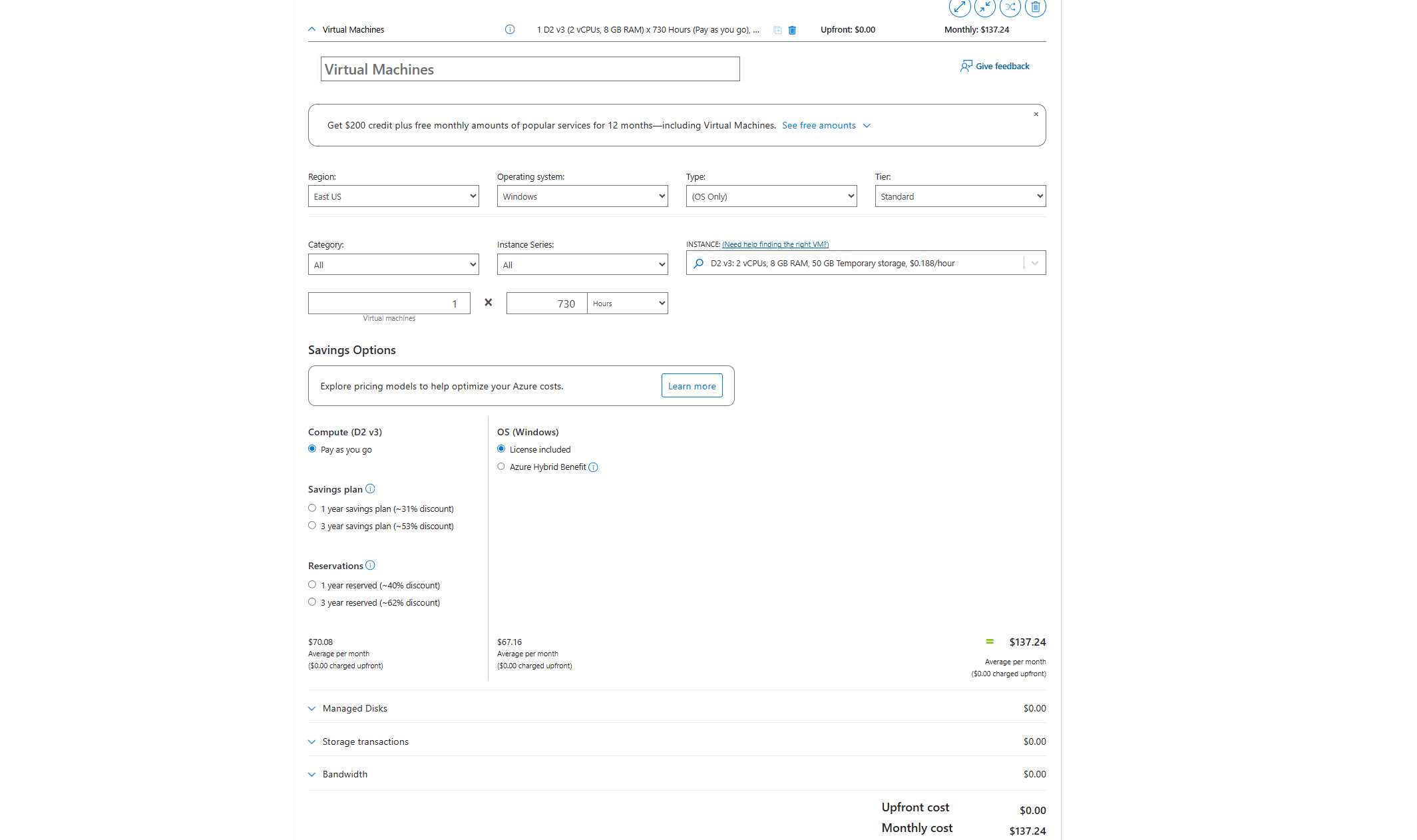

3. Azure Pricing Calculator

This tool helps you estimate how much your Azure services will cost. You can choose different resources and get an easy-to-understand price before building your environment. It is a very useful tool when designing an environment and preparing a quote for your customer.

https://azure.microsoft.com/en-us/pricing/calculator

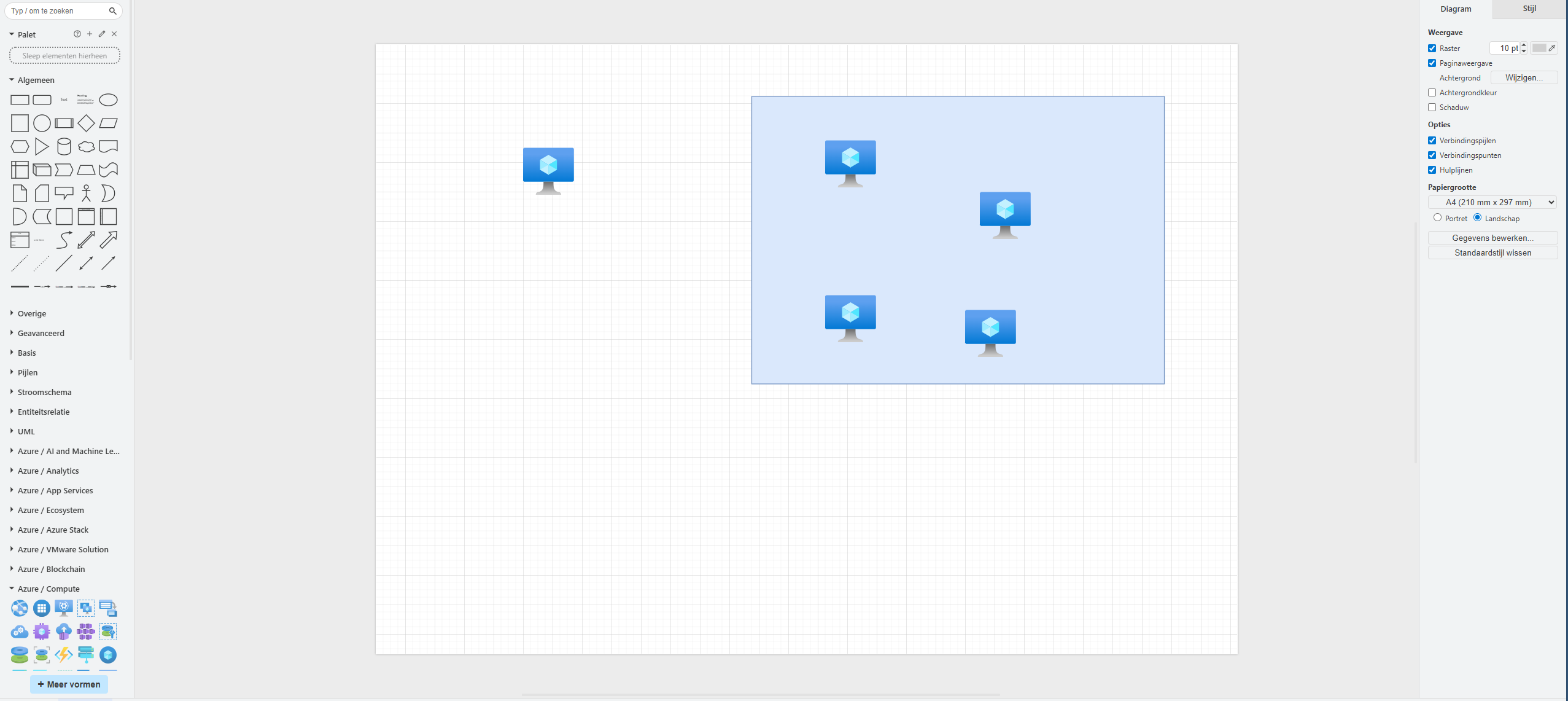

4. draw.io

draw.io is an amazing online tool for drawing diagrams. You can create network maps, architecture diagrams, or flowcharts for your Azure environment without installing software. It has almost all icons for Azure natively built-in for easy charts and diagrams.

Every time you see a nice moving and interactive diagram on my website, I have used Draw.io to create it.

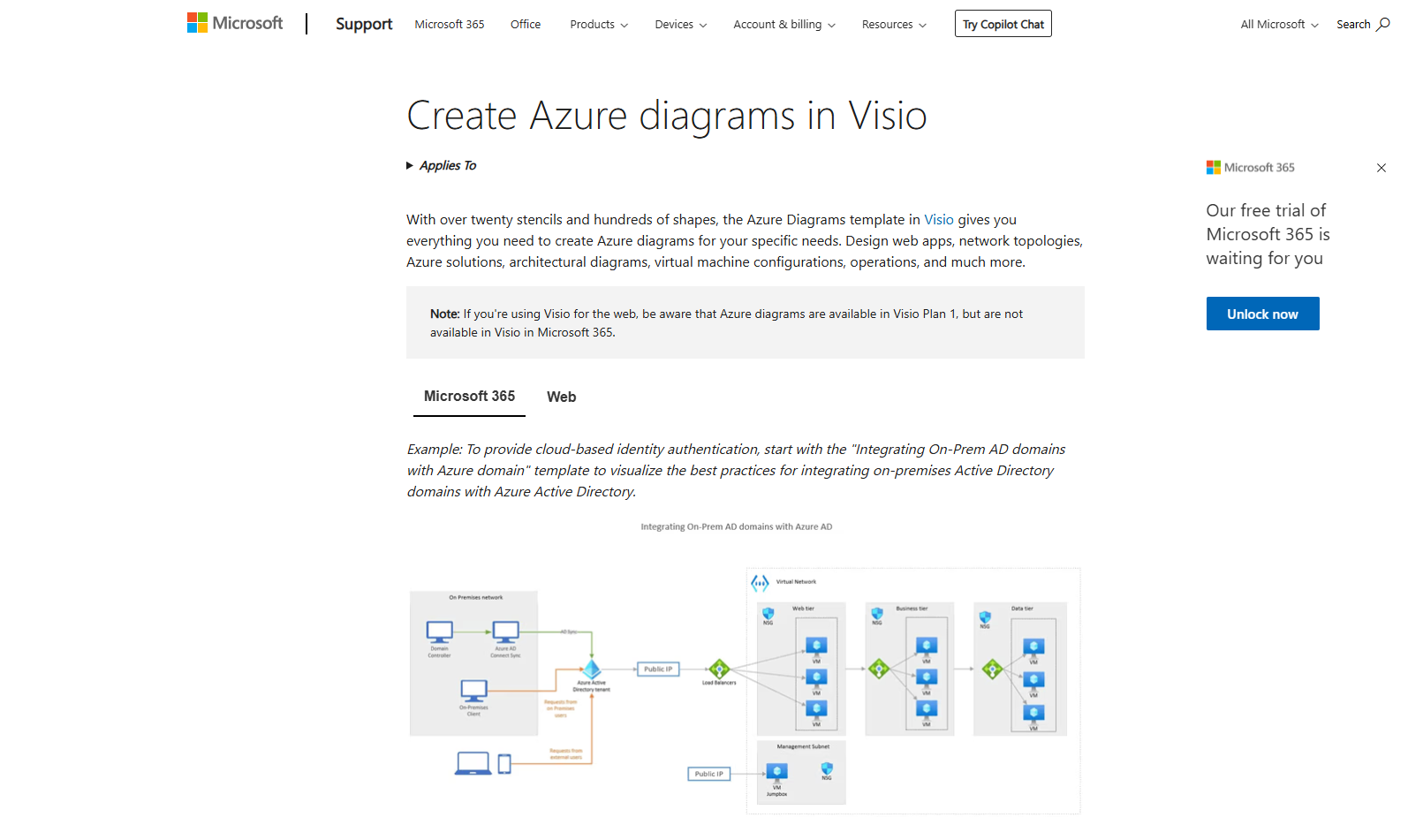

5. Microsoft Visio Azure Diagrams

Visio is a popular Microsoft tool to draw professional diagrams. With its Azure template, you can build detailed Azure diagrams using official icons and symbols easily. However, Visio is software you have to pay for and it must be installed. But it works great.

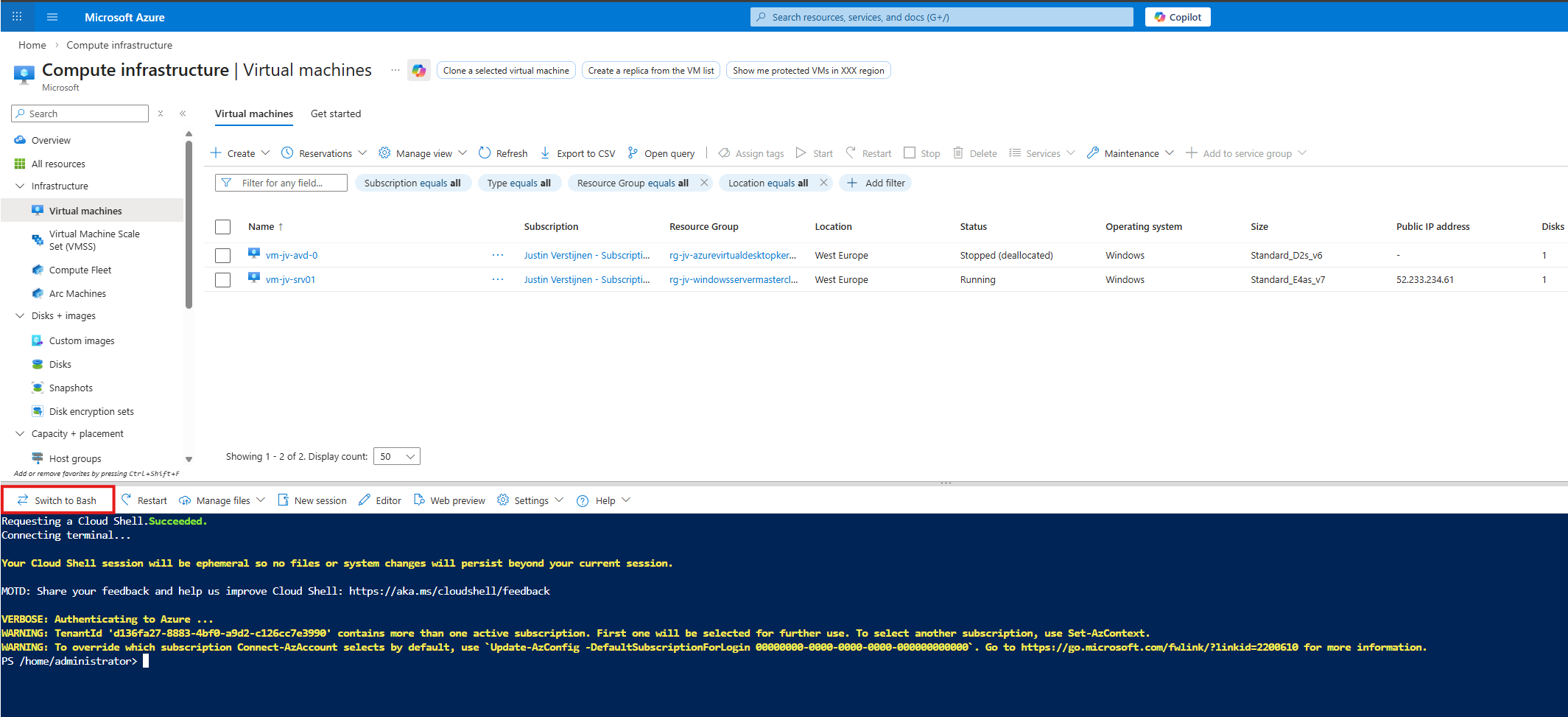

6. Azure CLI and Azure PowerShell

Azure CLI and PowerShell let you manage Azure using commands in a terminal. These tools are great for automation and for managing resources faster than through the portal. Both the CLI and PowerShell are available directly in the Portal through the “Cloud Shell” button:

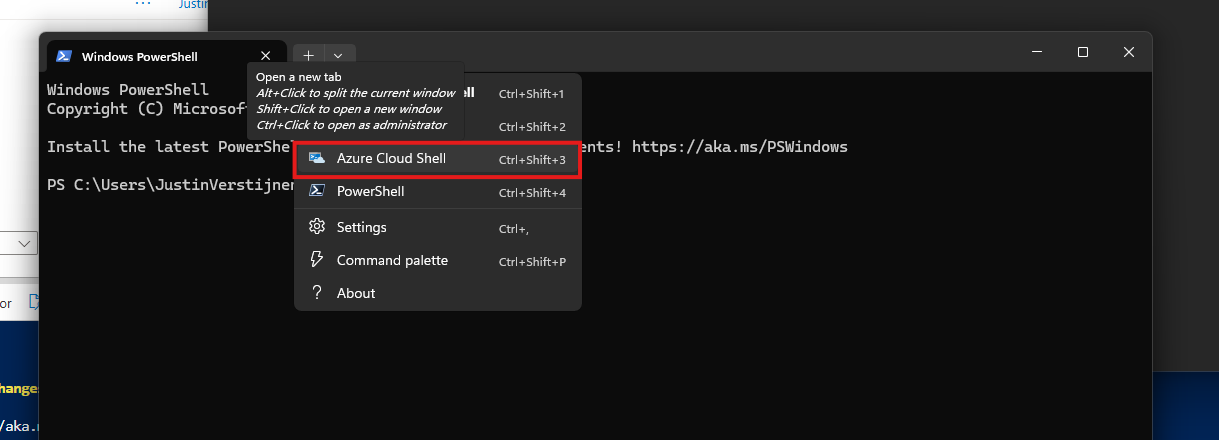

On Windows 11, Azure CLI is not installed by default, but you can add it with winget (winget install --exact --id Microsoft.AzureCLI). After that, you only need to log in to the tenant itself with az login:

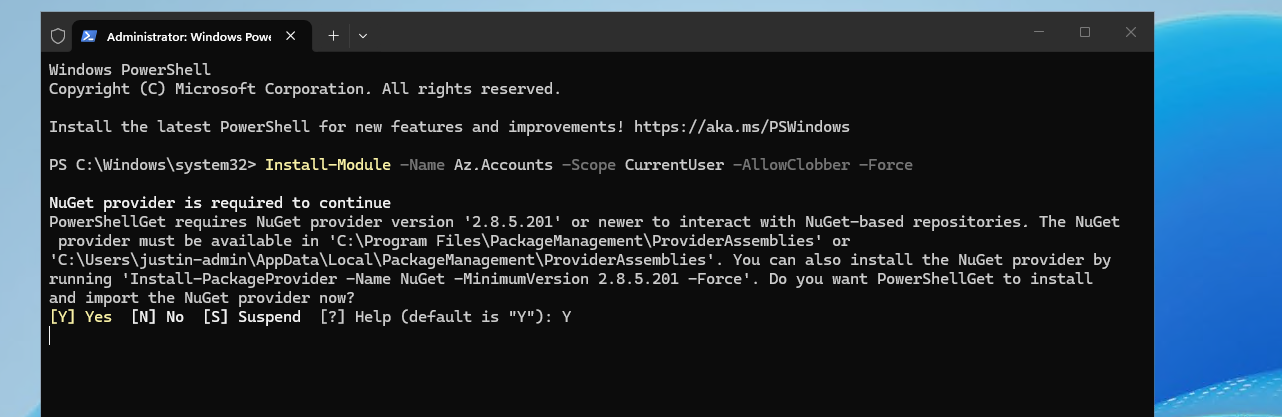

To use Azure PowerShell, you need to open PowerShell on your endpoint and install the needed modules:

Install-Module -Name Az.Accounts -Scope CurrentUser -AllowClobber -Force

Then connect to your tenant using this command:

Connect-AzAccount

7. Azure Cloud Shell

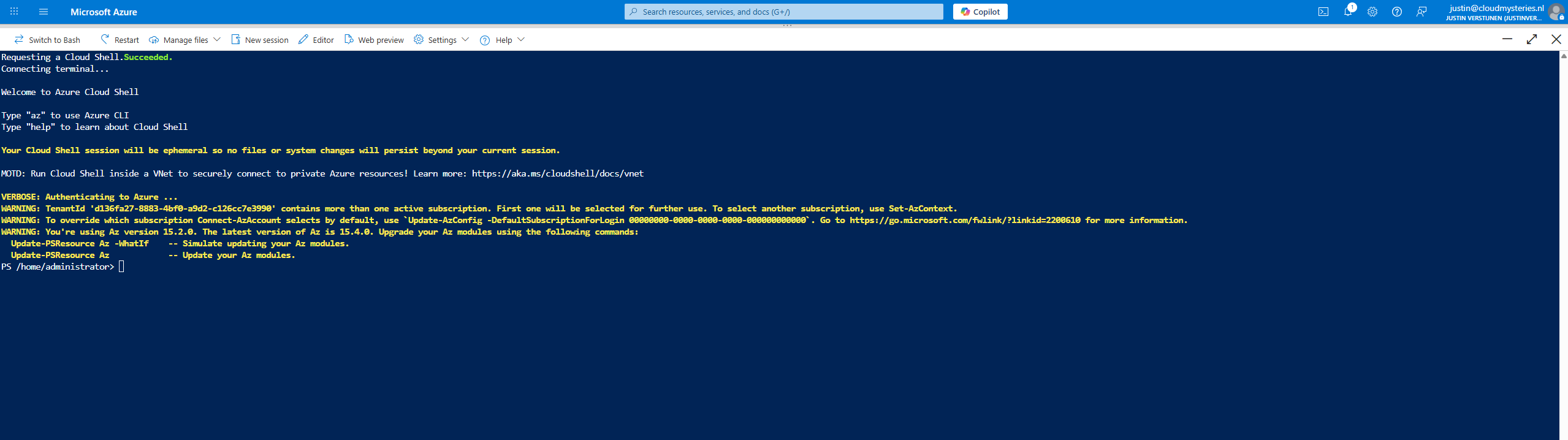

Cloud Shell is an online command-line environment you can use directly in your browser. It includes Azure CLI and PowerShell, so you don’t need to install anything locally. Very useful for fast tasks like deallocating a hung virtual machine or removing a resource that’s not visible in the Portal.

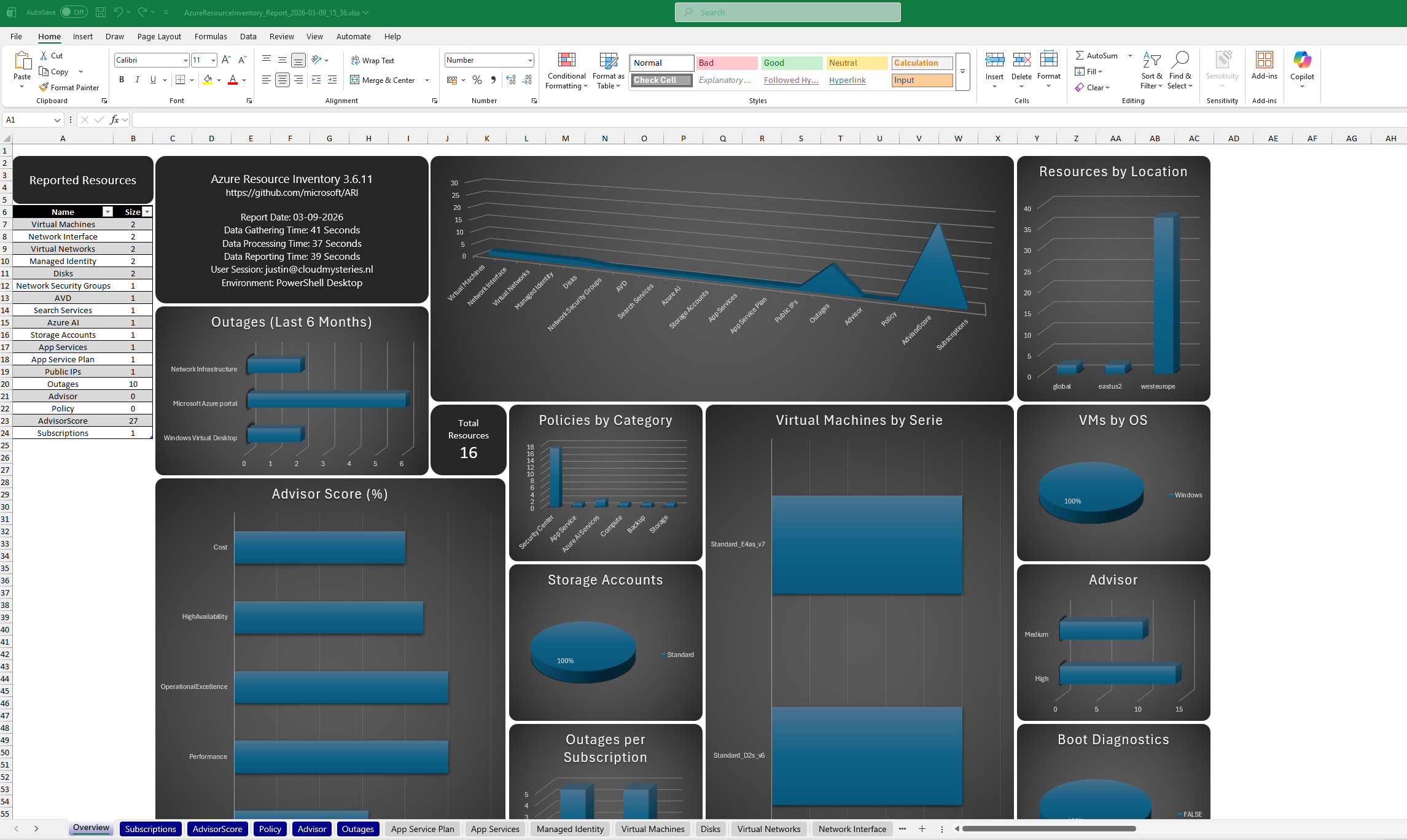

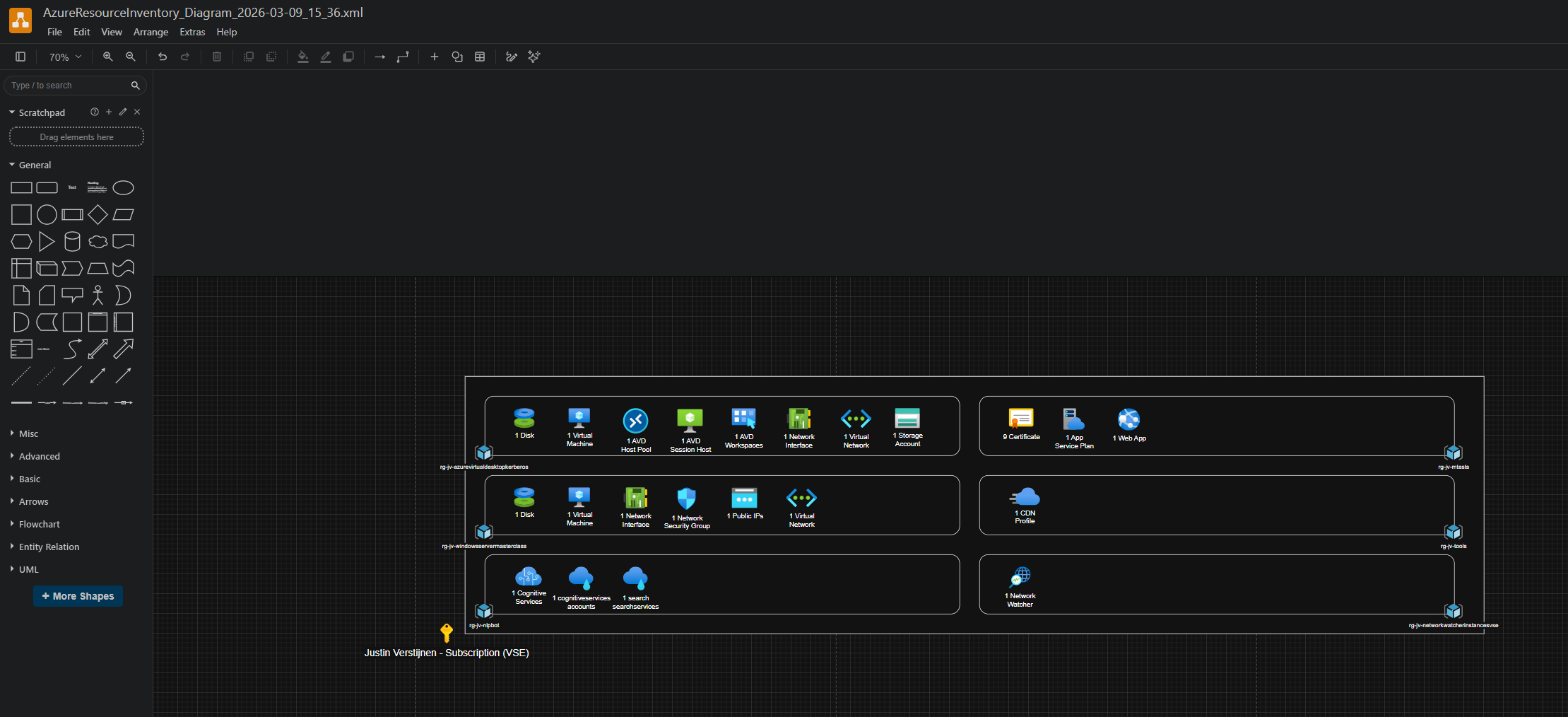

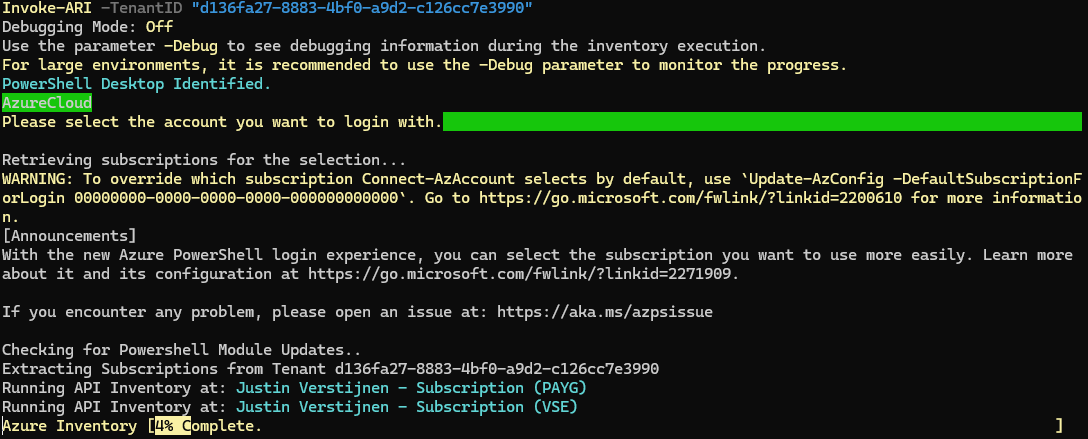

8. Azure Resource Inventory (ARI)

ARI is a tool from Microsoft on GitHub that helps you find and visualize your Azure resources and their relations with each other. It is useful for documenting your cloud setup or discovering a new environment. It also has an export option to Draw.io, which further helps you create nice documentation.

https://github.com/microsoft/ARI

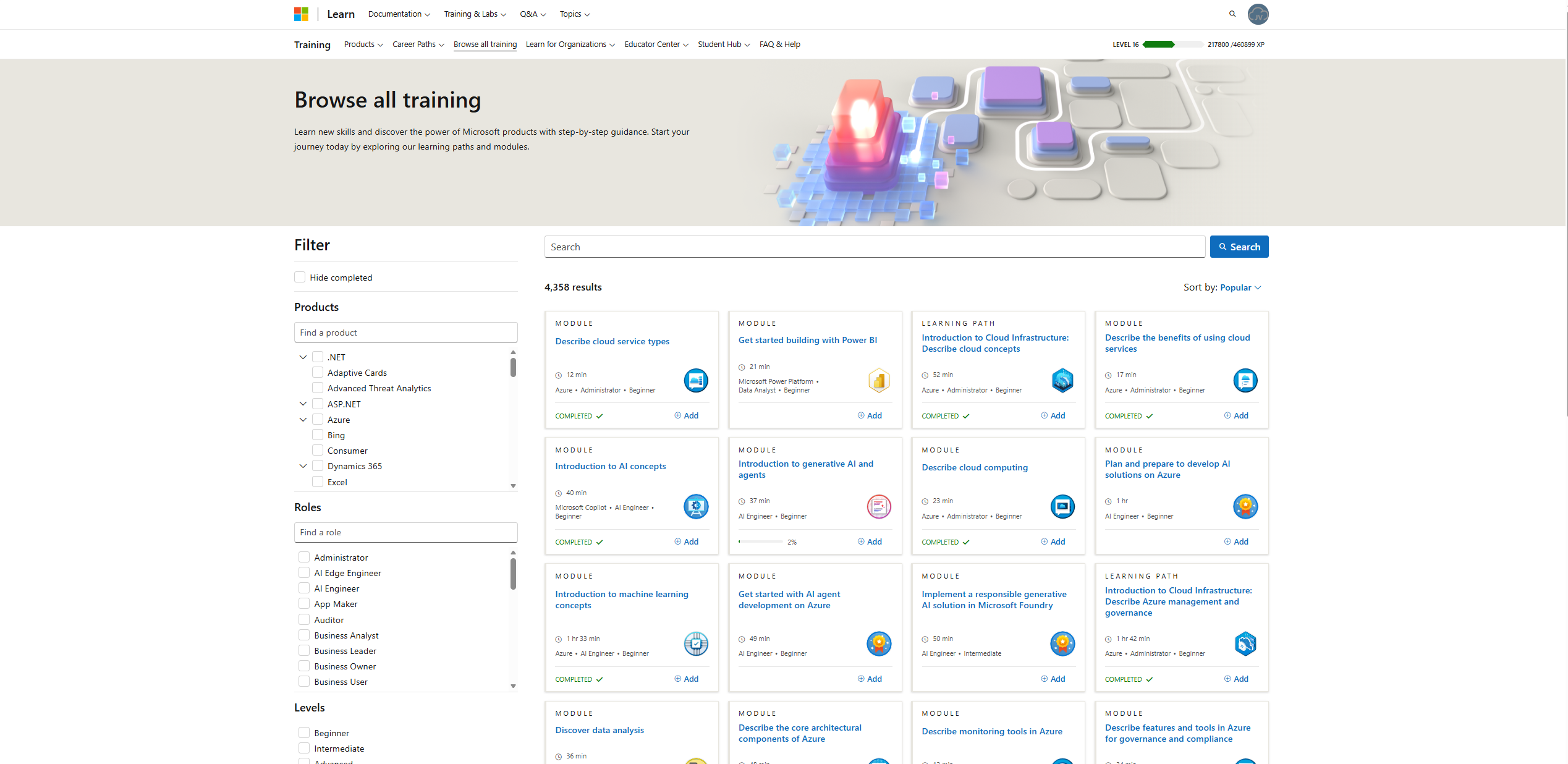

9. Microsoft Learn

Microsoft Learn offers free, step-by-step learning paths for Azure and many other Microsoft products. It helps you build skills and introduces you to the material you need to learn for a certification. It also contains a lot of Microsoft documentation, such as how PowerShell scripts and modules work, or licensing requirements.

https://learn.microsoft.com/en-us/credentials/browse

https://learn.microsoft.com/en-us/credentials/browse/?credential_types=applied%20skills

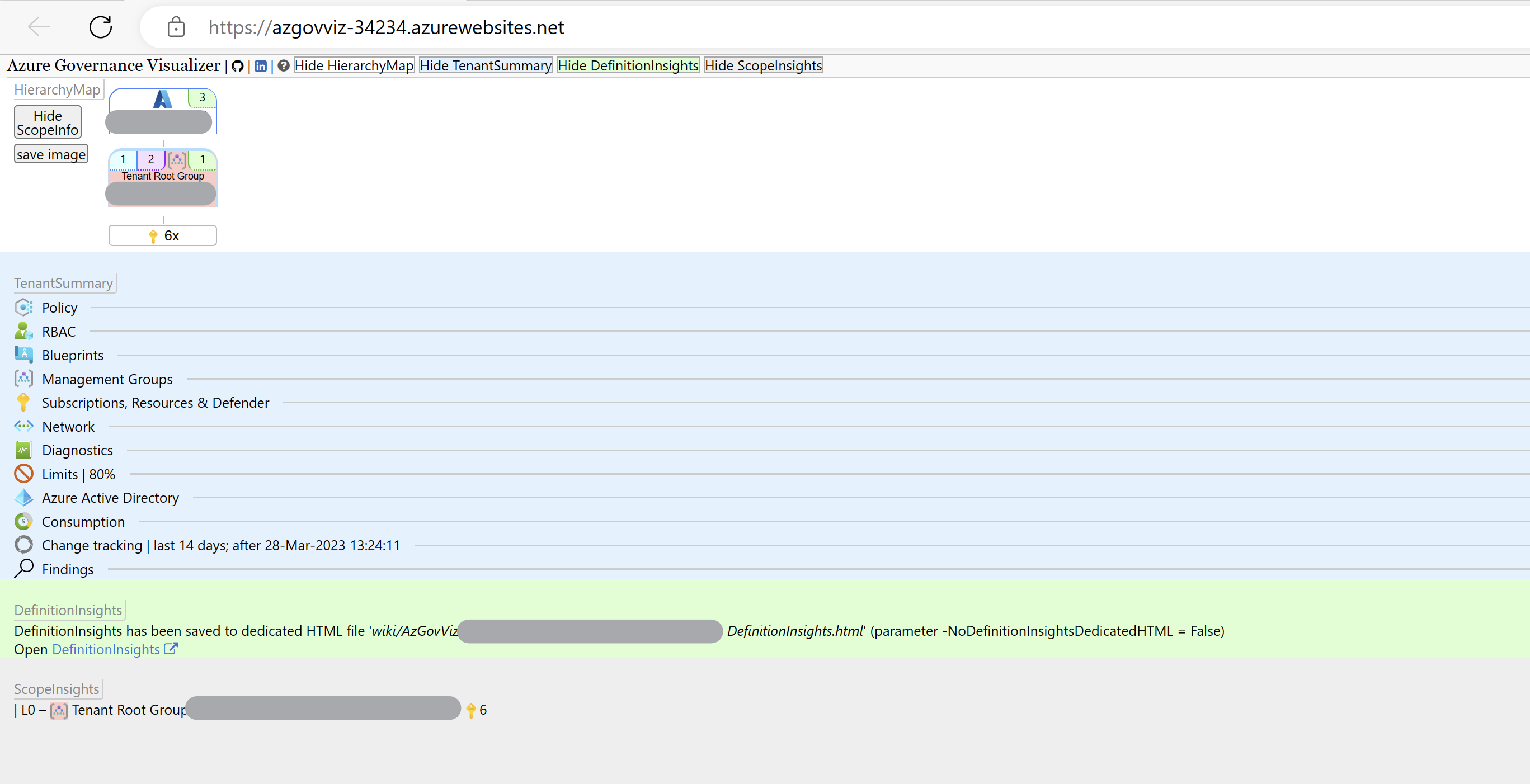

10. Azure Governance Visualizer Accelerator

This is a comprehensive tool that helps you see and understand how governance policies affect your Azure environment. It shows things like resource access rules visually, helping keep your setup secure and compliant. The setup will take some time but is really useful.

https://github.com/Azure/Azure-Governance-Visualizer-Accelerator

Summary

These 10 tools cover many aspects of working with Azure and related services, from staying updated to managing costs, drawing diagrams, running commands, automating tasks and learning new skills. They all make cloud management easier and more efficient.

Thank you for reading this post and I hope it was helpful!

End of the page 🎉

You have reached the end of the page. You can navigate through other blog posts as well, share this post on X, LinkedIn and Reddit or return to the blog posts collection page. Thank you for visiting this post.

If you think something is wrong with this post or you want to know more, you can send me a message to one of my social profiles at: https://justinverstijnen.nl/about/

If you find this page and blog very useful and you want to leave a donation, you can use the button below to buy me a beer. Hosting and maintaining a website takes a lot of time and money. Thank you in advance and cheers :)

The terms and conditions apply to this post.

Create HTTPS 301 redirects with Azure Front Door

Requirements

For this solution, you need the following stuff:

- An Azure Subscription

- A domain name or multiple domain names, which may also be subdomains (subdomain.domain.com)

- Some HTTPS knowledge

- Some Azure knowledge

The solution explained

I will explain how I have made the shortcuts to my tools at https://justinverstijnen.nl/tools, as this is something Azure Front Door can do for you.

In short, Azure Front Door is a load balancer/CDN service with many options to distribute load across your backends. In this guide we only use a small part of it, redirecting traffic with 301 rules, but it is a very nice service if you are interested in more.

- Our client is our desktop, laptop or mobile phone with an internet browser

- This client will request the URL dnsmegatool.jvapp.nl

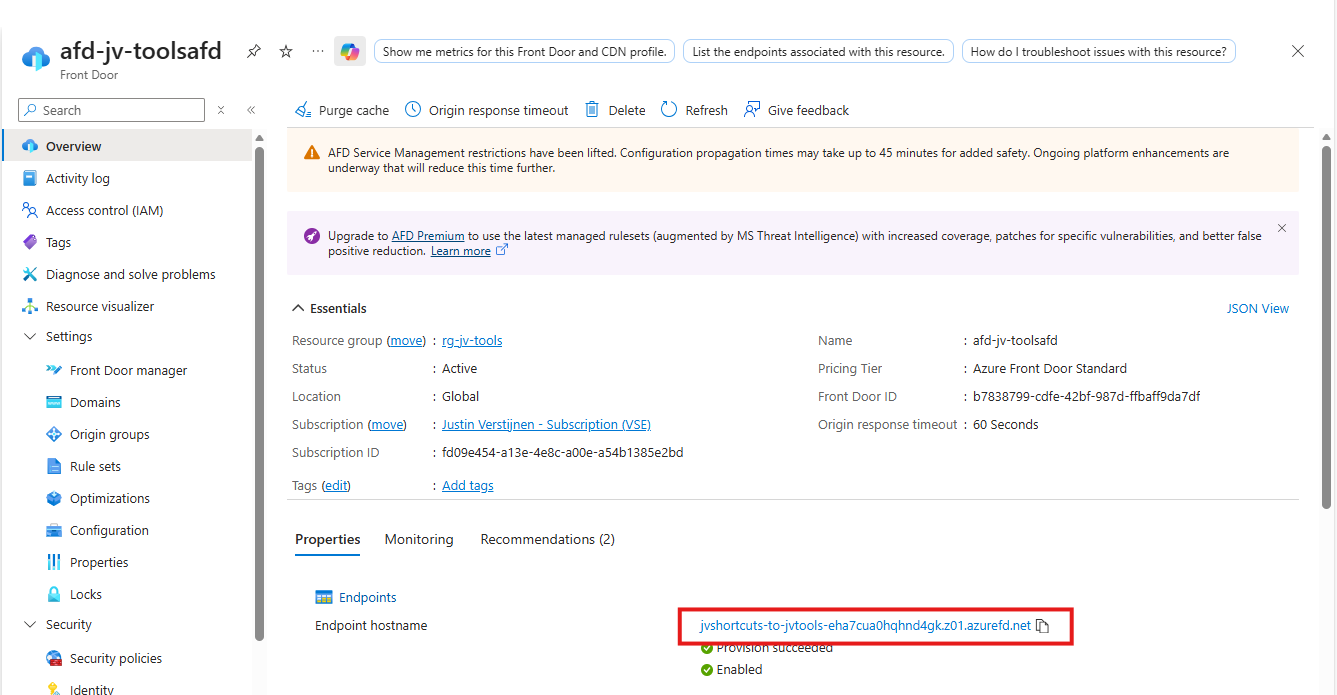

- A DNS lookup shows that this (sub)domain points to Azure (jvshortcuts-to-jvtools-eha7cua0hqhnd4gk.z01.azurefd.net)

- The client connects to Azure, as it now knows the address

- Azure Front Door accepts the request and routes it to the rule set

- The rule set is checked for a rule that matches these parameters

- A matching rule is found and the client gets an HTTPS 301 redirect to the correct URL: tools.justinverstijnen.nl/dnsmegatool

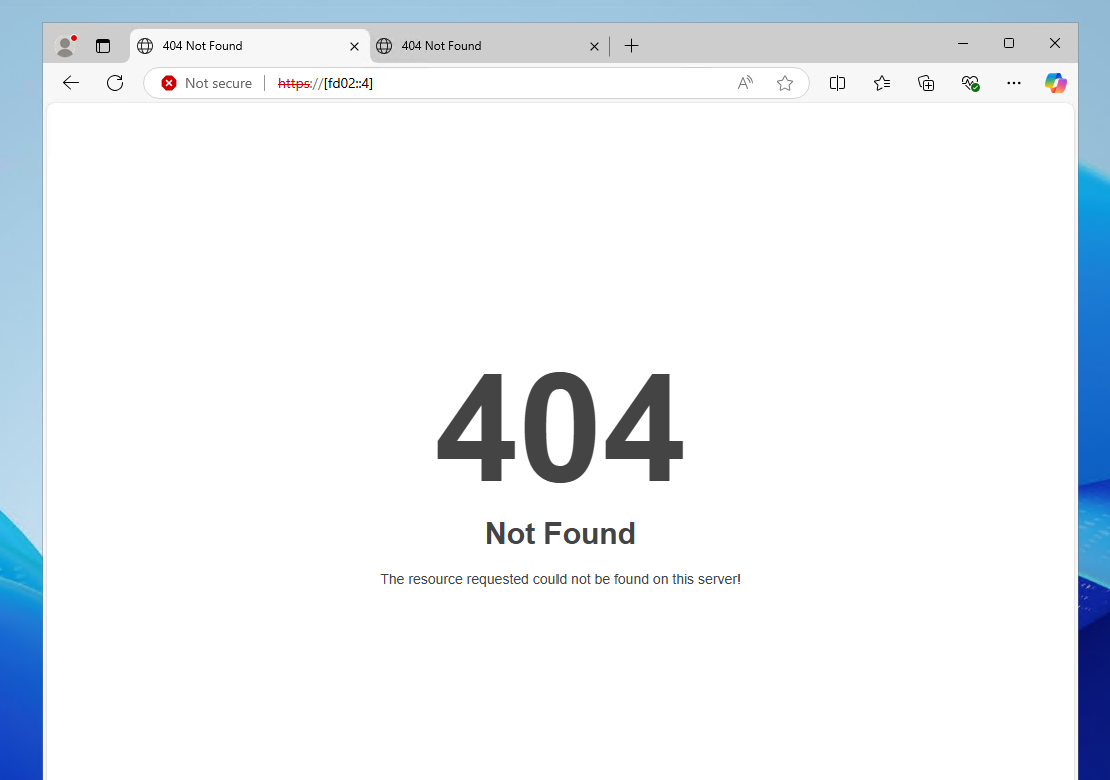

This effectively results in this (check the URL being changed automatically):

Now that we know what happens under the hood, let’s configure this cool stuff.

Step 1: Create Azure Front Door

First, we must create our Azure Front Door instance, as this will be our hub and configuration plane for the 301 redirects and for managing our load distribution.

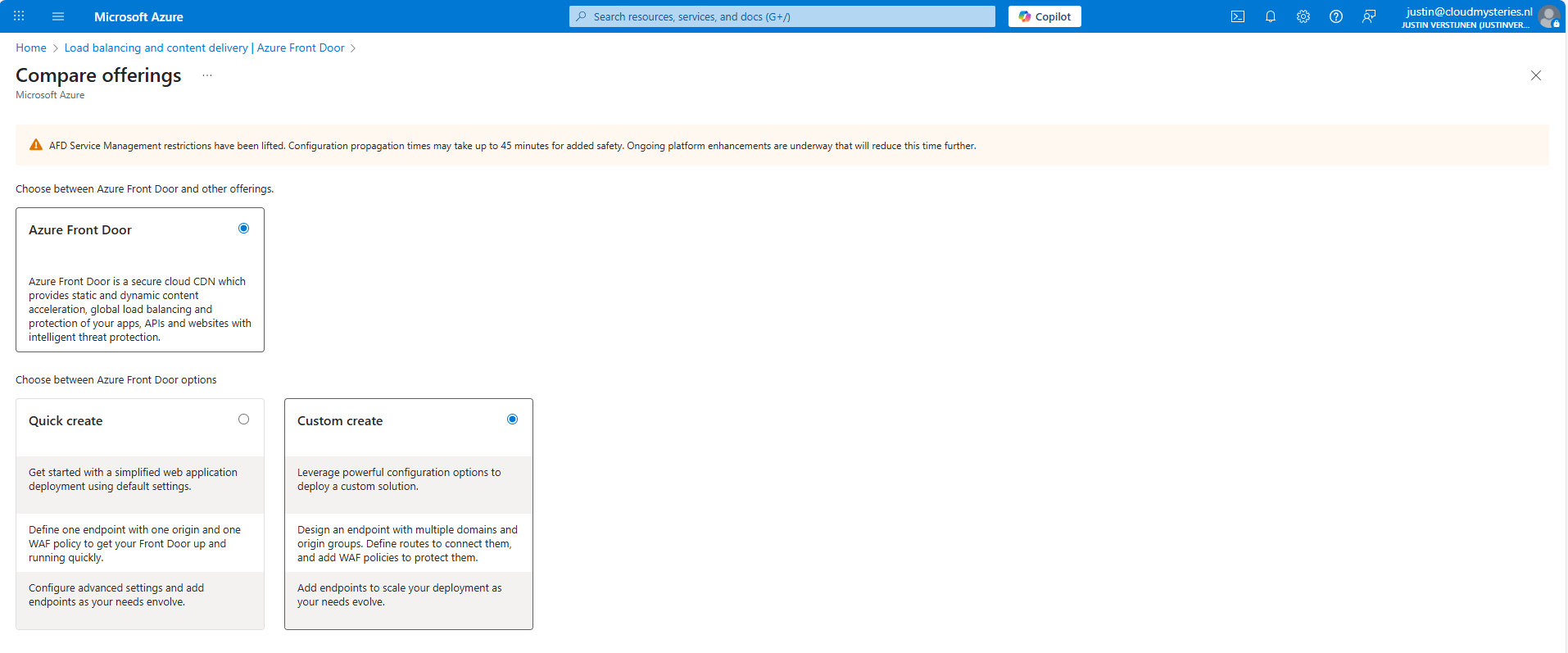

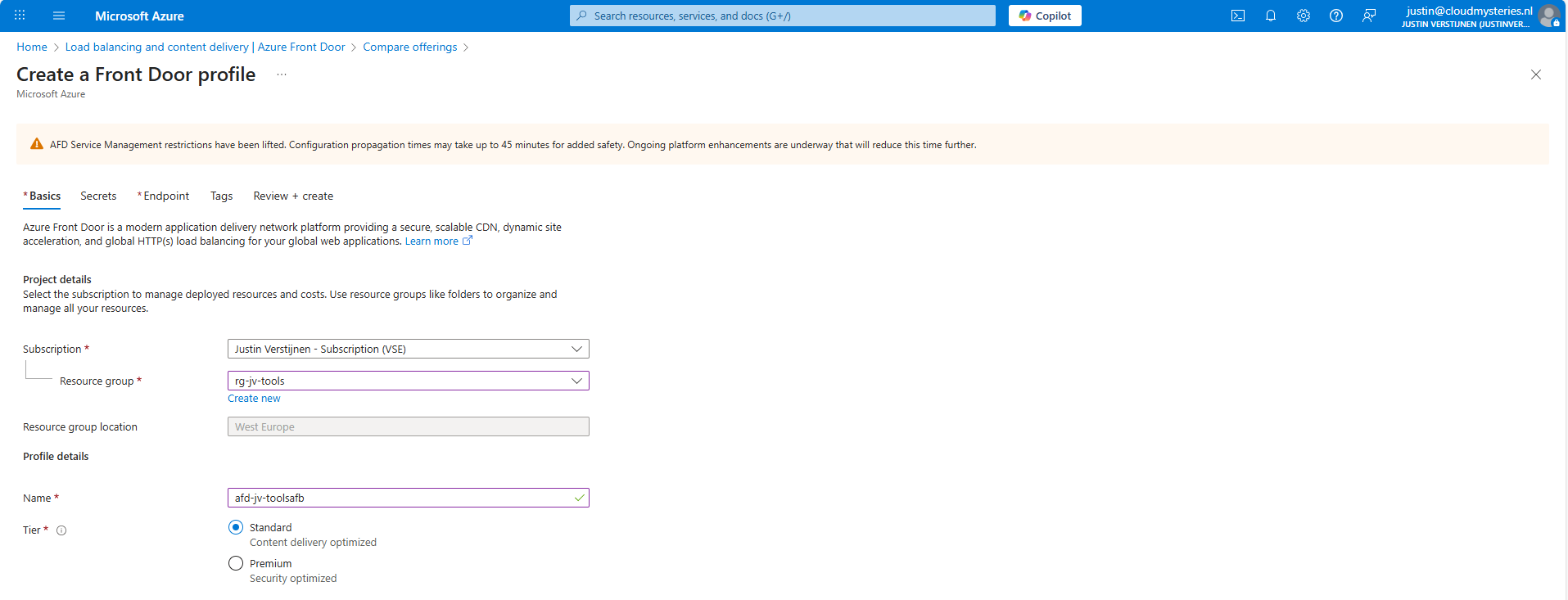

Open up the Azure Portal and go to “Azure Front Door”. Create a new instance there.

As the note describes, every change will take up to 45 minutes to be effective. This was also the case when I was configuring it, so we must have a little patience but it will be worth it.

I selected the “Custom create” option here, as we need a minimal instance.

On the first page, fill in your details and select a Tier. I will use the Standard tier. The costs will be around:

- $35 per month for Standard

- $330 per month for Premium

Go to the “Endpoint” tab.

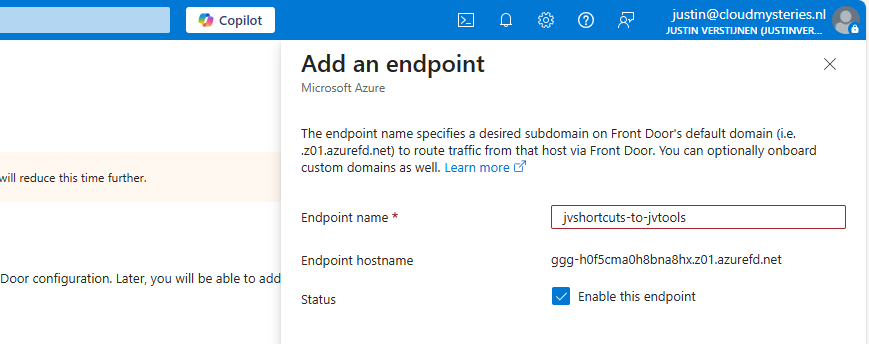

Give your Endpoint a name. This is the name your hostname (CNAME) records will point to.

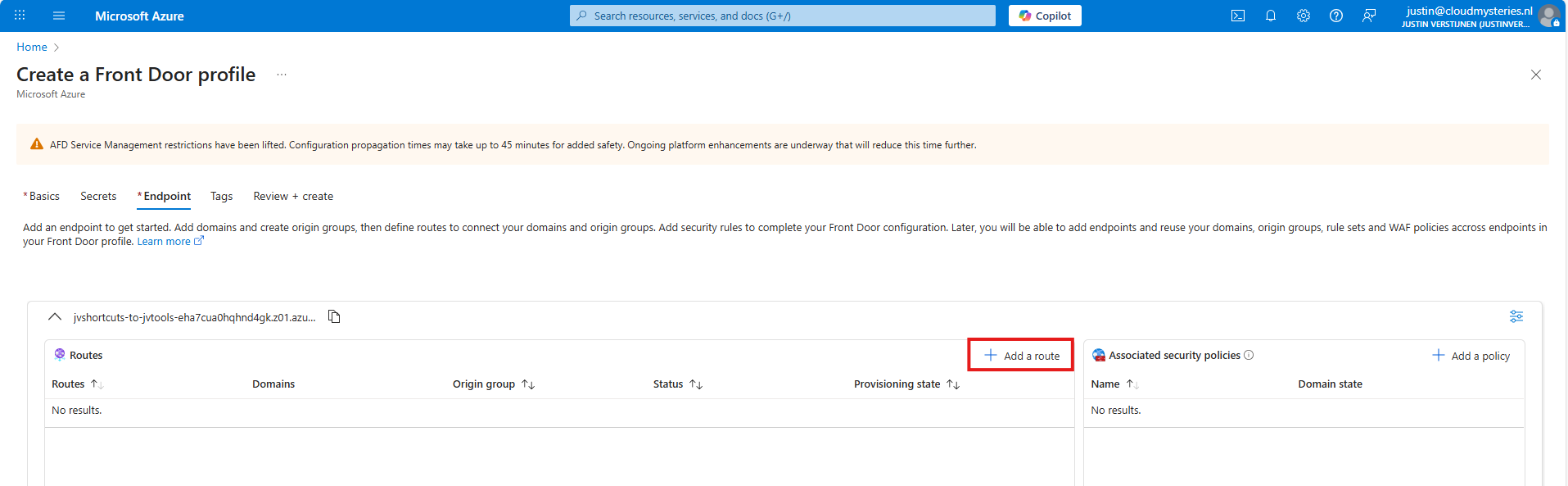

After creating the Endpoint, we must create a route.

Click “+ Add a route” to create a new route.

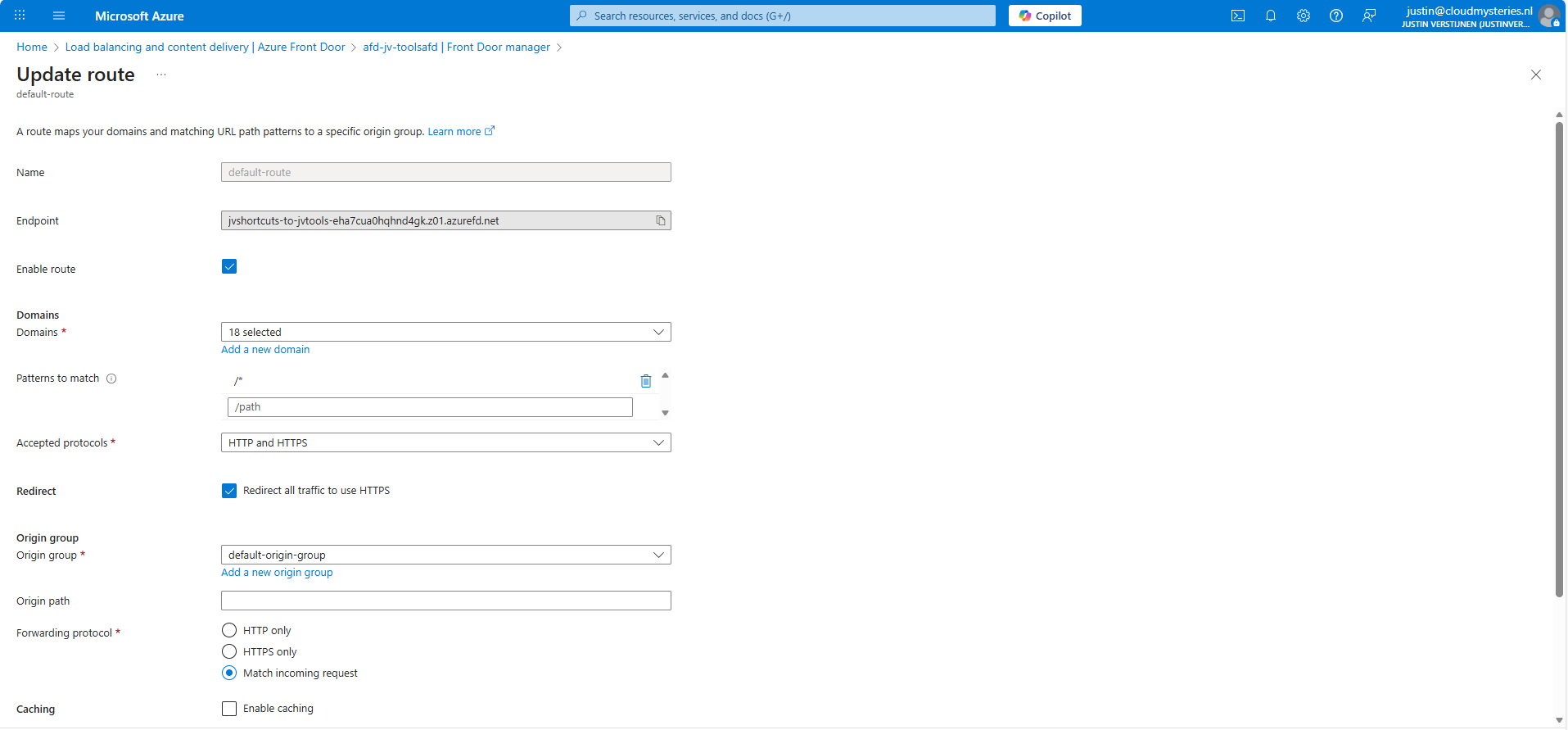

Give the route a name and fill in the following fields:

- Patterns to match: /*

- Accepted protocols: HTTP and HTTPS

- Redirect all traffic to use HTTPS: Enabled

Then create a new origin group. This doesn’t do anything in our case but must be created.

After creating the origin group, finish the wizard to create the Azure Front Door instance, and we will be ready to go.

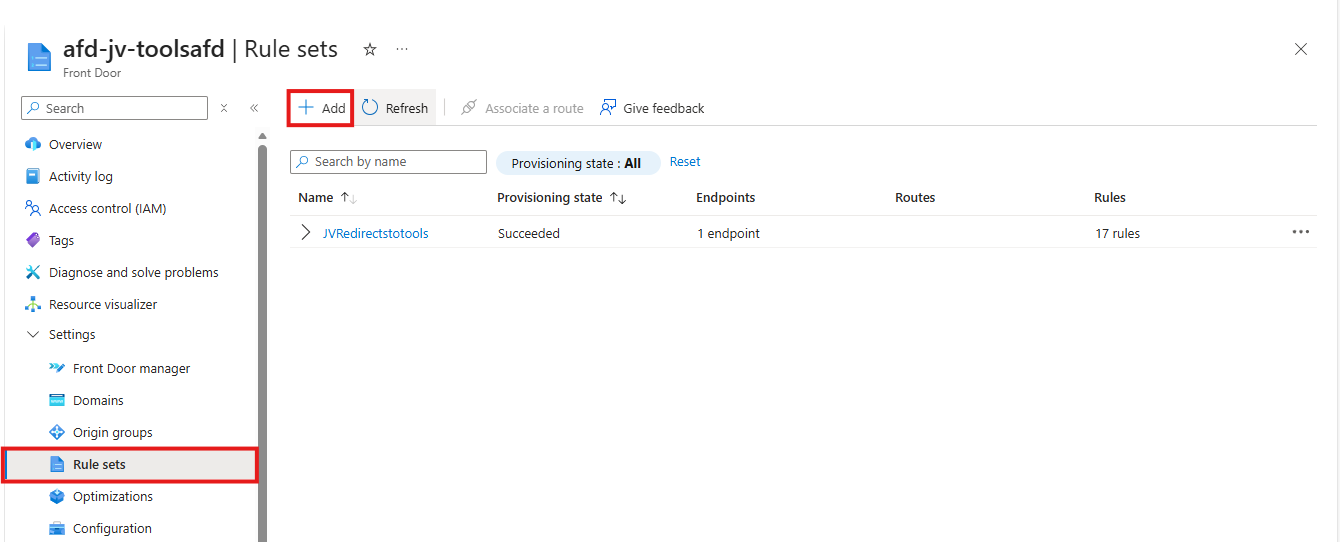

Step 2: Configure the rule set

After the Azure Front Door instance has finished deploying, we can create a Rule set. This can be found in the Azure Portal under your instance:

Create a new rule set here by clicking “+ Add”. Give the set a name after that.

The rule set is exactly what it is called, a set of rules your load balancing solution will follow. We will create the redirection rules here by basically saying:

- Client request: dnsmegatool.jvapp.nl

- Redirect to: tools.justinverstijnen.nl/dnsmegatool

This is basically an if-then (do that) strategy. Let’s create such a rule step by step.

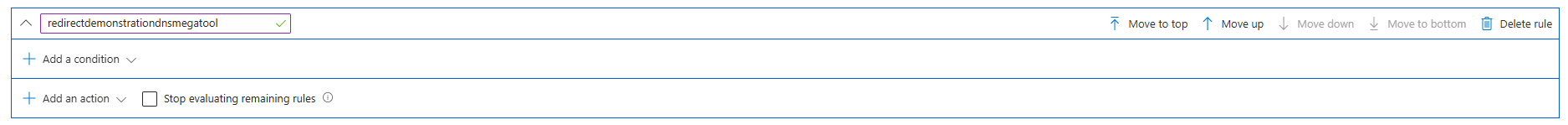

Click the “+ Add rule” button. A new block will appear.

Now click the “Add a condition” button to add a trigger, which will be “Request header”

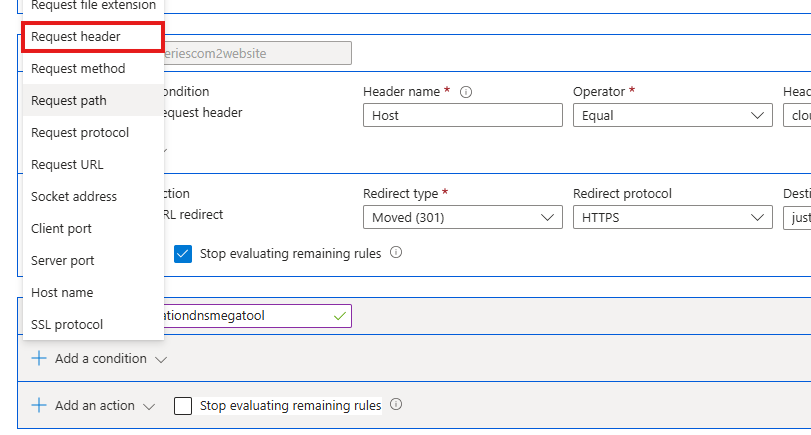

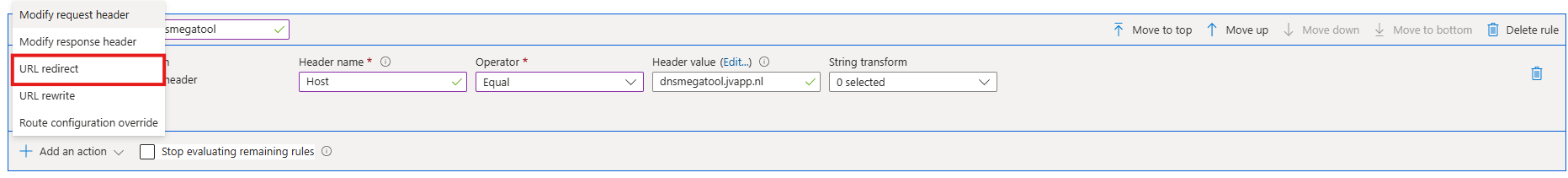

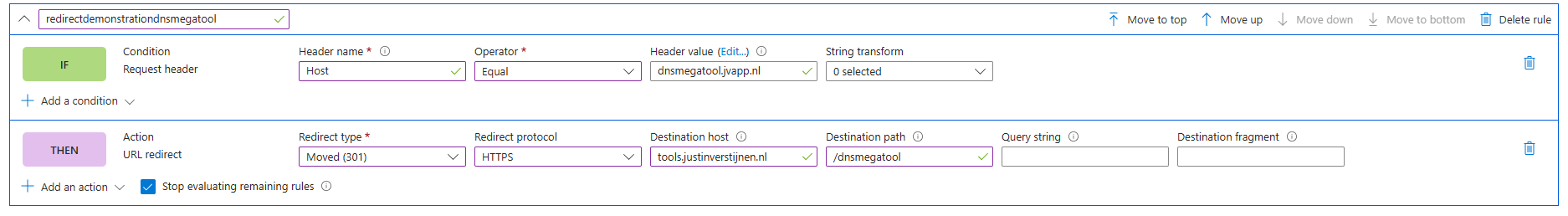

Fill in the fields as follows:

- Header name: Host

- Operator: Equal

- Header value: dnsmegatool.jvapp.nl (the URL before redirect)

It will look like this:

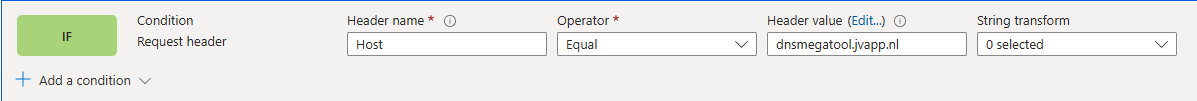

Then click the “+ Add an action” button to decide what to do when a client requests your URL:

Select the “URL redirect” option and fill in the fields:

- Redirect type: Moved (301)

- Redirect protocol: HTTPS

- Destination host: tools.justinverstijnen.nl

- Destination path: /dnsmegatool (only use this if the site is not at the top level of the domain)

Then enable the “Stop evaluating remaining rules” option to stop processing after this rule has applied.

The full rule looks like this:

Now we can update the rule/rule set and do the rest of the configurations.
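To make the if-then logic of the rule set a bit more concrete, here is a minimal Python sketch that mimics it locally. It does not talk to Azure at all: the `RedirectRule` class and the `evaluate` function are hypothetical names I made up for illustration, and only the Host header condition and the URL redirect action from this guide are modeled.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class RedirectRule:
    """Hypothetical local model of one rule: if Host equals X, redirect to Y."""
    host: str                     # condition: request header "Host" equals this value
    destination_host: str         # action: destination host
    destination_path: str = "/"   # action: destination path
    status: int = 301             # action: redirect type "Moved (301)"


def evaluate(rules: List[RedirectRule], host_header: str) -> Optional[Tuple[int, str]]:
    """Return (status, Location) for the first matching rule, or None if nothing matches."""
    for rule in rules:
        # Host names are case-insensitive, so compare them in lower case.
        if rule.host.lower() == host_header.lower():
            # Returning here mirrors "Stop evaluating remaining rules".
            return rule.status, f"https://{rule.destination_host}{rule.destination_path}"
    return None


rules = [RedirectRule("dnsmegatool.jvapp.nl", "tools.justinverstijnen.nl", "/dnsmegatool")]
print(evaluate(rules, "dnsmegatool.jvapp.nl"))
```

Adding a second shortcut would simply mean appending another `RedirectRule` to the list, which is roughly what adding another rule to the rule set does in the portal.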

Step 3: Custom domain configuration

We have now configured that domain A should redirect to domain B, but Azure requires us to validate the ownership of domain A before it will serve redirects for it.

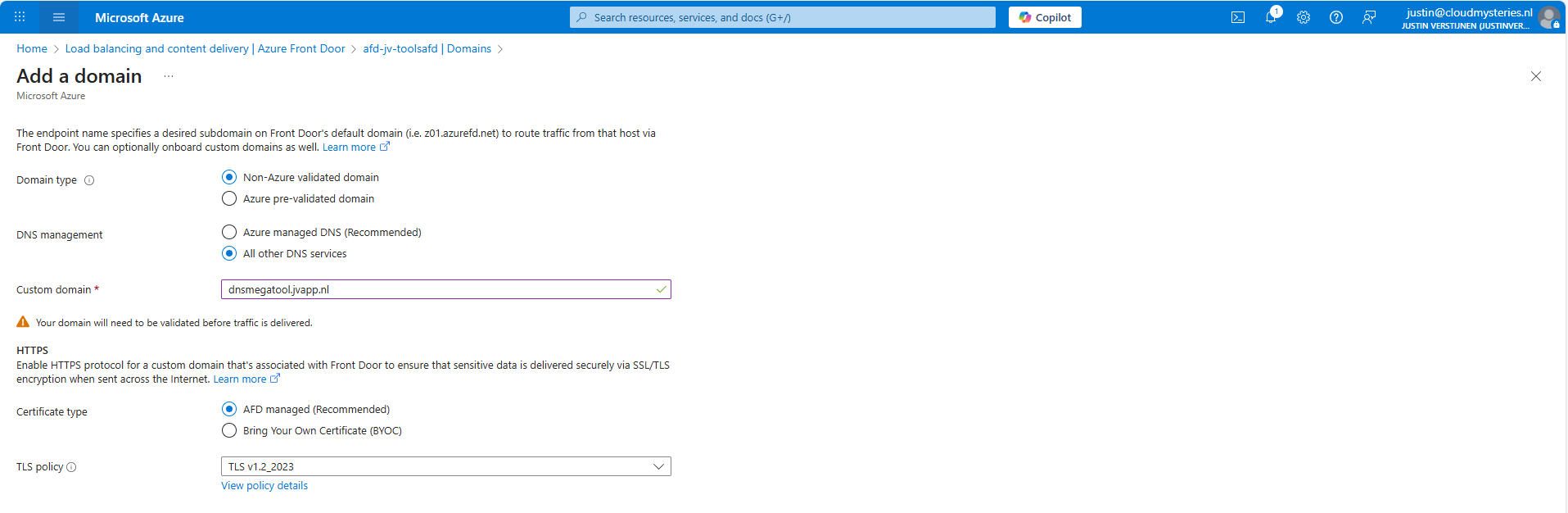

In the Azure Front Door instance, go to “Domains” and “+ Add” a domain here.

Fill in your desired domain name and click on “Add”. We now have to do a validation step on your domain by creating a TXT record.

Wait a minute or so for the portal to finish adding the domain, and go to the “Domain validation” section:

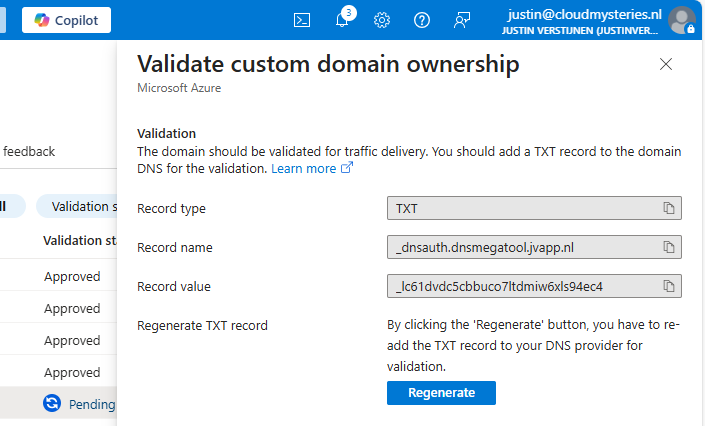

Click on the Pending state to unveil the steps and information for the validation:

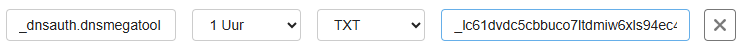

In this case, we must create a TXT record at our DNS hosting with this information:

- Record name: _dnsauth.dnsmegatool (domain will automatically be filled in)

- Record value: _lc61dvdc5cbbuco7ltdmiw6xls94ec4

Let’s do this:

Save the record, and wait for a few minutes. The Azure Portal will automatically validate your domain. This can take up to 24 hours.

In the meantime, now that we have everything open, we can also create the CNAME record which will route our domain to Azure Front Door. In Azure Front Door, collect your full Endpoint hostname, which is on the Overview page:

Copy that value and head back to your DNS hosting.

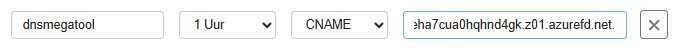

Create a new CNAME record with this information:

- Name: dnsmegatool

- Type: CNAME

- Value: jvshortcuts-to-jvtools-eha7cua0hqhnd4gk.z01.azurefd.net**.**

Make sure to end the value with a trailing dot (.), as this is a hostname external to your DNS zone.

Save the DNS configuration, and your complete setup will now work in around 45 to 60 minutes.

This domain configuration has to be done for every domain and subdomain Azure Front Door must redirect. This is by design due to domain security.
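Because these two records have to be created for every domain, it can help to generate them instead of typing them by hand. The following is a minimal sketch under my own assumptions: `front_door_dns_records` is a hypothetical helper, it only builds the record data as plain dictionaries, and it does not create anything at your DNS provider or in Azure.

```python
from typing import Dict, List


def front_door_dns_records(custom_domain: str, zone: str, endpoint: str, token: str) -> List[Dict[str, str]]:
    """Build the TXT and CNAME records for one custom domain inside `zone`."""
    if not custom_domain.endswith("." + zone):
        raise ValueError(f"{custom_domain} is not a subdomain of {zone}")
    # Relative label inside the zone, e.g. "dnsmegatool" for dnsmegatool.jvapp.nl.
    label = custom_domain[: -len(zone) - 1]
    return [
        # Validation record that proves ownership of the domain.
        {"name": f"_dnsauth.{label}", "type": "TXT", "value": token},
        # The trailing dot marks the target as a fully qualified name outside the zone.
        {"name": label, "type": "CNAME", "value": endpoint.rstrip(".") + "."},
    ]


records = front_door_dns_records(
    custom_domain="dnsmegatool.jvapp.nl",
    zone="jvapp.nl",
    endpoint="jvshortcuts-to-jvtools-eha7cua0hqhnd4gk.z01.azurefd.net",
    token="_lc61dvdc5cbbuco7ltdmiw6xls94ec4",
)
for record in records:
    print(record["name"], record["type"], record["value"])
```

The validation token is different for every domain, so in practice you would copy it from the “Domain validation” section for each domain you add.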

Summary

Azure Front Door is a great solution for managing redirects for your webservers and tools in a central dashboard. It’s a serverless solution, so no patching or maintenance is needed. Only the configuration has to be done.

Azure Front Door also manages the SSL certificates used in the redirections, which is really nice.

Thank you for visiting this guide and I hope it was helpful.

Sources

These sources helped me with writing and researching this post:

- https://azure.microsoft.com/en-in/pricing/details/frontdoor/?msockid=0e4eda4e5e6161d61121ccd95f0d60f5

- https://learn.microsoft.com/en-us/azure/frontdoor/front-door-url-redirect?pivots=front-door-standard-premium

Everything you need to know about Azure Bastion

How does Azure Bastion work?

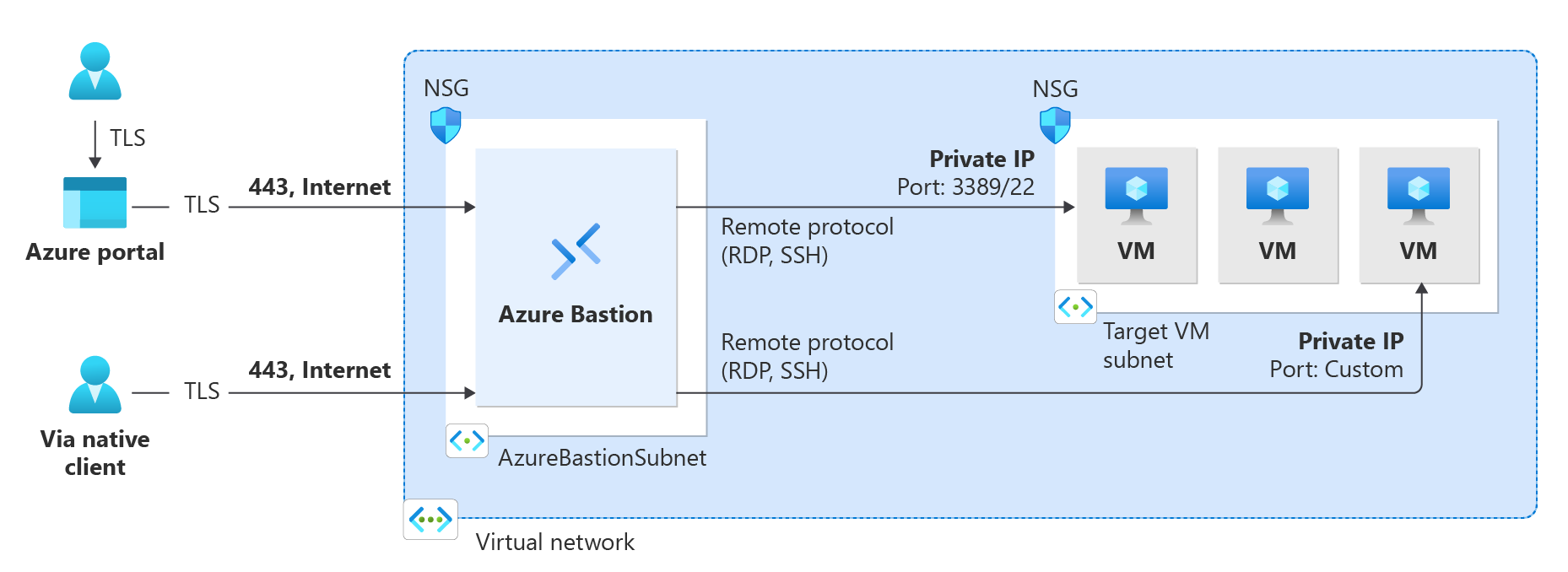

Azure Bastion is a serverless instance you deploy in your Azure virtual network. It resides there waiting for users to connect with it. It acts like a Jump-server, a secured server from where an administrative user connects to another server.

The process of it looks like this:

A user can choose to connect from the Azure Portal to Azure Bastion and from there to the destination server or use a native client, which can be:

- SSH for Linux-based virtual machines

- RDP for Windows virtual machines

Think of it as a layer between user and the server where we can apply extra security, monitoring and governance.

Azure Bastion is an instance which you deploy in a virtual network in Azure. You can choose to place an instance per virtual network or when using peered networks, you can place it in your hub network. Bastion supports connecting over VNET peerings, so you will save some money if you only place instances in one VNET.

Features of Azure Bastion

Azure Bastion has a lot of features today. Some years ago, it only was a method to connect to a server in the Azure Portal, but it is much more than that. I will highlight some key functionality of the service here:

| Feature | Basic | Standard | Premium |
|---|---|---|---|
| Connecting to Windows VMs | ✅ | ✅ | ✅ |
| Connecting to Linux VMs | ✅ | ✅ | ✅ |
| Concurrent connections | ✅ | ✅ | ✅ |
| Custom inbound port | ❌ | ✅ | ✅ |
| Shareable link | ❌ | ✅ | ✅ |
| Disable copy/paste | ❌ | ✅ | ✅ |
| Session recording | ❌ | ❌ | ✅ |

Now that we know more about the service and its features, let’s take a look at the pricing before configuring the service.

Pricing of Azure Bastion

Azure Bastion instances are available in different tiers, as with most Azure services. The price is calculated per hour, but in my table I will use 730 hours, which is a full month. We want to know exactly how much it costs, don’t we?

The fixed pricing is by default for 2 instances:

| SKU | Hourly price | Monthly price (730 hours) |
|---|---|---|
| Basic | $0.19 | $138.70 |
| Standard | $0.29 | $211.70 |
| Premium | $0.45 | $328.50 |

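The cost is based on how long the instance exists in the Azure subscription, whether it is used or not. On top of the hourly price, Microsoft bills outbound data transfer separately once you exceed the free monthly allowance, so check the pricing page in the sources for the current rates.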

Extra instances

For the Standard and Premium SKUs of Azure Bastion, it is possible to add more than 2 instances at a discounted price. Each extra instance costs roughly half of the base price above:

| SKU | Hourly price | Monthly price (730 hours) |
|---|---|---|
| Standard | $0.14 | $102.20 |
| Premium | $0.22 | $160.60 |
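If you want to play with these numbers yourself, the arithmetic behind the tables can be sketched in a few lines of Python. The hourly prices are copied from the tables in this post and may change over time; `monthly_cost` is a hypothetical helper name, and the sketch only covers the fixed hourly part of the bill.

```python
from decimal import Decimal

HOURS_PER_MONTH = 730  # the full month used in the tables above

# Hourly prices in USD, copied from the tables in this post.
BASE_HOURLY = {"Basic": Decimal("0.19"), "Standard": Decimal("0.29"), "Premium": Decimal("0.45")}
EXTRA_HOURLY = {"Standard": Decimal("0.14"), "Premium": Decimal("0.22")}


def monthly_cost(sku: str, extra_instances: int = 0, hours: int = HOURS_PER_MONTH) -> Decimal:
    """Fixed cost for `hours` of one Bastion deployment plus optional extra instances."""
    if extra_instances and sku not in EXTRA_HOURLY:
        raise ValueError(f"The {sku} SKU does not support extra instances")
    hourly = BASE_HOURLY[sku] + extra_instances * EXTRA_HOURLY.get(sku, Decimal("0"))
    return hourly * hours


print(monthly_cost("Basic"))        # 138.70
print(monthly_cost("Standard", 2))  # 416.10
```

I used `Decimal` instead of floats here so the results match the table to the cent without rounding surprises.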

How to deploy Azure Bastion

We can deploy Azure Bastion through the Azure Portal. Search for “Bastions” and you will find it:

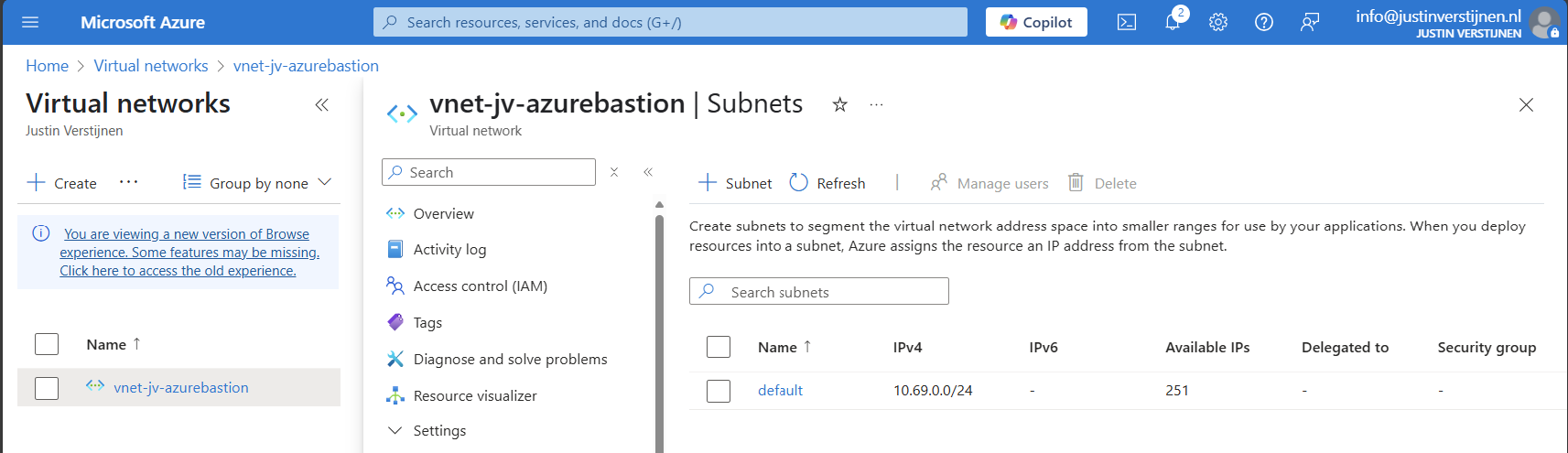

Create Azure Bastion subnet

Before we can deploy Azure Bastion to a network, we must create a subnet for this managed service. This is done in the virtual network: open it and go to “Subnets”:

Click on “+ Subnet” to create a new subnet:

Select “Azure Bastion” in the subnet purpose field. This applies a template with the required settings for the subnet.

Click on “Add” to finish the creation of this subnet.

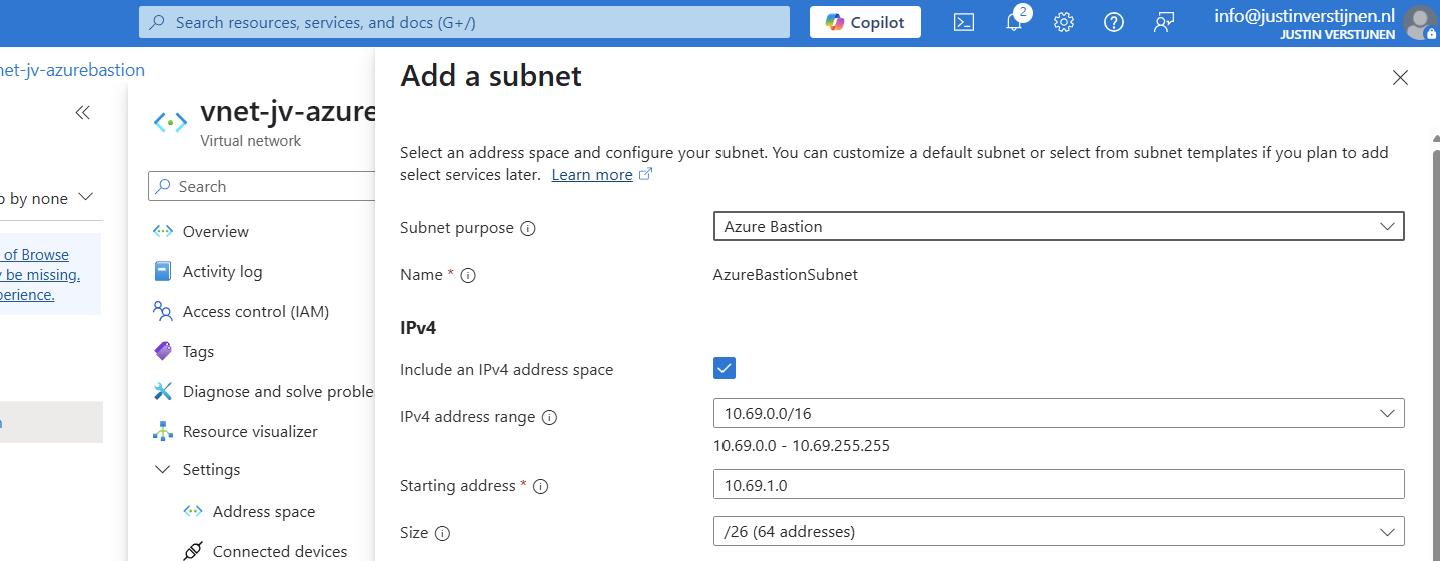

Deploy Azure Bastion instance

Now go back to “Bastions” and we can create a new instance:

Fill in your details and select your Tier (SKU). Then choose the network to place the Bastion instance in. The virtual network and the Bastion instance must be in the same region.

Then create a public IP which the Azure Bastion service uses to form the bridge between internet and your virtual machines.

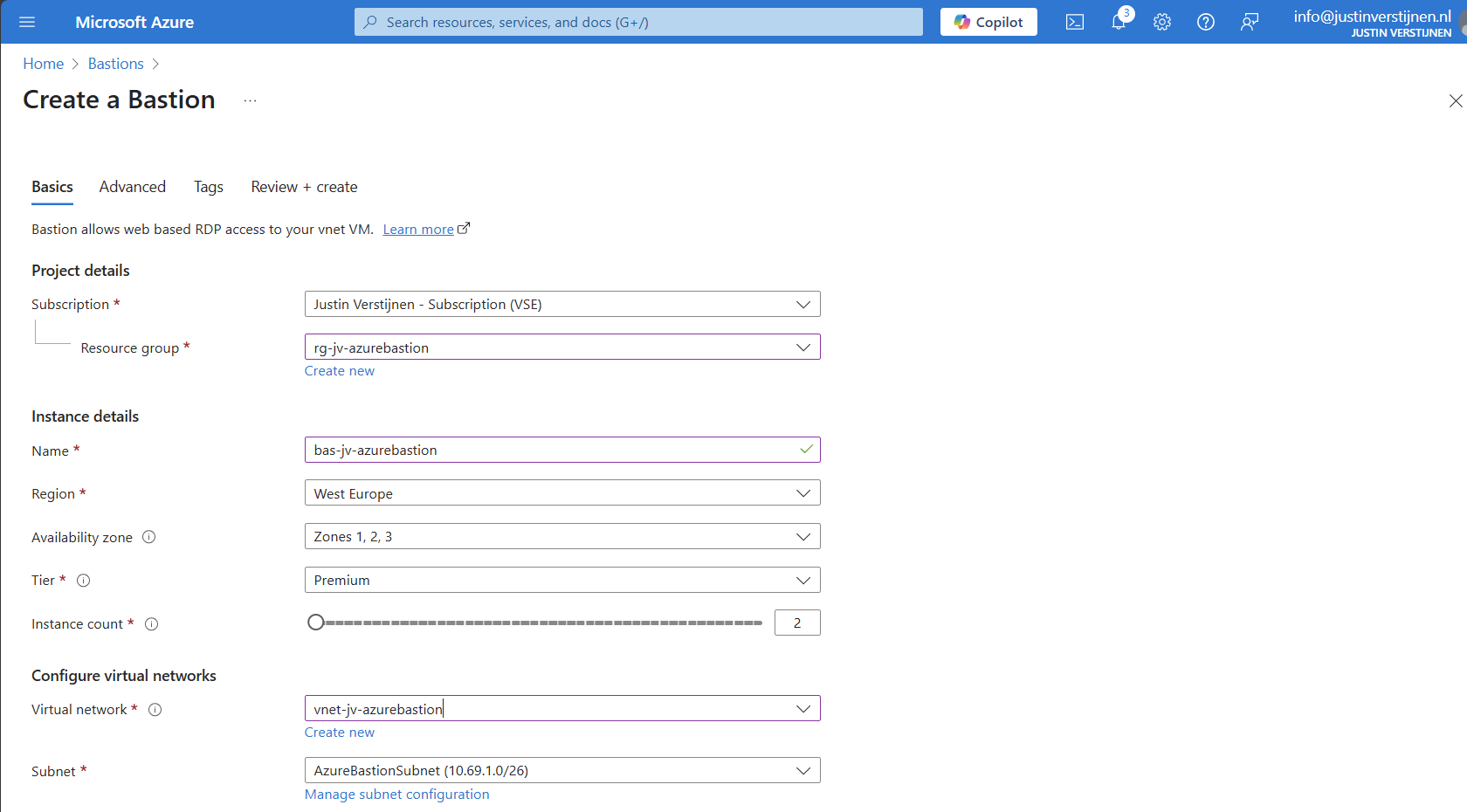

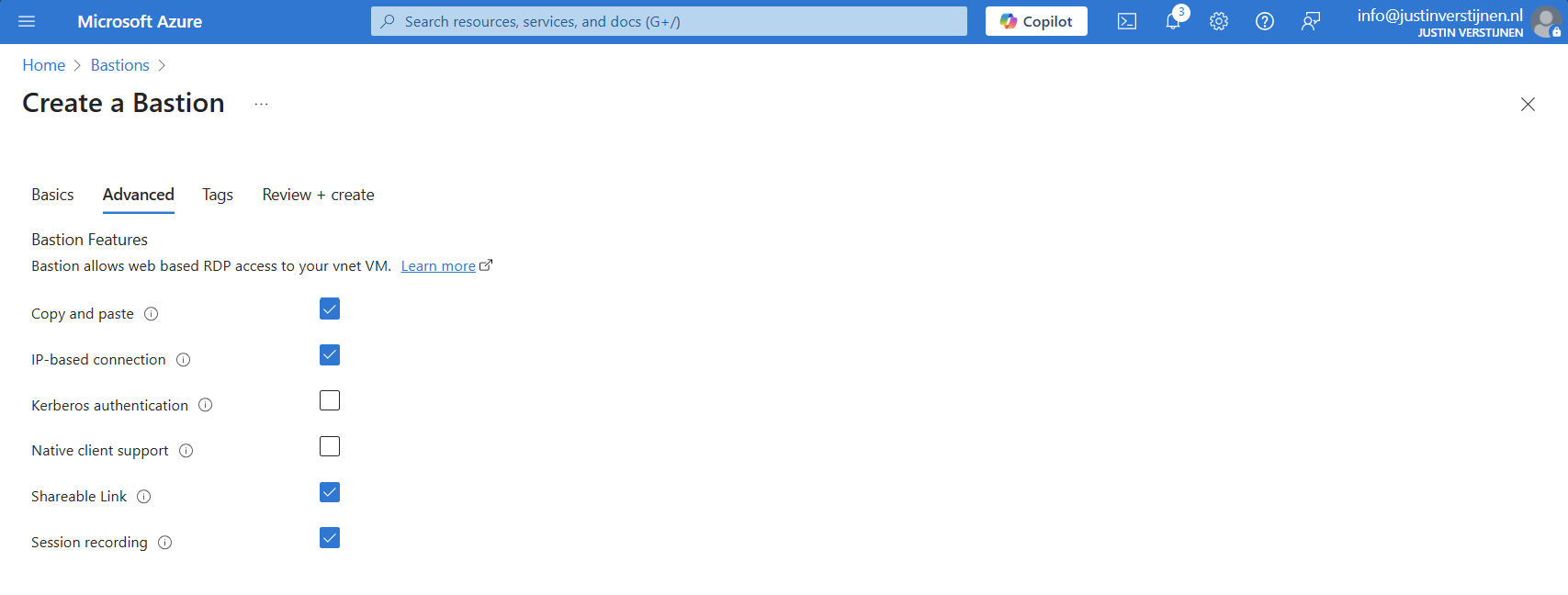

Now we advance to the tab “Advanced” where we can enable some Premium features:

I selected these options for showcasing them in this post.

Now we can deploy the Bastion instance. This will take around 15 minutes.

Alternate way to deploy Bastions

You can also deploy Azure Bastion when creating a virtual network:

However, this option has less control over naming structure and placement. Something we don’t always want :)

Using Azure Bastion

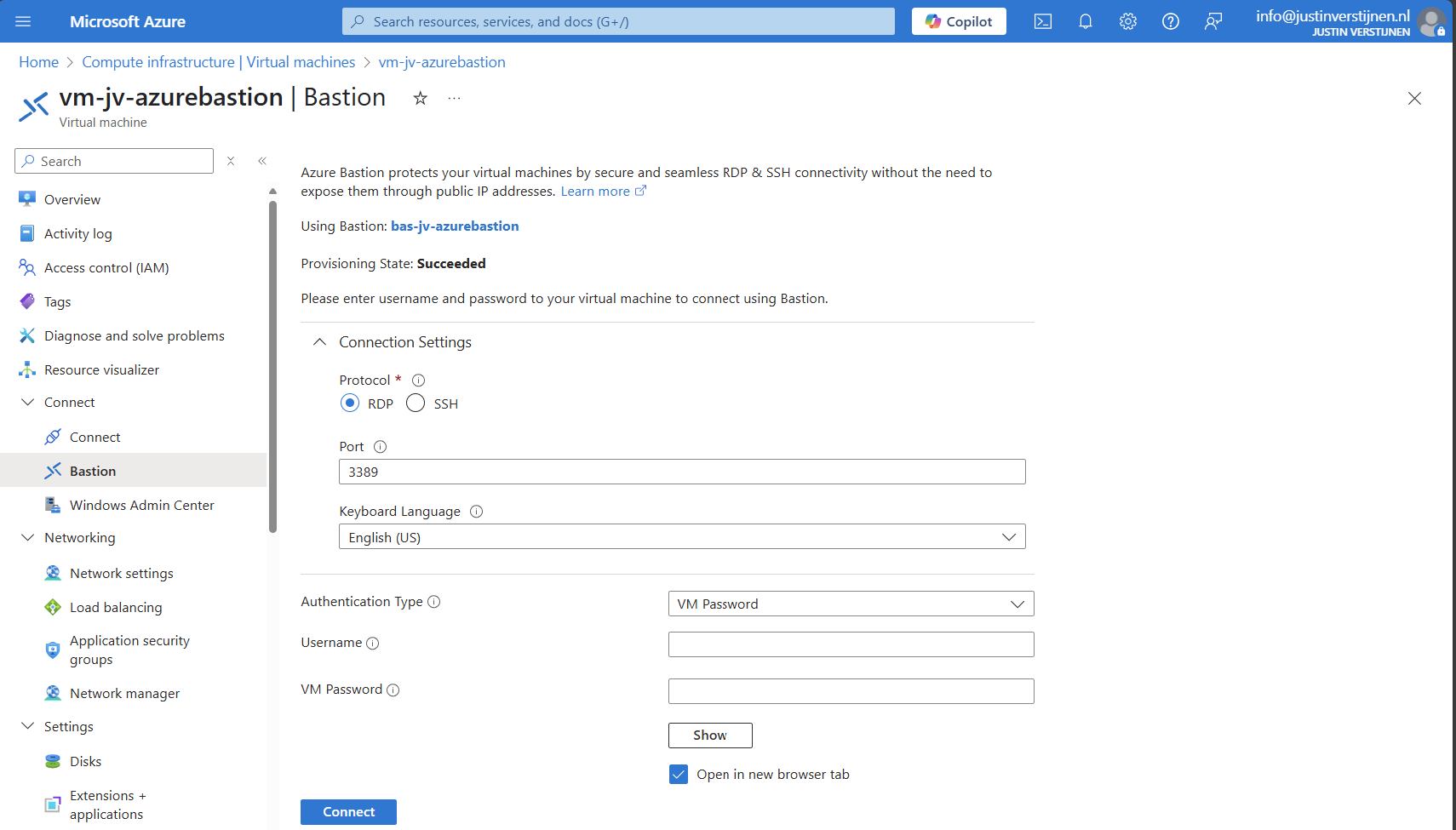

We can now use Azure Bastion by going to the instance itself or going to the VM you want to connect with.

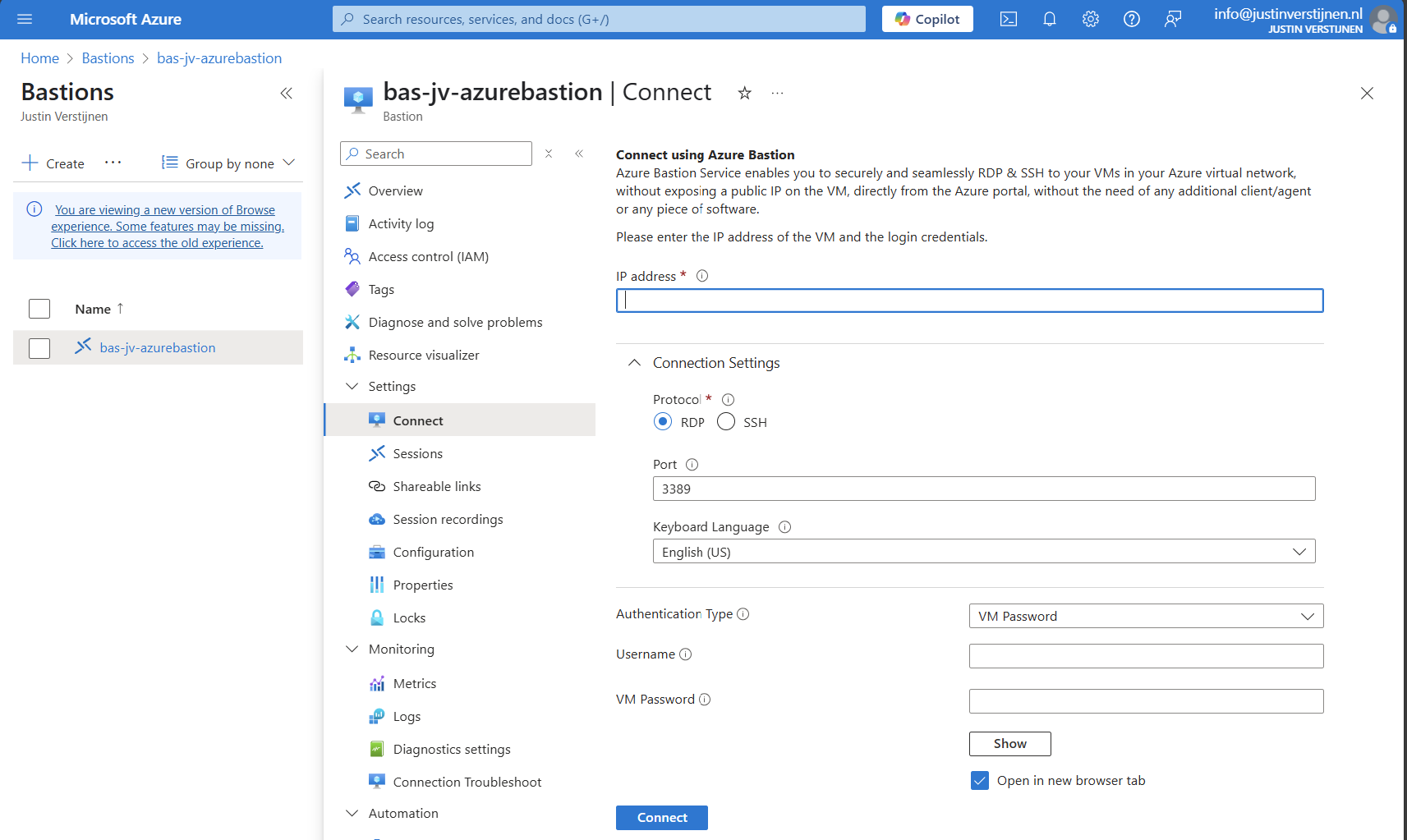

Via instance:

Via virtual machine:

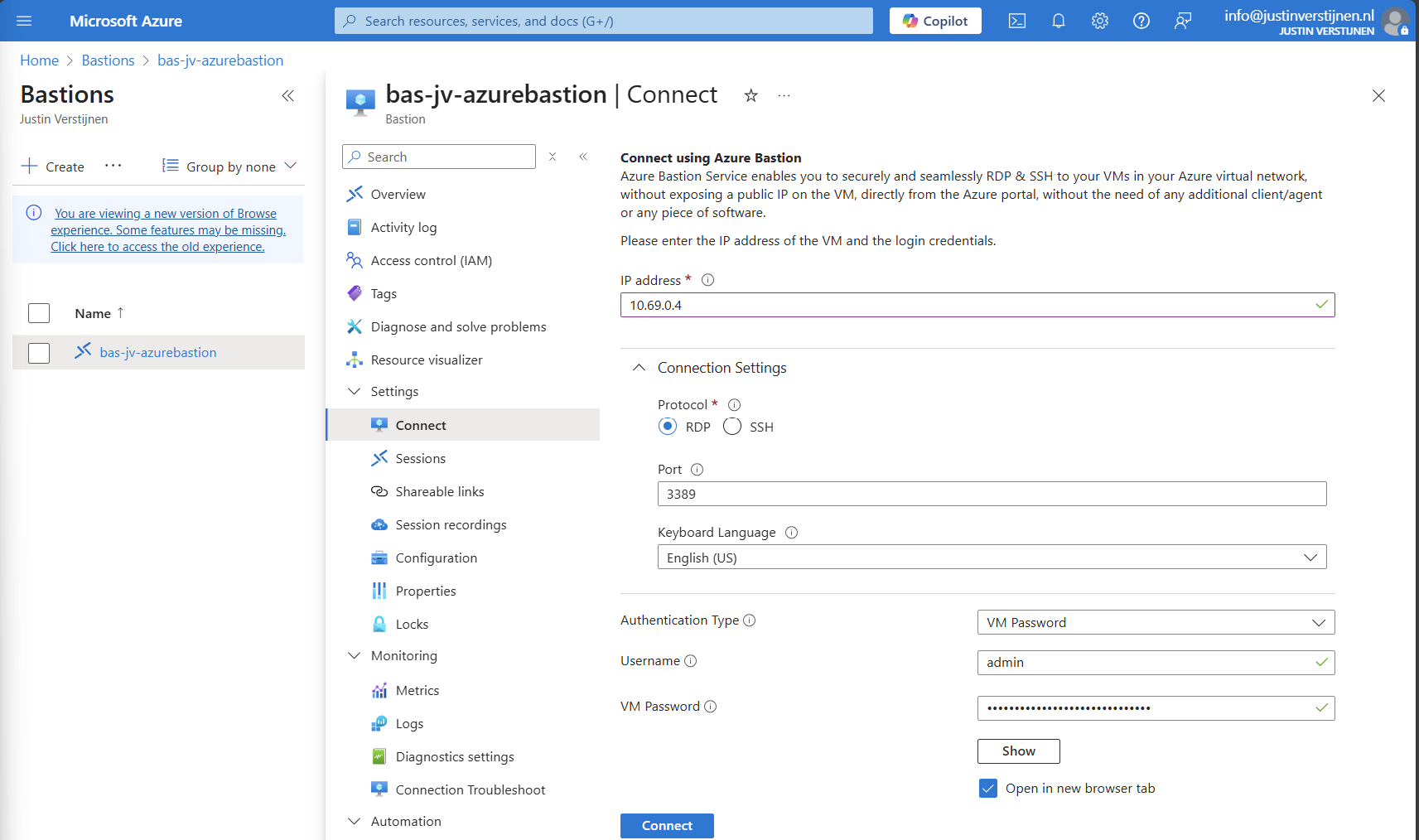

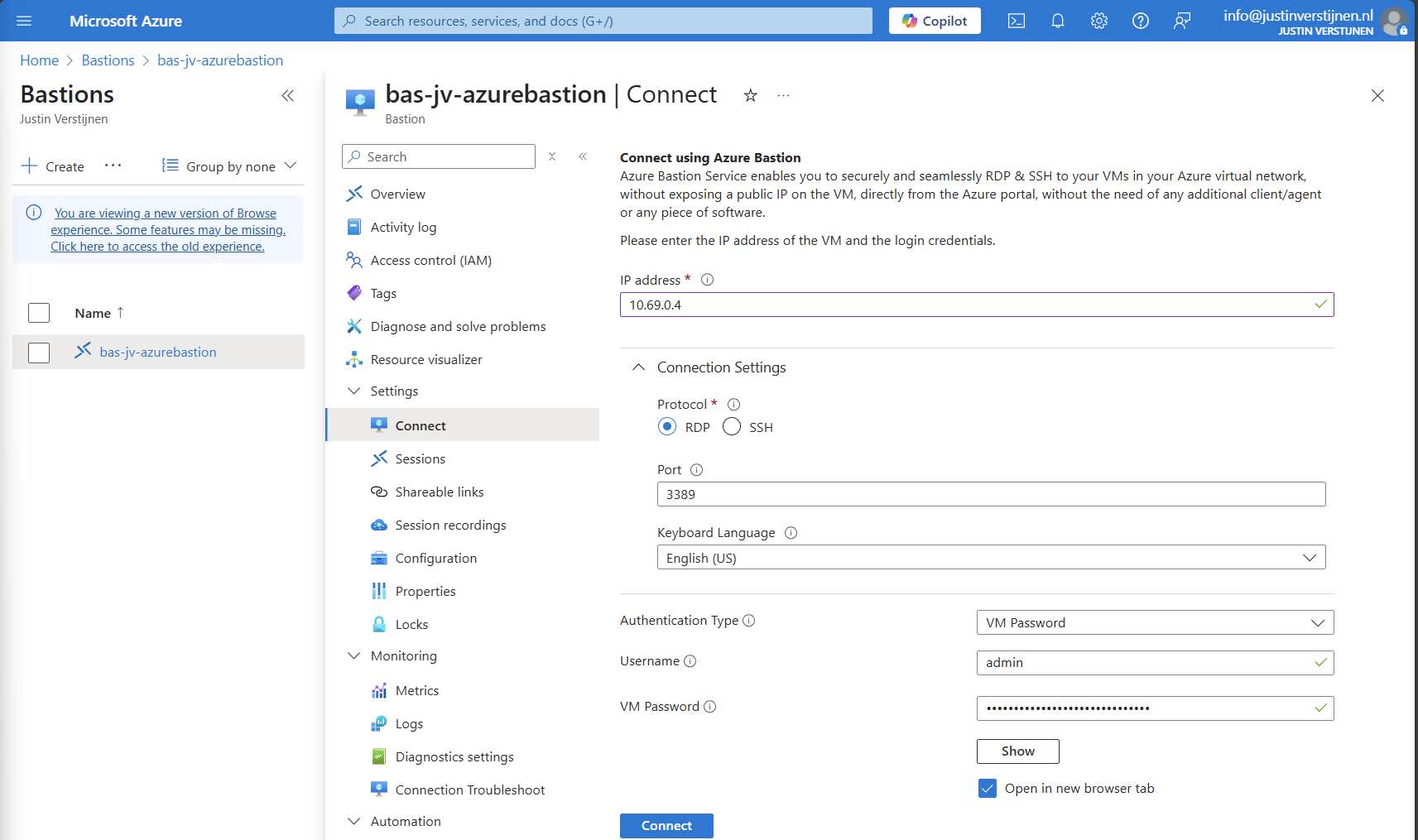

Connecting to virtual machine

We can now connect to a virtual machine. In this case I will use a Windows VM:

Fill in the details like the internal IP address and the username/password. Then click on “Connect”.

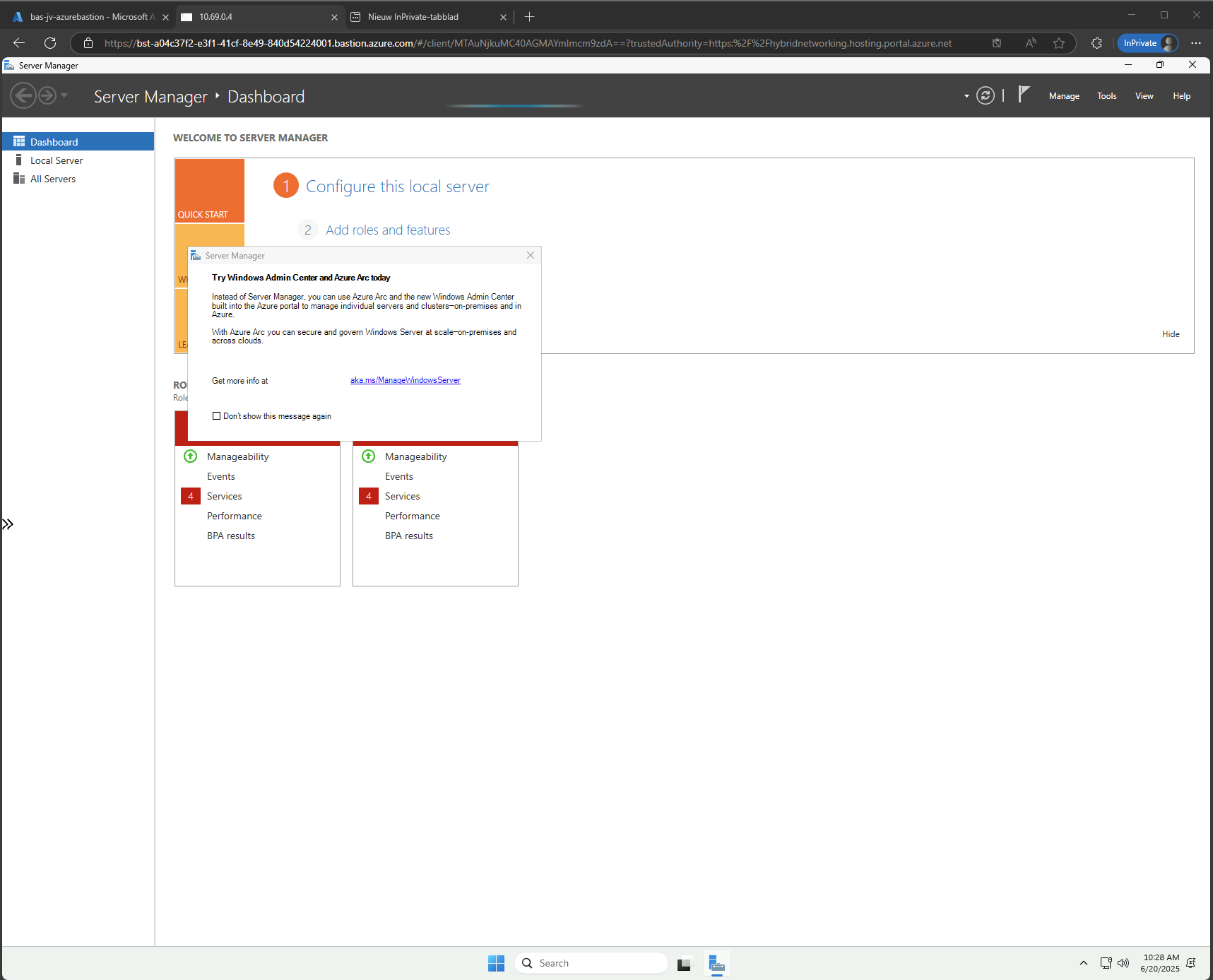

Now we are connected through the browser, without needing to open any ports or to install any applications:

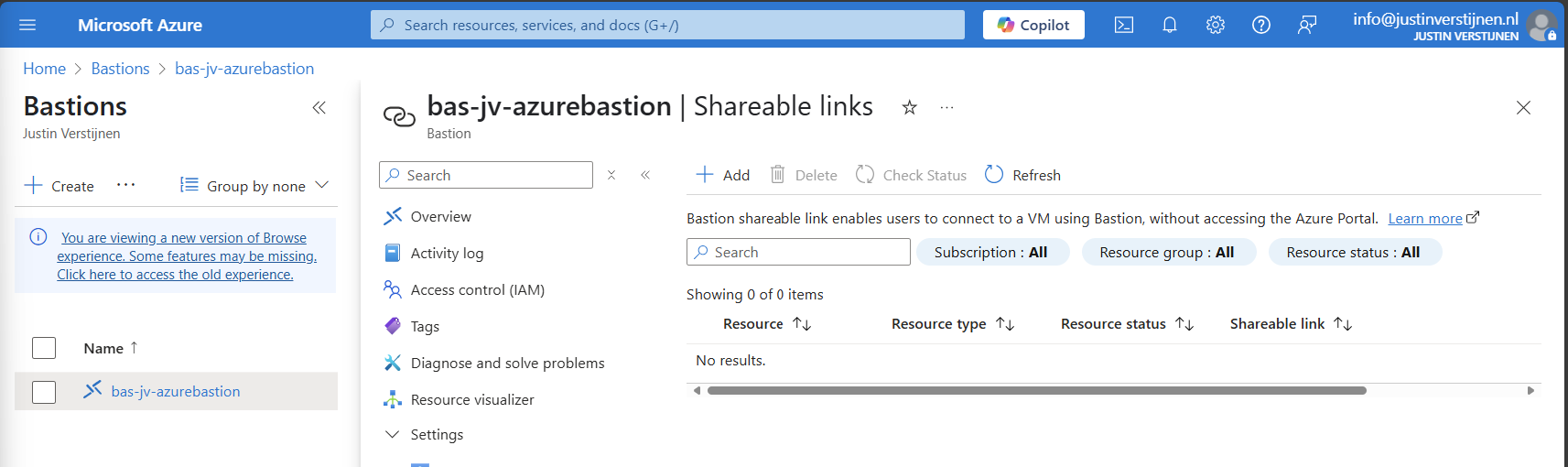

Use shareable links with Azure Bastion (optional)

In Azure Bastion, it’s possible to have shareable links. With these links you can connect to the virtual machine directly from a URL, even without logging into the Azure Portal.

This may decrease the security, so be aware of how you store these links.

In the Azure Bastion instance, open the menu “Shareable links”:

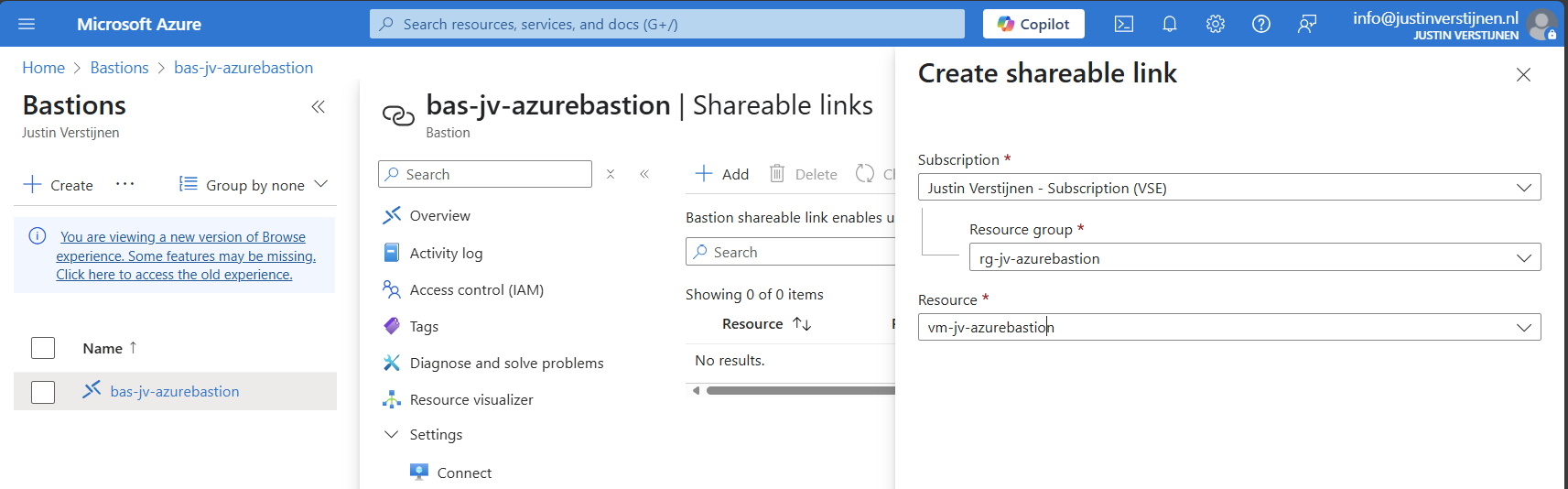

Click on “+ Add”

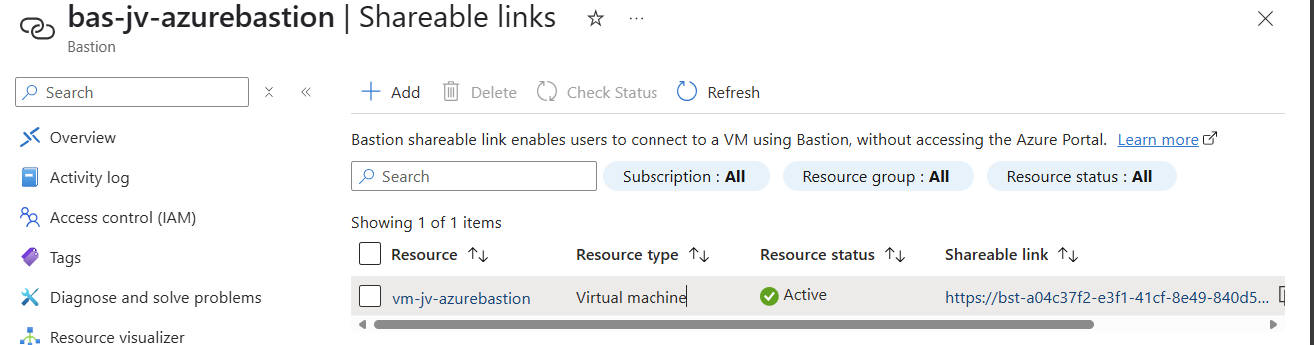

Select the resource group and then the virtual machine you want to share. Click on “Create”.

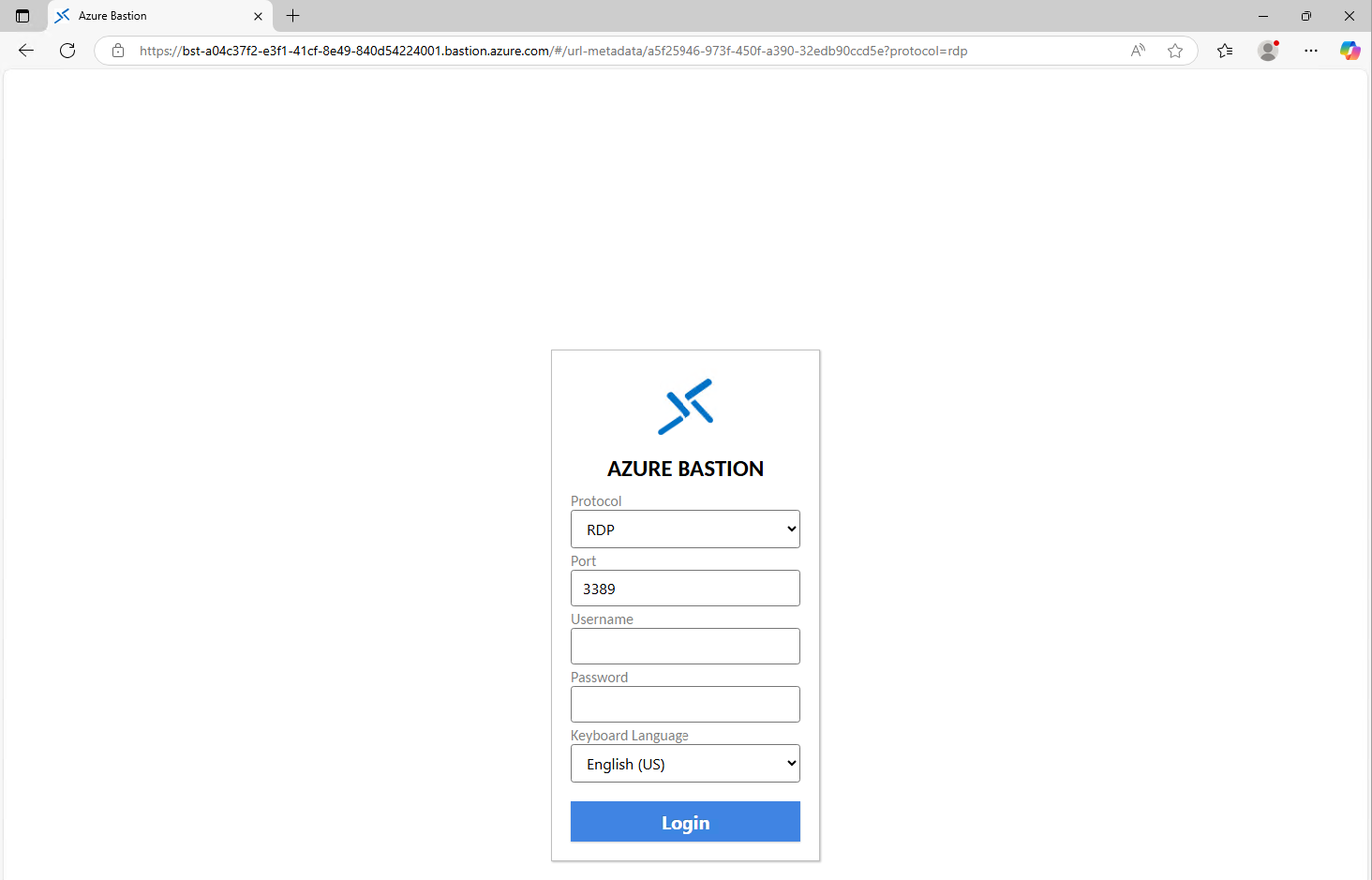

We can now connect to the machine using the shareable link. This looks like this:

Of course you still need to have the credentials and the connection information, but this is less secure than accessing servers via the Azure Portal only. This will expose a login page to the internet, and with the right URL, it’s a matter of time for a hacker to breach your system.

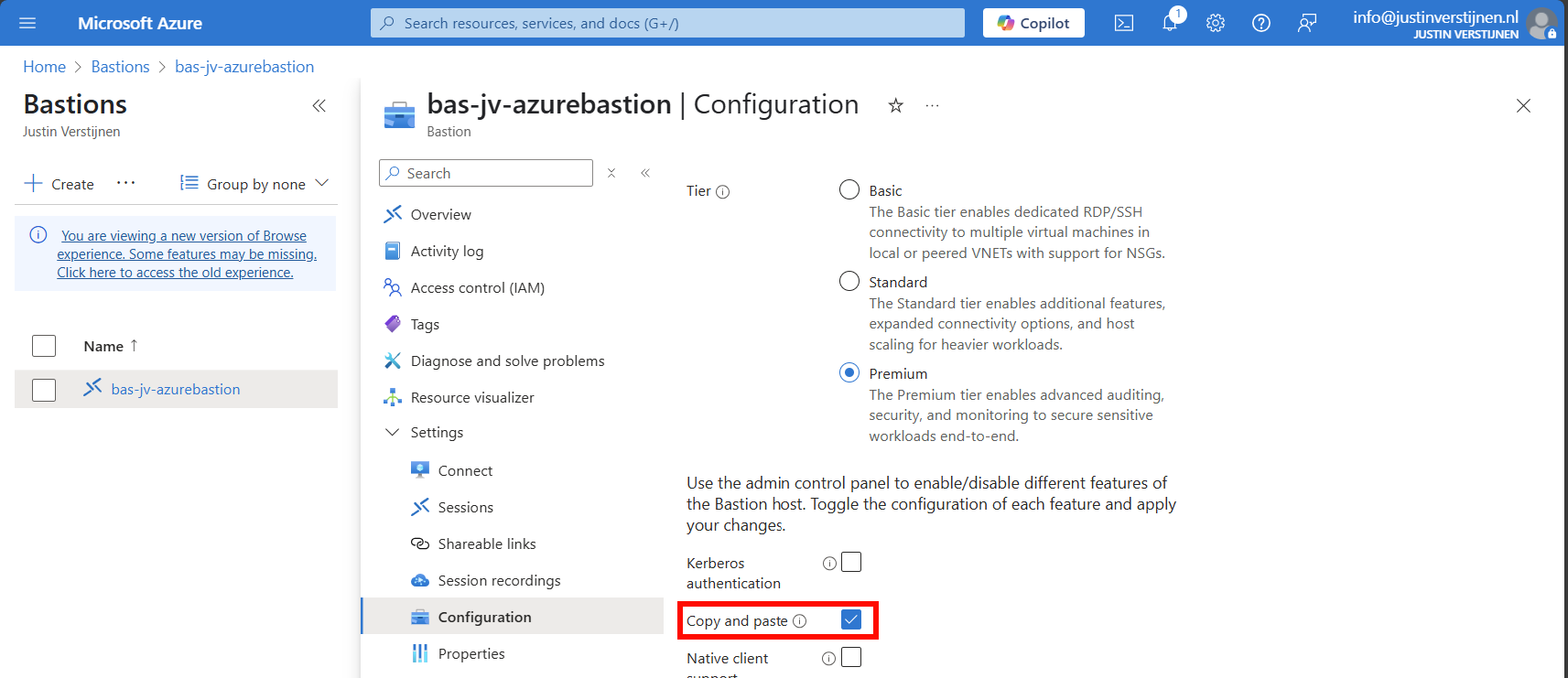

Disable Copy/Paste in sessions (optional)

We also have the option to disable copy/paste functionality in the sessions. This improves the security while decreasing the user experience for the administrators.

You can disable this by deselecting this option above.

Configure session recording (optional)

To configure session recording, we have to create a storage account in Azure where the recordings will be saved. I will guide you through these steps:

- Create a Storage account

- Configure CORS resource sharing

- Create a container

- Create SAS token

- Configure Azure Bastion side

Let’s follow these steps:

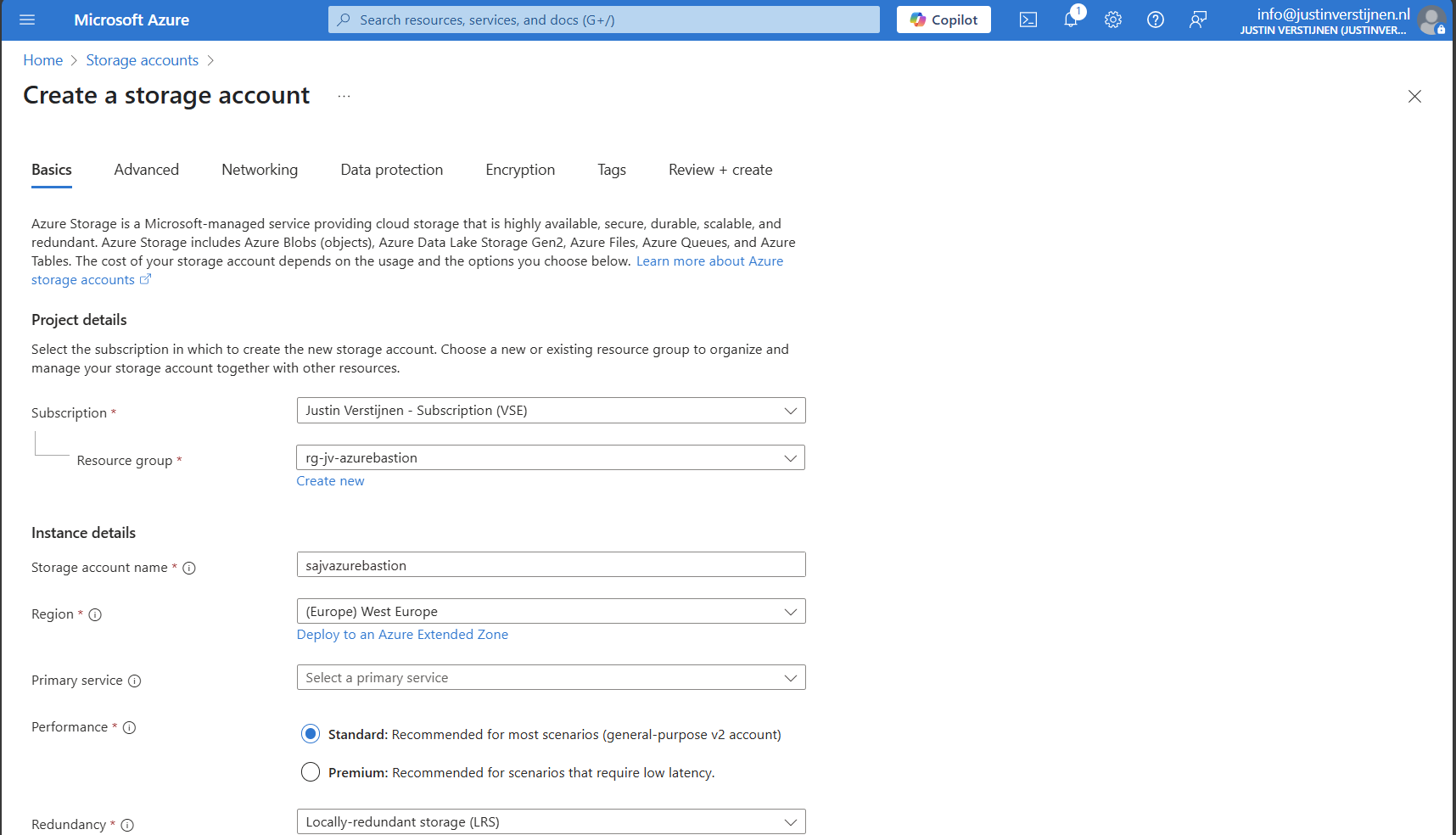

Create storage account

Go to “Storage accounts” and create a new storage account:

Fill in the details on the first page and skip to the deployment as we don’t need to change other settings.

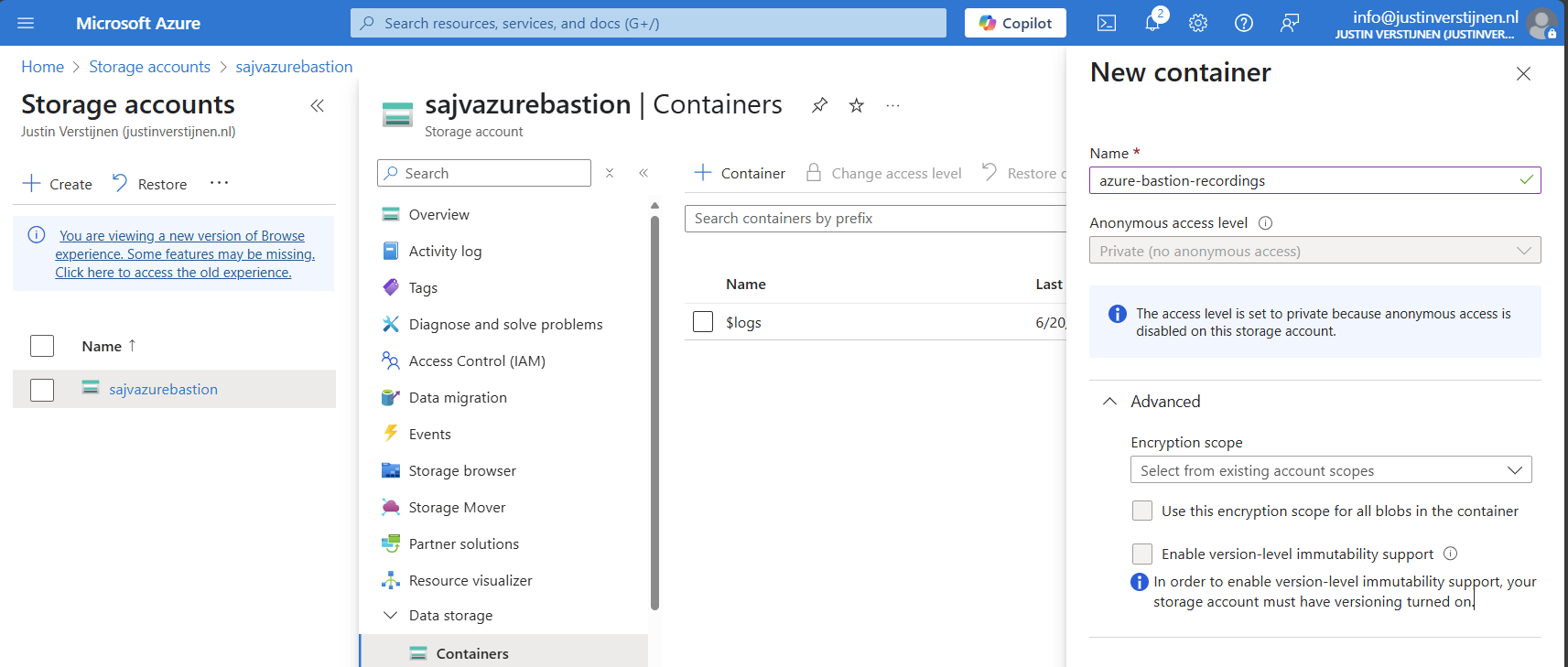

We need to create a container on the storage account, which is a sort of folder/share in Windows terms. Go to the storage account.

Configure CORS resource sharing

We need to configure CORS resource sharing. This is a fancy way of permitting an endpoint (an origin) to use the Blob container. In our case, the endpoint is the Bastion instance.

In the storage account, open the section “Resource sharing (CORS)”

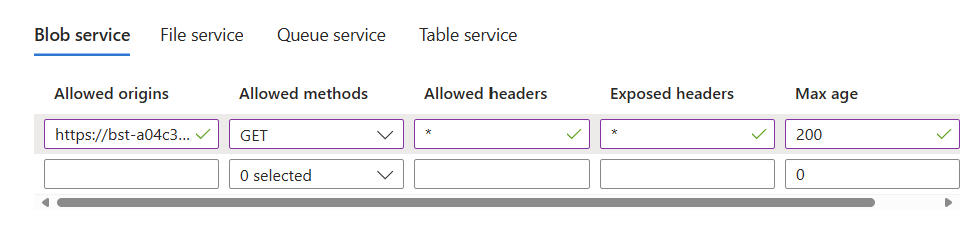

Here fill in the following:

| Allowed origins | Allowed methods | Allowed headers | Exposed headers | Max age |
|---|---|---|---|---|
| Bastion DNS name* | GET | * | * | 86400 |

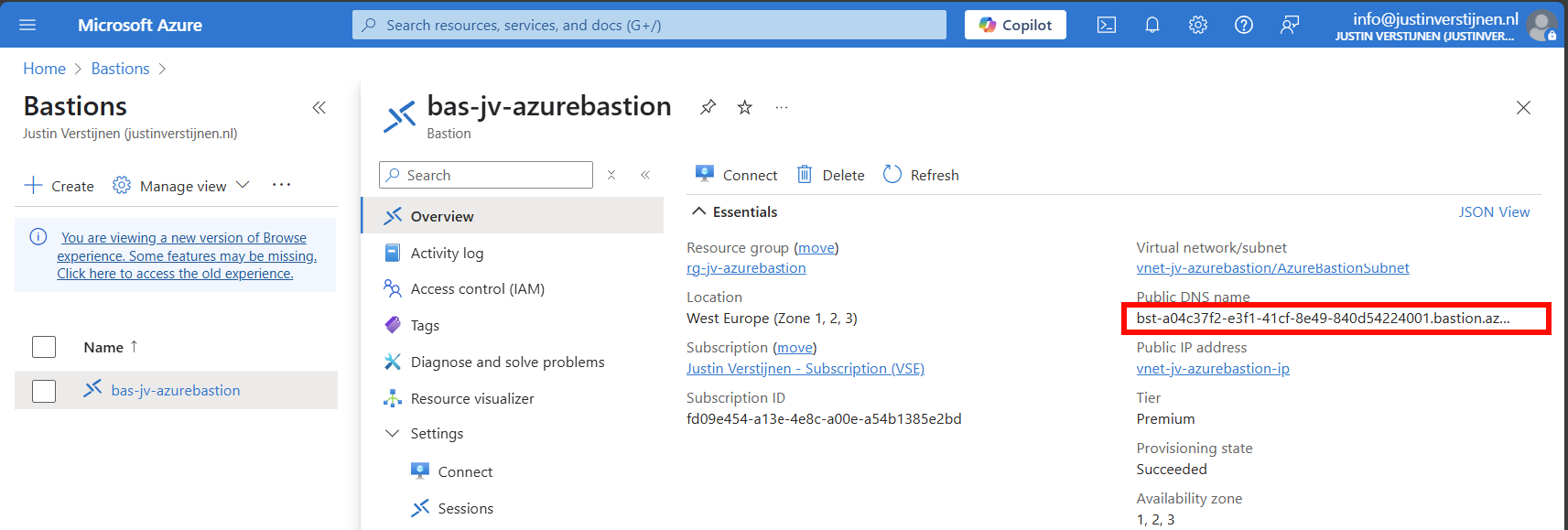

*in my case: https://bst-a04c37f2-e3f1-41cf-8e49-840d54224001.bastion.azure.com

The Bastion DNS name can be found on the homepage of the Azure Bastion instance:

Ensure the CORS settings look like this:

Click on “Save” and we are done with CORS.
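To illustrate what this CORS rule actually permits, here is a simplified Python sketch of the check a browser request has to pass. The `CorsRule` class and `is_allowed` function are hypothetical names for this post, not the Azure SDK types, and real CORS handling involves more (preflight requests and header checks) than this sketch covers.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class CorsRule:
    """Hypothetical local model of the CORS rule from the table above."""
    allowed_origins: Tuple[str, ...]
    allowed_methods: Tuple[str, ...]
    max_age_seconds: int = 86400


def is_allowed(rule: CorsRule, origin: str, method: str) -> bool:
    """Return True when a request from `origin` using `method` passes the rule."""
    origin_ok = "*" in rule.allowed_origins or origin in rule.allowed_origins
    method_ok = method.upper() in rule.allowed_methods
    return origin_ok and method_ok


bastion = "https://bst-a04c37f2-e3f1-41cf-8e49-840d54224001.bastion.azure.com"
rule = CorsRule(allowed_origins=(bastion,), allowed_methods=("GET",))
print(is_allowed(rule, bastion, "GET"))                # True
print(is_allowed(rule, "https://example.com", "GET"))  # False
```

This is also why I entered the exact Bastion DNS name as the allowed origin instead of a wildcard: only the Bastion instance needs to read the recordings.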

Create container

Go to the storage account again and create a new container here:

Create the container and open it.

Create SAS token

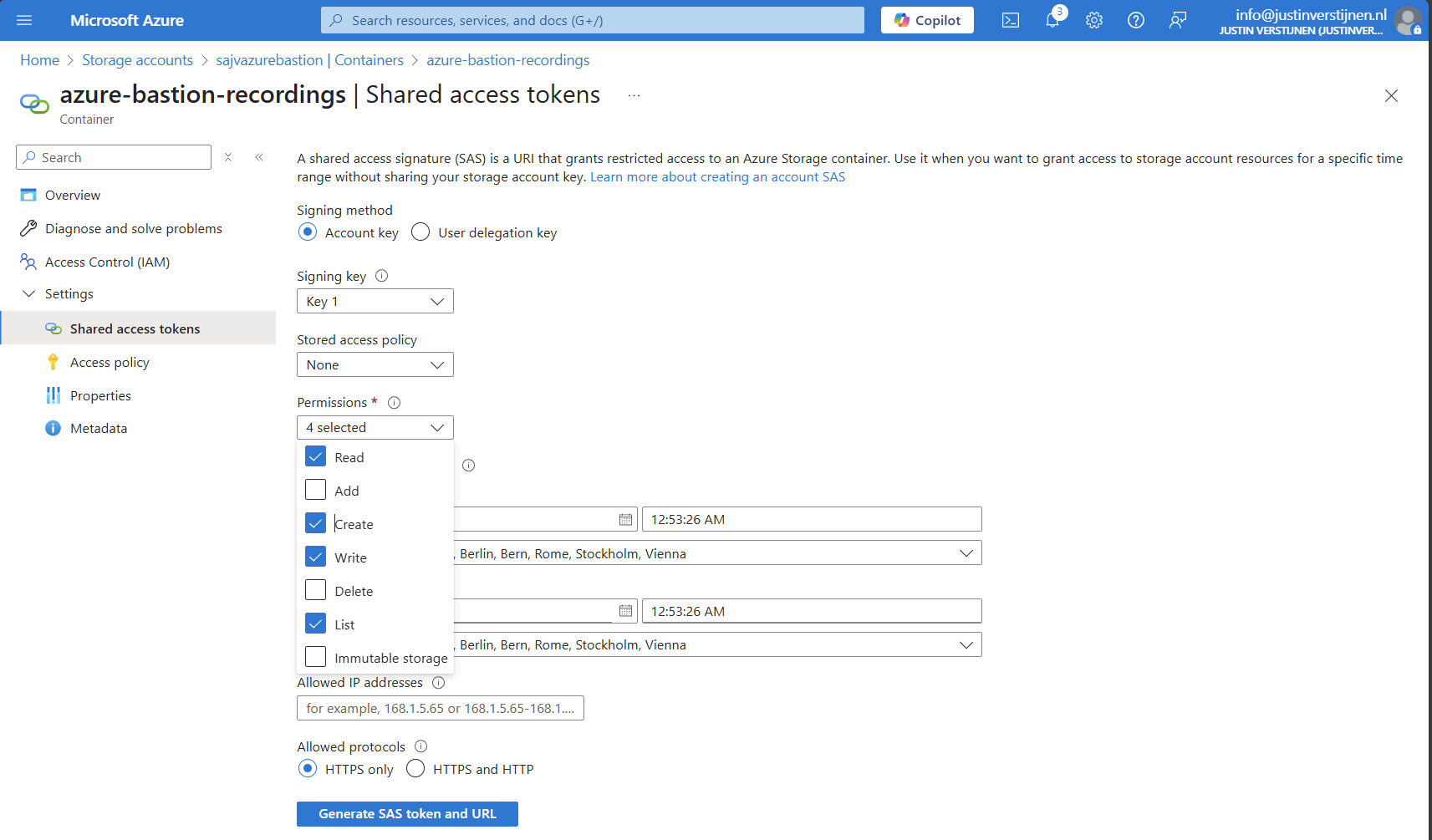

We need to create a Shared Access Signature for the Azure Bastion instance to access our newly created storage account and container.

A Shared Access Signature (SAS) is a granular token which permits limited access to a storage account. It is a token with exactly the permissions that suit your needs, in line with least privilege.

To learn more about SAS tokens: click here

When you have opened the container, open “Shared access tokens”:

- Under permissions, select:

  - Read

  - Create

  - Write

  - List

- Set your timeframe for the access to be active. This has to be active now so we can test the configuration

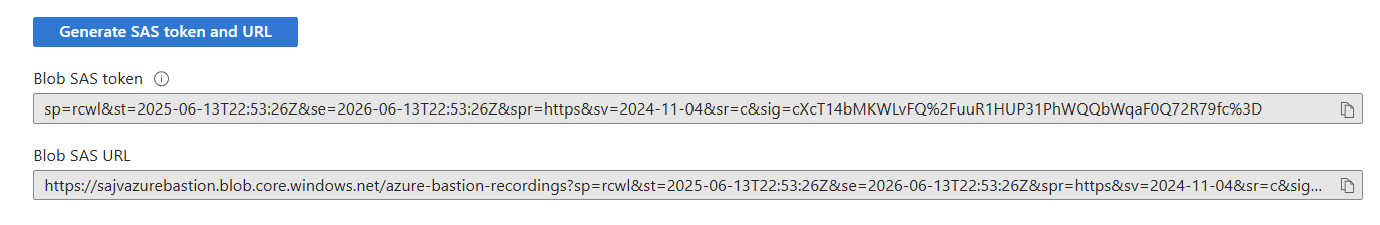

Then click on “Generate SAS token and URL” to generate a URL:

Copy the Blob SAS URL, as we need this in the next step.
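Before pasting the URL somewhere, you can do a quick sanity check on it. A SAS URL carries its permissions and validity window in the query string (`sp` for permissions, `st` for start time, `se` for expiry time), so a minimal Python sketch can read them back. The `check_sas_url` helper, the example URL and the timestamp format are my own assumptions for illustration; the account name, container name and signature below are placeholders.

```python
from datetime import datetime, timezone
from typing import List
from urllib.parse import parse_qs, urlsplit

REQUIRED_PERMISSIONS = set("rcwl")  # Read, Create, Write, List


def parse_time(value: str) -> datetime:
    """Parse a SAS timestamp such as 2025-01-01T00:00:00Z (assumed format) as UTC."""
    return datetime.strptime(value, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)


def check_sas_url(url: str, now: datetime) -> List[str]:
    """Return a list of problems found in the SAS URL; an empty list means it looks fine."""
    query = parse_qs(urlsplit(url).query)
    problems = []
    missing = REQUIRED_PERMISSIONS - set(query.get("sp", [""])[0])
    if missing:
        problems.append("missing permissions: " + "".join(sorted(missing)))
    if "st" in query and parse_time(query["st"][0]) > now:
        problems.append("token is not active yet")
    if "se" in query and parse_time(query["se"][0]) <= now:
        problems.append("token has expired")
    return problems


# Hypothetical URL: the account, container and signature are placeholders.
url = ("https://examplestorage.blob.core.windows.net/recordings"
       "?sp=rcwl&st=2025-01-01T00:00:00Z&se=2025-01-02T00:00:00Z&sr=c&sig=PLACEHOLDER")
print(check_sas_url(url, datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)))
```

Treat the full URL like a password: anyone who has it gets the permissions inside it until the expiry time passes.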

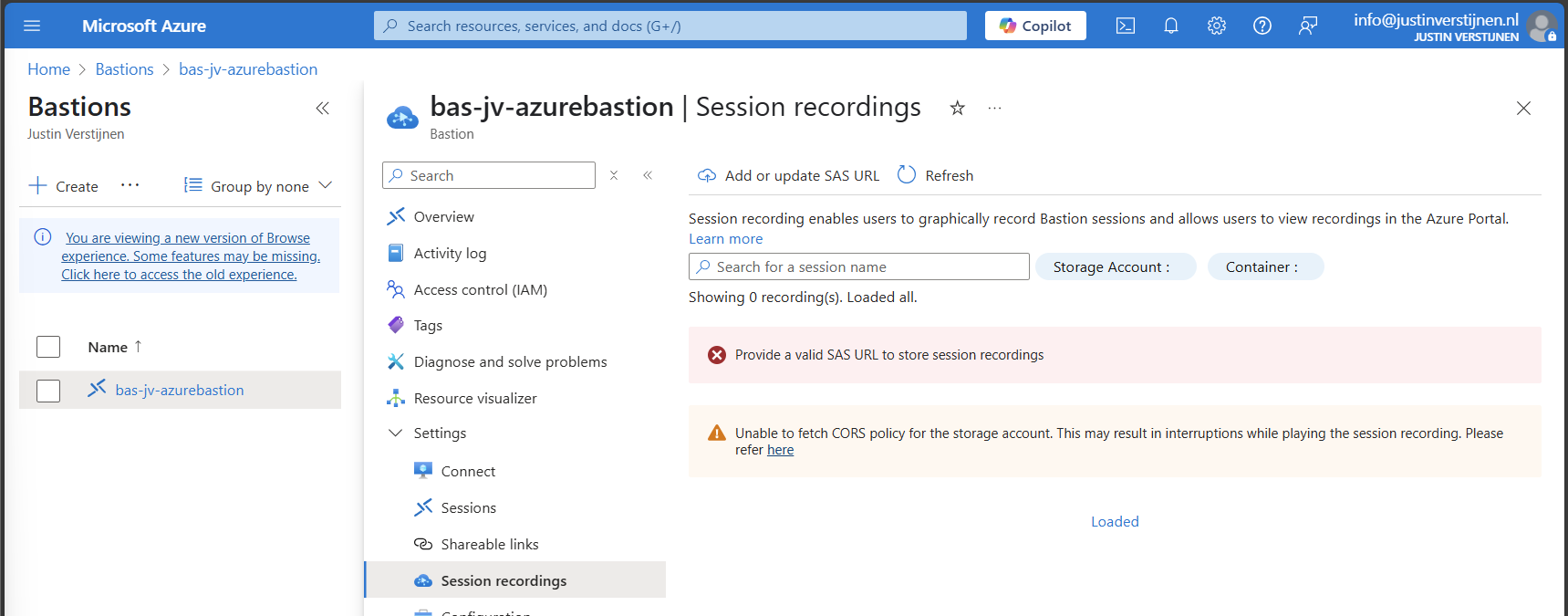

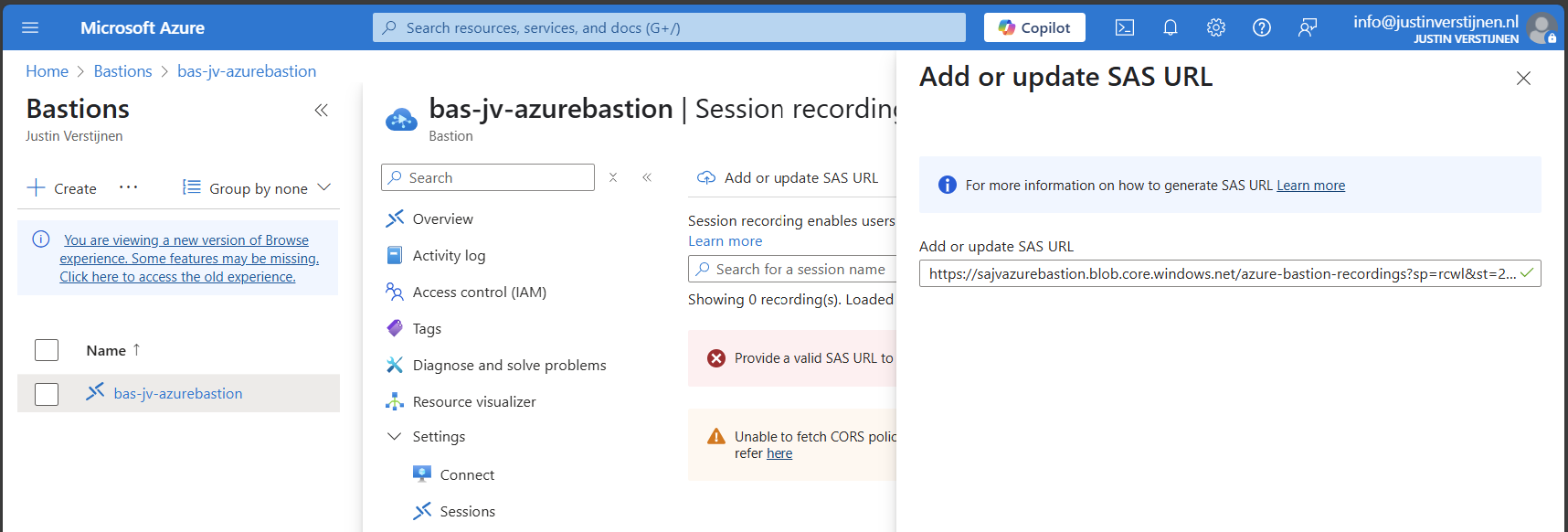

Configure Azure Bastion-side for session recording

We need to paste this URL into Azure Bastion, so that the instance can save the recordings there. Head to the Azure Bastion instance:

Then open the option “Session recordings” and click on “Add or update SAS URL”.

Paste the URL here and click on “Upload”.

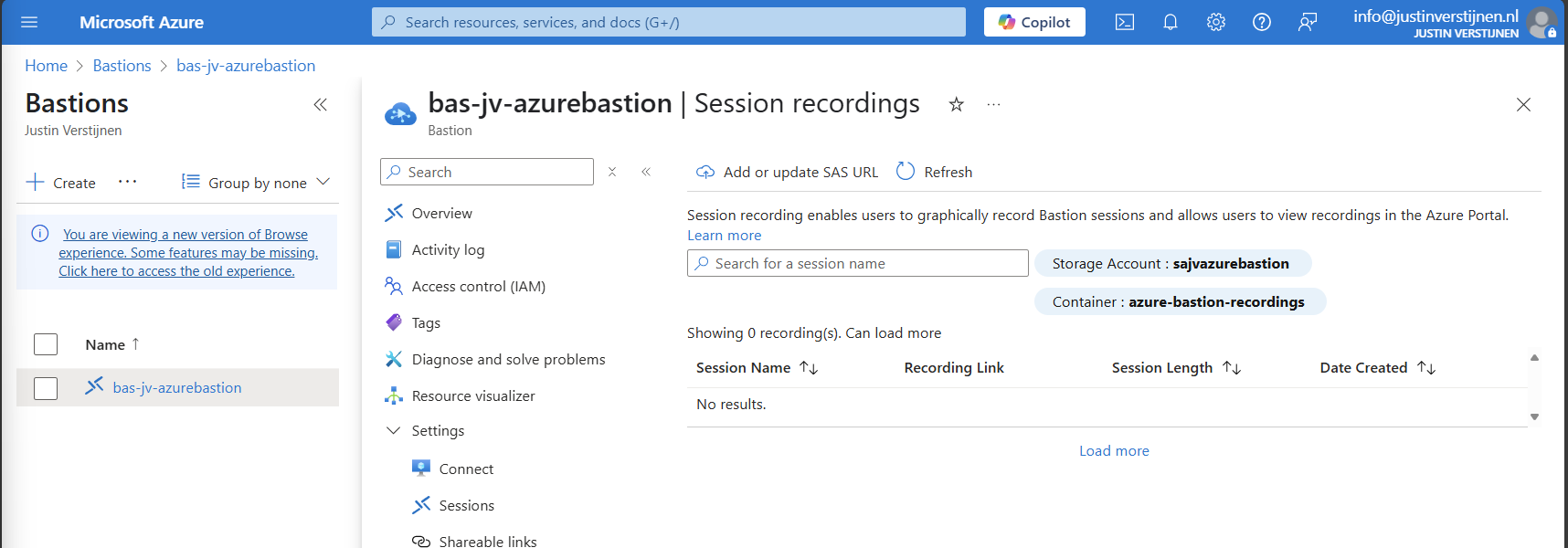

Now the service is successfully configured!

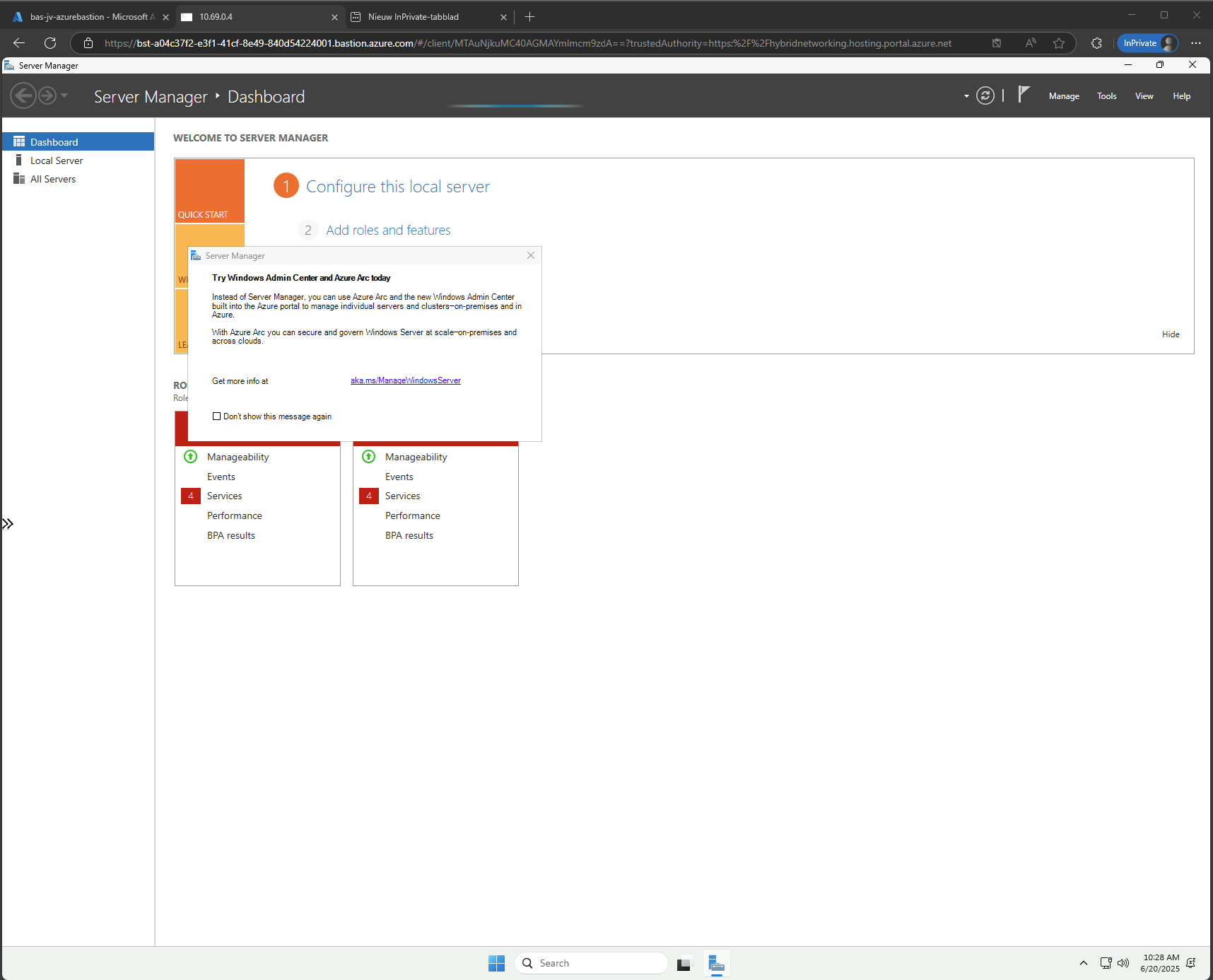

Testing Azure Bastion session recording

Now let’s connect to a VM again by going to the instance:

Now fill in the credentials of the machine to connect with it.

We are once again connected, and this session will be recorded. You can find these recordings in the Session recordings section in the Azure portal. These will be saved after a session is closed.

The recording looks like this; watch me install the IIS role to demonstrate this function. This is a recording that Azure Bastion has made.

Summary

Azure Bastion is a great tool for managing your servers in the cloud without opening sensitive TCP/IP ports to the internet. It can also be really useful as a jump server.

In my opinion it is relatively expensive, especially for smaller environments because for the price of a basic instance we can configure a great Windows MGMT server where we have all our tools installed.

For bigger environments where security is a number one priority and money a much lower priority, this is a must-use tool and I really recommend it.

Sources:

- https://learn.microsoft.com/en-us/azure/bastion/bastion-overview

- https://azure.microsoft.com/nl-nl/pricing/details/azure-bastion?cdn=disable

- https://justinverstijnen.nl/amc-module-6-networking-in-microsoft-azure/#azure-bastion

Thank you for reading this post and I hope it was helpful.

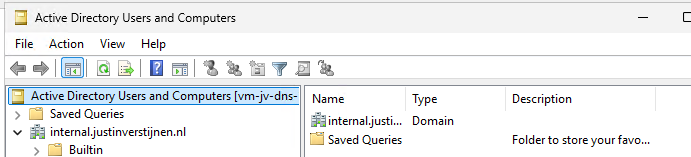

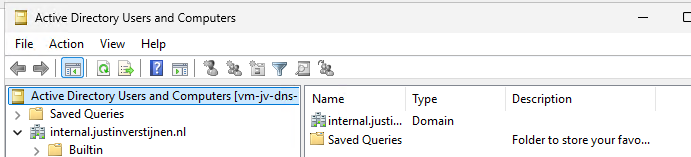

I tried running Active Directory DNS on Azure Private DNS

The configuration explained

The configuration in this blog post is a virtual network with one server and one client. In the virtual network, we will deploy an Azure Private DNS zone, and that zone will handle all DNS in our network.

This looks like this:

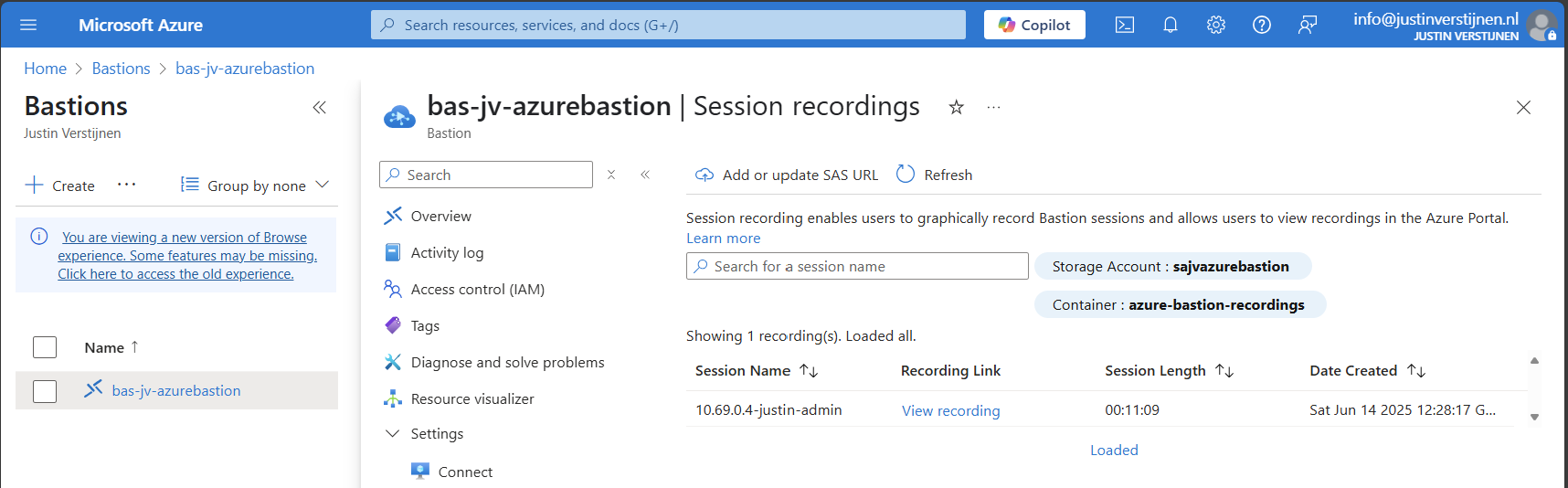

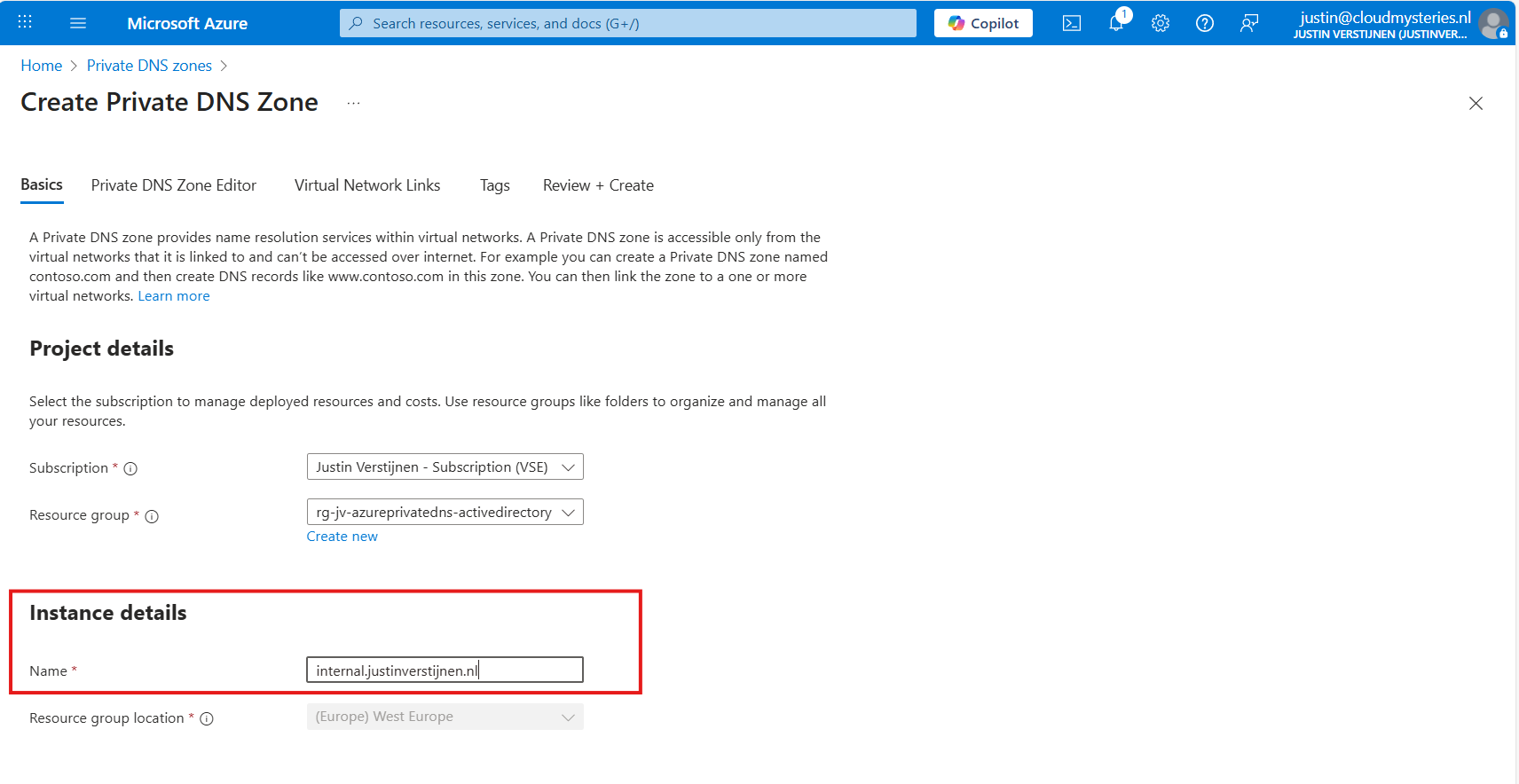

Deploying Azure Private DNS

Assuming you have everything already in place, we will now deploy our Azure Private DNS zone. Open the Azure Portal and search for “Private DNS zones”.

Create a new DNS zone here.

Place it in the right resource group and enter your desired domain name as the zone name. If you actually want to link your Active Directory, this must be the same as your Active Directory domain name.

In my case, I will name it internal.justinverstijnen.nl

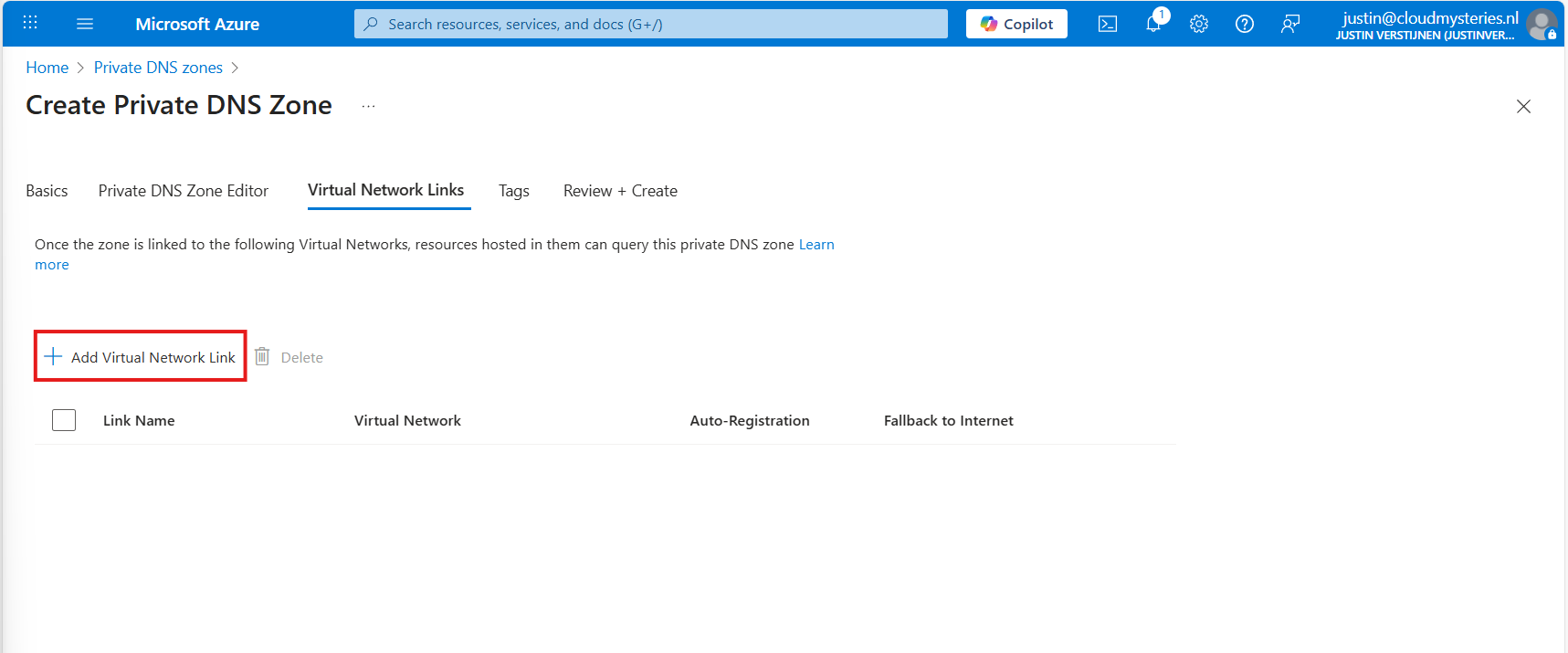

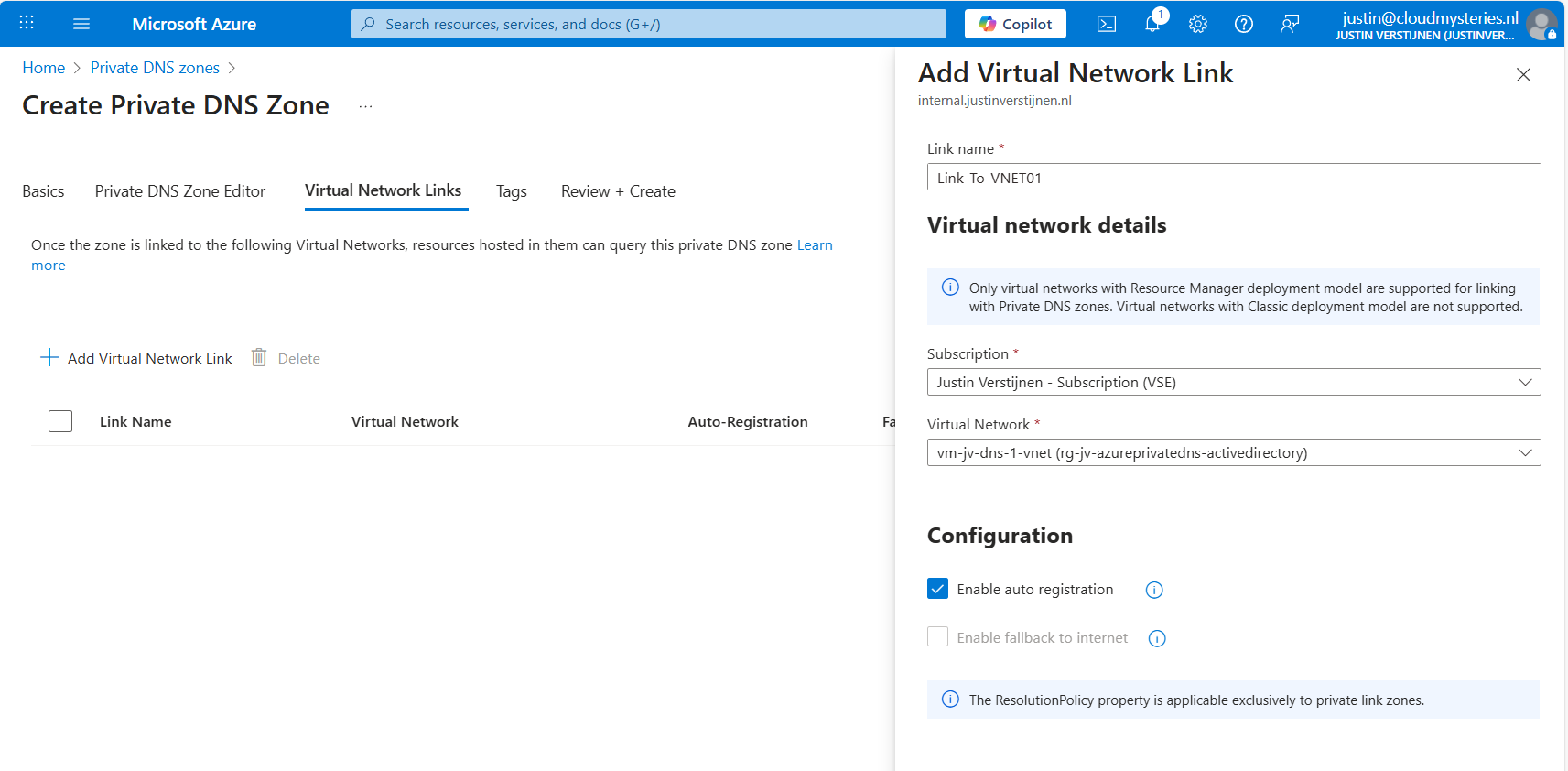

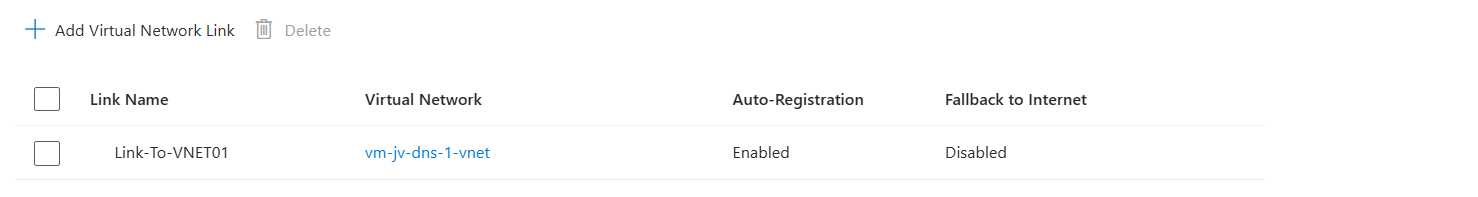

Link the DNS zone to your network

Advance to the “Virtual Network Links” tab. Here we have to link the virtual network that contains Active Directory:

Give the link a name and select the right virtual network.

You can enable “Auto registration” here. This means every VM in the network will be automatically registered in this DNS zone. In my case, I enabled it. This saves us from having to create records by hand later on.

Advance to the “Review + create” tab and create the DNS zone.

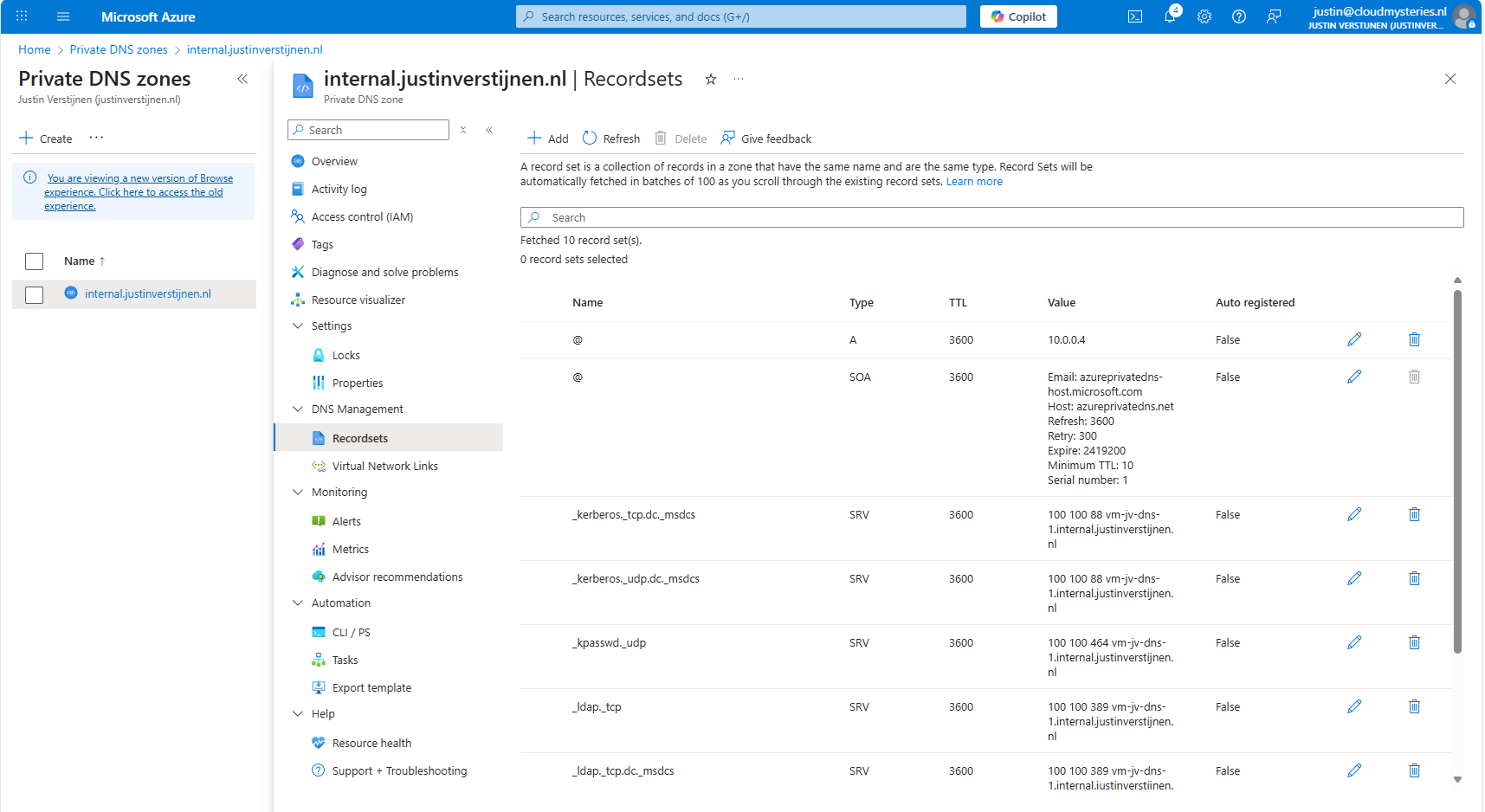

Creating the required DNS records

For Active Directory to work, we need to create a set of DNS records. Active Directory relies heavily on DNS, not only for A records but also for SRV and NS records. I used priority and weight 100 for all SRV records.

| Record name | Type | Target | Port | Protocol |
|---|---|---|---|---|
| _ldap._tcp.dc._msdcs.internal.justinverstijnen.nl | SRV | vm-jv-dns-1.internal.justinverstijnen.nl | 389 | TCP |
| _ldap._tcp.internal.justinverstijnen.nl | SRV | vm-jv-dns-1.internal.justinverstijnen.nl | 389 | TCP |
| _kerberos._tcp.dc._msdcs.internal.justinverstijnen.nl | SRV | vm-jv-dns-1.internal.justinverstijnen.nl | 88 | TCP |
| _kerberos._udp.dc._msdcs.internal.justinverstijnen.nl | SRV | vm-jv-dns-1.internal.justinverstijnen.nl | 88 | UDP |
| _kpasswd._udp.internal.justinverstijnen.nl | SRV | vm-jv-dns-1.internal.justinverstijnen.nl | 464 | UDP |
| _ldap._tcp.pdc._msdcs.internal.justinverstijnen.nl | SRV | vm-jv-dns-1.internal.justinverstijnen.nl | 389 | TCP |
| vm-jv-dns-1.internal.justinverstijnen.nl | A | 10.0.0.4 | - | - |
| @ | A | 10.0.0.4 | - | - |

After creating those records in Private DNS, the list looks like this:

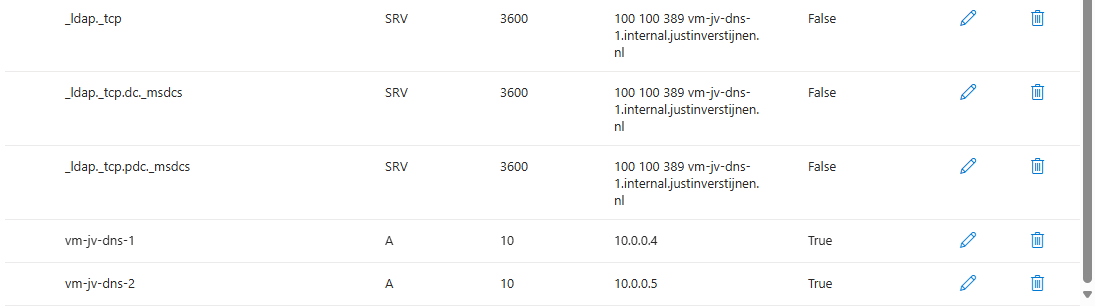
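The SRV rows in the table all follow the same pattern: a service prefix, the domain name, and the domain controller as target. As a rough sketch (not a tool used in this post), the list can be generated in Python so that adding another domain controller does not mean retyping every record. The class and function names are made up for this illustration, and the output mirrors the full record names from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SrvRecord:
    name: str      # full record name, as written in the table
    target: str    # host name of the domain controller
    port: int
    priority: int = 100  # the post uses 100 for every SRV record
    weight: int = 100


# (service prefix, port) pairs copied from the table in this post.
AD_SRV_TEMPLATES = [
    ("_ldap._tcp.dc._msdcs", 389),
    ("_ldap._tcp", 389),
    ("_kerberos._tcp.dc._msdcs", 88),
    ("_kerberos._udp.dc._msdcs", 88),
    ("_kpasswd._udp", 464),
    ("_ldap._tcp.pdc._msdcs", 389),
]


def build_ad_srv_records(domain: str, dc_host: str) -> list[SrvRecord]:
    """Return one SRV record per template, all pointing at the same domain controller."""
    target = f"{dc_host}.{domain}"
    return [SrvRecord(f"{prefix}.{domain}", target, port) for prefix, port in AD_SRV_TEMPLATES]


records = build_ad_srv_records("internal.justinverstijnen.nl", "vm-jv-dns-1")
for record in records:
    print(record.priority, record.weight, record.port, record.name, "->", record.target)
```

Calling the function once per domain controller gives the full set of records that would have to be created by hand in the zone.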

Joining a second virtual machine to the domain

Now I headed over to my second machine, did some connectivity tests and tried to join the machine to the domain, which worked instantly:

After restarting, no errors occurred on the newly domain-joined machine, and I was even able to reach some Active Directory related services.

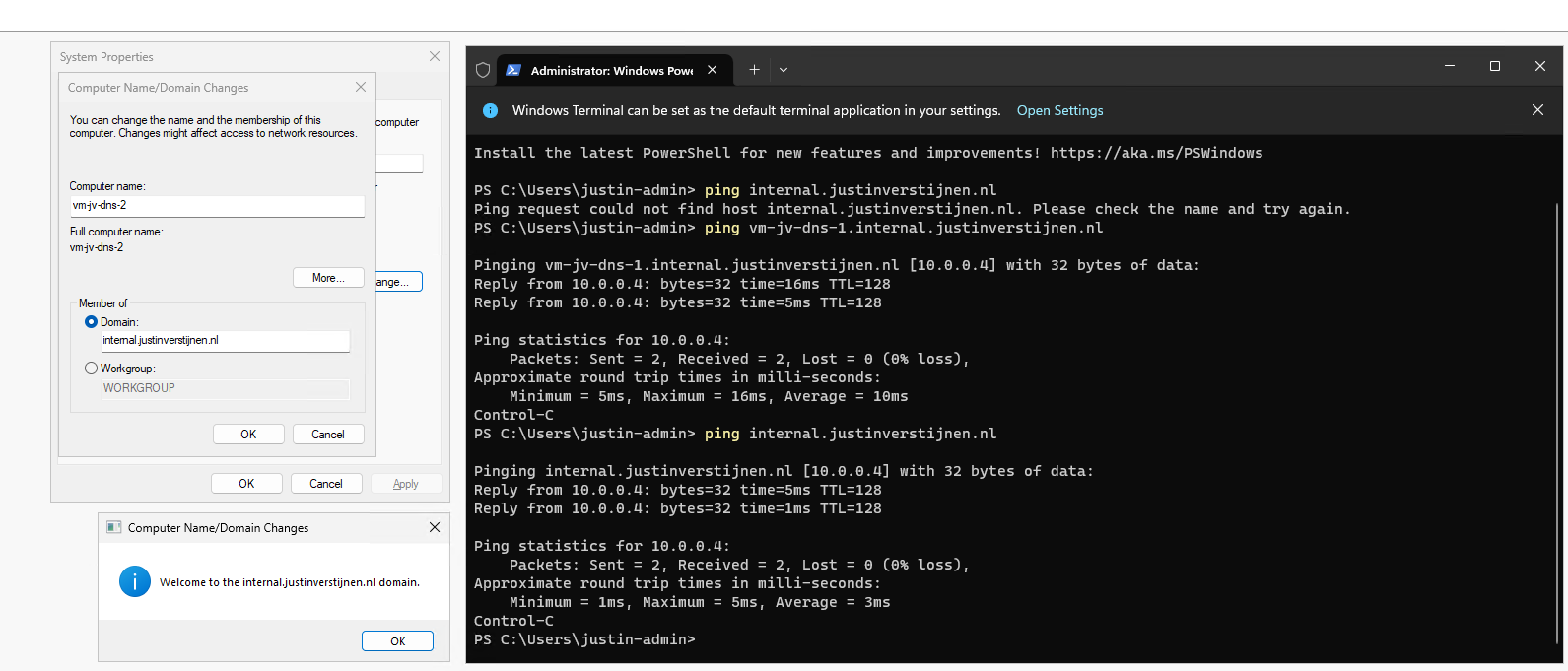

The ultimate test

To 100% ensure that this works, I will install the Administration tools for Active Directory on the second server:

And I can create everything just as it is supposed to work. Really cool :)

Summary

Although this option may work flawlessly, I still don’t recommend it in any production environment. The extra redundancy is cool but it comes with extra administrative overhead. Every domain controller or DNS server for the domain must be added manually into the DNS zone.

The better option is to still use the Active Directory built-in DNS or Entra Domain Services and ensure this has the highest uptime possible by using availability zones.

Sources

These sources helped me with writing and researching this post:

- https://learn.microsoft.com/en-us/windows-server/identity/ad-ds/plan/integrating-ad-ds-into-an-existing-dns-infrastructure

- https://learn.microsoft.com/en-us/previous-versions/windows/it-pro/windows-server-2003/cc738266(v=ws.10)

- https://learn.microsoft.com/en-us/azure/dns/private-dns-overview


ARM templates and Azure VM + Script deployment

In this post I will show some examples of deploying with ARM templates, and I will also show you how to deploy a PowerShell script that runs directly after the deployment of a virtual machine. This further helps automating your tasks.

Requirements

- Around 30 minutes of your time

- An Azure subscription to deploy resources (if wanting to follow the guide)

- A GitHub account, Azure Storage account or other hosting option to publish PowerShell scripts to a URL

- Basic knowledge of Azure

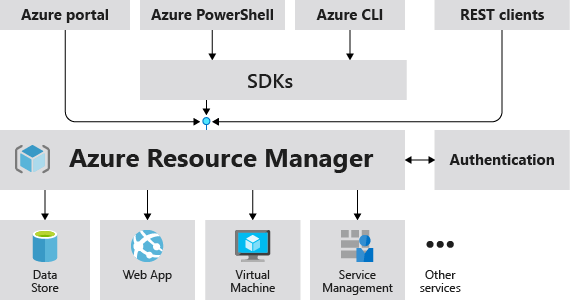

What is ARM?

ARM stands for Azure Resource Manager and is the underlying API for everything you deploy, change and manage in the Azure Portal, Azure PowerShell and Azure CLI. A basic overview of ARM is shown in this picture:

I will not go very deep into Azure Resource Manager, as you can read more about it on the Microsoft site: https://learn.microsoft.com/en-us/azure/azure-resource-manager/management/overview

Creating copies of a virtual machine with ARM

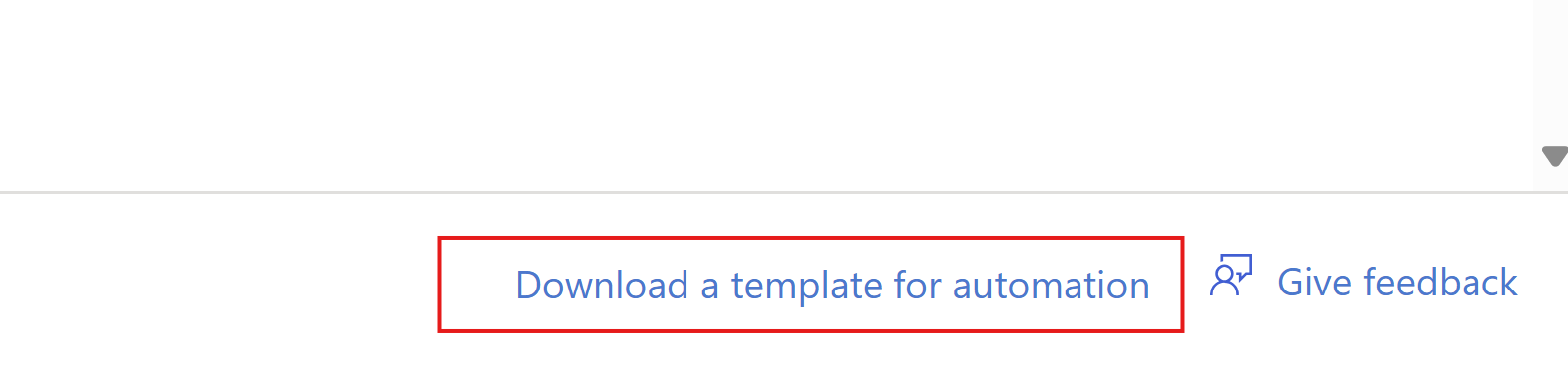

Now ARM allows us to create our own templates for deploying resources by defining a resource first, and then by clicking this link on the last page, just before deployment:

Then click “Download”.

This downloads a ZIP file with 2 files:

- Template.json: This file contains the resources that are going to be deployed.

- Parameters.json: This file contains the parameters of the resources that are going to be deployed, like VM name, NIC name, NSG name etc.

These files can be changed easily to create duplicates and to deploy 5 similar VMs while minimizing effort and ensuring consistent VMs.

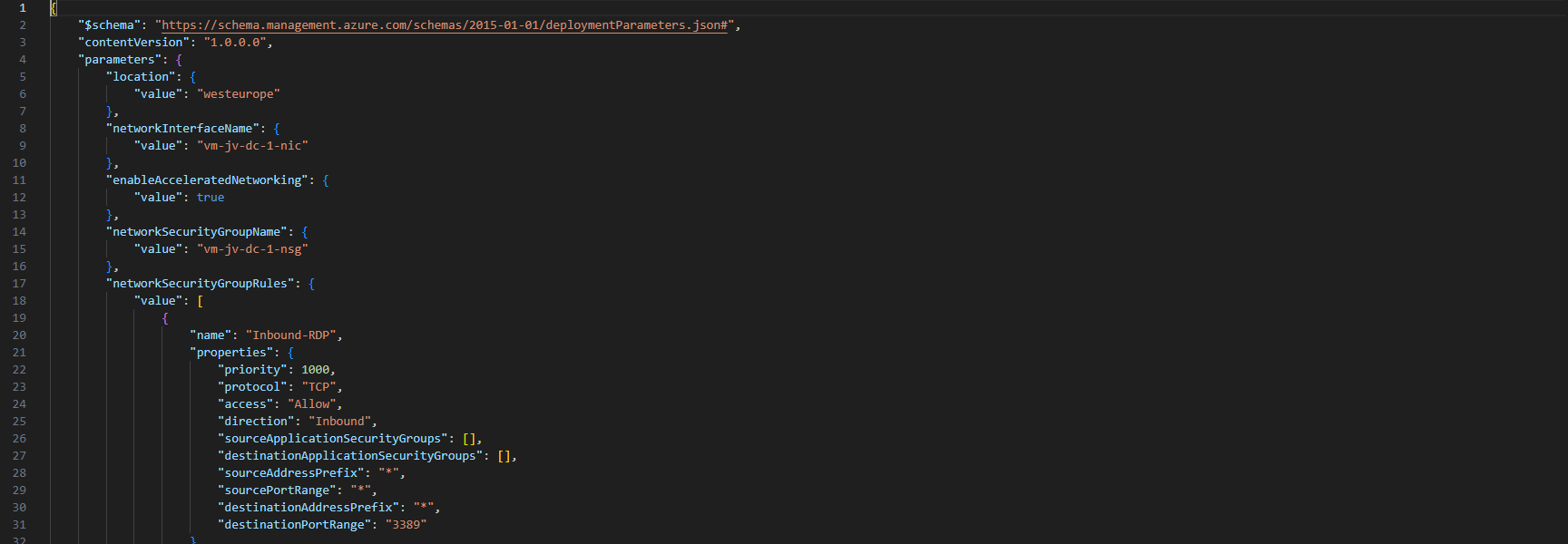

Changing ARM template parameters

After creating your ARM template by completing the wizard and downloading the files, you can change the parameters.json file to change specific settings. This contains the naming of the resources, the region, the administrator account and such:

Make sure no two templates contain the same resource names, as that will instantly result in an error.
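To illustrate how a parameters file can be reused for several similar VMs, here is a minimal Python sketch that copies a parameters document and gives each copy its own names. It assumes the usual `{"parameters": {"<name>": {"value": ...}}}` layout of a parameters file. The parameter name `networkInterfaceName` and all values are assumptions for this example, so check them against your own parameters.json.

```python
import copy
import json


def make_parameter_copies(base: dict, vm_names: list[str]) -> dict[str, dict]:
    """Return one parameters document per VM name, leaving the original untouched."""
    copies = {}
    for vm_name in vm_names:
        params = copy.deepcopy(base)
        # Assumed parameter names; your exported file may use different ones.
        params["parameters"]["virtualMachineName"]["value"] = vm_name
        params["parameters"]["networkInterfaceName"]["value"] = f"{vm_name}-nic"
        copies[vm_name] = params
    return copies


# Stand-in for the downloaded parameters.json; the values are made up.
base_parameters = {
    "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentParameters.json#",
    "contentVersion": "1.0.0.0",
    "parameters": {
        "virtualMachineName": {"value": "vm-example-1"},
        "networkInterfaceName": {"value": "vm-example-1-nic"},
        "location": {"value": "westeurope"},
    },
}

copies = make_parameter_copies(base_parameters, ["vm-example-2", "vm-example-3"])
print(json.dumps(copies["vm-example-2"]["parameters"], indent=2))
```

Deriving every resource name from the VM name is one simple way to keep names unique across copies.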

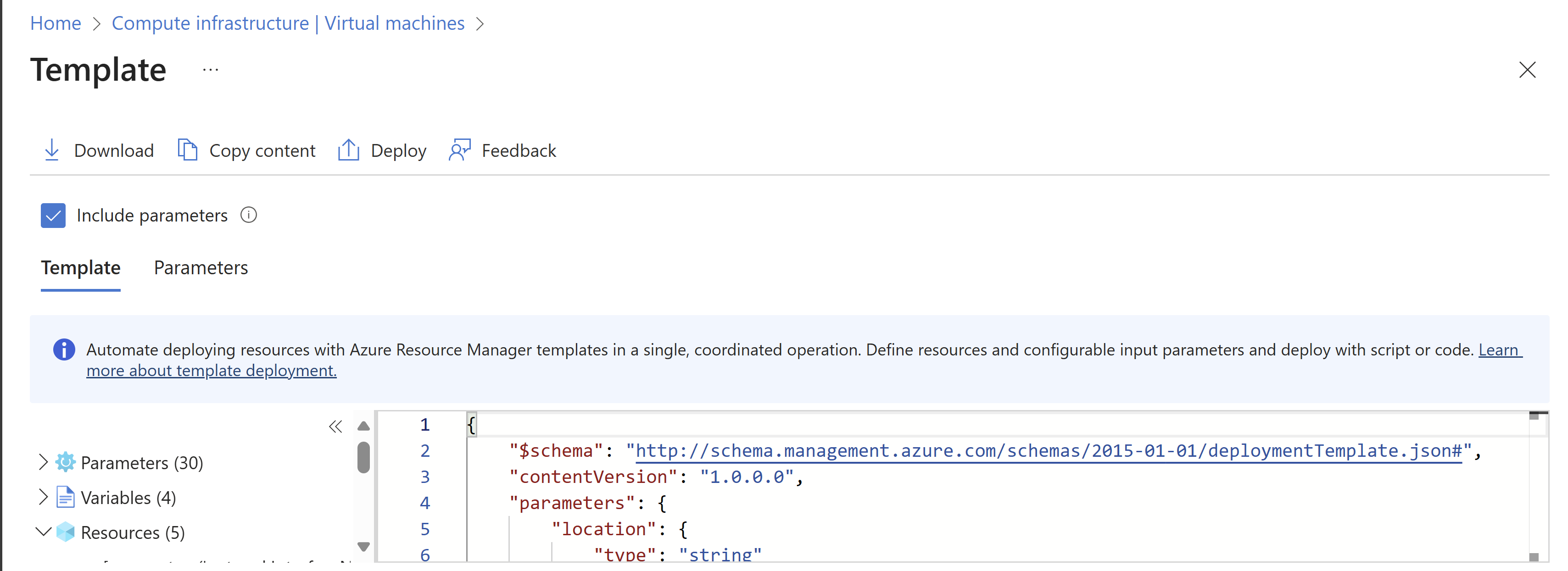

Deploying an ARM template using the Azure Portal

After you have changed your template and adjusted it to your needs, you can deploy it in the Azure Portal.

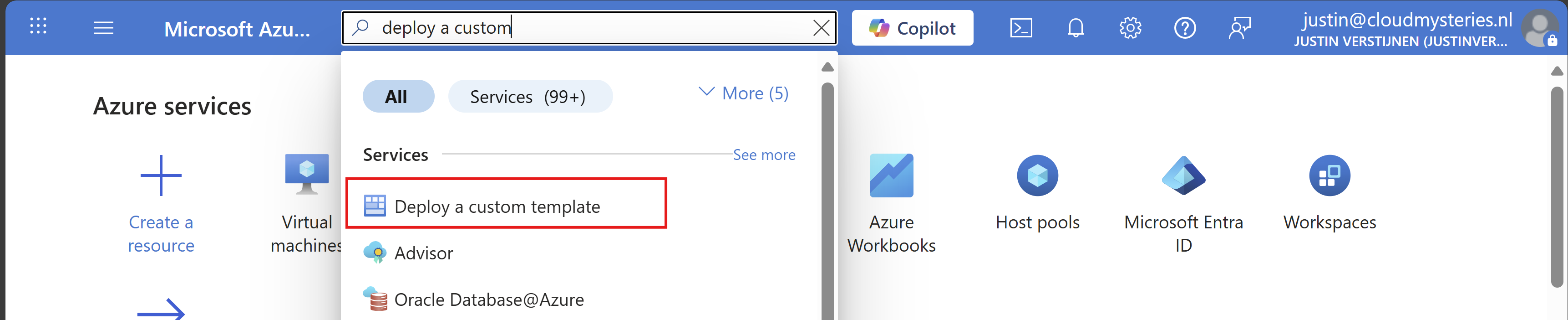

Open up the Azure Portal, and search for “Deploy a custom template”, and open that option.

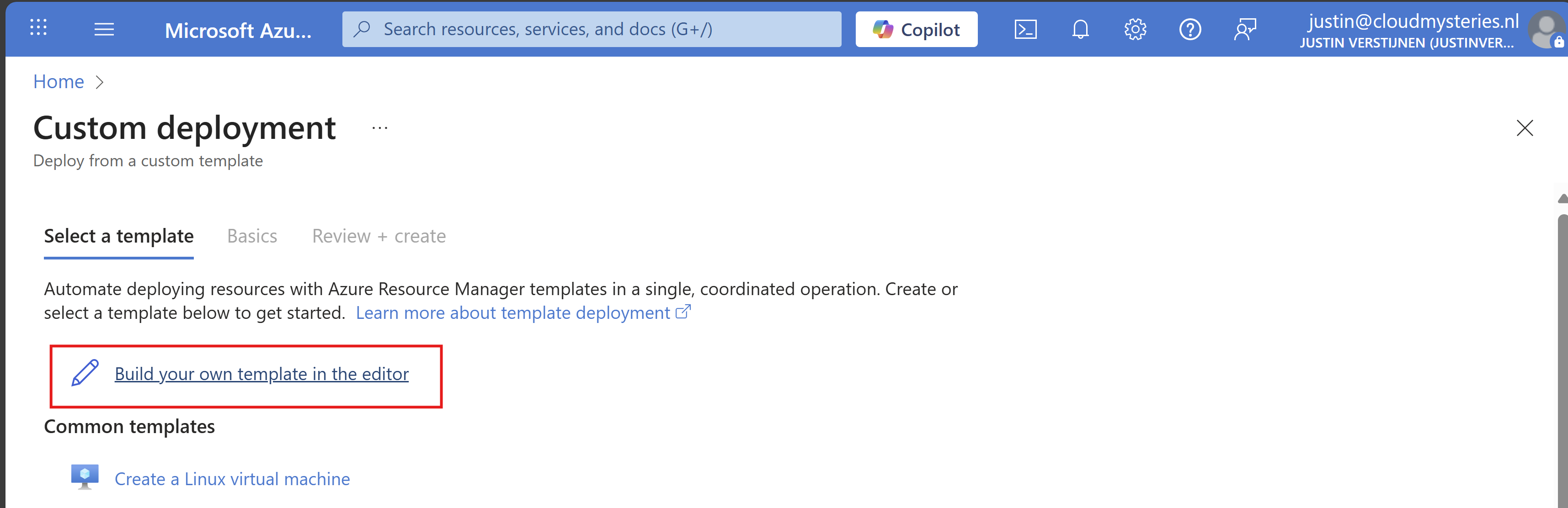

Now you get on this page. Click on “Build your own template in the editor”:

You will get on this editor page now. Click on “Load file” to load our template.json file.

Now select the template.json file from your created and downloaded template.

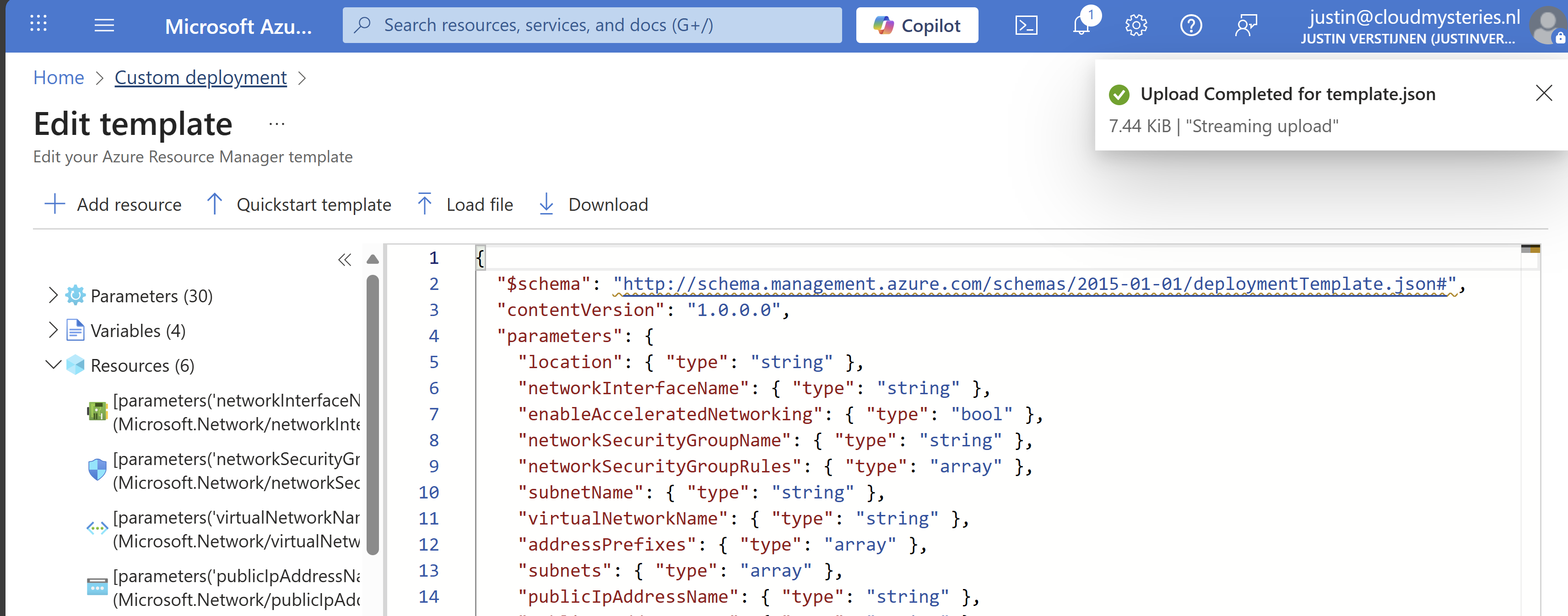

It will now insert the template into the editor, and you can see on the left side what resource types are defined in the template:

Click on “Save”. Now we have to import the parameters file, otherwise all fields will be empty.

Click on “Edit parameters”, and we have to also upload the parameters.json file.

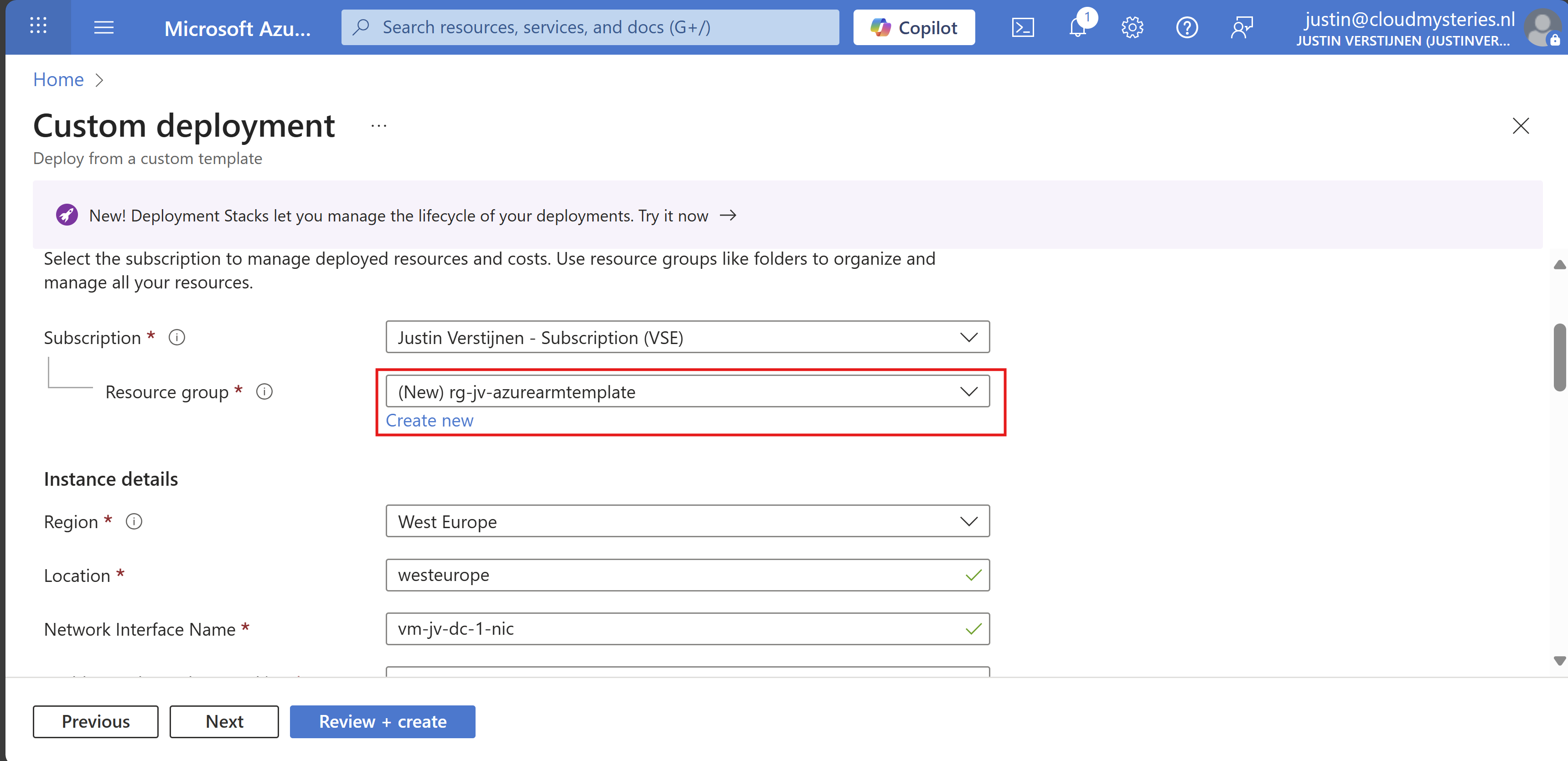

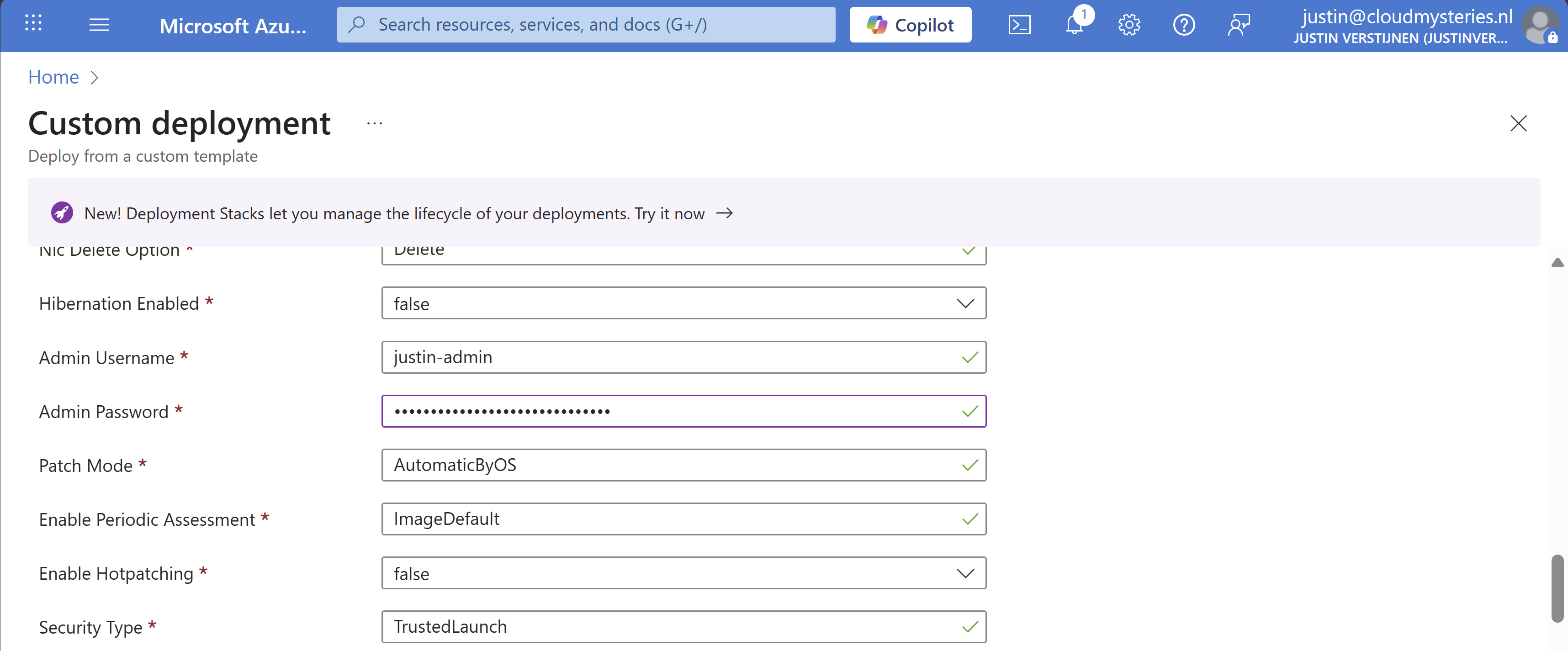

Click on “Save” and our template will be about 85% filled in. We only have to set the important information:

- Resource group

- Administrator password (as we don’t want this hardcoded in the template -> security)

Select your resource group to deploy all the resources in.

Then fill in your administrator password:

Review all of the settings and then advance to the deployment.

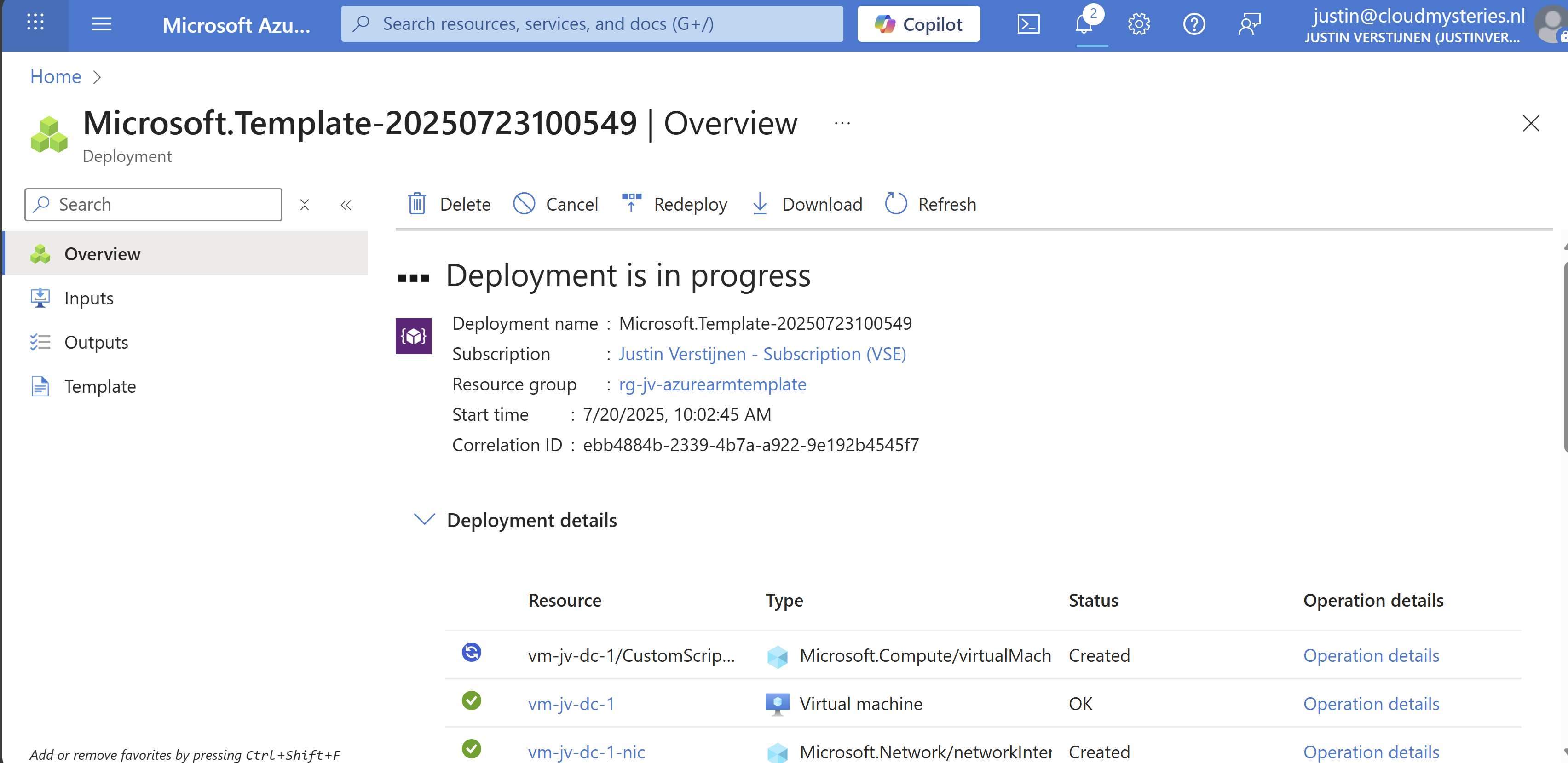

Now everything in your template will be deployed into Azure:

As you can see, you can repeat these steps if you need multiple similar virtual machines, as we only need to load the files and change 2 settings. This saves a lot of time compared to going through everything in the normal VM wizard, and it reduces human errors.

Add PowerShell script to ARM template

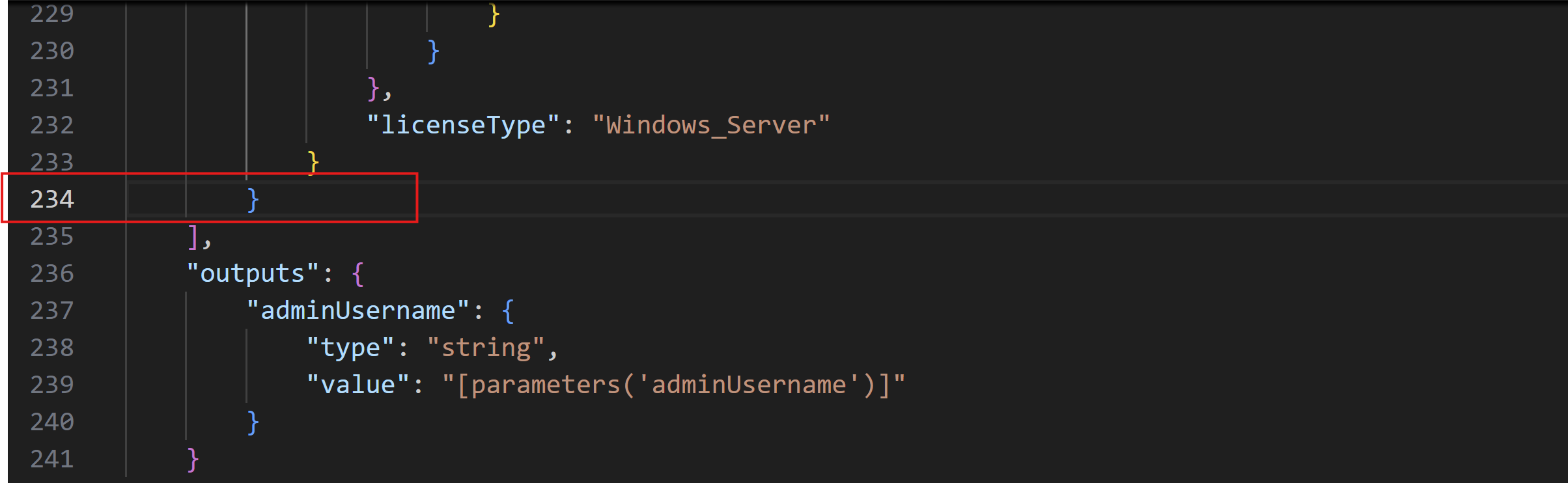

We can also add a PowerShell script to an ARM template to run directly after deploying. Azure does this with a Custom Script Extension that will be automatically installed after deploying the VM. After the extension is installed, the script runs in the VM to change certain things.

I use a template to deploy a VM with Active Directory every time I need an Active Directory to test certain things. So I have a modified version of my Windows Server initial installation script which also installs the Active Directory role and promotes the VM to my internal domain. This saves a lot of time compared to configuring this by hand every time:

The Custom Script Extension block and modifying it

We can add this Custom Script Extension block to our ARM template.json file:

{

"type": "Microsoft.Compute/virtualMachines/extensions",

"name": "[concat(parameters('virtualMachineName'), '/CustomScriptExtension')]",

"apiVersion": "2021-03-01",

"location": "[parameters('location')]",

"dependsOn": [

"[resourceId('Microsoft.Compute/virtualMachines', parameters('virtualMachineName'))]"

],

"properties": {

"publisher": "Microsoft.Compute",

"type": "CustomScriptExtension",

"typeHandlerVersion": "1.10",

"autoUpgradeMinorVersion": true,

"settings": {

"fileUris": [

"url to script"

]

},

"protectedSettings": {

"commandToExecute": "powershell -ExecutionPolicy Unrestricted -Command ./script.ps1"

}

}

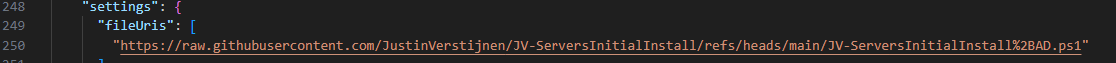

}Then change the 2 parameters in the file to point it to your own script:

- fileUris: This is the public URL of your script (line 16)

- commandToExecute: This is the name of your script (line 20)
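The two values have to agree: the file name in commandToExecute should be the file that fileUris points to. A small Python sketch of that relationship, which derives the command from the URL so the two cannot drift apart, could look like this. The function name and the example URL are hypothetical.

```python
from posixpath import basename
from urllib.parse import urlparse


def script_settings(script_url: str) -> tuple[dict, dict]:
    """Build the settings and protectedSettings values for one script URL."""
    script_name = basename(urlparse(script_url).path)
    if not script_name.endswith(".ps1"):
        raise ValueError(f"expected a URL ending in a .ps1 file, got: {script_url}")
    settings = {"fileUris": [script_url]}
    protected = {
        # Same command shape as in the block from this post, with the file name filled in.
        "commandToExecute": f"powershell -ExecutionPolicy Unrestricted -Command ./{script_name}"
    }
    return settings, protected


# Hypothetical URL; replace it with the public URL of your own script.
settings, protected = script_settings("https://example.com/scripts/install-adds.ps1")
print(settings)
print(protected)
```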

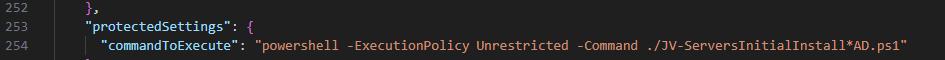

Placing the block into the existing ARM template

This block must be placed after the virtual machine, as the virtual machine must be running before we can run a script on it.

Search for the “Outputs” block and on the second line just above it, place a comma and hit Enter and on the new line paste the Custom Script Extension block. Watch this video as example where I show you how to do this:
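If you would rather not edit the JSON by hand, the same placement can be sketched in Python: the extension block simply becomes the last entry of the template's "resources" list, which sits just above "outputs". This is only an illustration with trimmed stand-ins for the real files. In practice you would load template.json with `json.load` and write the result back with `json.dump`.

```python
import json


def add_resource(template: dict, resource: dict) -> dict:
    """Return a copy of the template with the resource appended to its resources list."""
    updated = dict(template)
    updated["resources"] = list(template.get("resources", [])) + [resource]
    return updated


# Trimmed stand-ins for template.json and the Custom Script Extension block.
template = {
    "resources": [
        {"type": "Microsoft.Compute/virtualMachines", "name": "[parameters('virtualMachineName')]"}
    ],
    "outputs": {},
}
extension = {
    "type": "Microsoft.Compute/virtualMachines/extensions",
    "name": "[concat(parameters('virtualMachineName'), '/CustomScriptExtension')]",
}

updated = add_resource(template, extension)
print(json.dumps(updated, indent=2))
```

Working on the parsed structure avoids the most common hand-editing mistake, a missing or extra comma.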

Testing the custom script

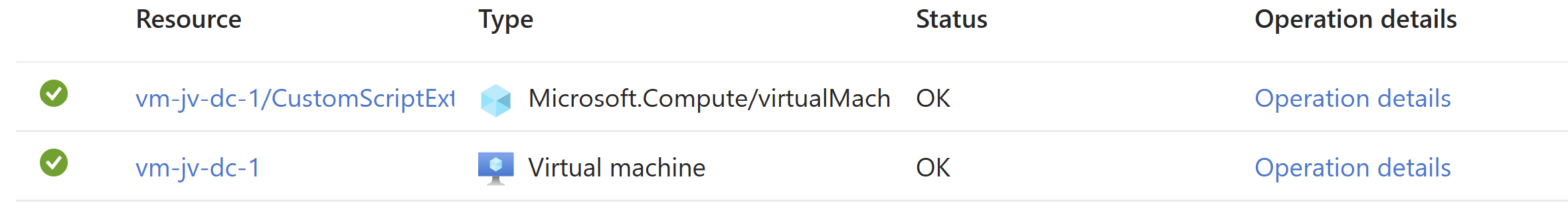

After changing the template.json file, save it and then follow the custom template deployment steps of this guide again to deploy the custom template which includes the PowerShell script. You will see it appear in the deployment after the virtual machine is deployed:

After the VM is deployed, I will login and check if the script has run:

The domain has been successfully installed with management tools and such. This is really cool and saves a lot of time.

Summary

ARM templates are a great way to deploy multiple instances of resources, with extra customization like running a PowerShell script afterwards. This is really helpful if you deploy machines for every blog post like I do, to always have the same, empty configuration available in a few minutes. The whole process now takes about 8 minutes, but when configuring by hand, this will take up to 45 minutes.

ARM is a great step between deploying resources completely by hand and IaC solutions like Terraform and Bicep.

Thank you for visiting this webpage and I hope this was helpful.

Sources

These sources helped me with writing and researching this post:

- https://learn.microsoft.com/en-us/azure/azure-resource-manager/management/overview

- https://learn.microsoft.com/en-us/azure/virtual-machines/extensions/custom-script-windows


Automatic Azure Boot diagnostics monitoring with Azure Policy

In short, Azure Policy is a compliance/governance tool in Azure with capabilities for automatically pushing your resources to be compliant with your stated policy. This means if we configure Azure Policy to automatically configure boot diagnostics and save the information to a storage account, this will be automatically done for all existing and new virtual machines.

Step 1: The configuration explained

The boot diagnostics in Azure enables you to monitor the state of the virtual machine in the portal. By default, this will be enabled with a Microsoft managed storage account but we don’t have control over the storage account.

When using our own storage account for saving the boot diagnostics, more options are available. We can control where our data is saved and which lifecycle management policies are active for retention of the data, and we can use GRS storage for robust redundancy across datacenters.

For saving the information in our custom storage account, we must tell the machines where to store it and we can automate this process with Azure Policy.

The solution we’re gonna configure in this guide consists of the following components in order:

- Storage Account: The place where serial logs and screenshots are actually stored

- Policy Definition: Where we define what Azure Policy must evaluate and check

- Policy Assignment: Here we assign a policy to a certain scope which can be subscriptions, resource groups and specific resources

- Remediation task: This is the task that kicks in if the policy definition returns with “non-compliant” status

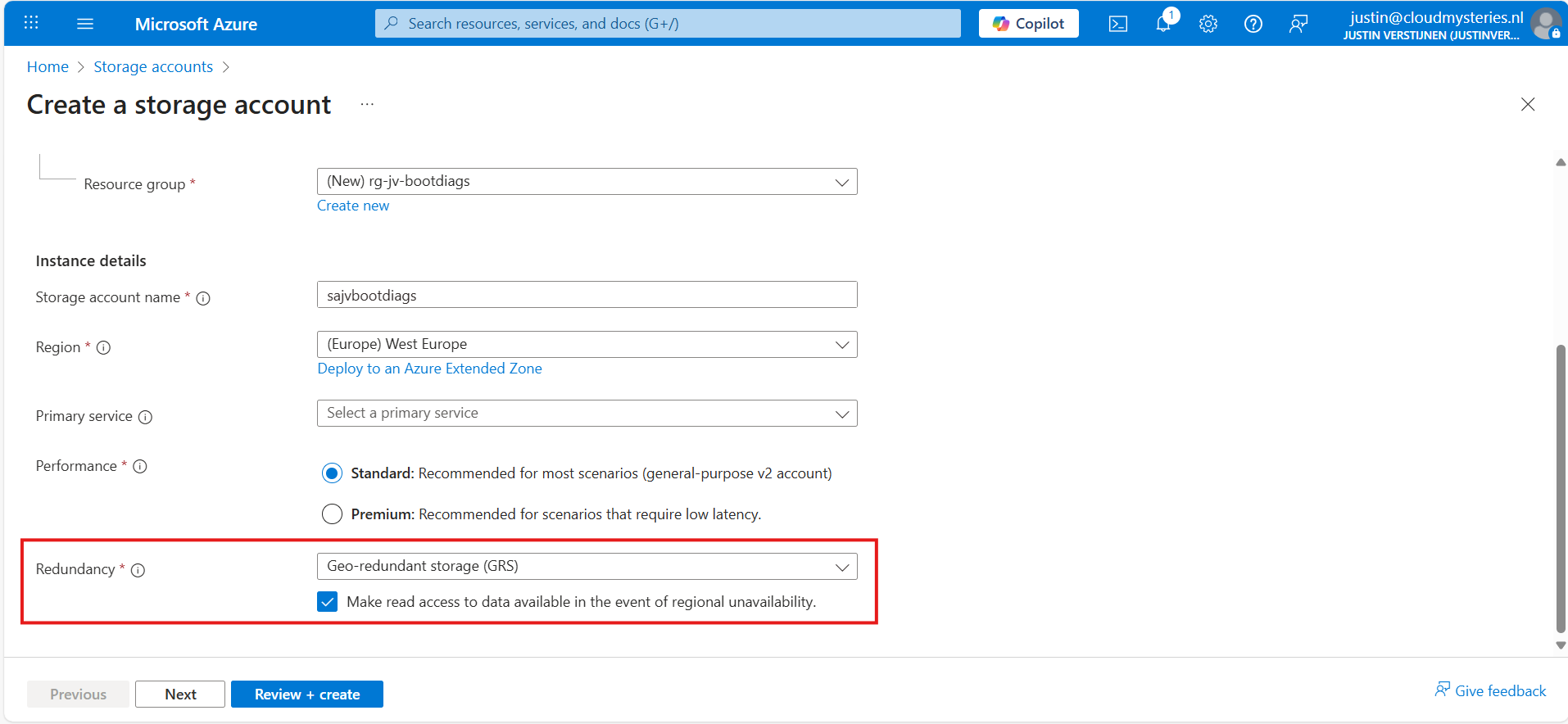

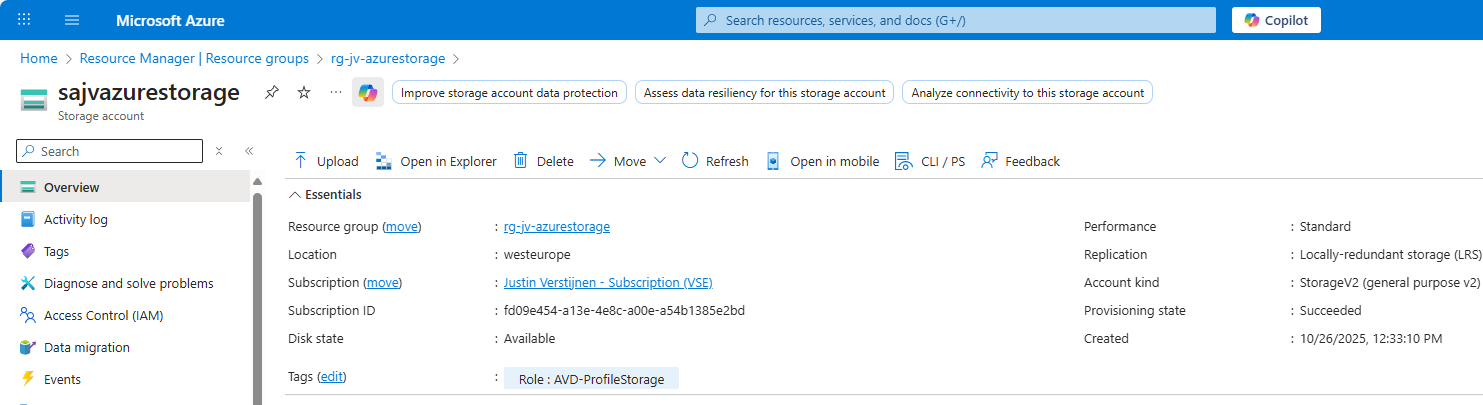

Step 2: How to create your custom storage account for boot diagnostics

Assuming you want to use your own storage account for saving Boot diagnostics, we start with creating our own storage account for this purpose. If you want to use an existing managed storage account, you can skip this step.

Open the Azure Portal and search for “Storage Accounts”, click on it and create a new storage account. Then choose a globally unique name of 3 to 24 characters, using lowercase letters and numbers only.
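The naming rule is easy to check before opening the wizard. A minimal Python sketch, assuming only the rule stated above (3 to 24 characters, lowercase letters and digits), looks like this. The example names are made up, and global uniqueness can only be verified by Azure itself.

```python
import re

# 3 to 24 characters, lowercase letters and digits only.
STORAGE_NAME_PATTERN = re.compile(r"[a-z0-9]{3,24}")


def is_valid_storage_account_name(name: str) -> bool:
    """Check the format of a storage account name (not its global uniqueness)."""
    return STORAGE_NAME_PATTERN.fullmatch(name) is not None


for candidate in ["sajvbootdiag01", "SA-BootDiag", "ab"]:
    print(candidate, is_valid_storage_account_name(candidate))
```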

Make sure you select the correct level of redundancy at the bottom as we want to defend ourselves against datacenter failures. Also, don’t select a primary service as we need this storage account for multiple purposes.

At the “Advanced” tab, select “Hot” as storage tier, as we might ingest new information continuously. We also leave the “storage account key access” enabled as this is required for the Azure Portal to access the data.

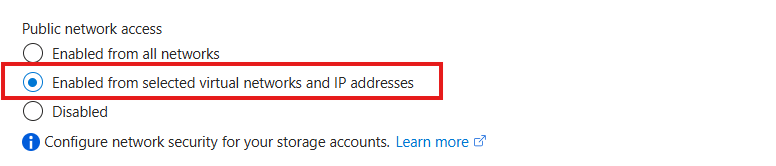

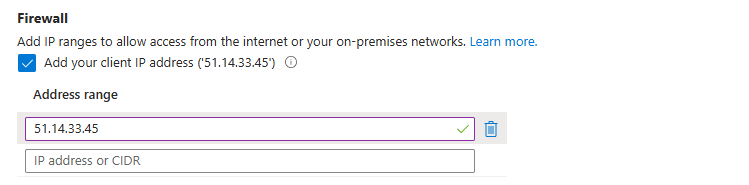

Advance to the “Networking” tab. Here we have the option to only enable public access for our own networks. This is highly recommended:

This way we expose the storage account, but only to the services that need it. This defends our storage account from attackers outside of our environment.

To actually be able to see the data in the Azure Portal, you need to add the WAN IP address of your location/management server:

You can do that simply by checking the “Client IP address”. If you skip this step, you will get an error that the boot diagnostics cannot be found later on.

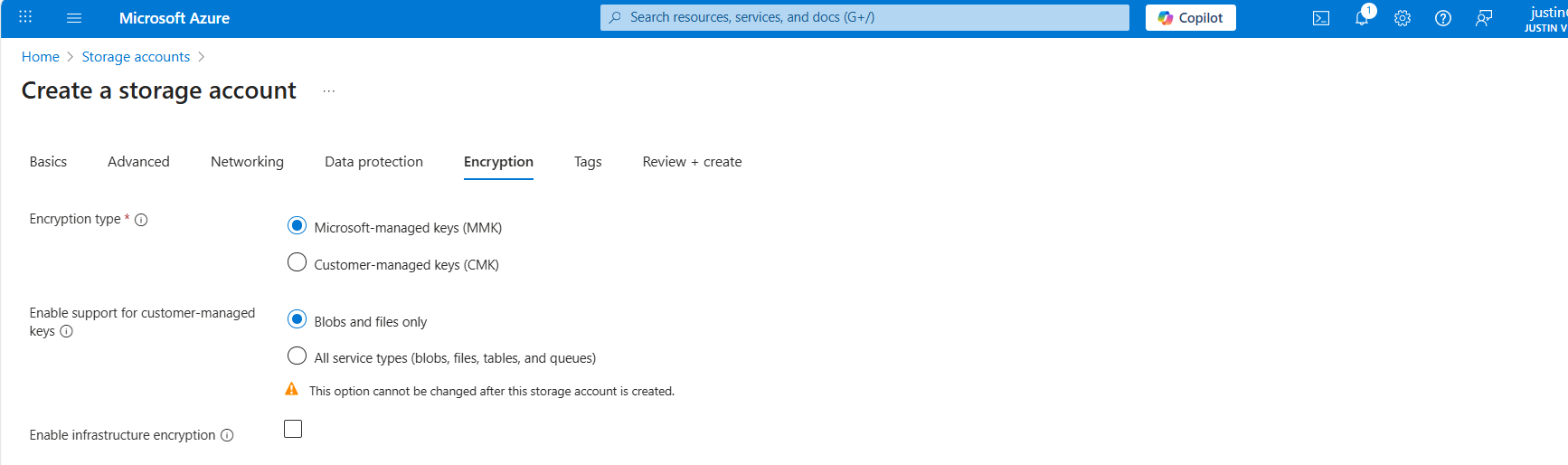

At the “Encryption” tab we can configure the encryption, if your company policy requires this. For the simplicity of this guide, I leave everything on “default”.

Create the storage account.

Step 3: How to create the Azure Policy definition

We can now create our Azure Policy that alters the virtual machine settings to save the diagnostics into the custom storage account. The policy overrides every other setting, like disabled or enabled with managed storage account. It 100% ensures all VMs in the scope will save their data in our custom storage account.

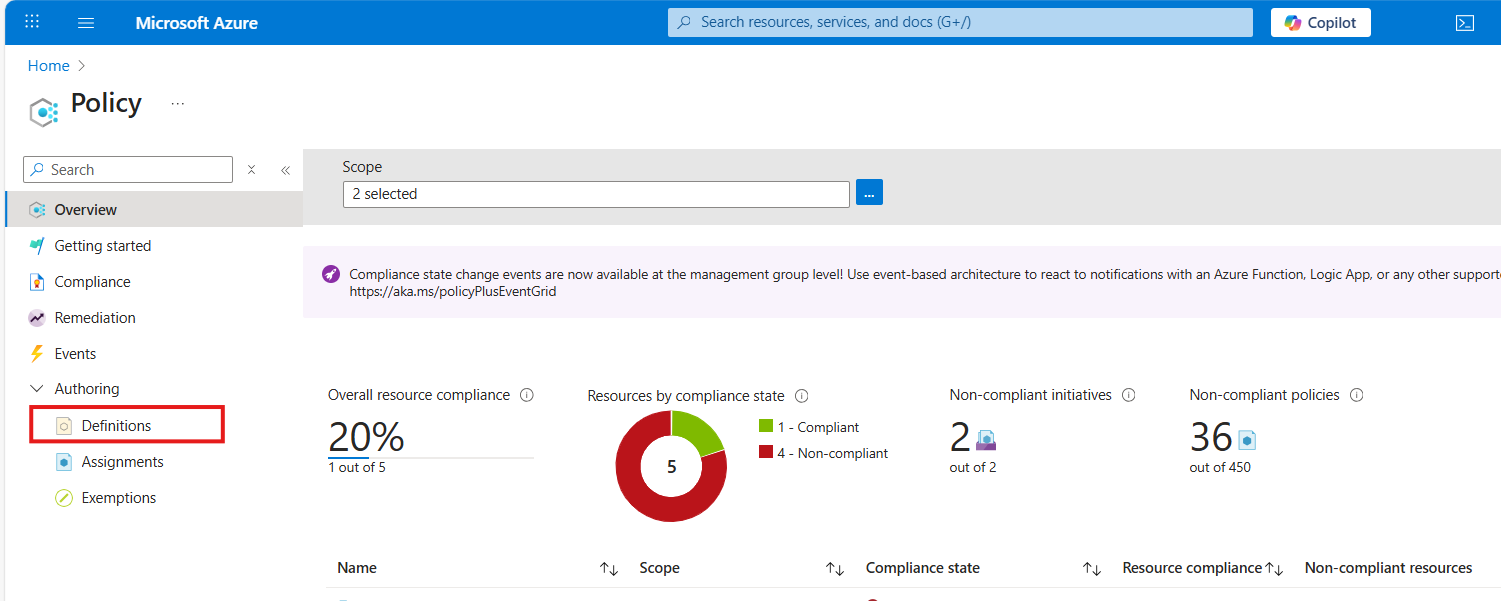

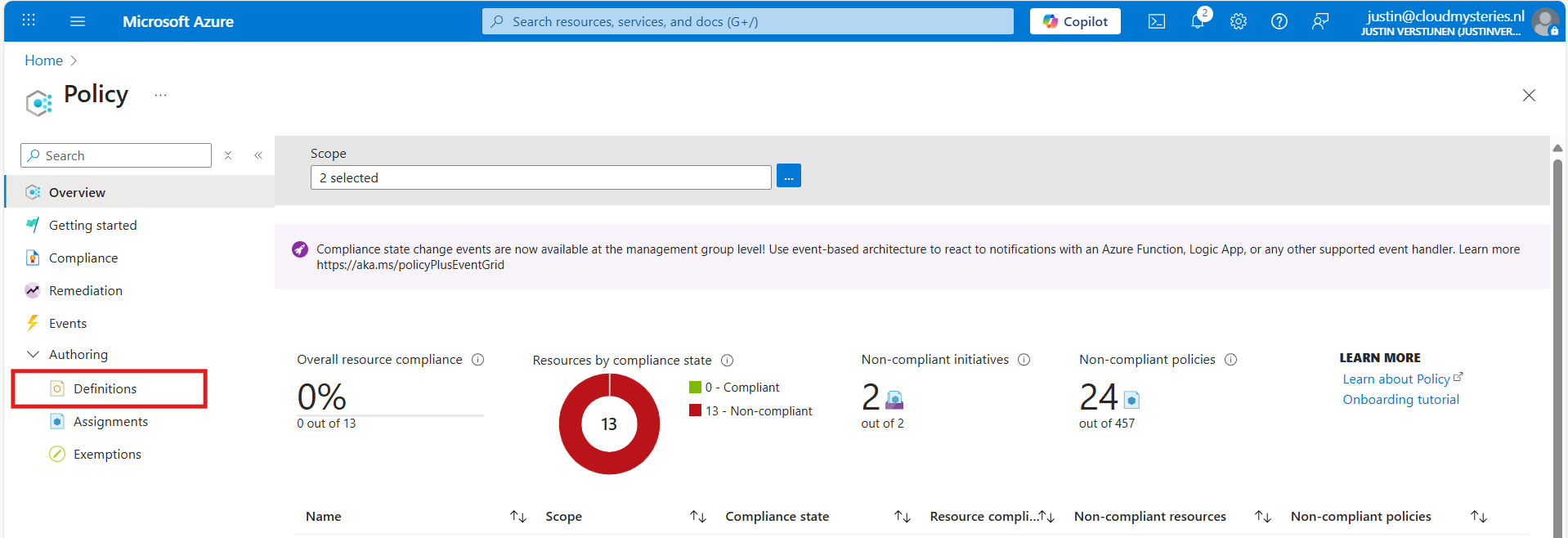

Open the Azure Portal and go to “Policy”. We will land on the Policy compliance dashboard:

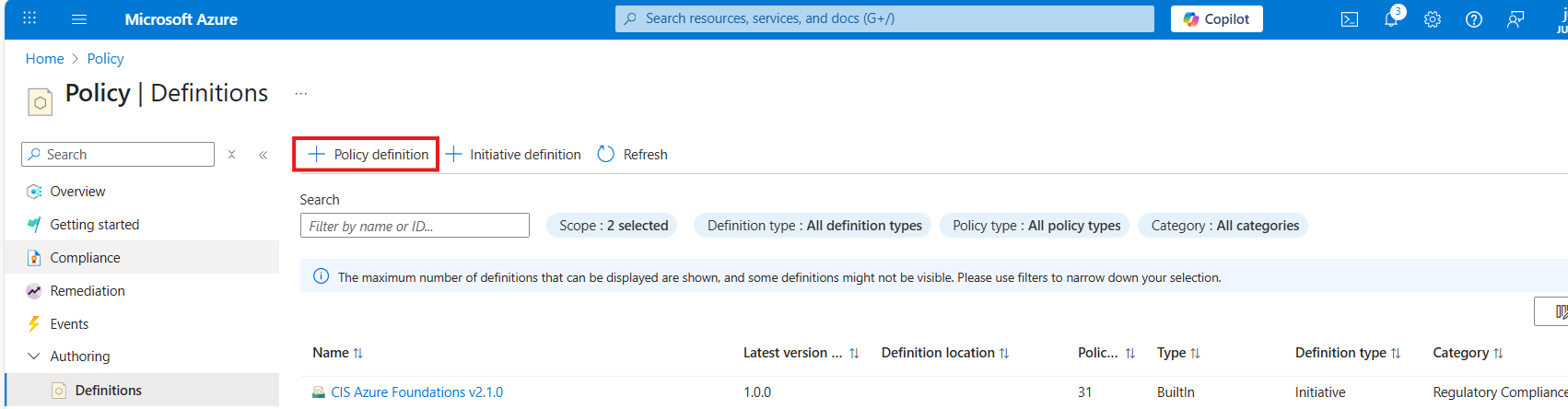

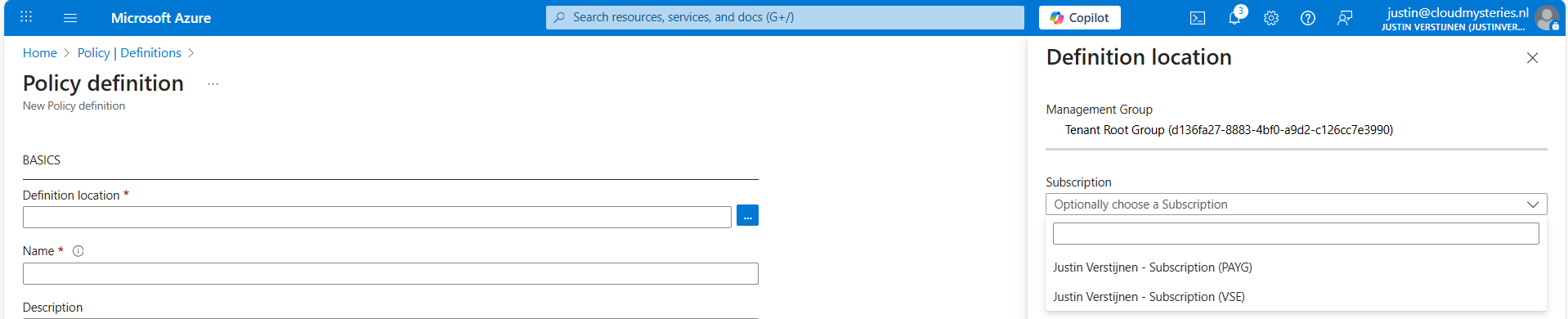

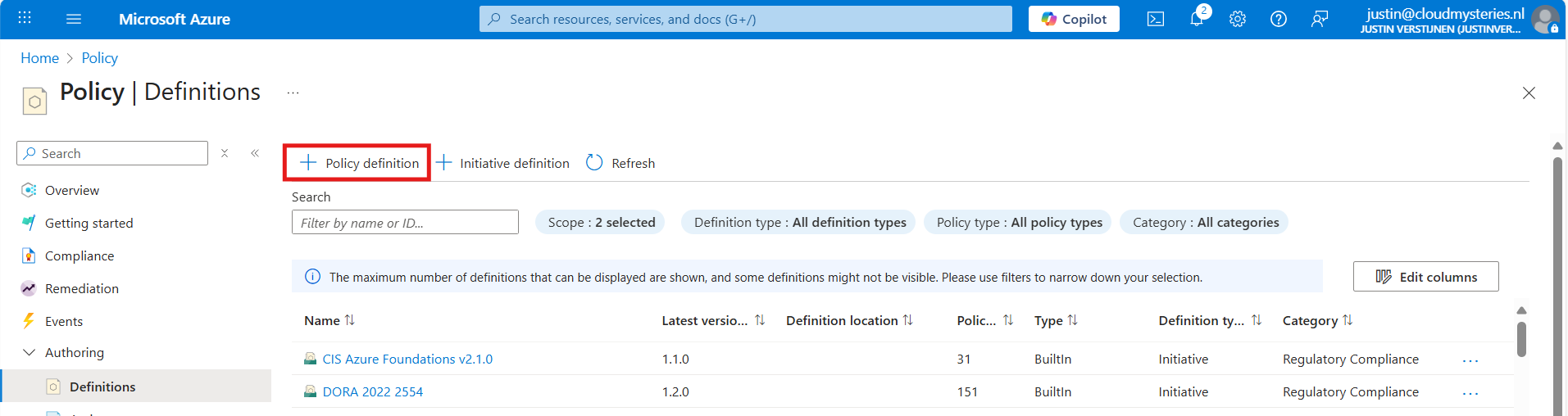

Click on “Definitions” as we are going to define a new policy. Then click on “+ Policy Definition” to create a new one:

At the “definition location”, select your subscription where you want this configuration to be active. You can also select the tenant root management group, so this is enabled on all subscriptions. Caution with this of course.

Warning: Policies assigned to the Tenant Management Group cannot be assigned remediation tasks. Select one or more subscriptions instead.

Then give the policy a good name and description.

At the “Category” section we can assign the policy to a category. This changes nothing to the effect of the policy but is only for your own categorization and overview. You can also create custom categories if using multiple policies:

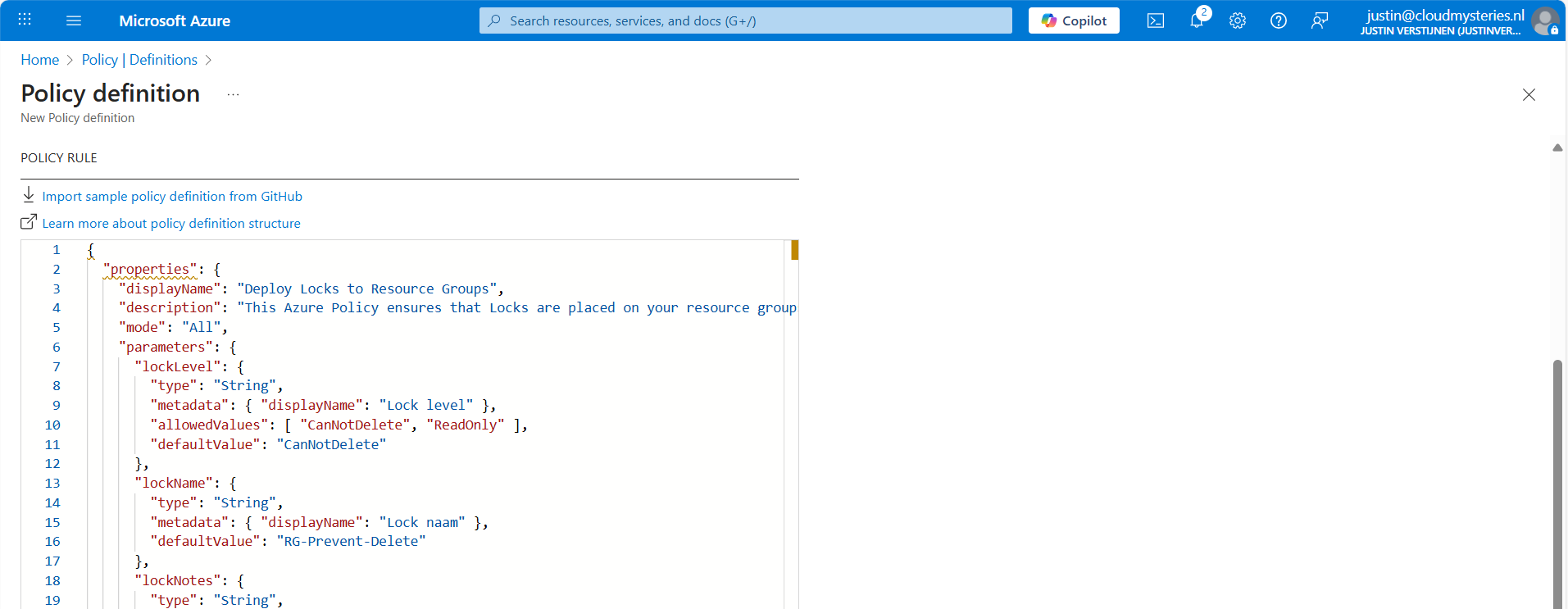

At the policy rule, we have to paste a custom rule in JSON format which I have here:

{

"mode": "All",

"parameters": {

"customStorageUrl": {

"type": "String",

"metadata": {

"displayName": "Custom Storage",

"description": "The custom Storage account used to write boot diagnostics to."

},

"defaultValue": "https://*your storage account name*.blob.core.windows.net"

}

},

"policyRule": {

"if": {

"allOf": [

{

"field": "type",

"equals": "Microsoft.Compute/virtualMachines"

},

{

"field": "Microsoft.Compute/virtualMachines/diagnosticsProfile.bootDiagnostics.storageUri",

"notContains": "[parameters('customStorageUrl')]"

},

{

"not": {

"field": "Microsoft.Compute/virtualMachines/diagnosticsProfile.bootDiagnostics.storageUri",

"equals": ""

}

}

]

},

"then": {

"effect": "modify",

"details": {

"roleDefinitionIds": [

"/providers/Microsoft.Authorization/roleDefinitions/9980e02c-c2be-4d73-94e8-173b1dc7cf3c"

],

"conflictEffect": "audit",

"operations": [

{

"operation": "addOrReplace",

"field": "Microsoft.Compute/virtualMachines/diagnosticsProfile.bootDiagnostics.storageUri",

"value": "[parameters('customStorageUrl')]"

},

{

"operation": "addOrReplace",

"field": "Microsoft.Compute/virtualMachines/diagnosticsProfile.bootDiagnostics.enabled",

"value": true

}

]

}

}

}

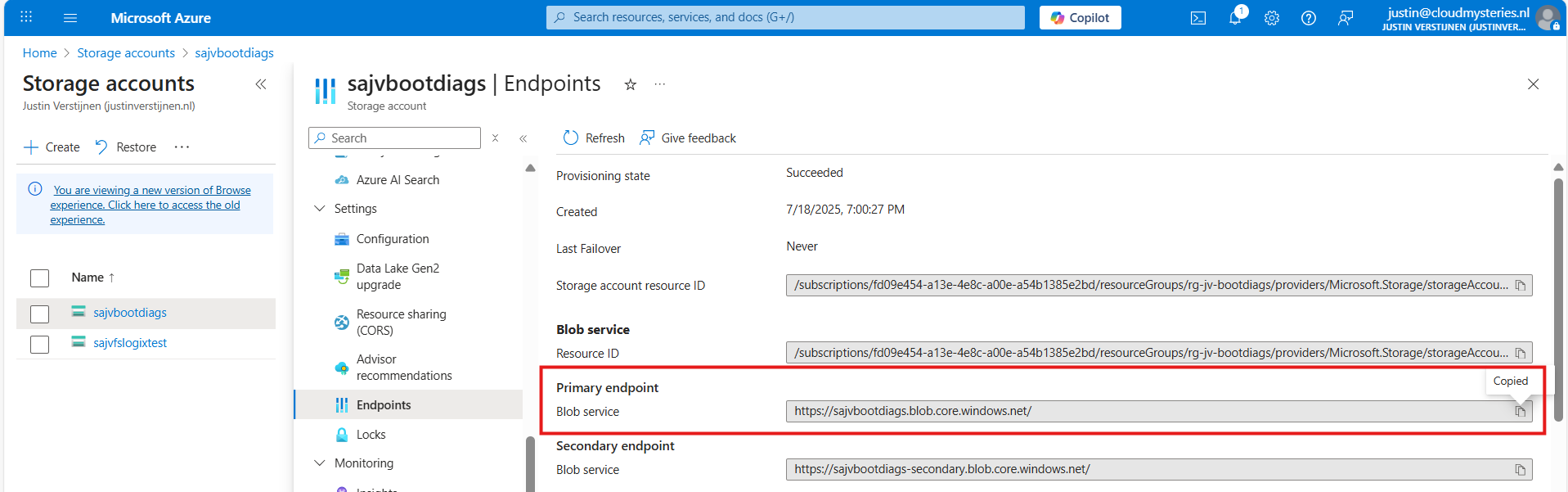

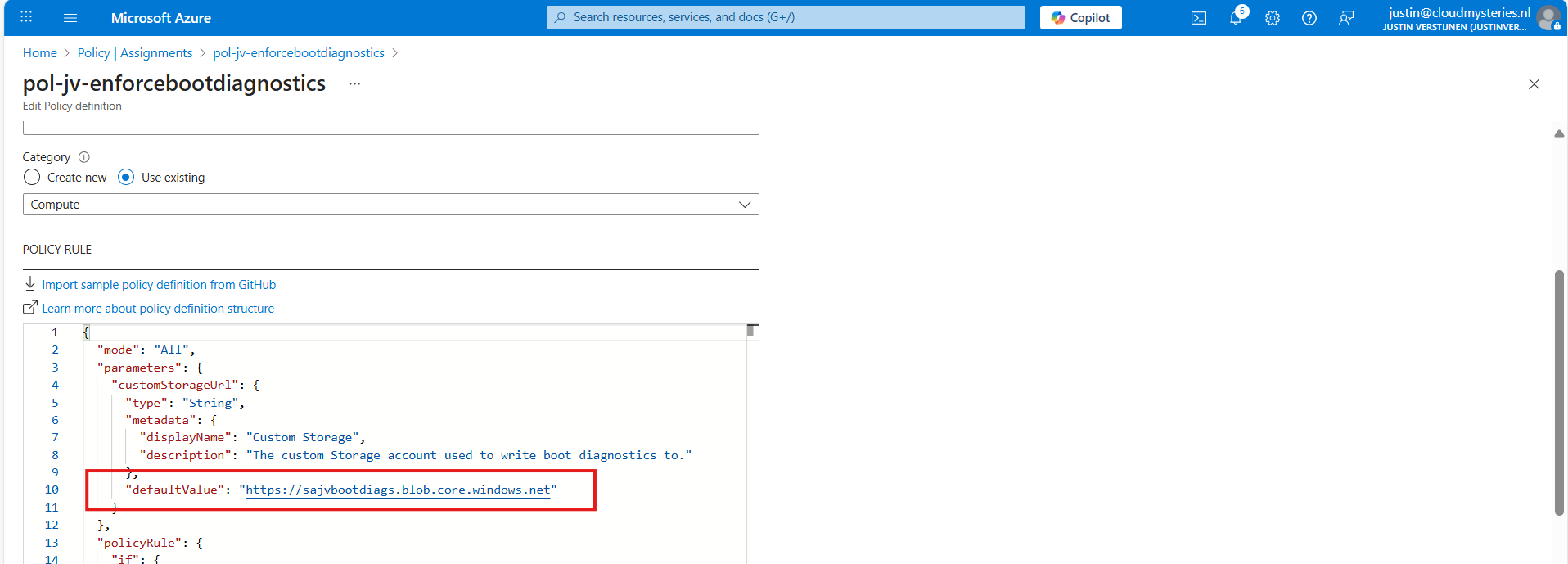

}Copy and paste the code into the “Policy Rule” field. Then make sure to change the storage account URI to your custom or managed storage account. You can find this in the Endpoints section of your storage account:

Paste that URL into the JSON definition at line 10, and if desired, change the displayname and description on line 7 and 8.
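For anyone who keeps the definition in a file, the same substitution can be sketched in Python instead of editing the line by hand. This is only an illustration: the helper name and the storage account name are made up, and only the part of the definition that changes is reproduced.

```python
import copy


def with_storage_account(policy: dict, account_name: str) -> dict:
    """Return a copy of the policy whose default storage URL points at account_name."""
    updated = copy.deepcopy(policy)
    url = f"https://{account_name}.blob.core.windows.net"
    updated["parameters"]["customStorageUrl"]["defaultValue"] = url
    return updated


# Only the relevant part of the definition from this post is reproduced here.
policy = {
    "mode": "All",
    "parameters": {
        "customStorageUrl": {
            "type": "String",
            "defaultValue": "https://*your storage account name*.blob.core.windows.net",
        }
    },
}

updated = with_storage_account(policy, "sajvbootdiag01")
print(updated["parameters"]["customStorageUrl"]["defaultValue"])
```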

Leave the “Role definitions” field to the default setting and click on “Save”.

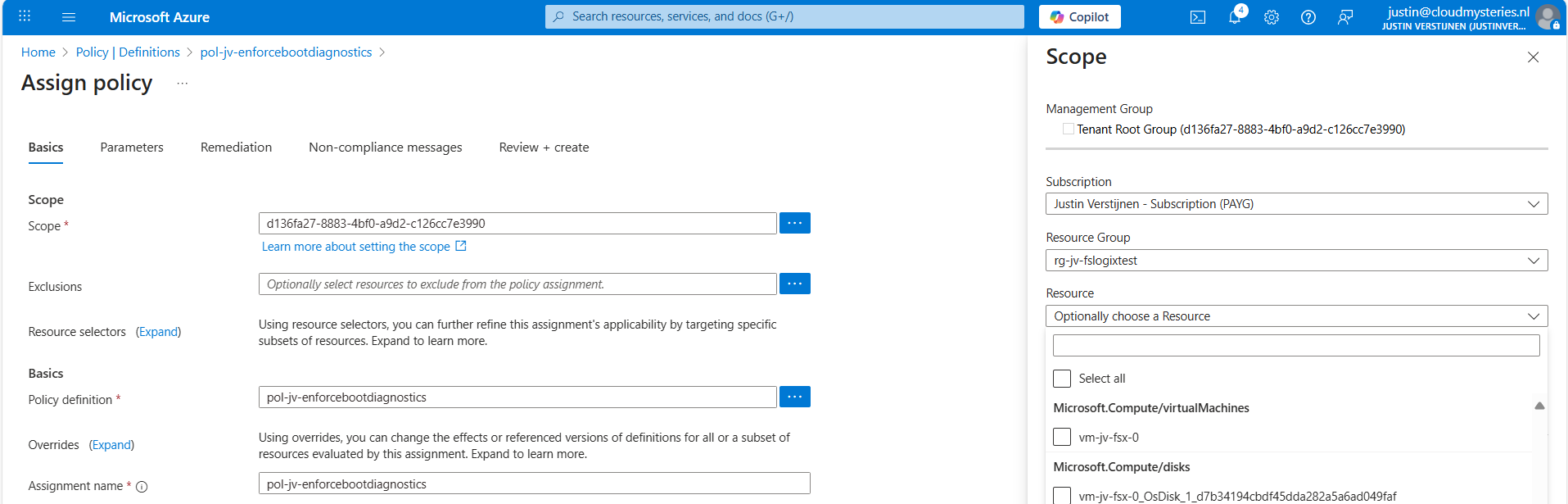

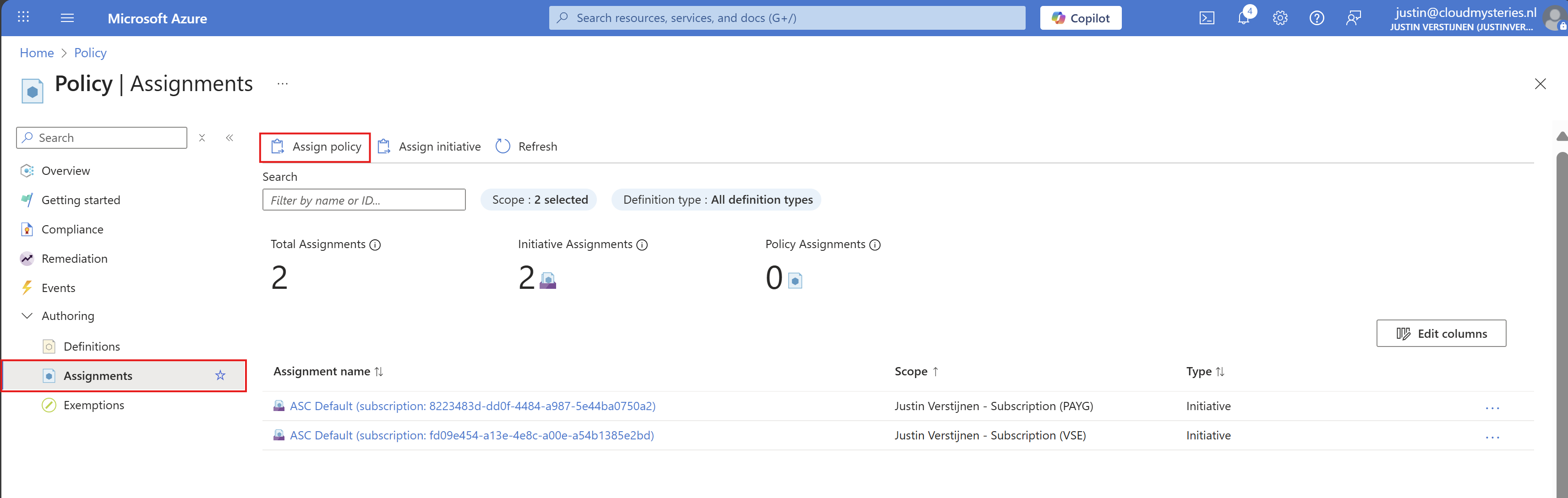

Step 4: Assigning the boot diagnostics policy definition

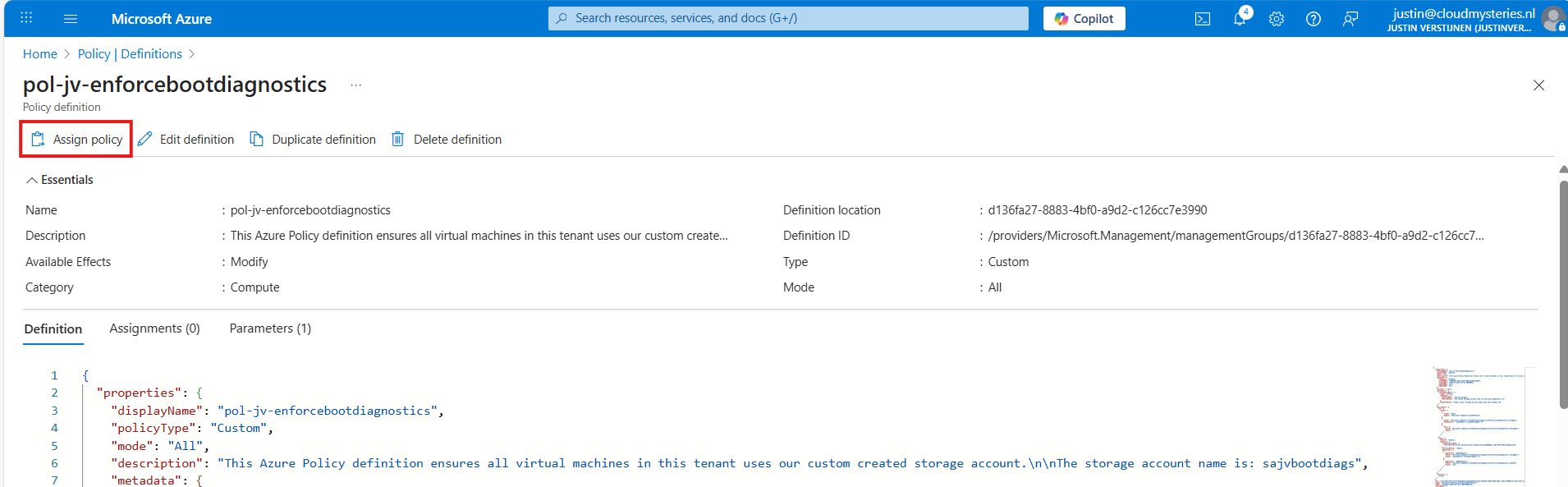

Now that we have defined our policy, we can assign it to the scope where it must be active. After saving the policy you will get to the correct menu:

Otherwise, you can go to “Policy”, then to “Definitions” just like in step 3 and lookup your just created definition.

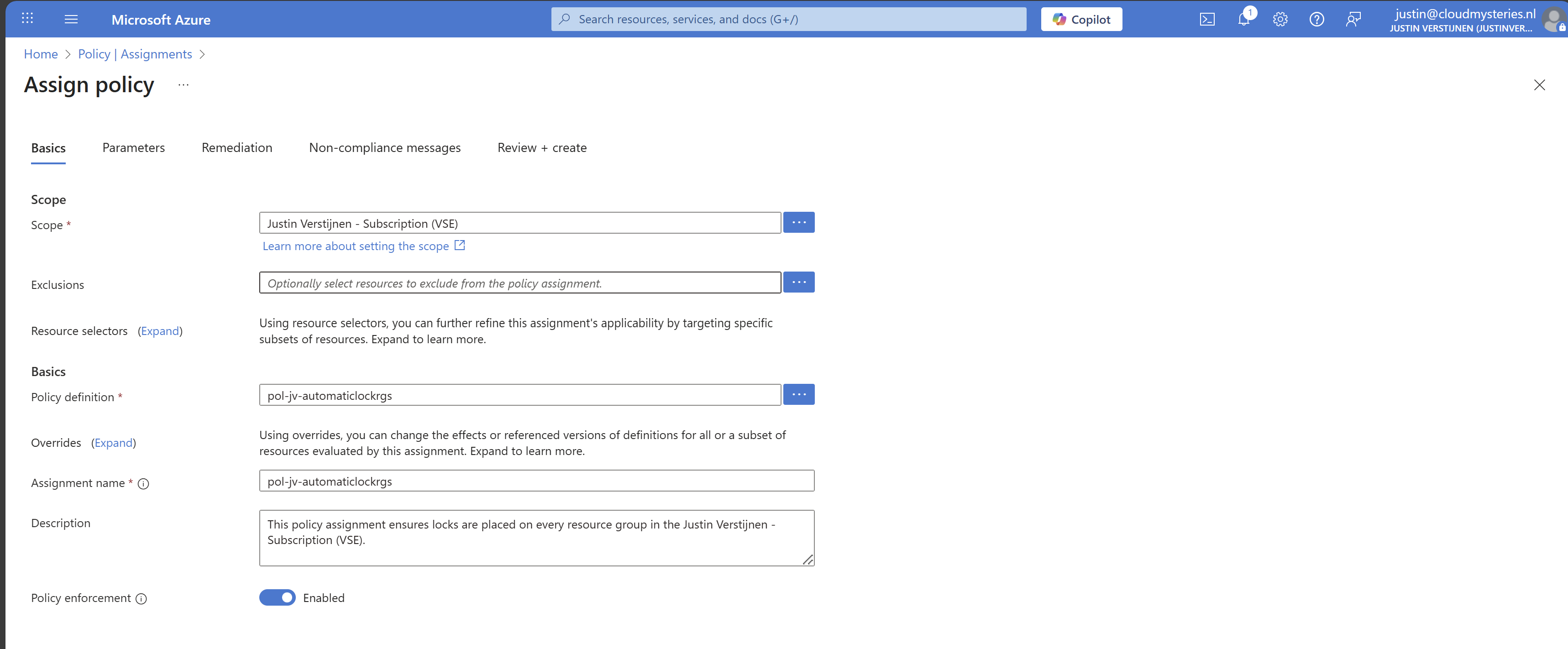

On the Assign policy page, we can once again define our scope. We can also set “Exclusions”, so the policy applies to everything in the scope except the parts you exclude. You can select one or multiple specific resources to exclude from your policy.

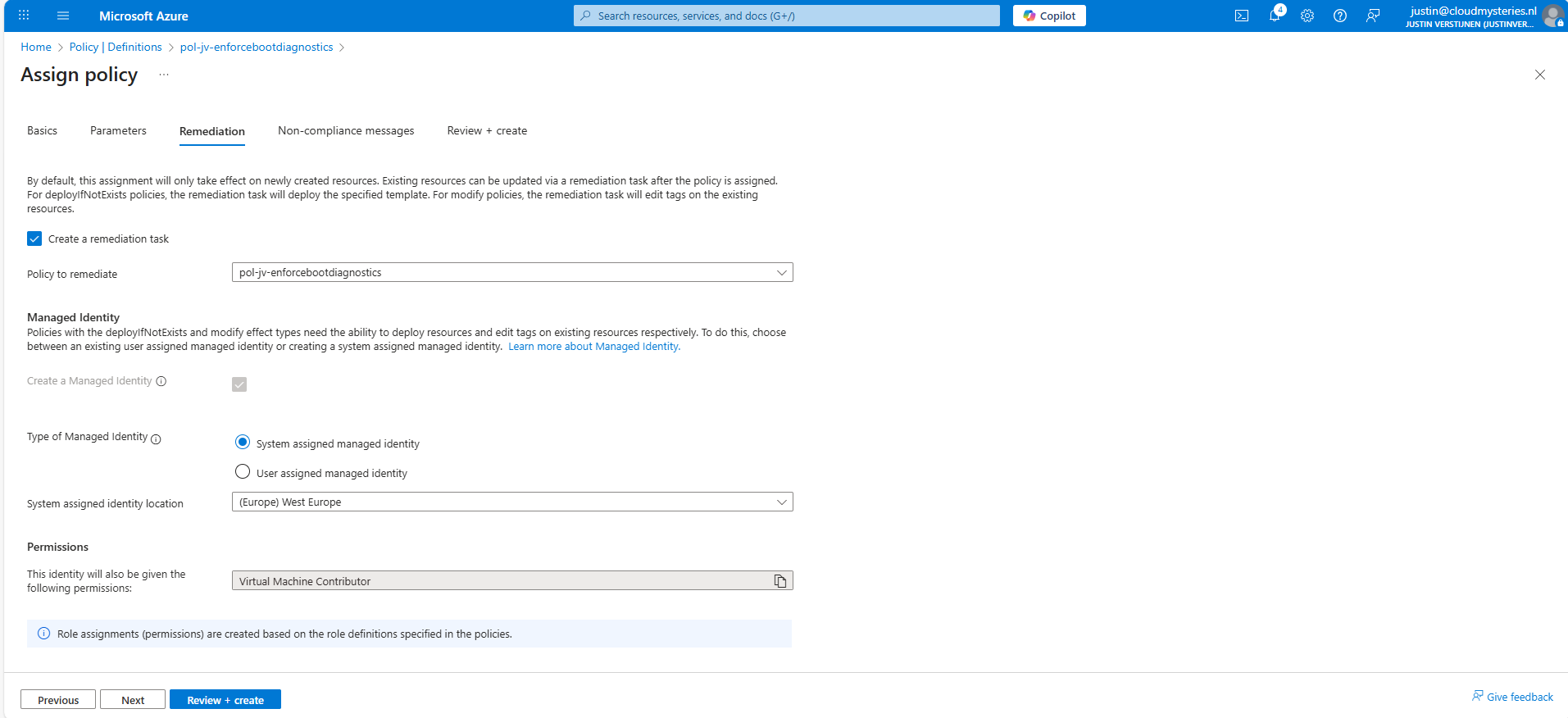

Leave the rest of the page as default and advance to the “Remediation” tab:

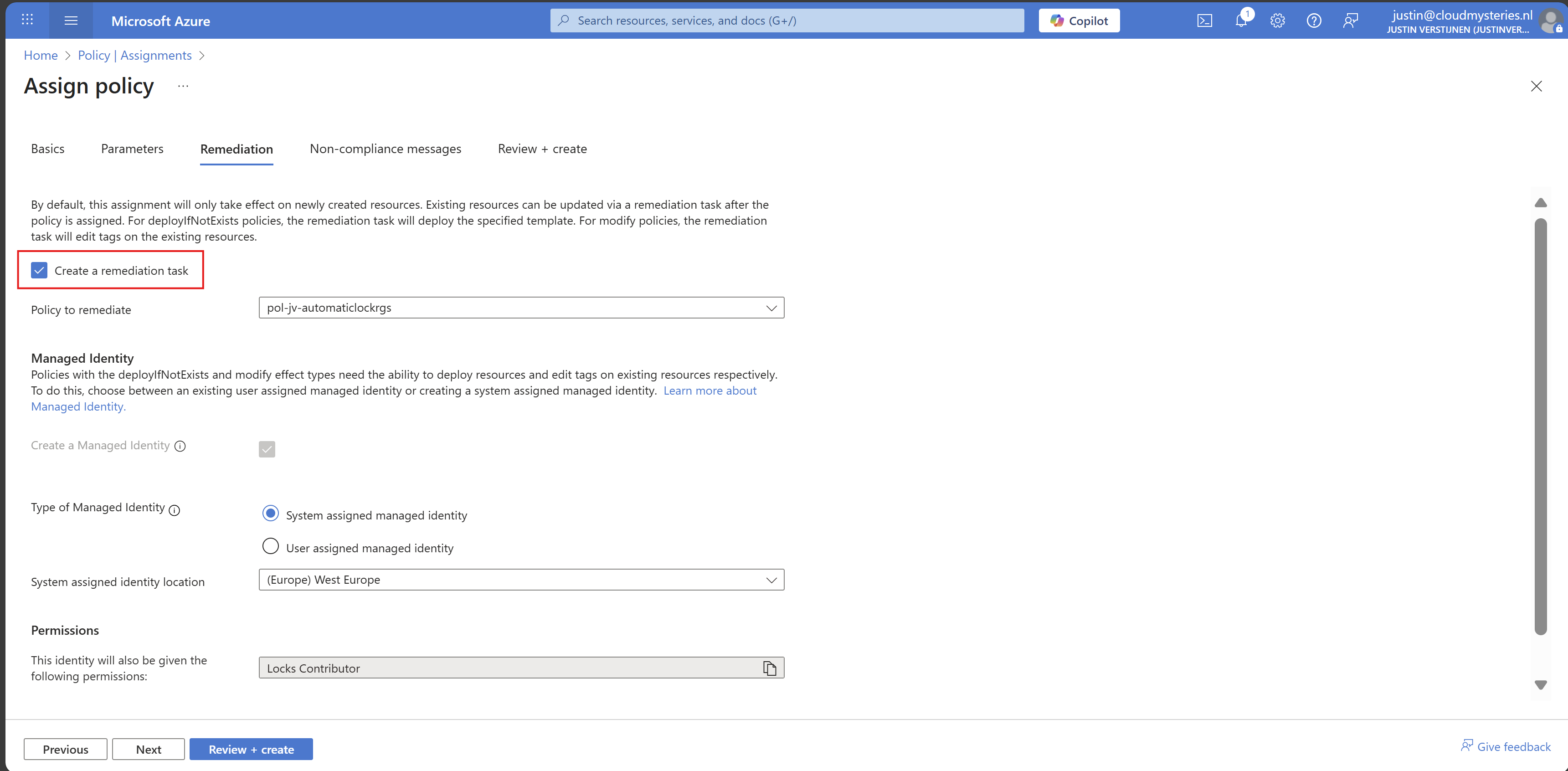

Enable “Create a remediation task” and select your policy if not already there.

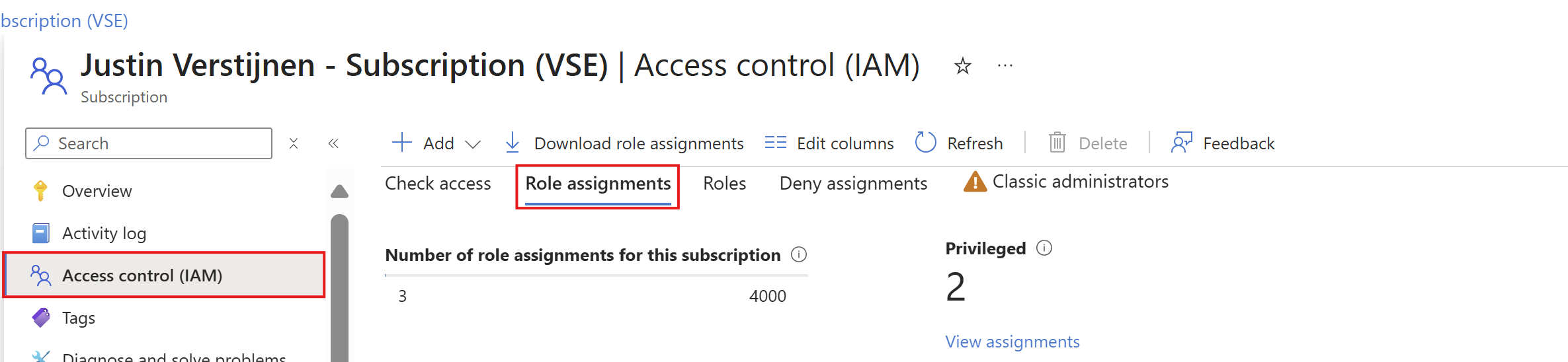

Then we must create a system or user assigned managed identity because changing the boot diagnostics needs permissions. We can use the default system assigned identity here, and that automatically selects the role with the least privileges.

You could forbid the creation of non-compliant virtual machines and leave a custom message, like “our documentation is here -> here”. This would then show up when creating a virtual machine that is not configured to send boot diagnostics to our custom storage account.

Advance to the “Review + create” tab and finish the assignment of the policy.

Step 5: Test the configuration

Now that we have finished the configuration of our Azure Policy, we can test it. After assigning the policy, we have to wait around 30 minutes for it to become active. Once the policy is active, the processing of Azure policies is much faster.

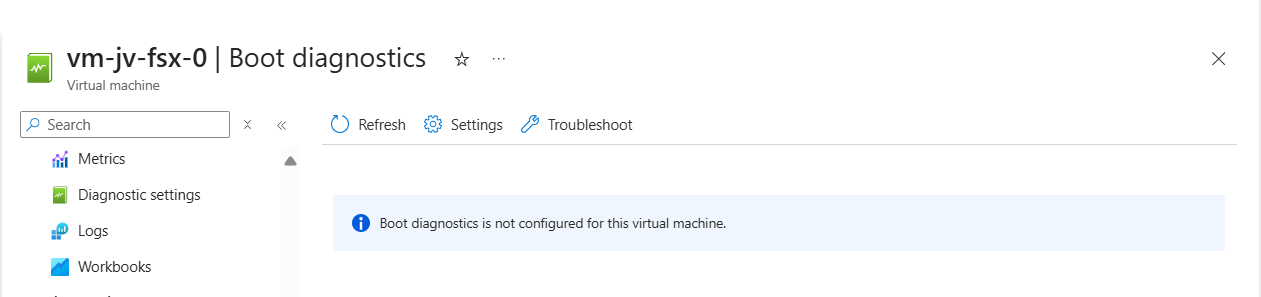

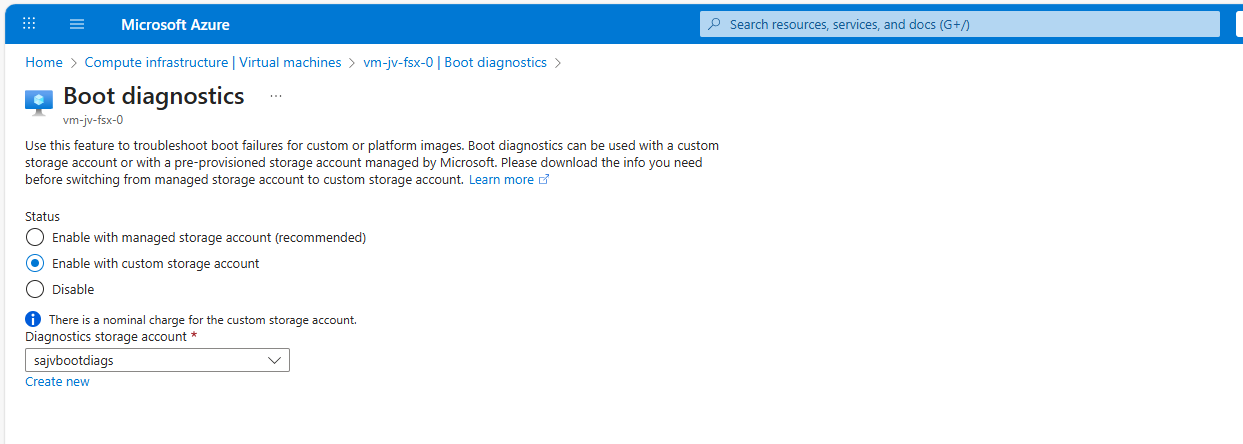

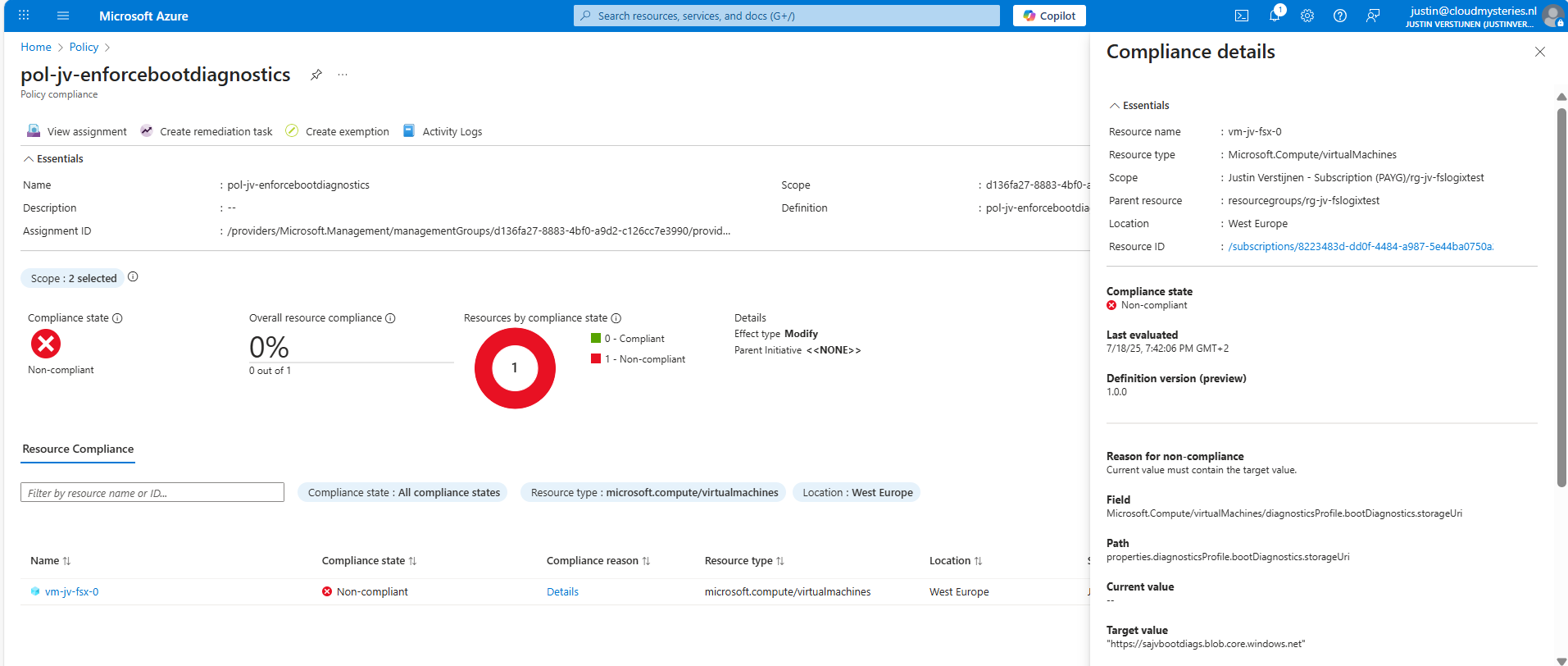

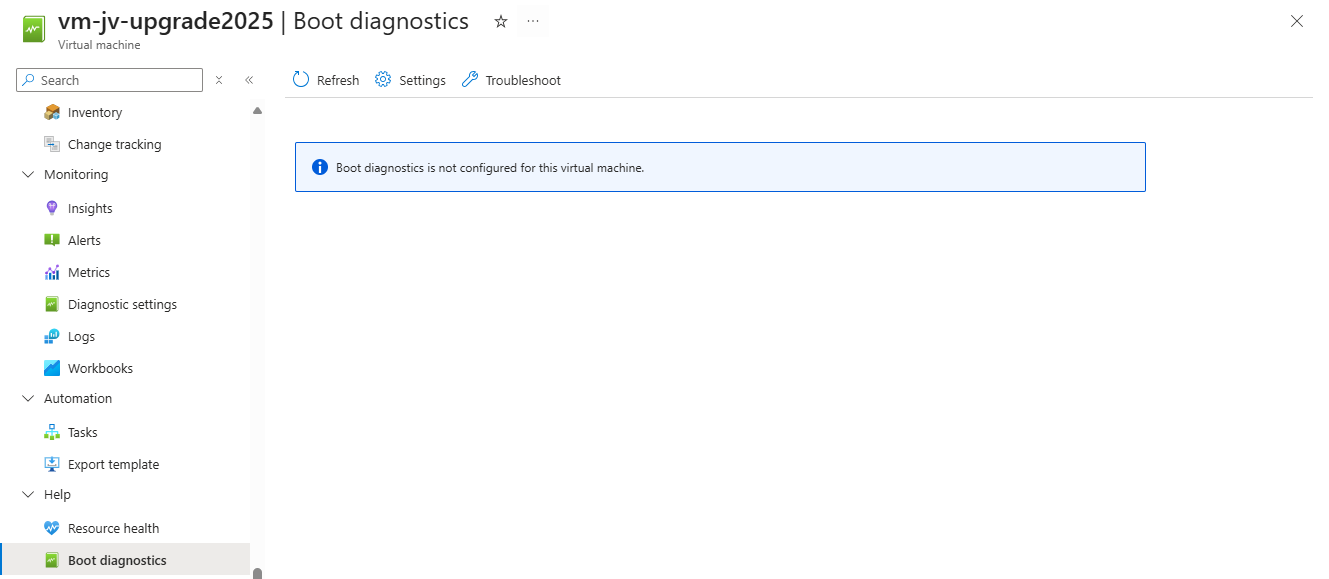

In my environment I have a test machine called vm-jv-fsx-0 with boot diagnostics disabled:

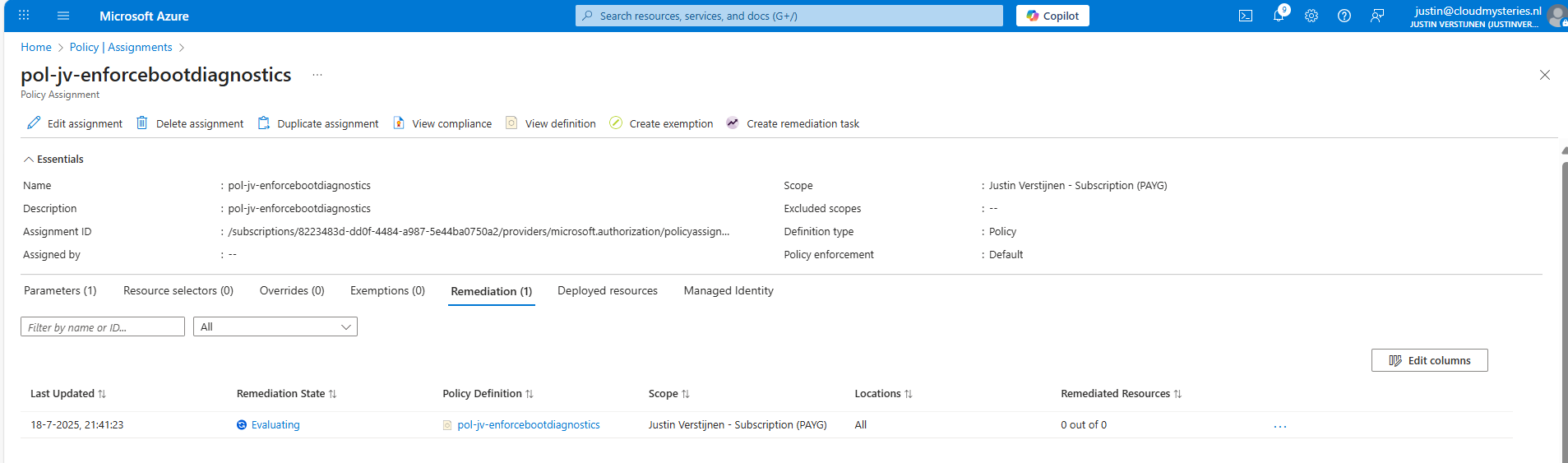

This is just after assigning the policy, so a little patience is needed. We can check the status of the policy evaluation at the policy assignment and then “Remediation”:

After 30 minutes or so, this will automatically be configured:

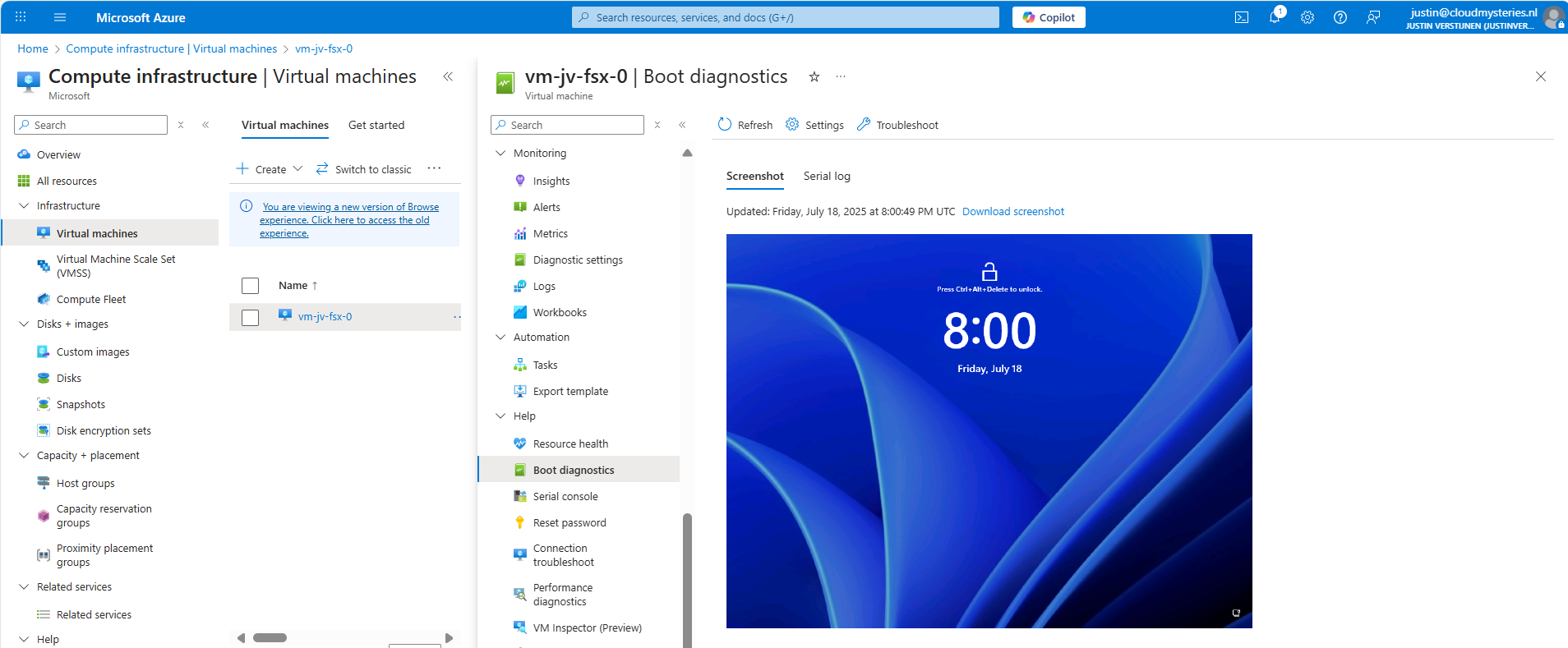

This took about 20 minutes in my case. Now we have access to the boot configuration:

Step 6: Monitor your policy compliance (optional)

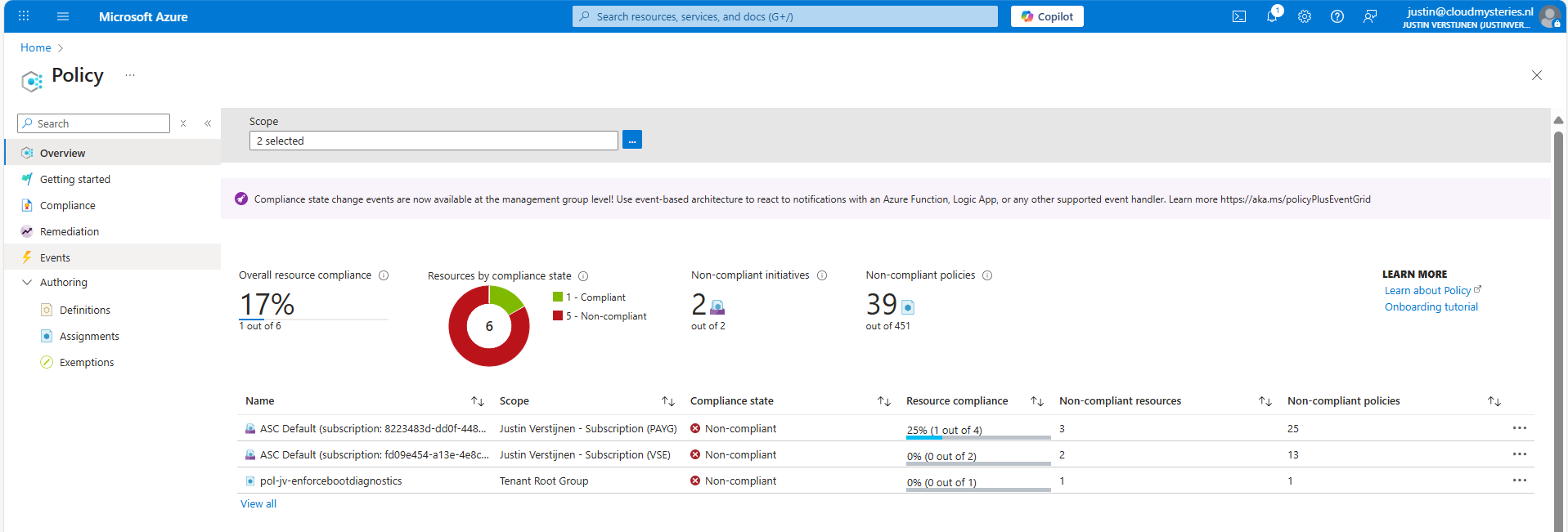

You can monitor the compliance of the policy by going to “Policy” and search for your assignment:

You will see the configuration of the definition, and you can click on “Deployed resources” to monitor the status and deployment.

It will exactly show why the virtual machine is not compliant and what to do to make it compliant. If you have multiple resources, they will all show up.

Summary

Azure Policy is a great way to automate, monitor and ensure your Azure Resources remain compliant with your policies by remediating them automatically. This is only one of the many possible uses of Azure Policy.

I hope I helped you with this guide and thank you for visiting my website.

Sources

These sources helped me with writing and researching this post:

- https://learn.microsoft.com/en-us/azure/governance/policy/overview

- https://learn.microsoft.com/en-us/azure/virtual-machines/boot-diagnostics


Wordpress on Azure

Requirements

- An Azure subscription

- A public domain name to run the website on (not required, but really nice)

- Some basic knowledge about Azure

- Some basic knowledge about IP addresses, DNS and websites

- Around 45 minutes of your time

What is Wordpress?

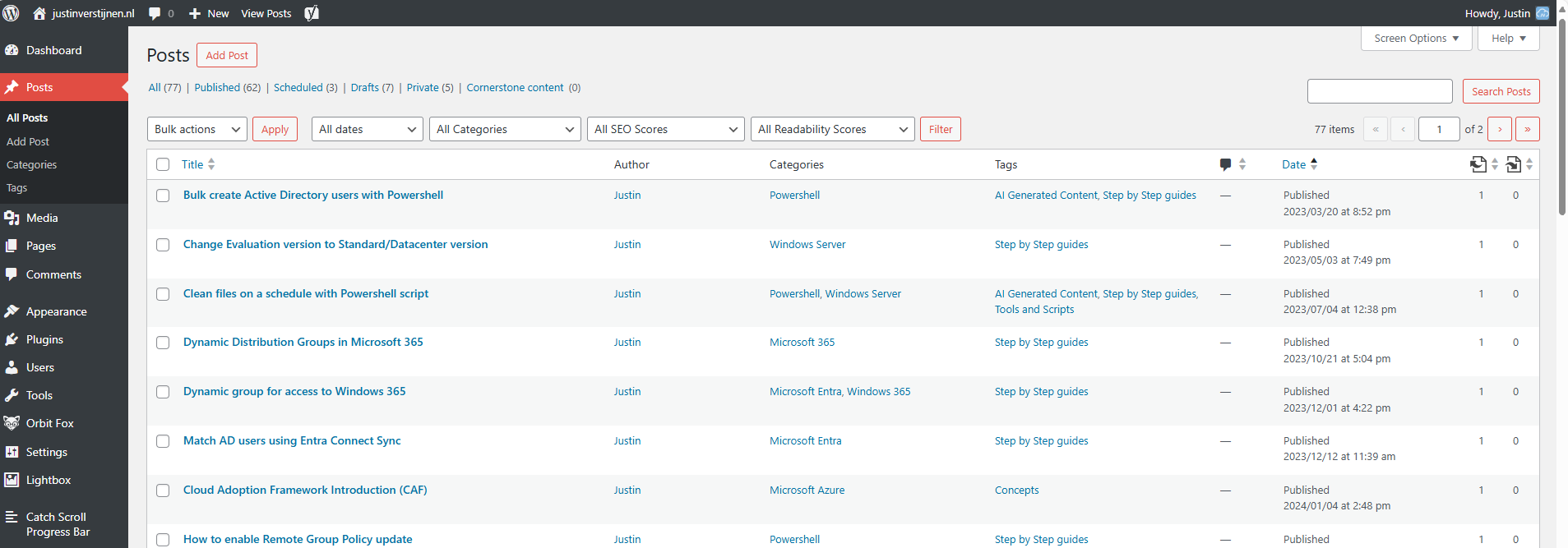

For the people who may not know what Wordpress is; Wordpress is a tool to create and manage websites, without needing to have knowledge of code. It is a so-called content management system (CMS) and has thousands of themes and plugins to play with. This website you see now is also running on Wordpress.

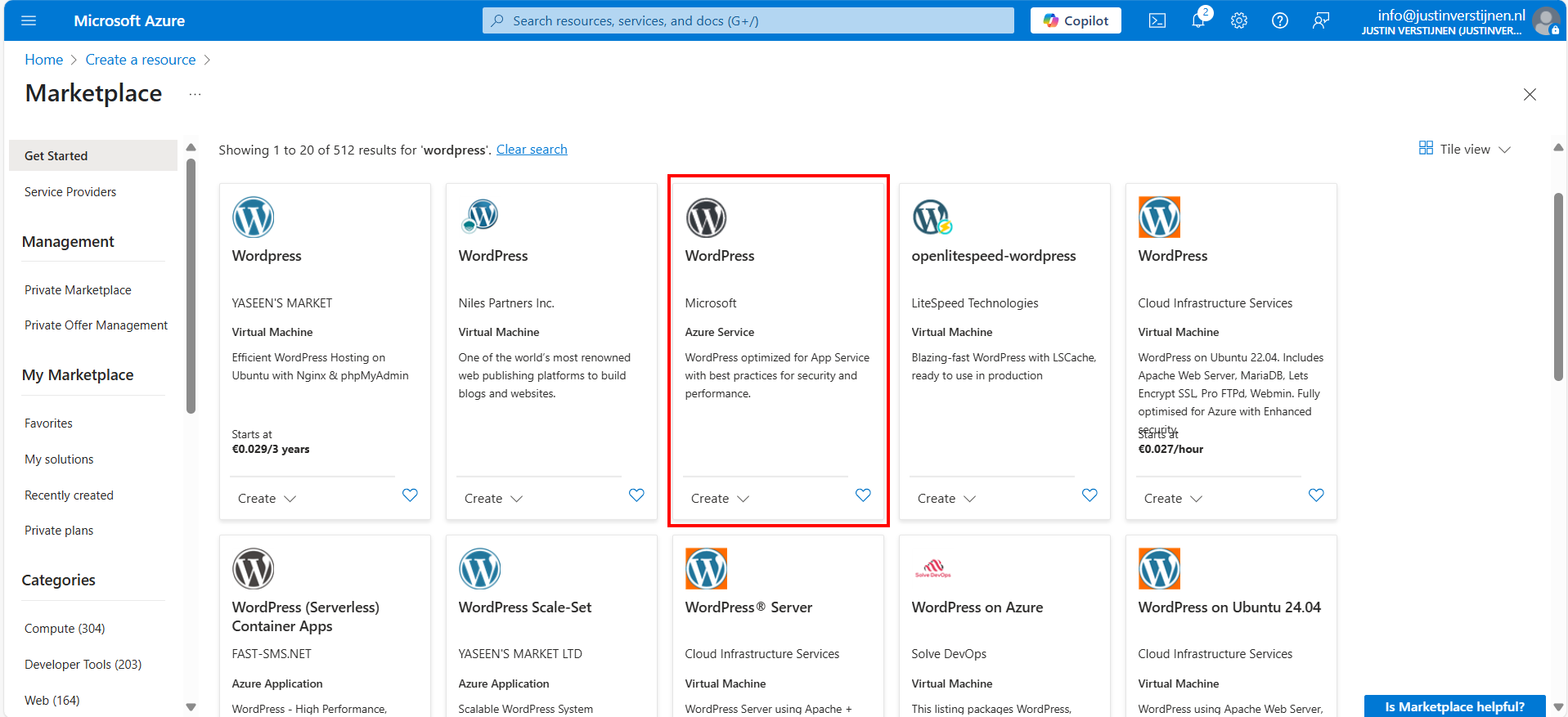

Different Azure Wordpress offerings

When we look at the Azure Marketplace, we have a lot of different Wordpress options available:

Now I want to highlight some different options. Some of these offerings overlap or share the same features and architecture, which is shown in bold in the Azure Marketplace:

- Virtual Machine: This means Wordpress runs on a virtual machine which has to be maintained, updated and secured.

- Azure Service: This is the official offering of Microsoft, completely serverless and relying the most on Azure solutions

- Azure Application: This is an option to run Wordpress on containers or scale sets.

In this guide, we will go for the official Microsoft option, as this has the most support and we are Azure-minded.

Pricing of Wordpress on Azure (Linux)

We have the following plans and prices when running on Linux:

| Plan | Price per month | Specifications | Options and use |
|---|---|---|---|
| Free | $0 | App: F1, 60 CPU minutes a day; Database: B1ms | Not for production use, only for hobby projects. No custom domain and SSL support |
| Basic | ~ $25 (consumption based) | App: B1 (1 core, 1.75 GB RAM); Database: B1s (1 core, 1 GB RAM); no autoscaling and CDN | Simple websites with same performance as free tier, but with custom domain and SSL support |
| Standard | ~ $85 per instance (consumption based) | App: P1v2 (1 core, 3.5 GB RAM); Database: B2s (2 cores, 4 GB RAM) | Simple websites that also need multiple instances for testing purposes. Also double the performance of the Basic plan. No autoscaling included. |
| Premium | ~ $125 per instance (consumption based) | App: P1v3 (2 cores, 8 GB RAM); Database: D2ds_V4 (2 cores, 16 GB RAM) | Production websites with high traffic and option for autoscaling |

For the Standard and Premium offerings there is also an option to reserve your instance for a year for a 40% discount.
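To get a feeling for what the reservation means in money, here is a small worked calculation in Python. It uses the approximate monthly prices from the table and assumes, for illustration only, that the 40% discount applies to the full monthly figure. Actual prices depend on region and consumption.

```python
def reserved_monthly_price(list_price: int, discount_percent: int = 40) -> float:
    """Monthly price after a percentage discount on the approximate list price."""
    return list_price * (100 - discount_percent) / 100


for plan, price in {"Standard": 85, "Premium": 125}.items():
    reserved = reserved_monthly_price(price)
    saved_per_year = (price - reserved) * 12
    print(f"{plan}: ~${price} -> ~${reserved:.0f} per instance per month, "
          f"~${saved_per_year:.0f} saved per year")
```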

Architecture of the Wordpress solution

The Wordpress solution of Microsoft looks like this:

We start with Azure Front Door as load balancer and CDN, then we have our App Service instances (1 to 3), which communicate with the private databases, and that’s it. The App Service instances have their own delegated subnet (appsubnet) and the database instances have their own delegated subnet (dbsubnet).

This architecture is very flexible, scalable and focusses on high availability and security. It is indeed more complex than one virtual machine, but it’s better too.

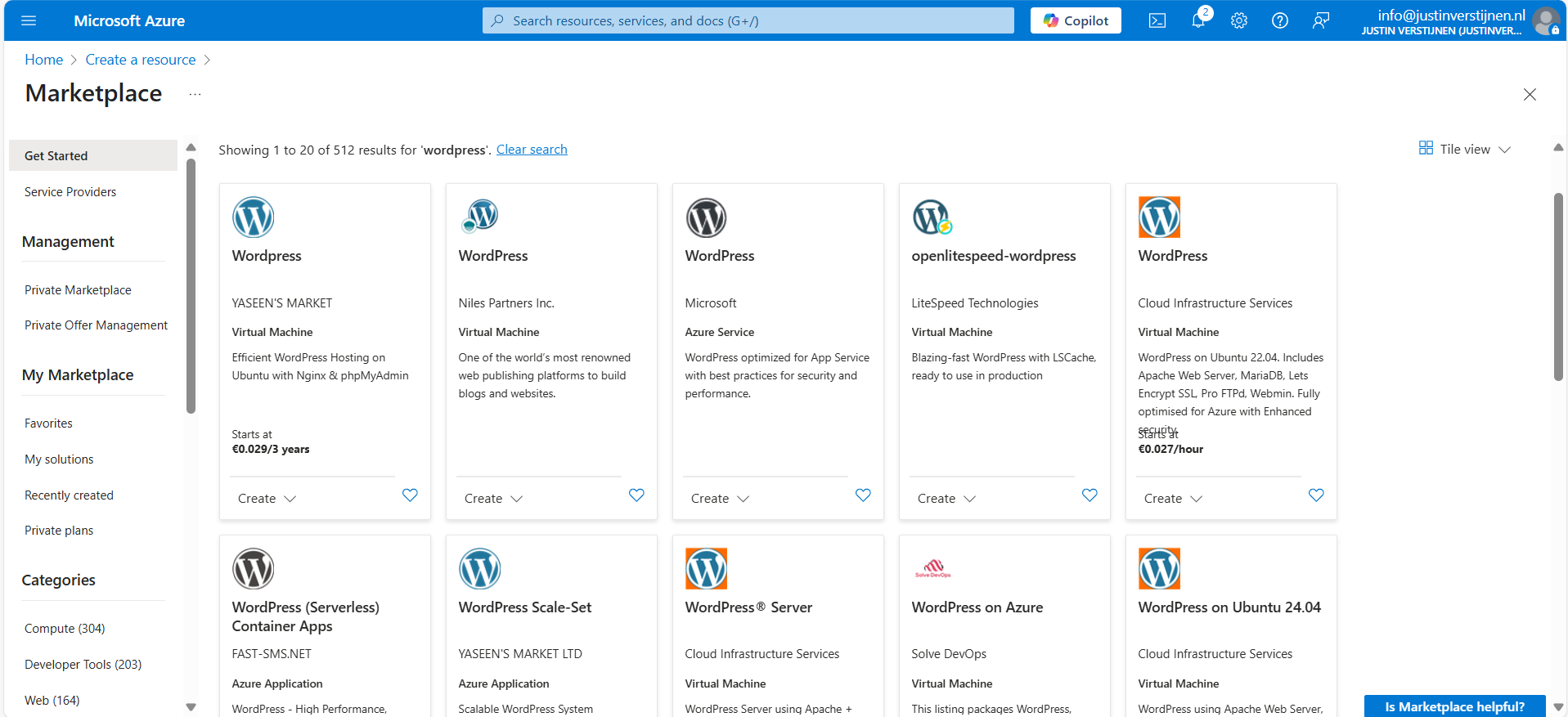

Backups of Wordpress

Backups of the whole Wordpress solution are included in the monthly price. Every hour Azure will take a backup of the App Service instance and storage account, starting from the time of creation:

I think this is really cool and a great pro that this will not take an additional 10 dollars per month.

Step 1: Preparing Azure

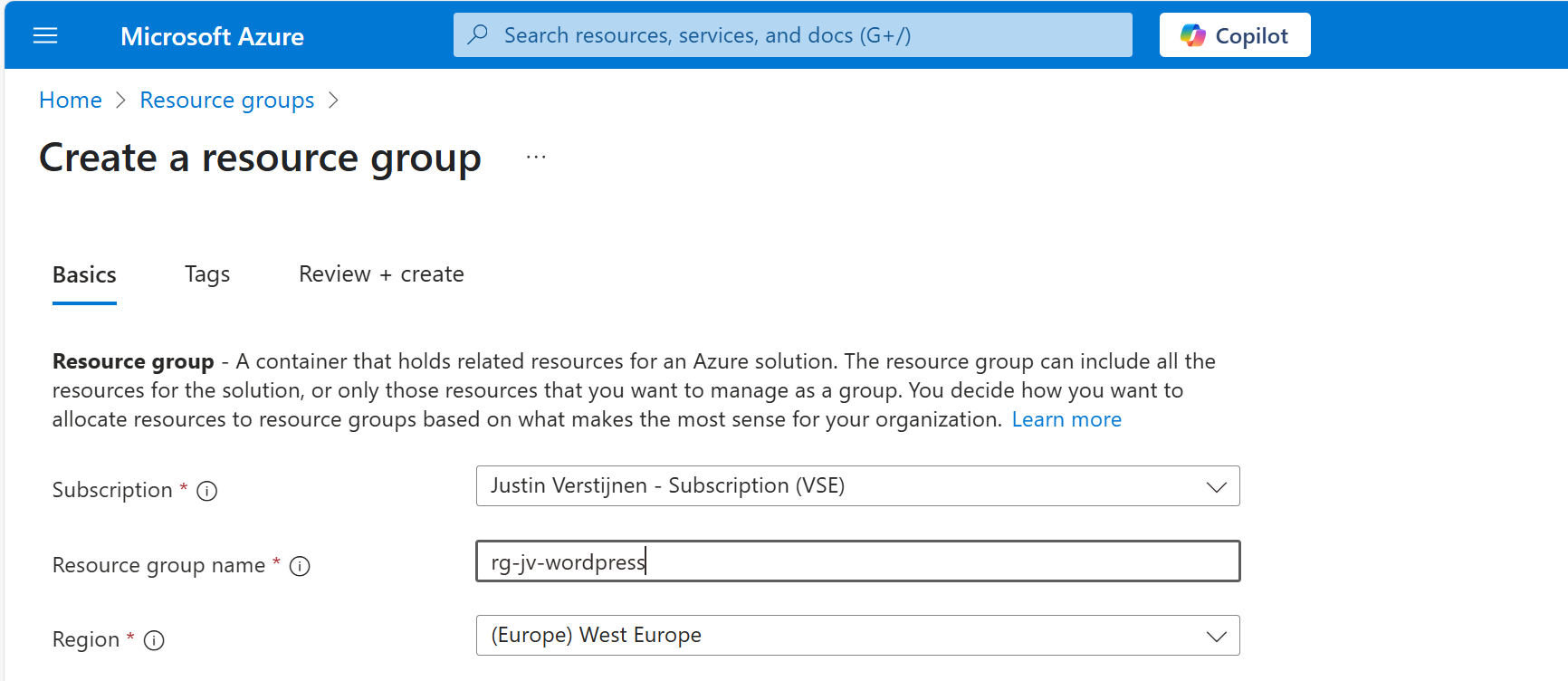

We have to prepare our Azure environment for Wordpress. We begin by creating a resource group to throw in all the dependent resources of this Wordpress solution.

Login to Microsoft Azure (https://portal.azure.com) and create a new resource group:

Finish the wizard. Now the resource group is created and we can advance to deploy the Wordpress solution.

Step 2: Deploy the Wordpress solution

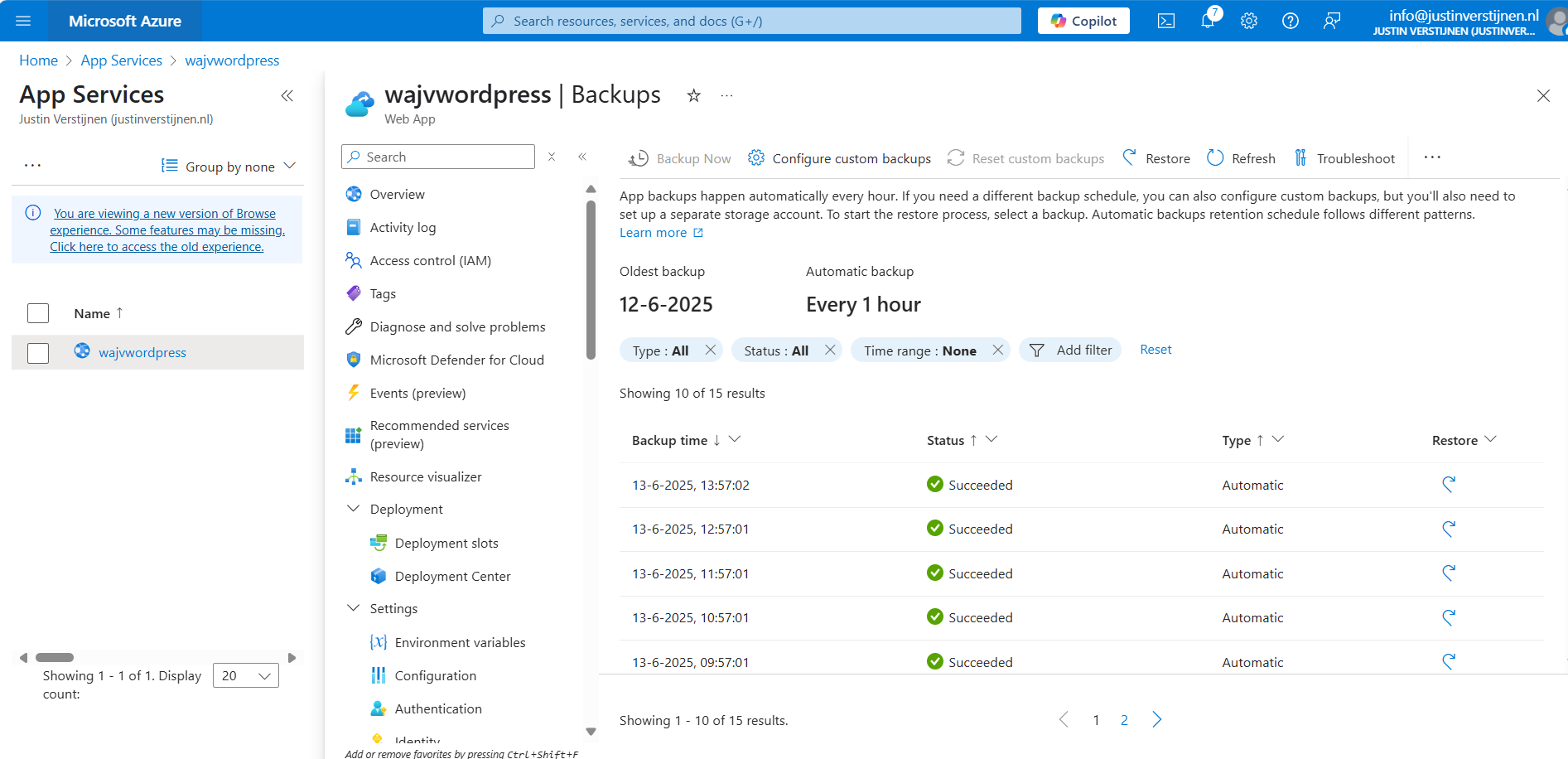

We can go to the Azure Marketplace now to search for the Wordpress solution published by Microsoft:

In this guide, we will use the Microsoft offering. You are free to choose other options, but some steps will not align with this guide.

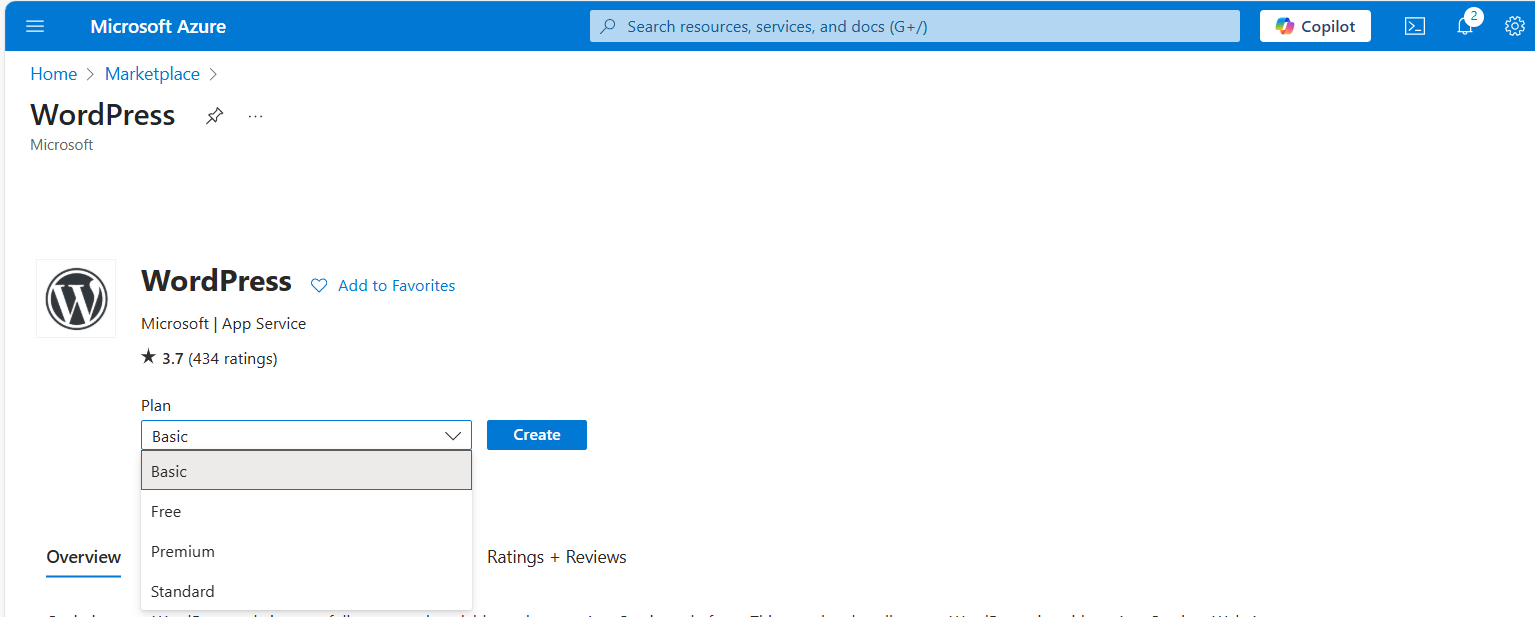

Now after selecting the option, we have 4 different plans which we can choose. This mostly depends on how big you want your environment to be:

For this guide, we will choose the Basic plan as we want to actually host on a custom domain name. Select the Basic plan and continue.

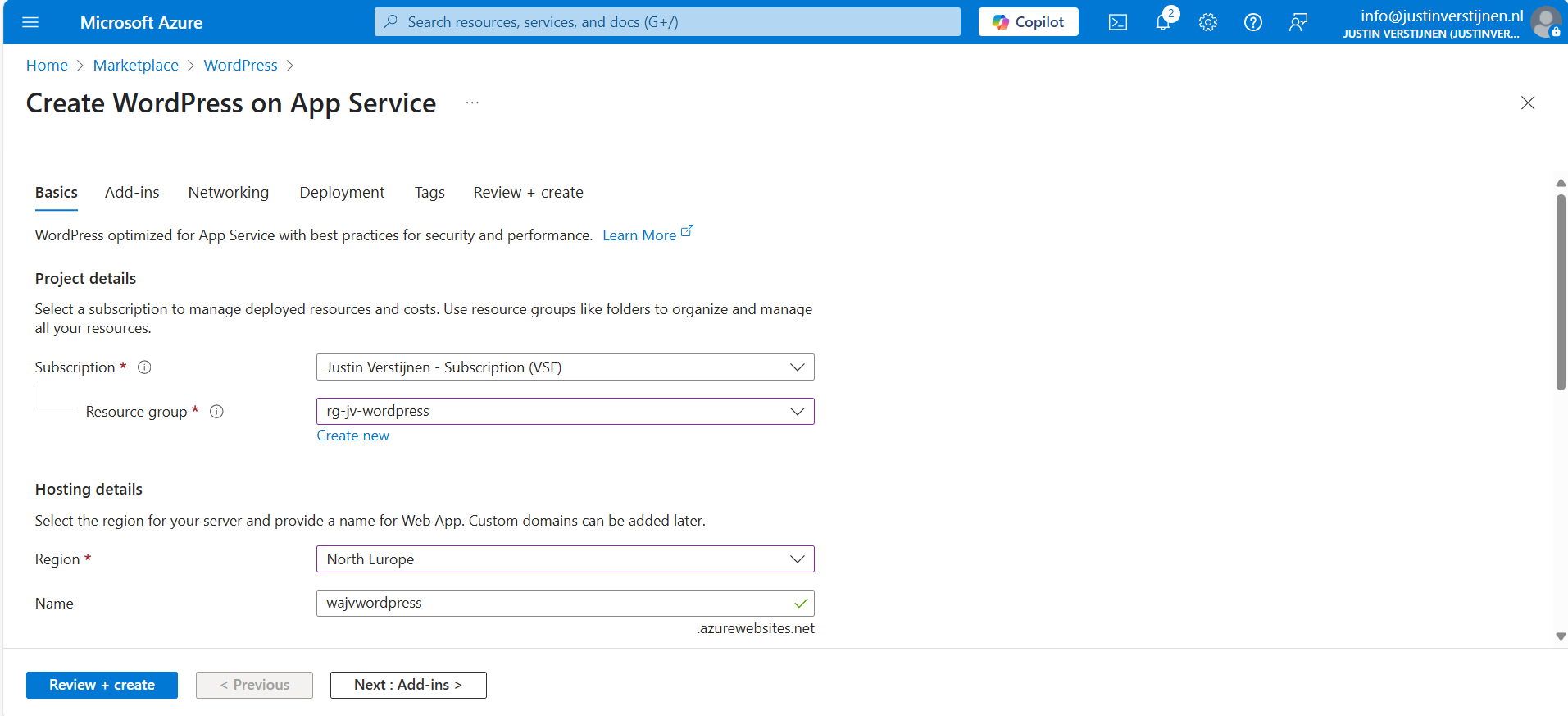

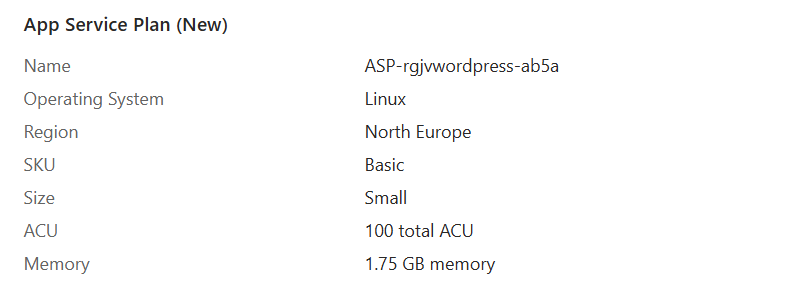

Resource group and App Service plan

Choose your resource group and choose a resource name for the Web app. This becomes part of a URL, so it may contain only lowercase letters, numbers and hyphens (and must not end with a hyphen).
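Because the resource name ends up in the default URL of the site, it can help to check it up front. The Python sketch below encodes only the rules mentioned in this guide (lowercase letters, numbers and hyphens, not ending with a hyphen) and builds the default `azurewebsites.net` hostname from it. The function names are made up, and Azure may enforce additional rules in the wizard.

```python
import re

# Lowercase letters, digits and hyphens; the last character may not be a hyphen.
WEB_APP_NAME_PATTERN = re.compile(r"[a-z0-9-]*[a-z0-9]")


def is_valid_web_app_name(name: str) -> bool:
    """Check a Web app name against the rules described in this guide."""
    return WEB_APP_NAME_PATTERN.fullmatch(name) is not None


def default_hostname(name: str) -> str:
    """Return the default hostname Azure gives the Web app."""
    if not is_valid_web_app_name(name):
        raise ValueError(f"invalid Web app name: {name!r}")
    return f"{name}.azurewebsites.net"


print(default_hostname("wajvwordpress"))
```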

Scroll down and choose the “Basic” hosting plan. This is for the Azure App Service that is being created under the hood.

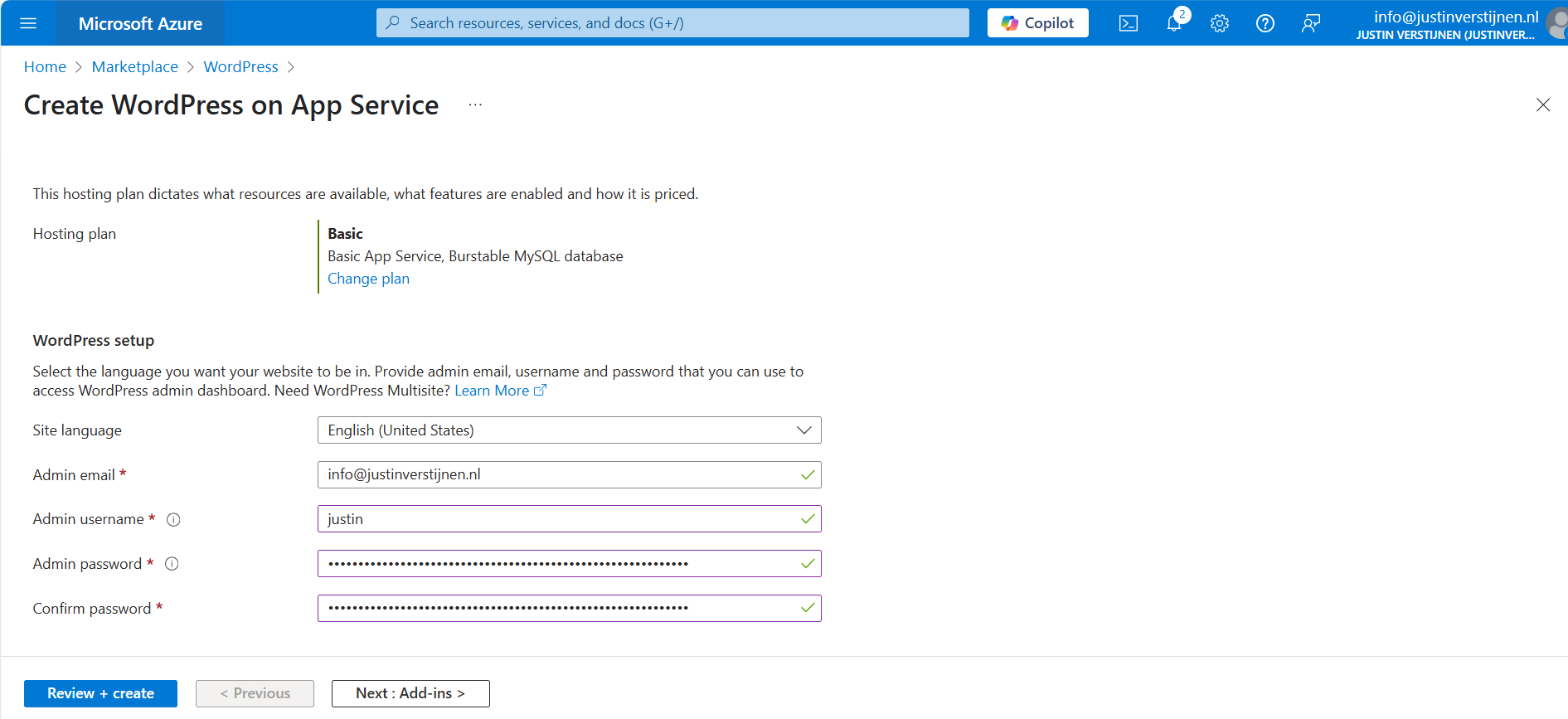

Wordpress setup

Then fill in the Wordpress Setup menu, this is the admin account for Wordpress that will be created. Fill in your email address, username and use a good password. You can also generate one with my password generator tool: https://password.jvapp.nl/

Click on “Next: Add ins >”

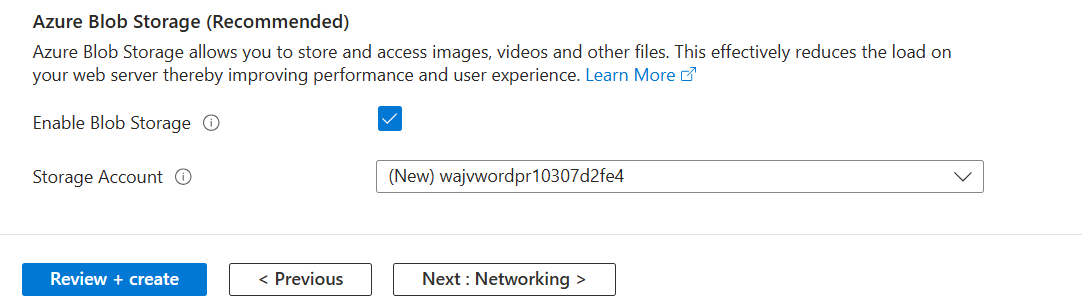

Add-ins

On the Add-ins page, I left all options at their defaults but enabled the Azure Blob Storage. This is where media files like images and documents are stored.

This automatically creates a storage account. Then go to the “Networking” tab.

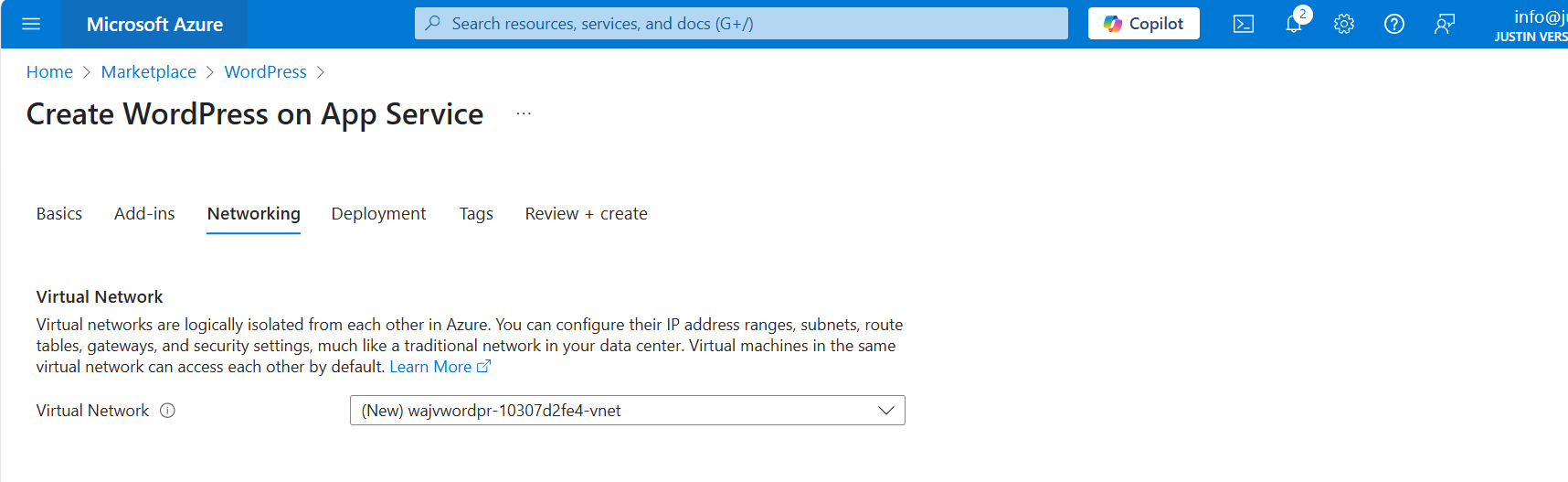

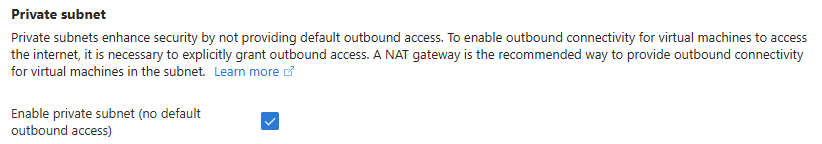

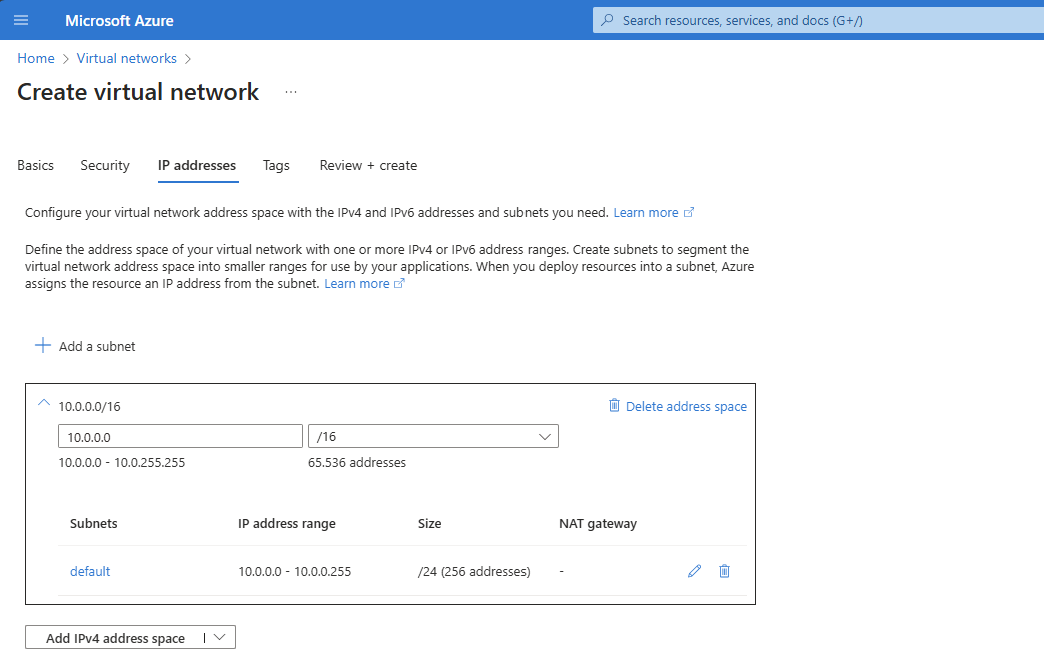

Networking

On the networking tab, we have to select a virtual network. This is because the database is hosted on a private network that is not publicly accessible. When using an existing Azure network, select your own network. In my case, I stick to the automatically generated network.

When using your own network, you have to create 2 subnets:

- appsubnet

- dbsubnet
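If you bring your own network, the two subnets need non-overlapping address ranges inside the virtual network. As a sketch, Python's `ipaddress` module can split an address range into two equal halves. The 10.0.0.0/23 range is purely an example, and in a real network you would usually reserve only part of the address space for these two subnets.

```python
import ipaddress


def plan_wordpress_subnets(address_space: str) -> dict[str, str]:
    """Split an address range into two equal subnets for the app and the database."""
    network = ipaddress.ip_network(address_space)
    app_subnet, db_subnet = network.subnets(prefixlen_diff=1)
    return {"appsubnet": str(app_subnet), "dbsubnet": str(db_subnet)}


# Example range only; use a free range from your own virtual network.
plan = plan_wordpress_subnets("10.0.0.0/23")
print(plan)
```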

Click on “Next” and finish the wizard. For the basic plan, there are no additional options available.

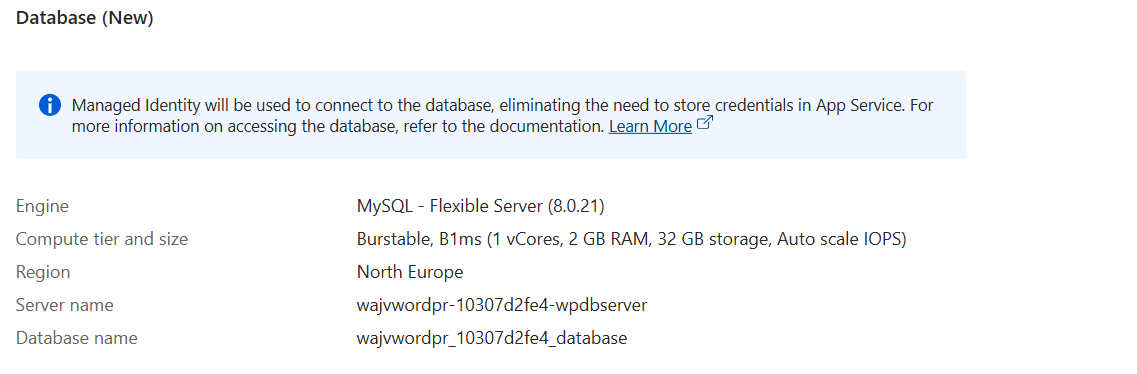

You will see at the review page that both the App service instance and the Database are being created.

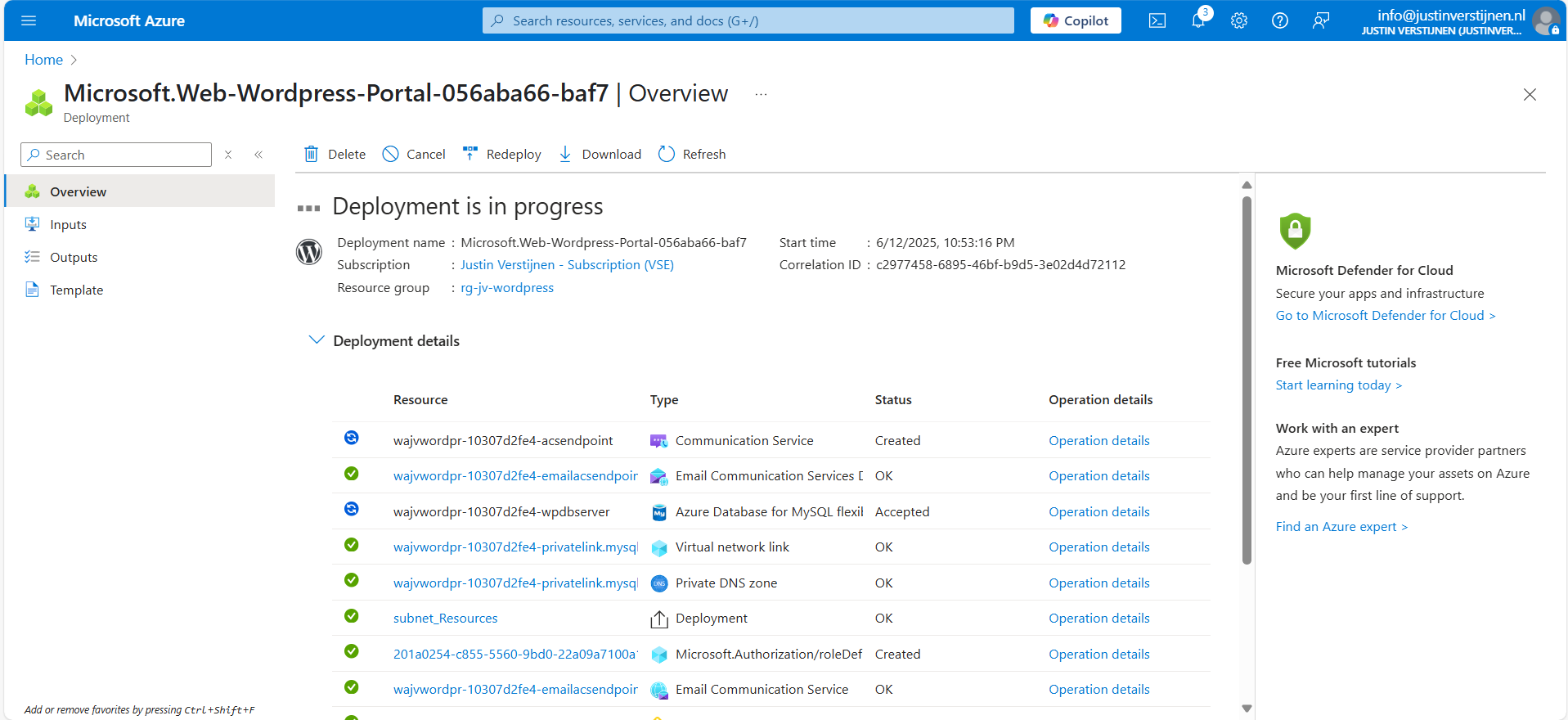

Deployment in progress

Now the deployment is in progress and you can see that a whole lot of resources are being created to make the Wordpress solution work. The nice thing about the Marketplace offerings is that they are pre-configured, and we only have to set some variables and settings like we did in Step 2.

The deployment took around 15 minutes in my case.

Step 3: Logging into Wordpress and configure the foundation

Now we are not going very deep into Wordpress itself, as this guide will only describe the process of building Wordpress on Azure. I have some post-installation recommendations for you to do which we will follow now.

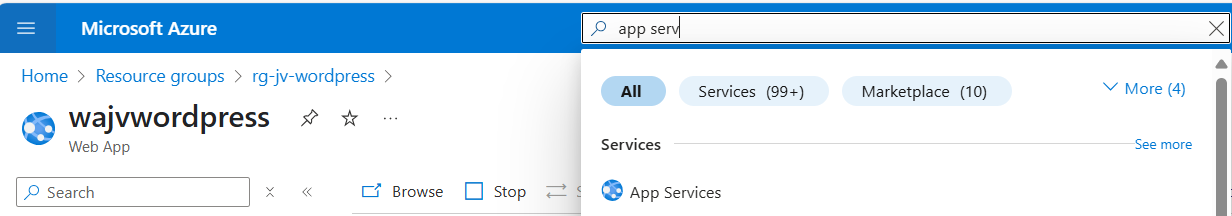

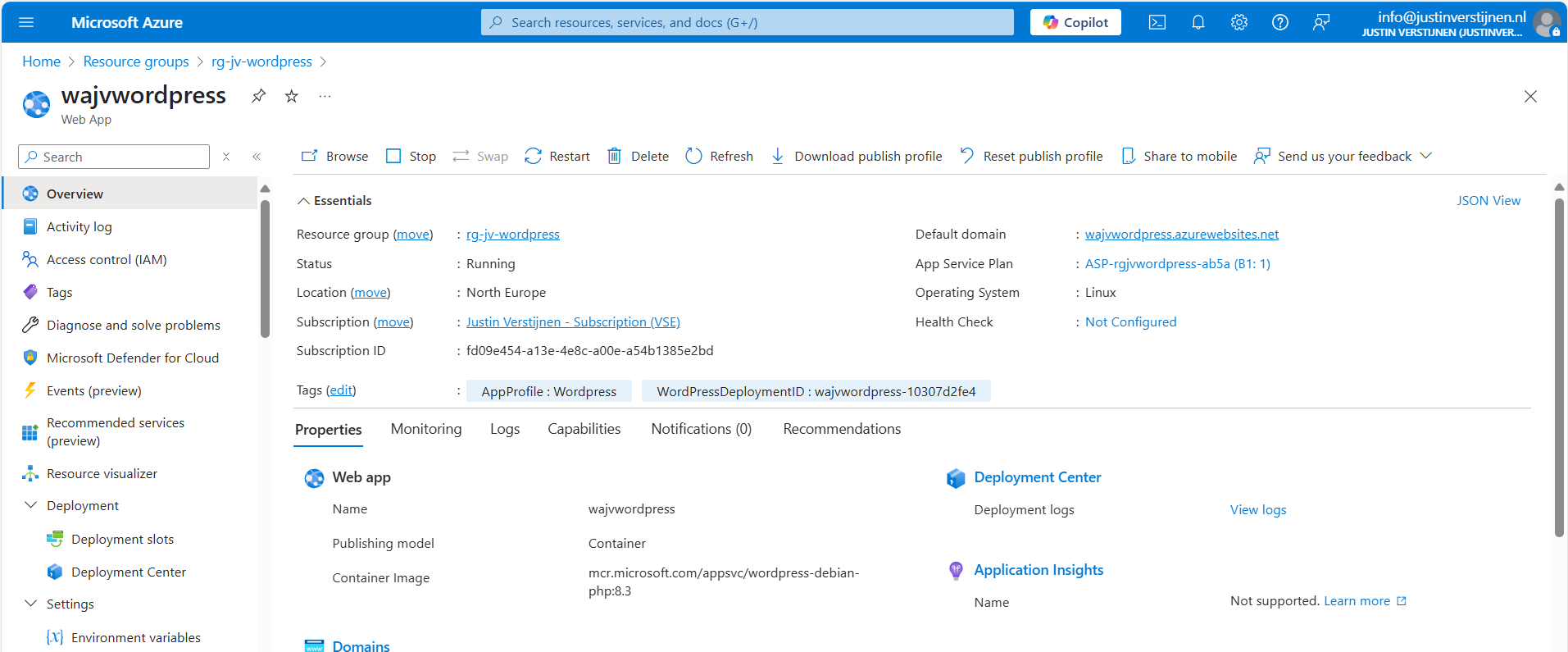

Now that the solution is deployed, we can go to the App Service in Azure by typing it in the bar:

There you can find the freshly created App Service. Let’s open it.

Here you can find the Web App instance the wizard created and the URL of Azure with it. My URL is:

- wajvwordpress.azurewebsites.net

We will configure our custom domain in step 4.

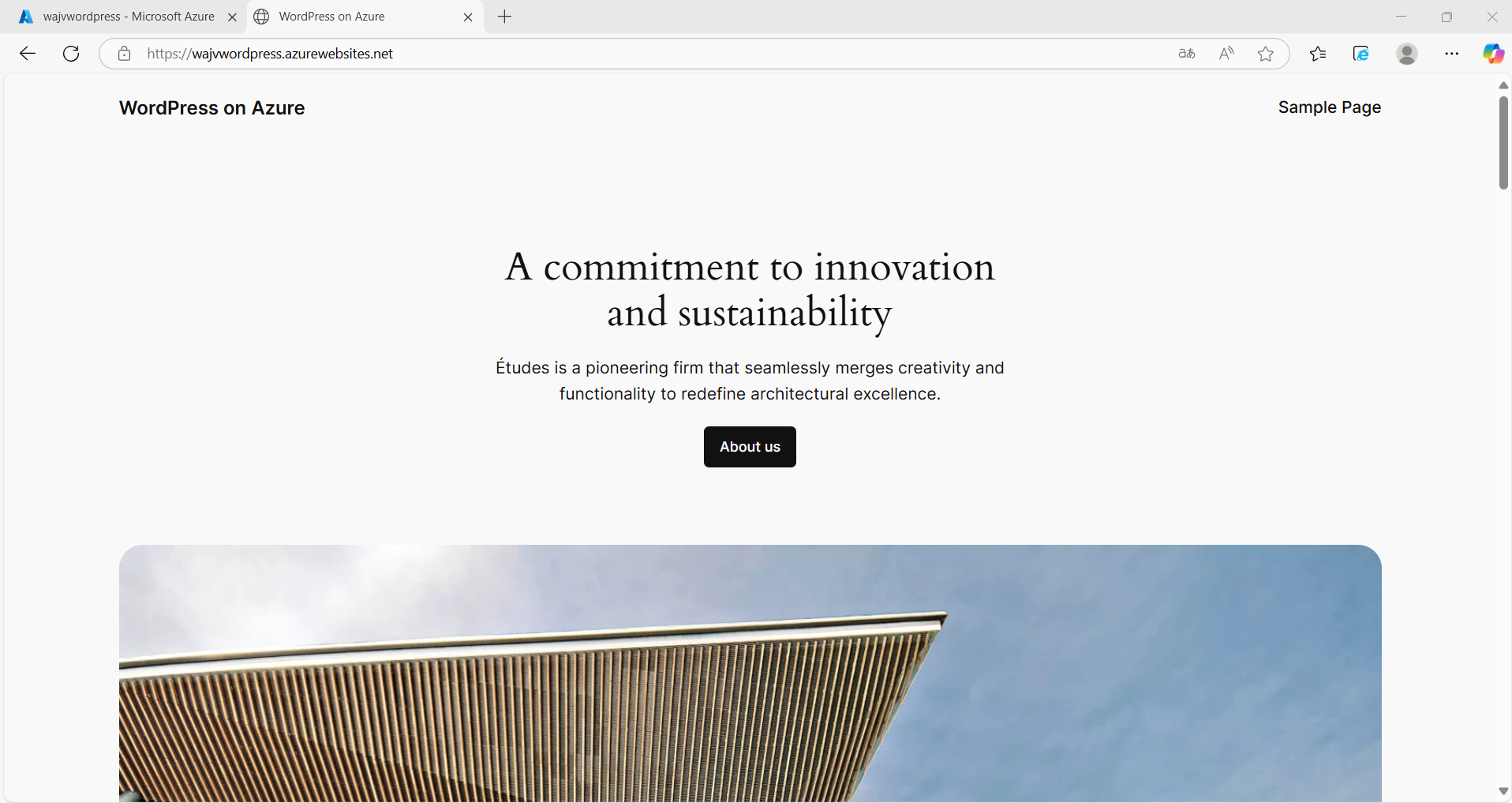

Wordpress Website

We can navigate to this URL to get the template website Wordpress created for us:

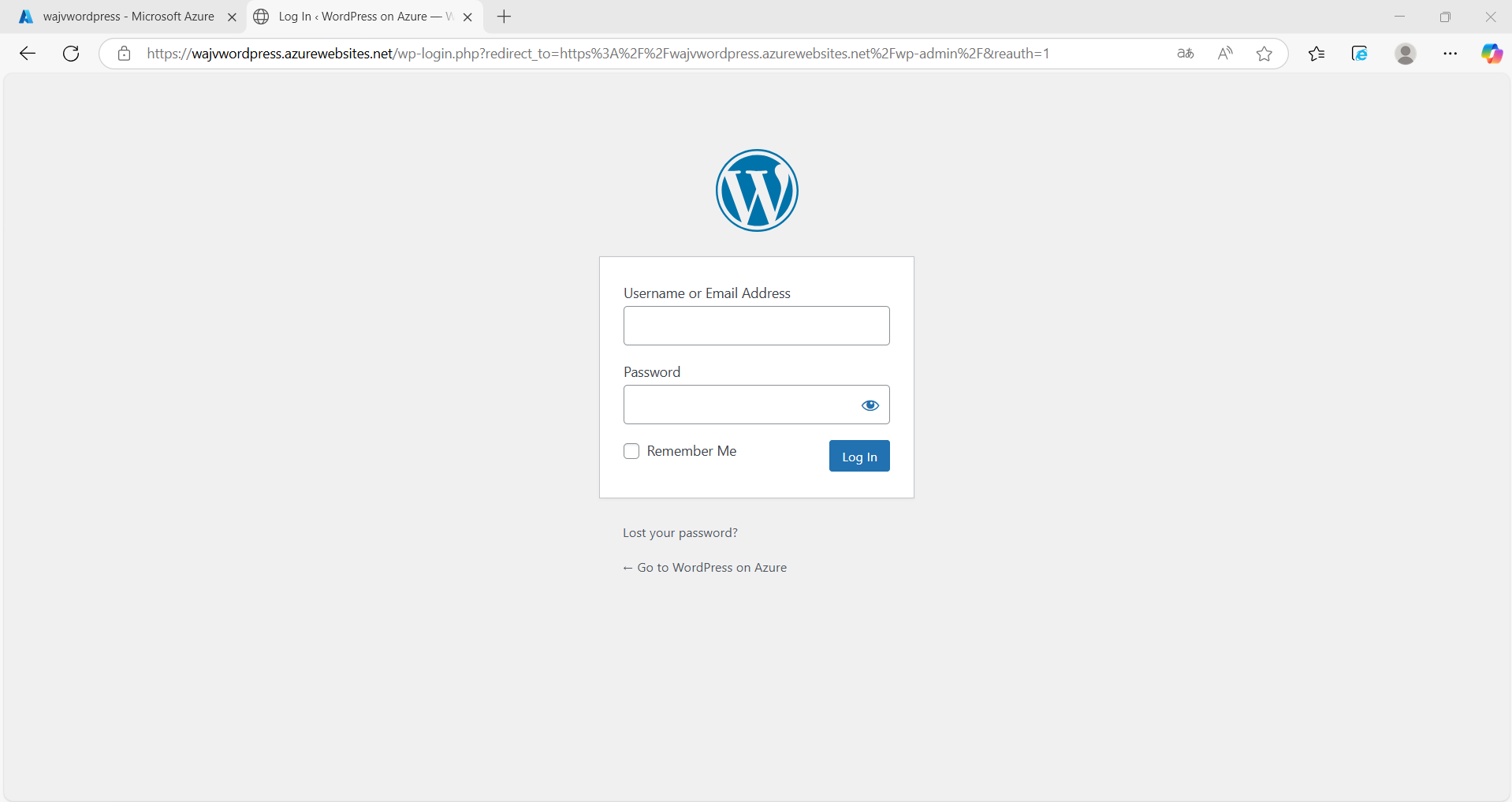

Wordpress Admin

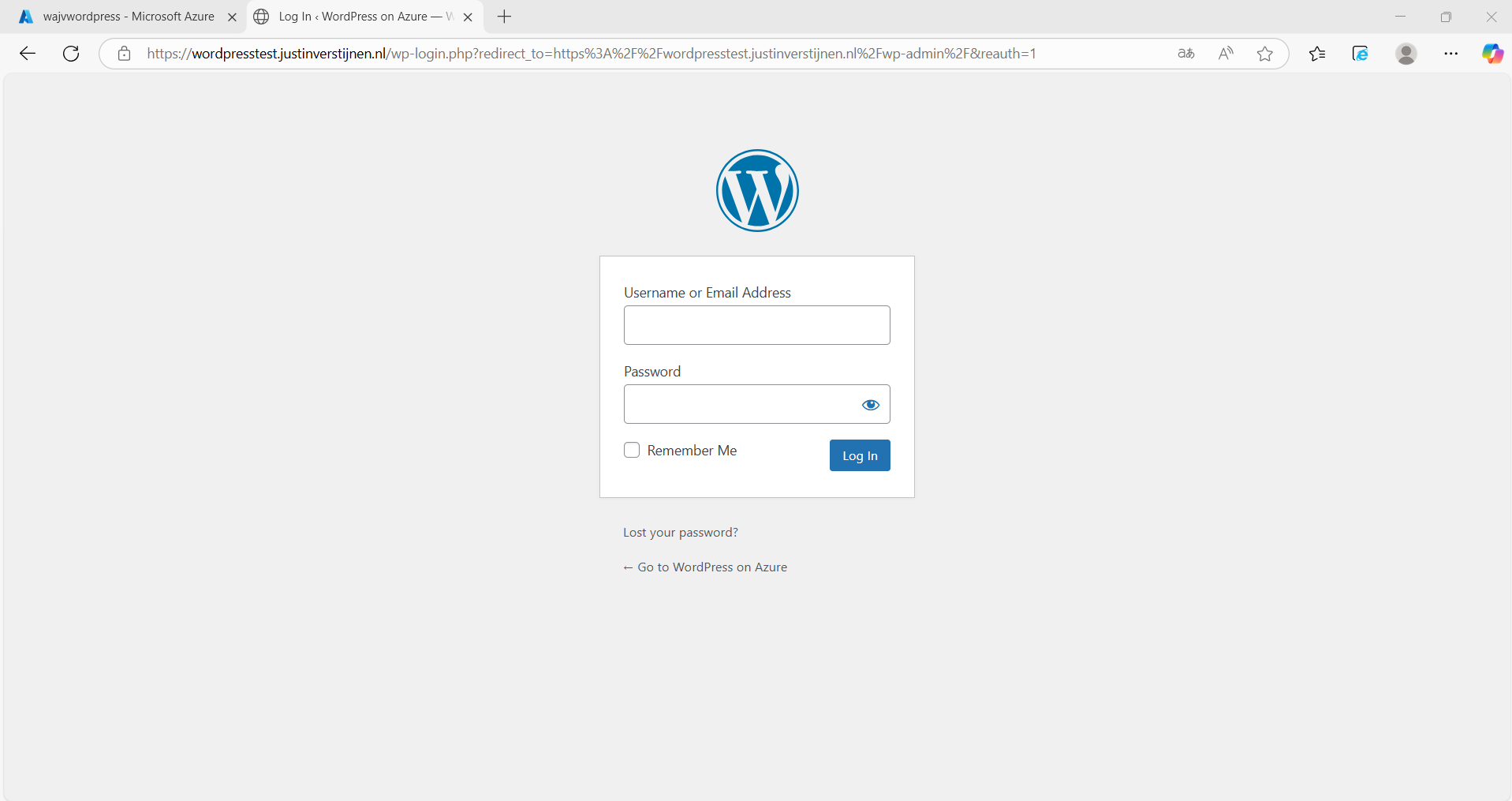

We want to configure our website. This can be done by adding “/wp-admin” to our URL:

- wajvwordpress.azurewebsites.net/wp-admin

Now we will get the Administrator login of Wordpress:

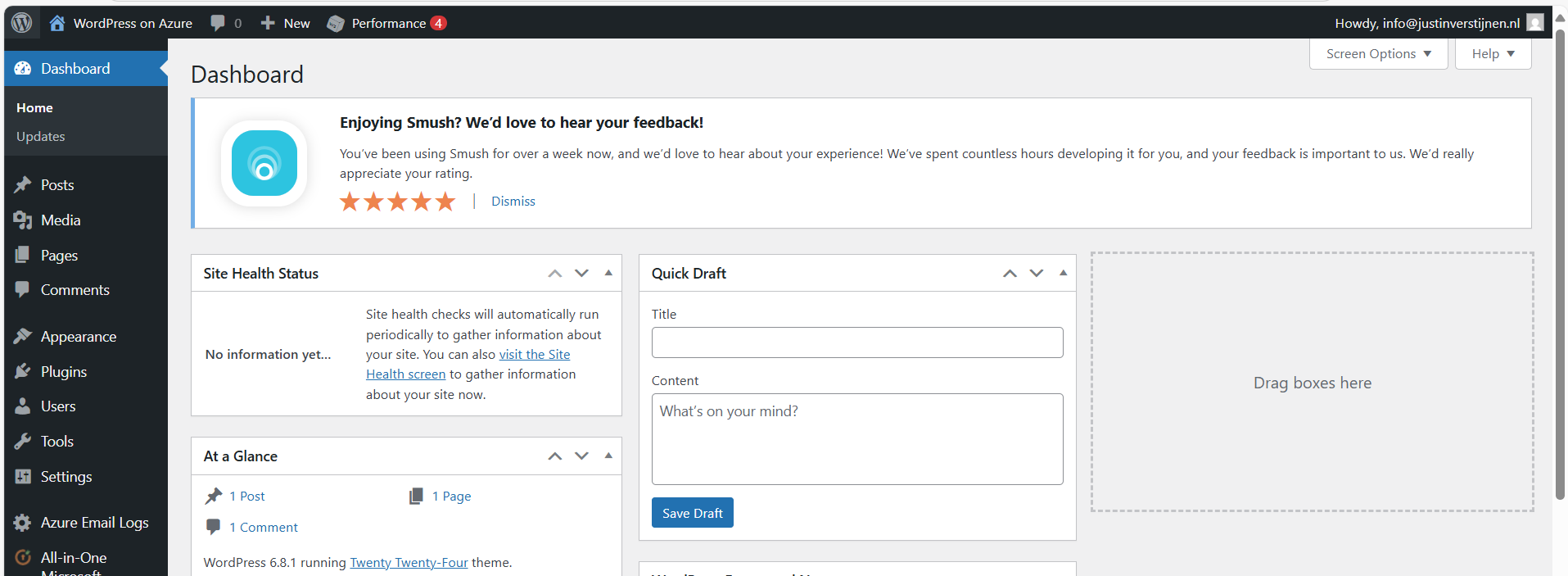

Now we can log in to Wordpress with the credentials of Step 2: Wordpress setup

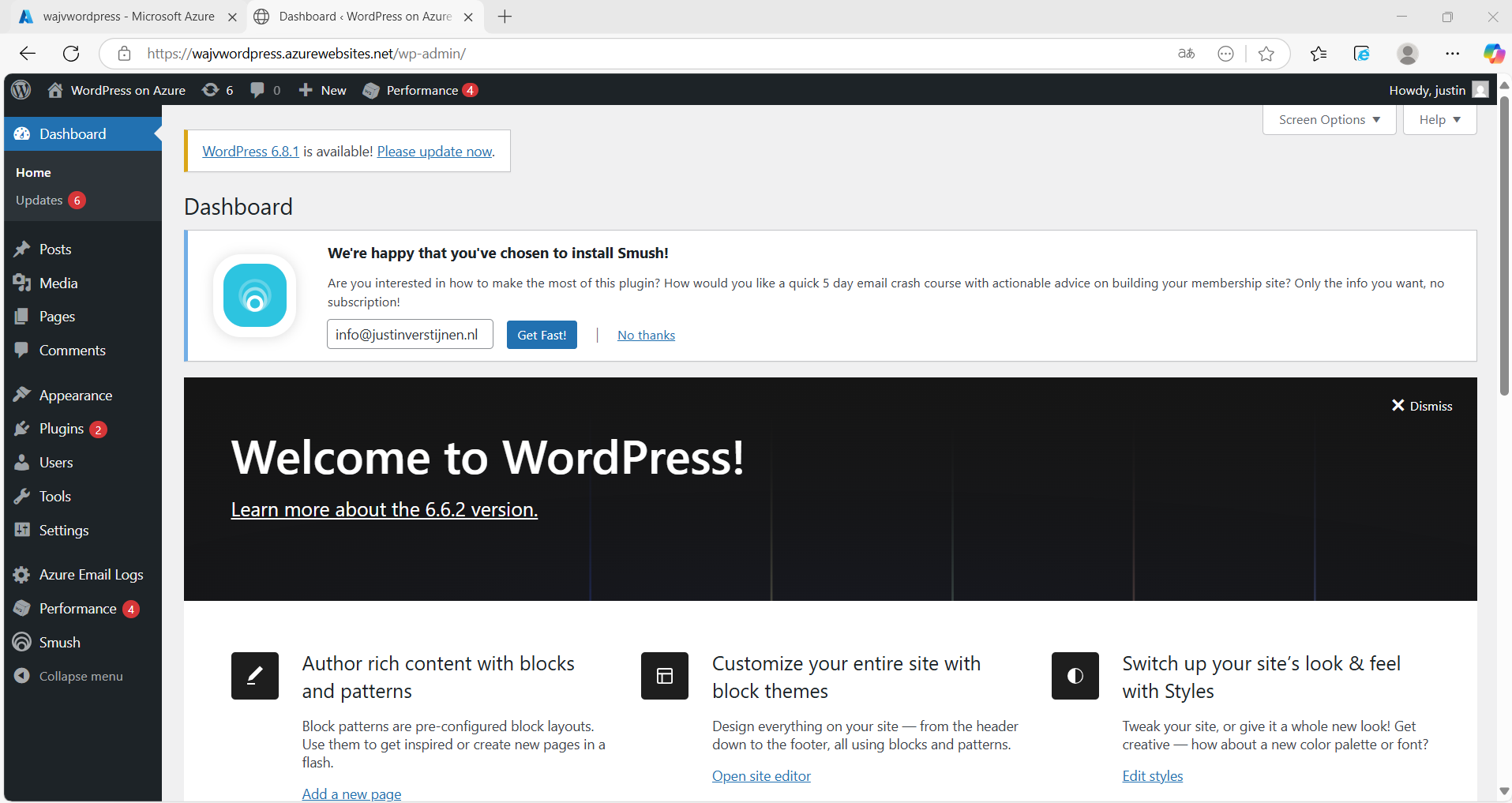

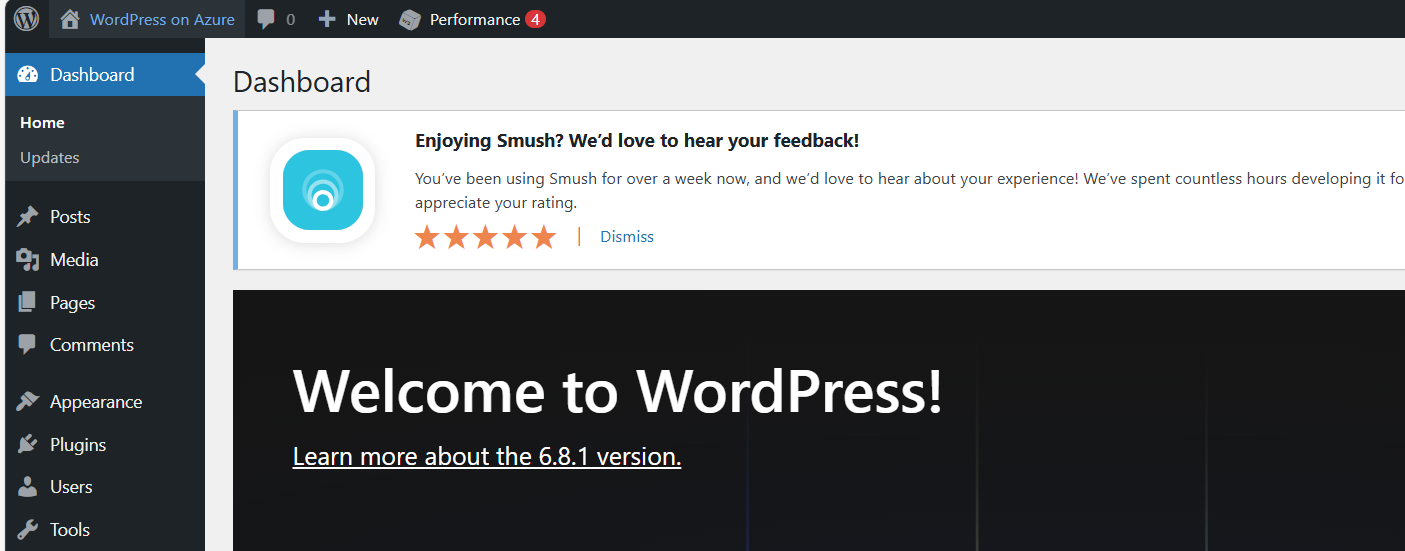

After logging in, we are presented the Dashboard of Wordpress:

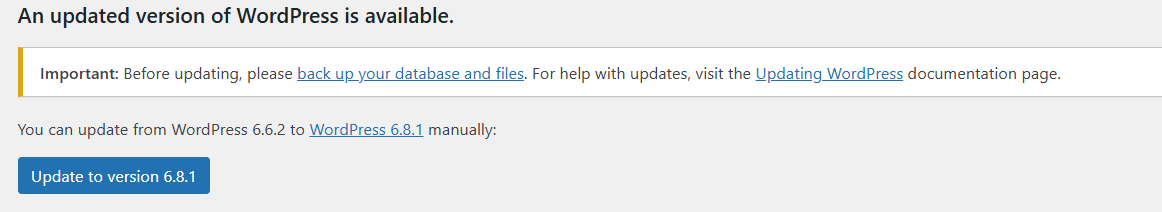

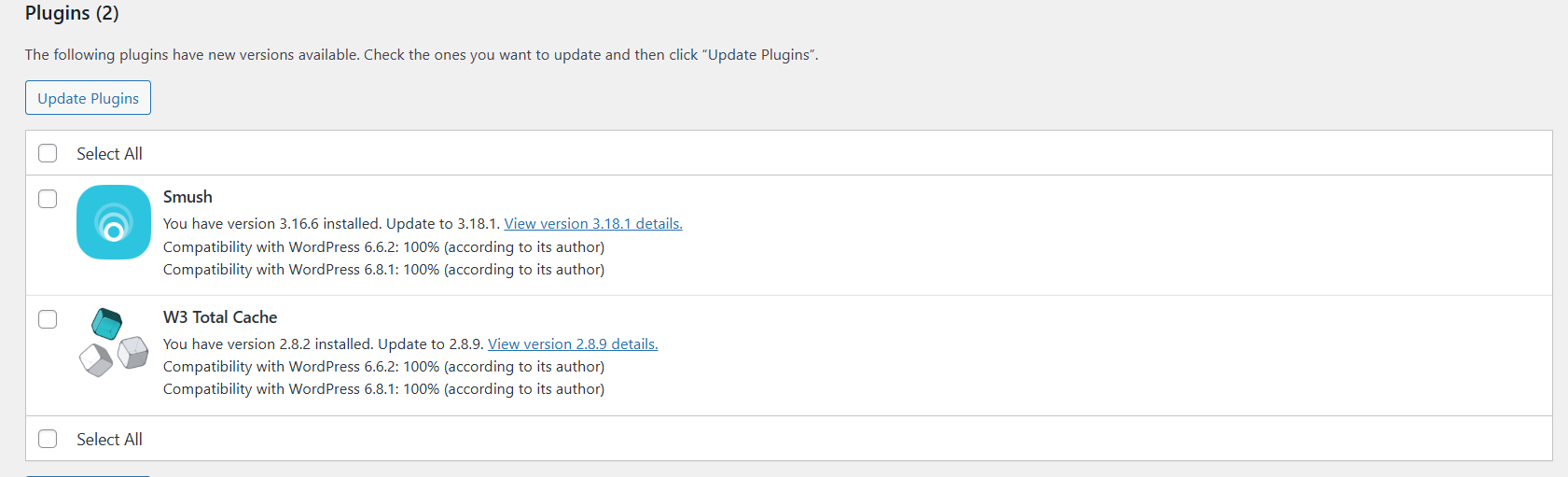

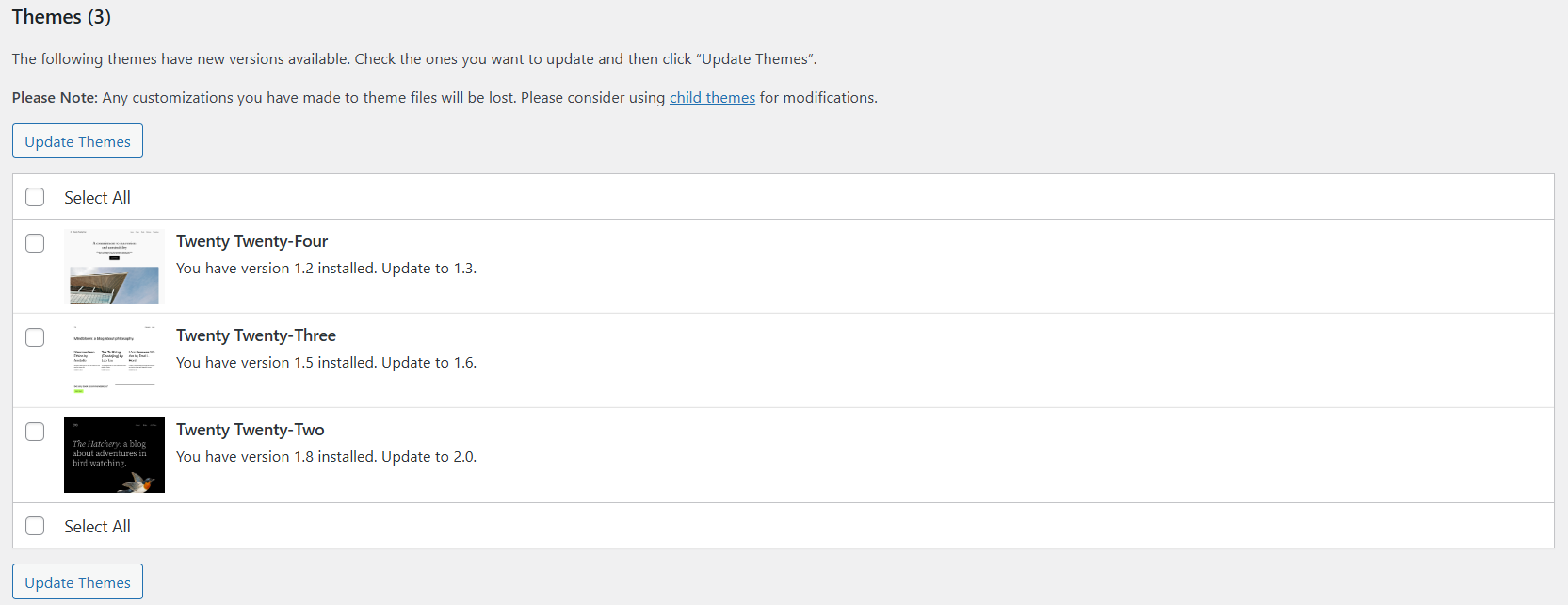

Updating to the latest version

As with every piece of software, my advice is to update directly to the latest version available. Click on the update icon in the top left corner:

Now in my environment, there are 3 types of updates available:

- Wordpress itself

- Plugins

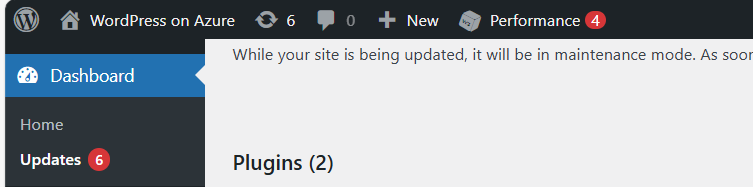

- Themes

Update everything by simply selecting all and clicking on the “Update” buttons:

After every update, you will have to navigate back to the updates window. This process takes less than 10 minutes, after which the environment is completely up-to-date and ready for building your website.

All updates are done now.

Step 4: Configure a custom domain

Now we can configure a custom, more readable domain for our Wordpress website. Let’s get back to the Azure Portal and to the App Service.

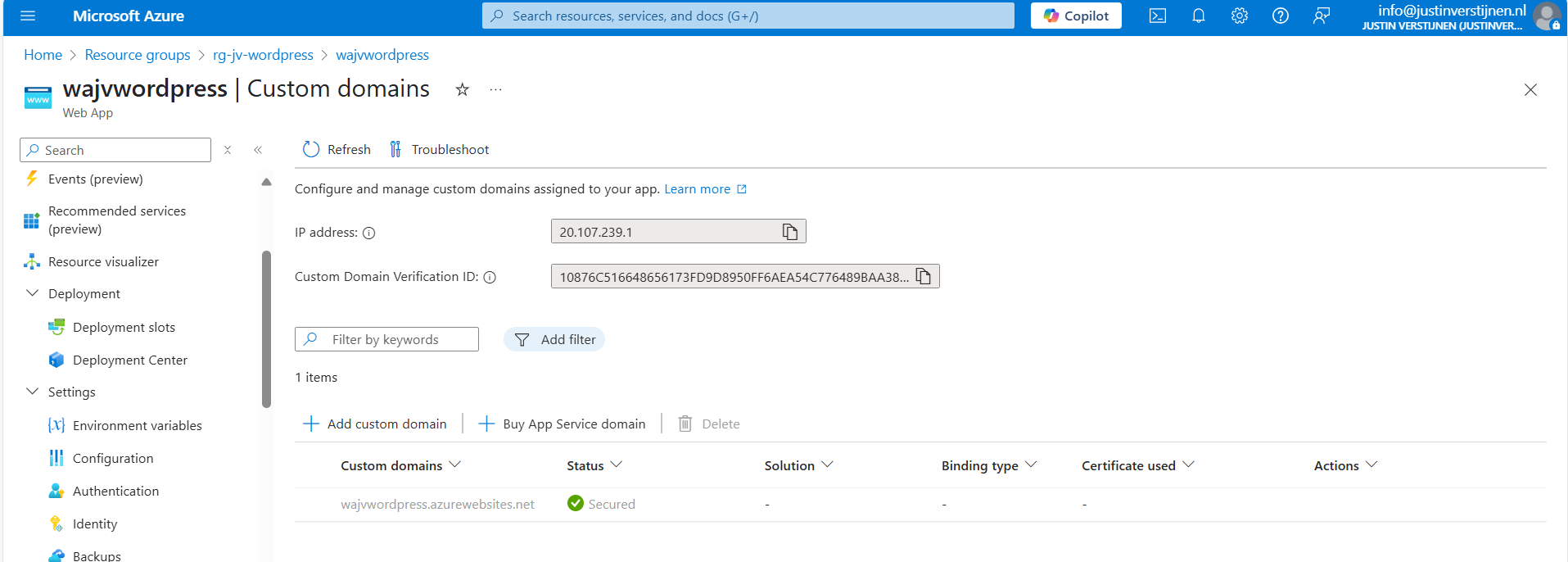

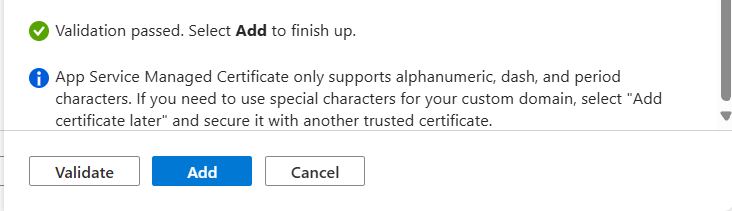

Under “Settings” we have the “Custom domains” option. Open this:

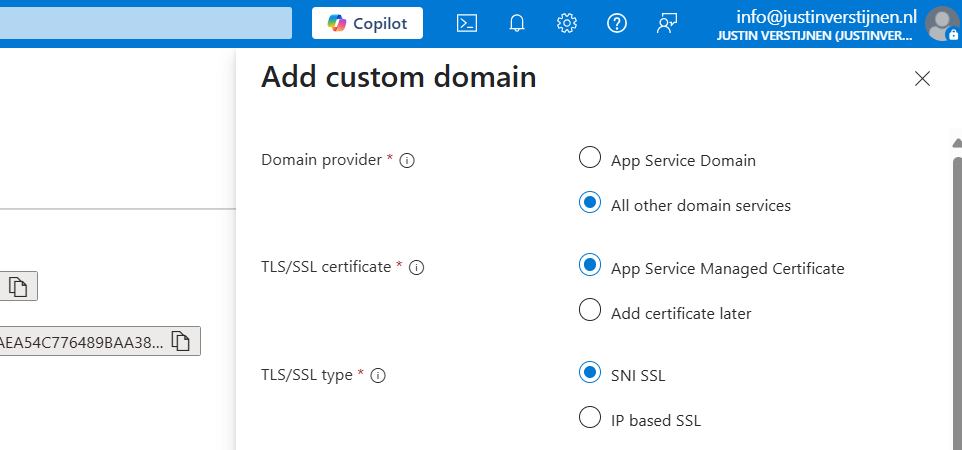

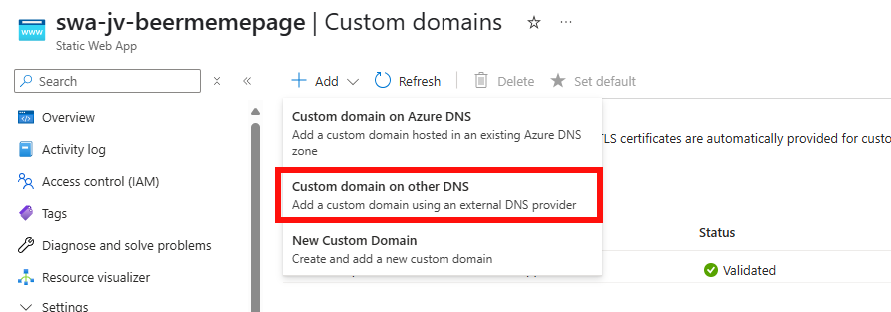

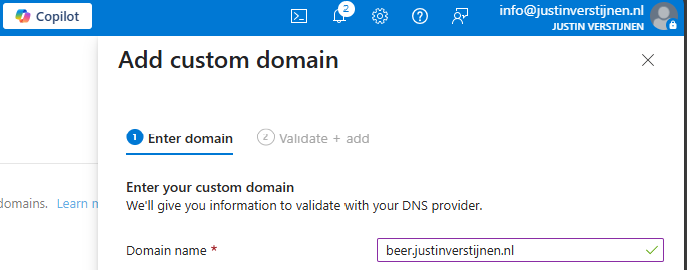

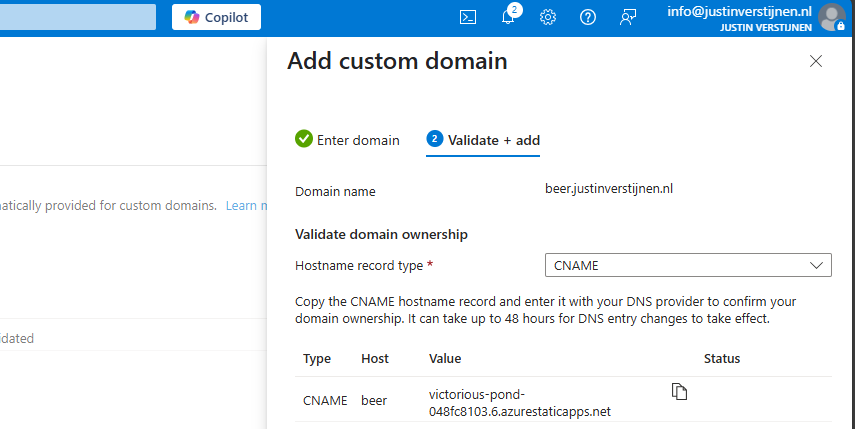

Click on “+ Add custom domain” to add a new domain to the app service instance. We now have to select some options in case we have a 3rd-party DNS provider:

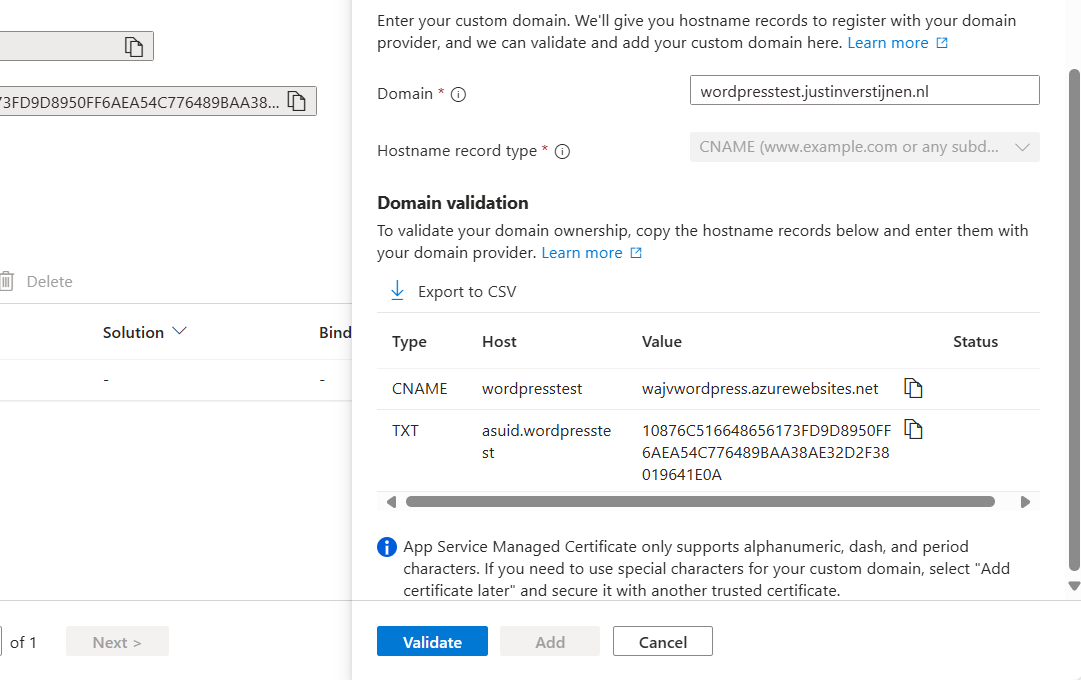

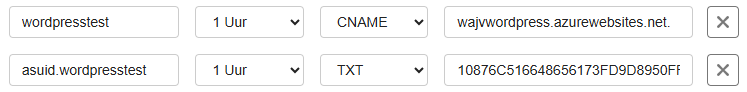

Then fill in your desired custom domain name:

I selected the name:

- wordpresstest.justinverstijnen.nl

This is because my domain already contains a website. Now we have to head over to our DNS hosting to verify our domain with the TXT record, and we have to create a record that points to our Azure App Service. This can be done in 2 ways:

- When using a domain without subdomain: justinverstijnen.nl -> use an ALIAS record

- When using a subdomain: wordpresstest.justinverstijnen.nl -> use a CNAME record

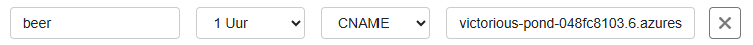

In my case, I will create a CNAME record.

Make sure that the target of the CNAME or ALIAS record ends with a trailing dot (“.”), because it points to a domain outside of your own domain.

In the DNS hosting, save the records. Then wait for around 2 minutes before validating the records in Azure. Validation usually works almost instantly, but it can take up to 24 hours before your records are found.
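Before validating in Azure, the records can also be checked from a Windows machine. This is a minimal sketch, assuming the `Resolve-DnsName` cmdlet is available and that the verification TXT record uses the `asuid.` prefix shown in the custom domain wizard. The domain is the example from this post.

```powershell
# Example domain from this post: replace with your own custom domain.
$customDomain = "wordpresstest.justinverstijnen.nl"

# The CNAME should point to the default hostname of the App Service.
Resolve-DnsName -Name $customDomain -Type CNAME |
    Select-Object Name, Type, NameHost

# The TXT record holds the domain verification ID from the Azure wizard.
# Assumption: the record name is "asuid.<your custom domain>", as shown in the wizard.
Resolve-DnsName -Name "asuid.$customDomain" -Type TXT |
    Select-Object Name, Type, Strings
```

If both lookups return the values shown in the Azure wizard, the validation in the portal should succeed as well.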

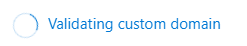

After some seconds, the custom domain is ready:

Click on “Add” to finish the wizard. After adding, an SSL certificate will be automatically added by Azure, which will take around a minute.

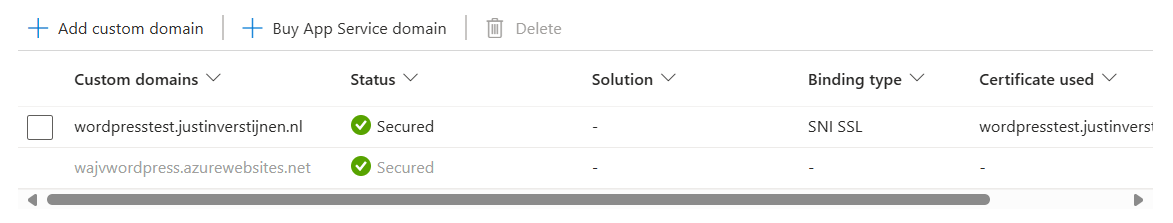

Now we are able to use our freshly created Wordpress solution on Azure with our custom domain name:

Let’s visit the website:

Works properly! :)

We can also visit the Wordpress admin panel on this URL now by adding /wp-admin:

Step 5: Configure Single Sign On with Entra ID

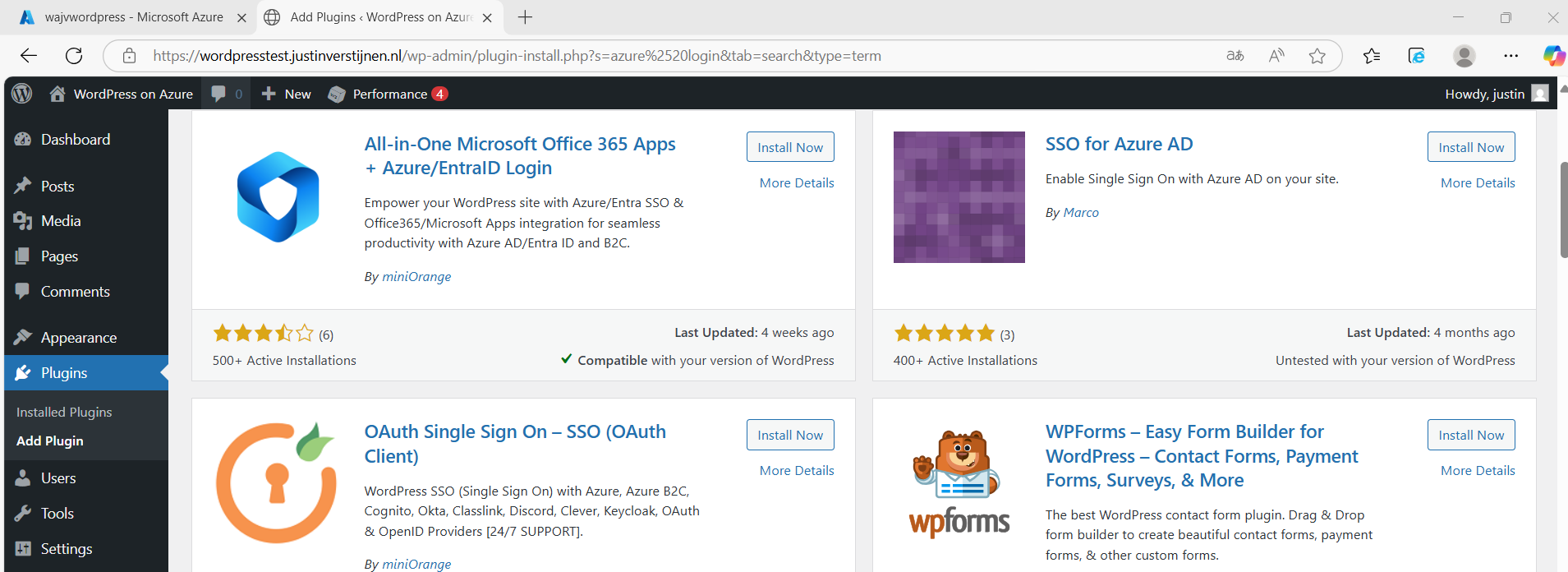

Now we can log in to Wordpress, but we have separate logins for Wordpress and Azure/Microsoft. It’s possible to integrate Entra ID accounts with Wordpress by using this plugin:

- All-in-One Microsoft Office 365 Apps + Azure/EntraID Login

- https://wordpress.org/plugins/login-with-azure/

Head to Wordpress, go to “Plugins” and install this plugin:

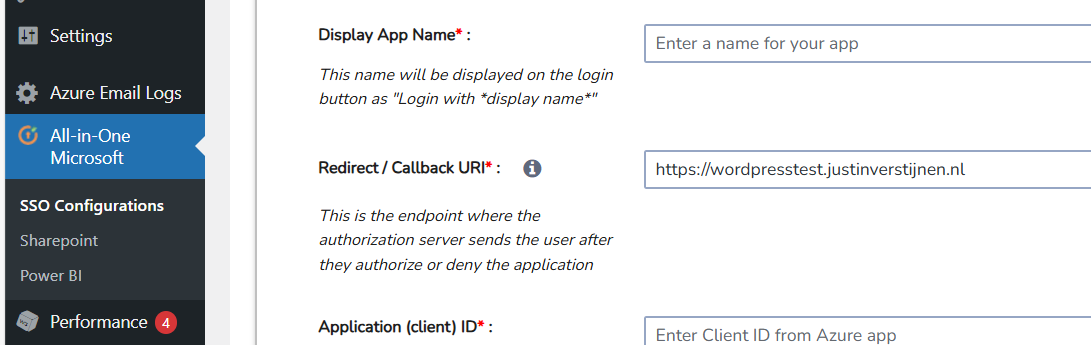

After installing the plugin and activating the plugin, we have an extra menu option in our navigation window on the left:

We now have to configure the Single Sign On with our Microsoft Entra ID tenant.

Create an Entra ID App registration

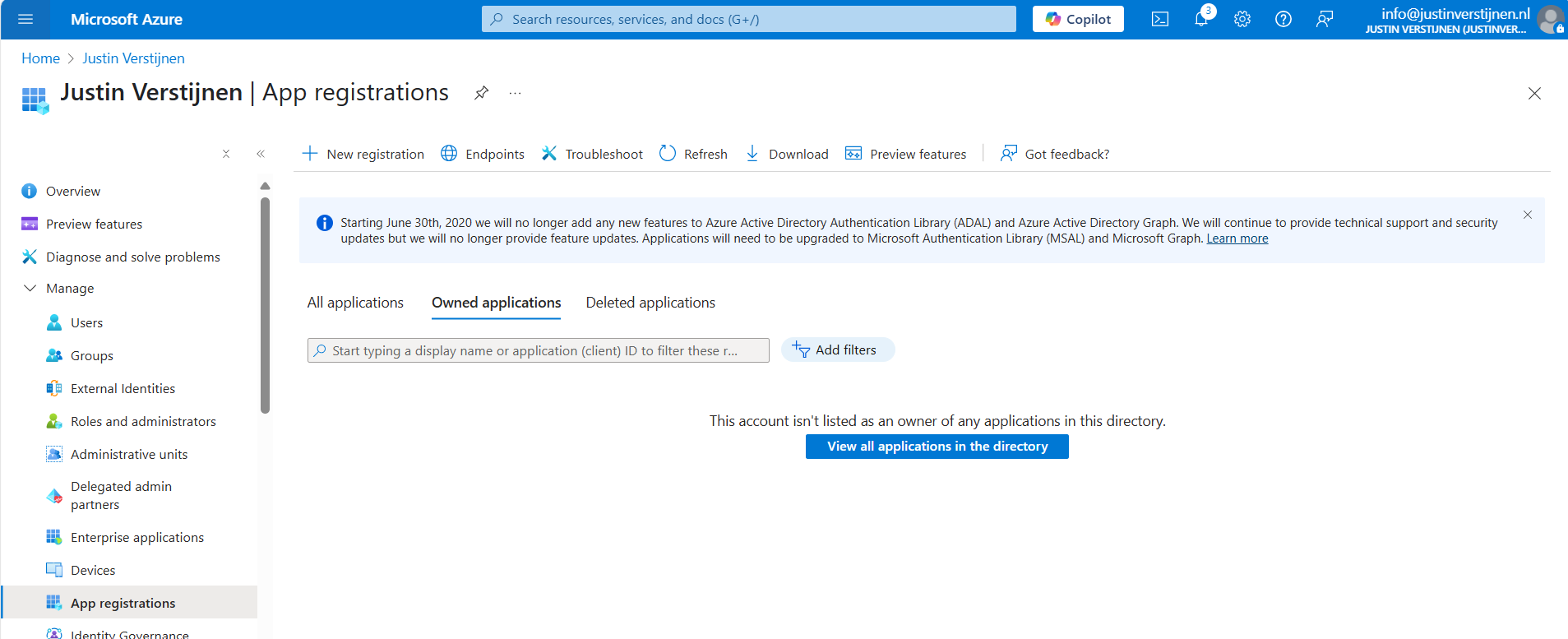

Start by going to Microsoft Entra ID, because we must generate the information that we have to fill in in the plugin.

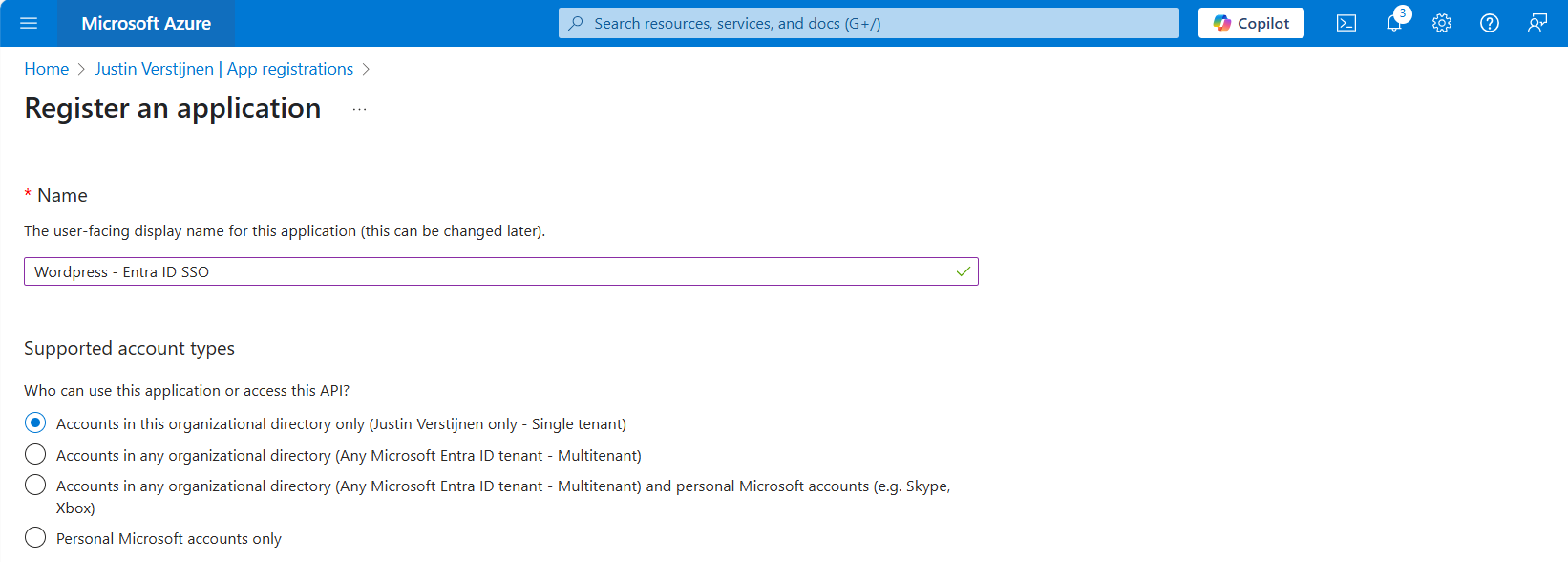

Go to Microsoft Entra ID and then to “App registrations”:

Click on “+ New registration” to create a new custom application.

Choose a name for the application and select the supported account types. In my case, I only want to have accounts from my tenant to use SSO to the plugin. Otherwise you can choose the second option to support business accounts in other tenants or the third option to also include personal Microsoft accounts.

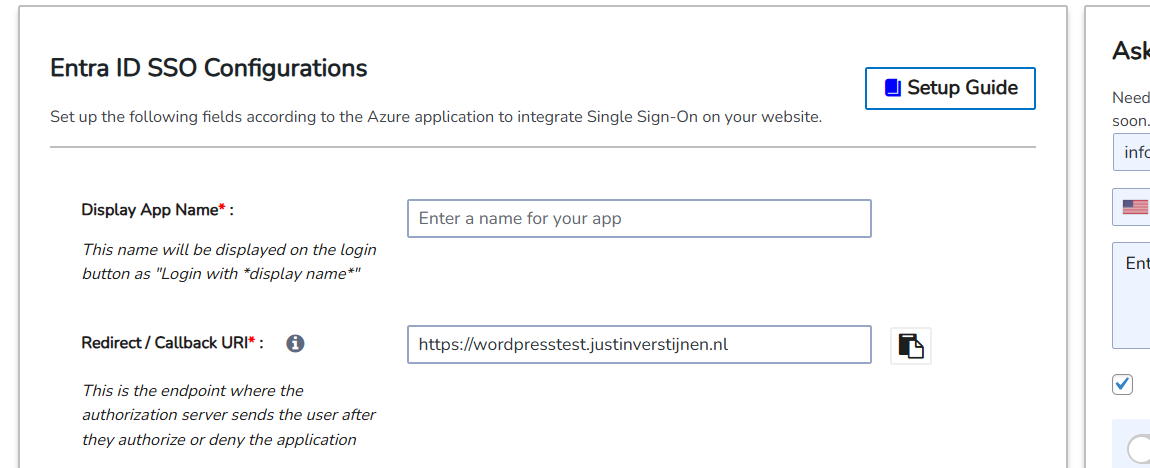

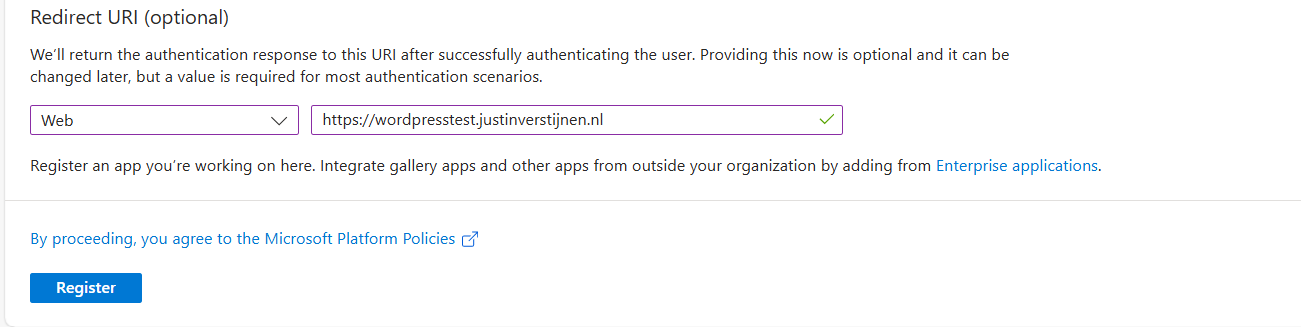

Scroll down on the page and configure the redirect URL which can be found in the plugin:

Copy this link, select type “Web” and paste this into Entra ID:

This is the URL which will be opened after successfully authenticating to Entra ID.

Click “Register” to finish the wizard.

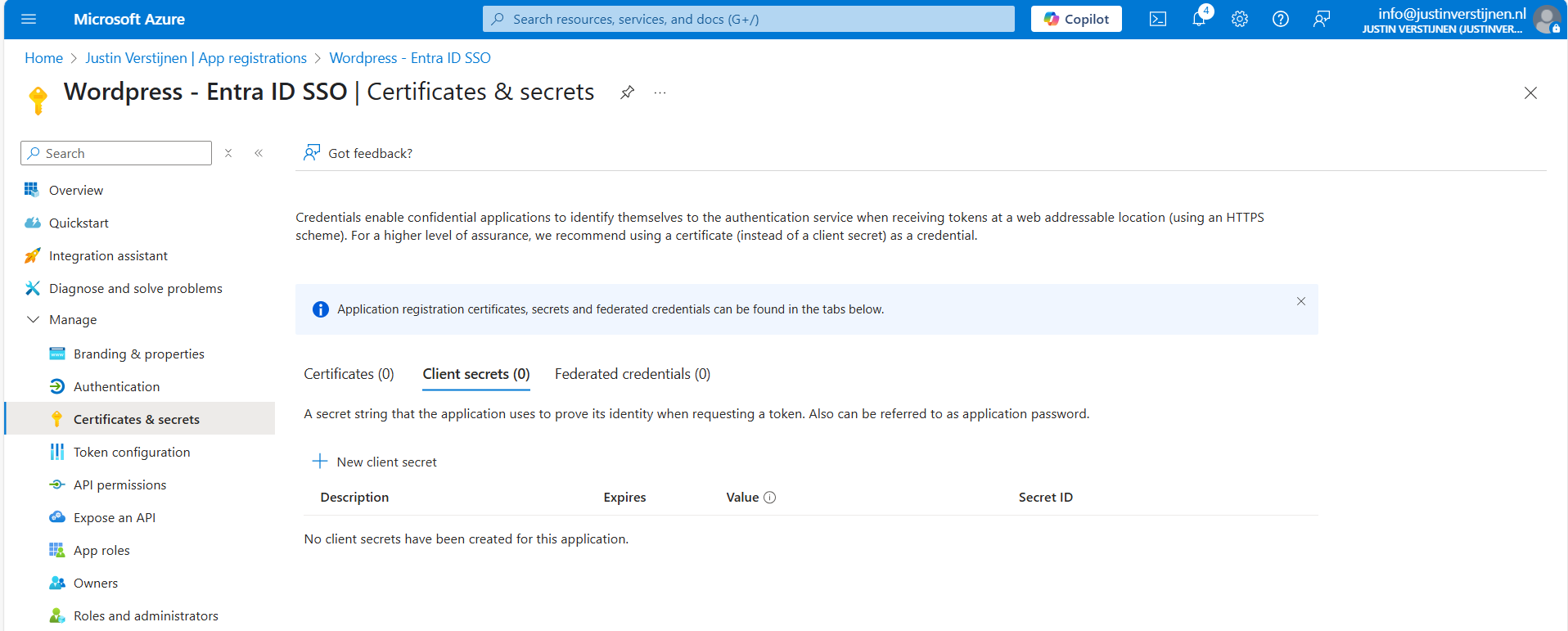

Create a client secret

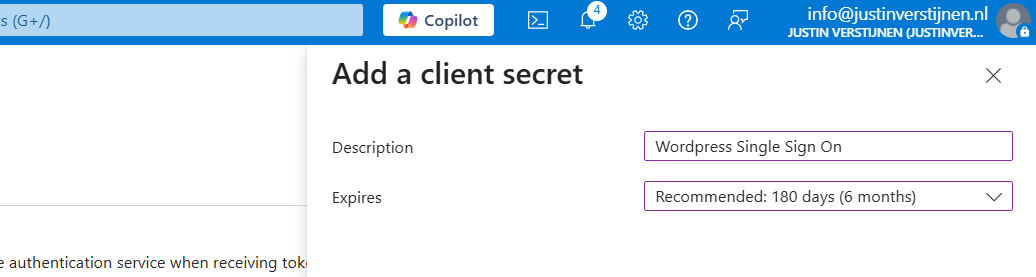

After creating the app registration, we can go to “Certificates & Secrets” to create a new secret:

Click on “+ New client secret”.

Type a clear description and select the duration of the secret. For security reasons, this must be shorter than 730 days (2 years). In my case, I stick with the recommended duration. Click on “Add” to create the secret.

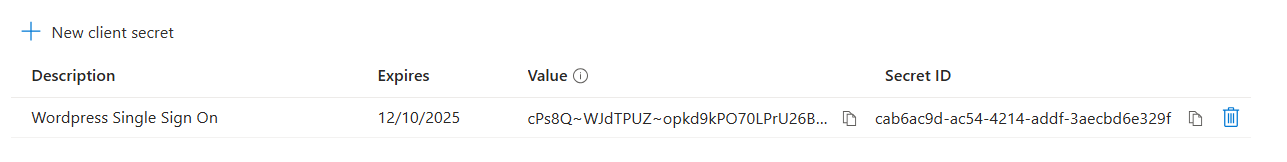

Now please copy the information and place it in a safe location, as this is the last opportunity to see the full secret. After a few minutes or clicks, the value is hidden forever and a new secret has to be created.

My advice is to always copy the Secret ID too, because you have a good identifier of which secret is used where, especially when you have like 20 app registrations.

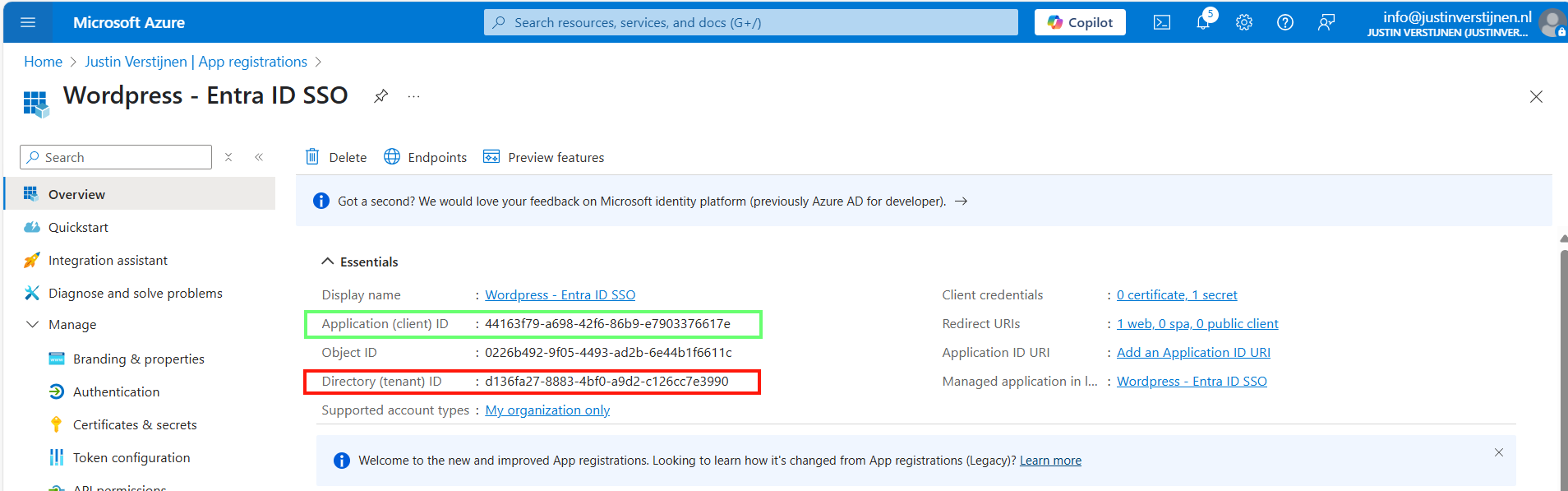

Collect the information in Microsoft Entra ID

Now that we have finished the configuration in Entra ID, we have to collect the information we need. This is:

- Client ID

- Tenant ID

- Client Secret

The Client ID (green) and Tenant ID (red) can be found on the overview page of the app registration. The secret is saved in the safe location from the previous step.

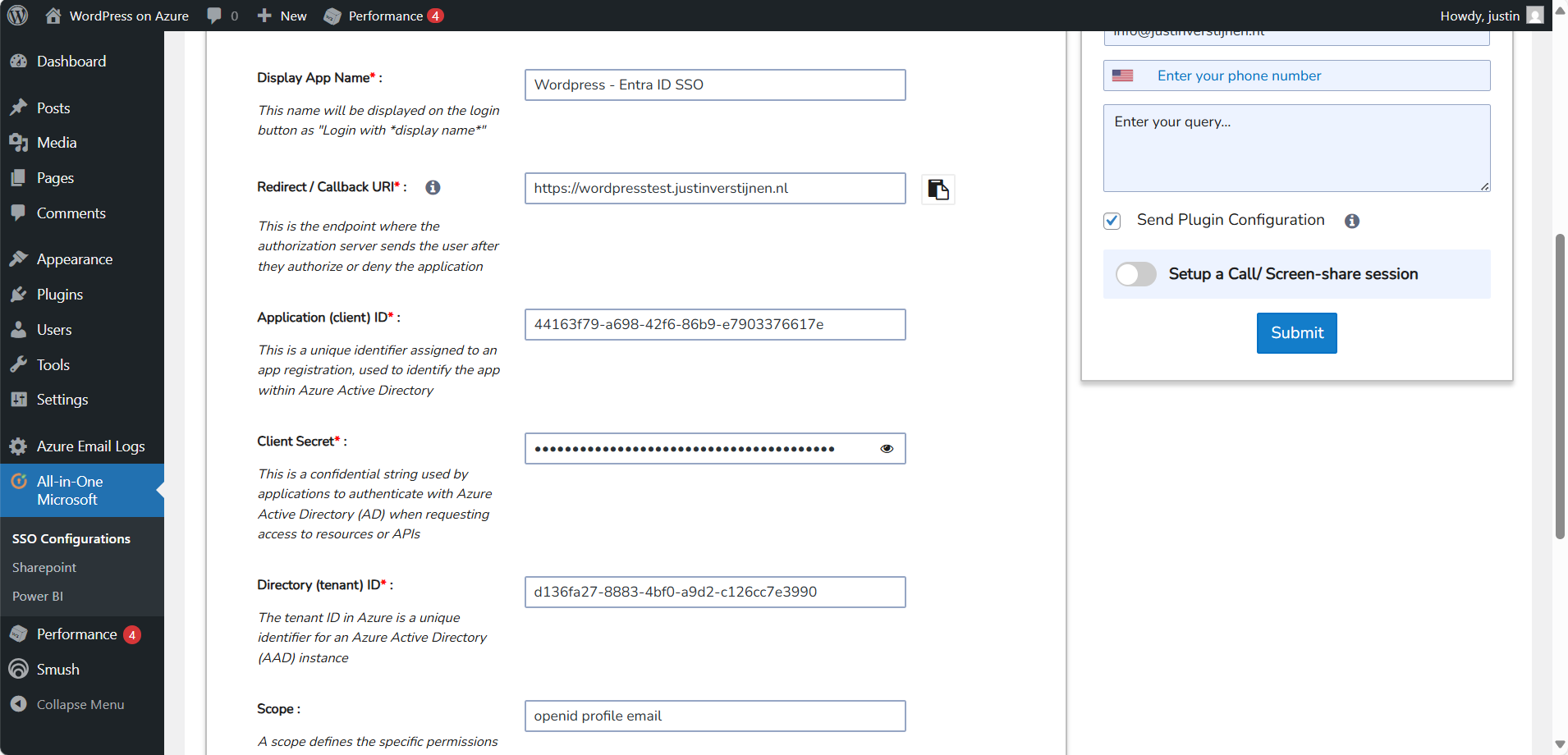

Configure Wordpress plugin

Now head back to Wordpress, where we have to fill in the information collected from Microsoft Entra ID:

Fill in all of the collected information, make sure the “Scope” field contains “openid profile email” and click on “Save settings”. The scope determines which information the plugin requests from the identity provider, which is Microsoft Entra ID in our case.
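To illustrate where the Tenant ID, Client ID, redirect URL and scope end up, here is a sketch that builds a sign-in URL for the Microsoft identity platform authorize endpoint. The function name and all values are hypothetical, and the exact request the plugin sends is not shown in this post, so treat this as an illustration of the concept only.

```powershell
# Builds a sign-in URL for the Microsoft identity platform (v2.0 authorize endpoint).
# New-EntraAuthorizeUrl is a hypothetical helper, not part of the plugin or any module.
function New-EntraAuthorizeUrl {
    param(
        [Parameter(Mandatory)] [string] $TenantId,
        [Parameter(Mandatory)] [string] $ClientId,
        [Parameter(Mandatory)] [string] $RedirectUri,
        [string] $Scope = "openid profile email"
    )

    # Every value is URL-encoded, so the spaces in the scope become %20
    $query = @(
        "client_id=$([uri]::EscapeDataString($ClientId))"
        "response_type=code"
        "redirect_uri=$([uri]::EscapeDataString($RedirectUri))"
        "scope=$([uri]::EscapeDataString($Scope))"
    ) -join "&"

    "https://login.microsoftonline.com/$TenantId/oauth2/v2.0/authorize?$query"
}

# Placeholder IDs and a placeholder redirect URL
New-EntraAuthorizeUrl -TenantId "00000000-0000-0000-0000-000000000000" `
    -ClientId "11111111-1111-1111-1111-111111111111" `
    -RedirectUri "https://wordpresstest.justinverstijnen.nl/"
```

The “openid” scope requests the sign-in itself, while “profile” and “email” ask for the user attributes that are mapped to Wordpress fields later on.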

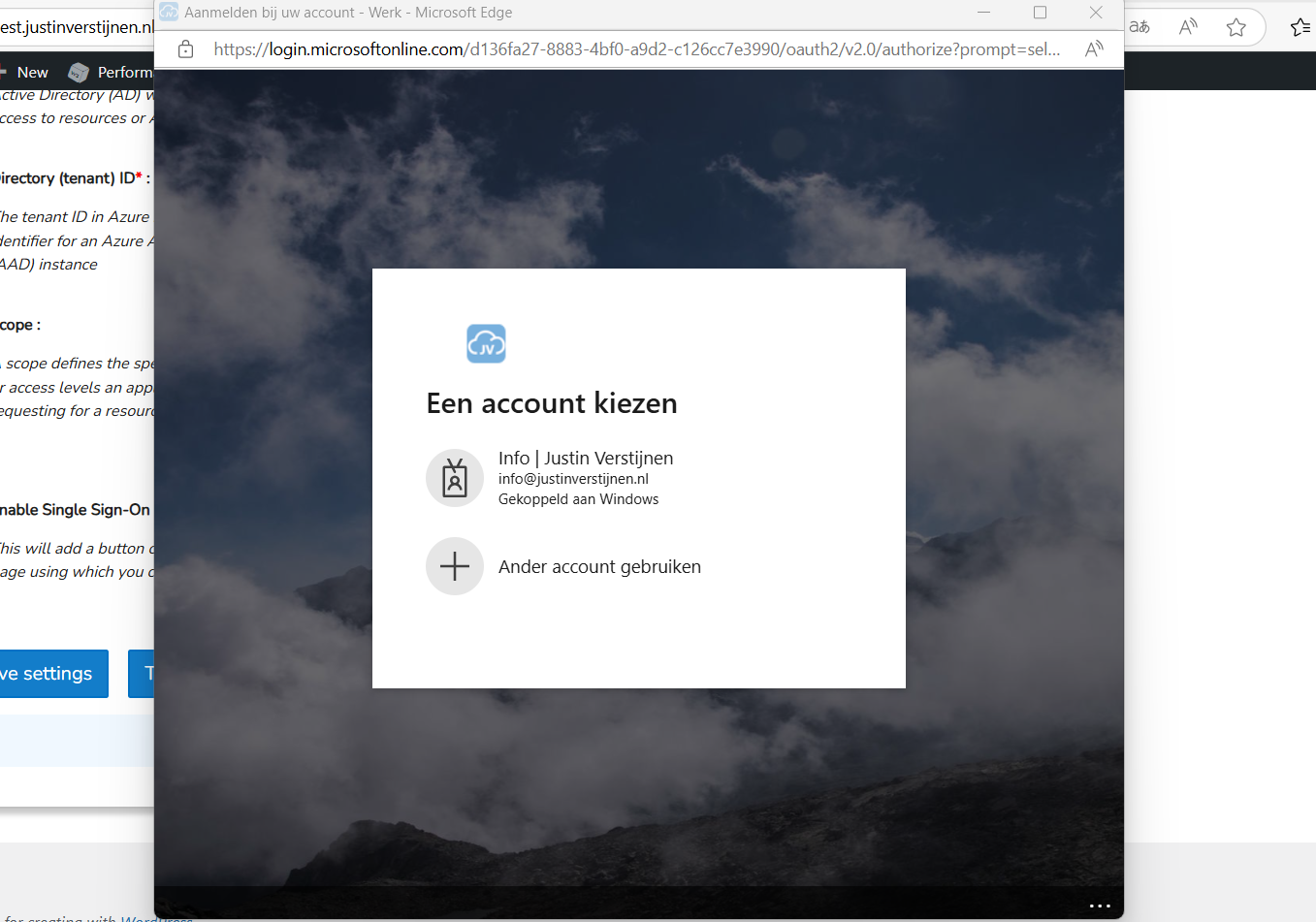

Then scroll down again and click on “Test Configuration” which is next to the Save button. An extra authentication window will be opened:

Select your account or log in to your Entra ID account and go to the next step.

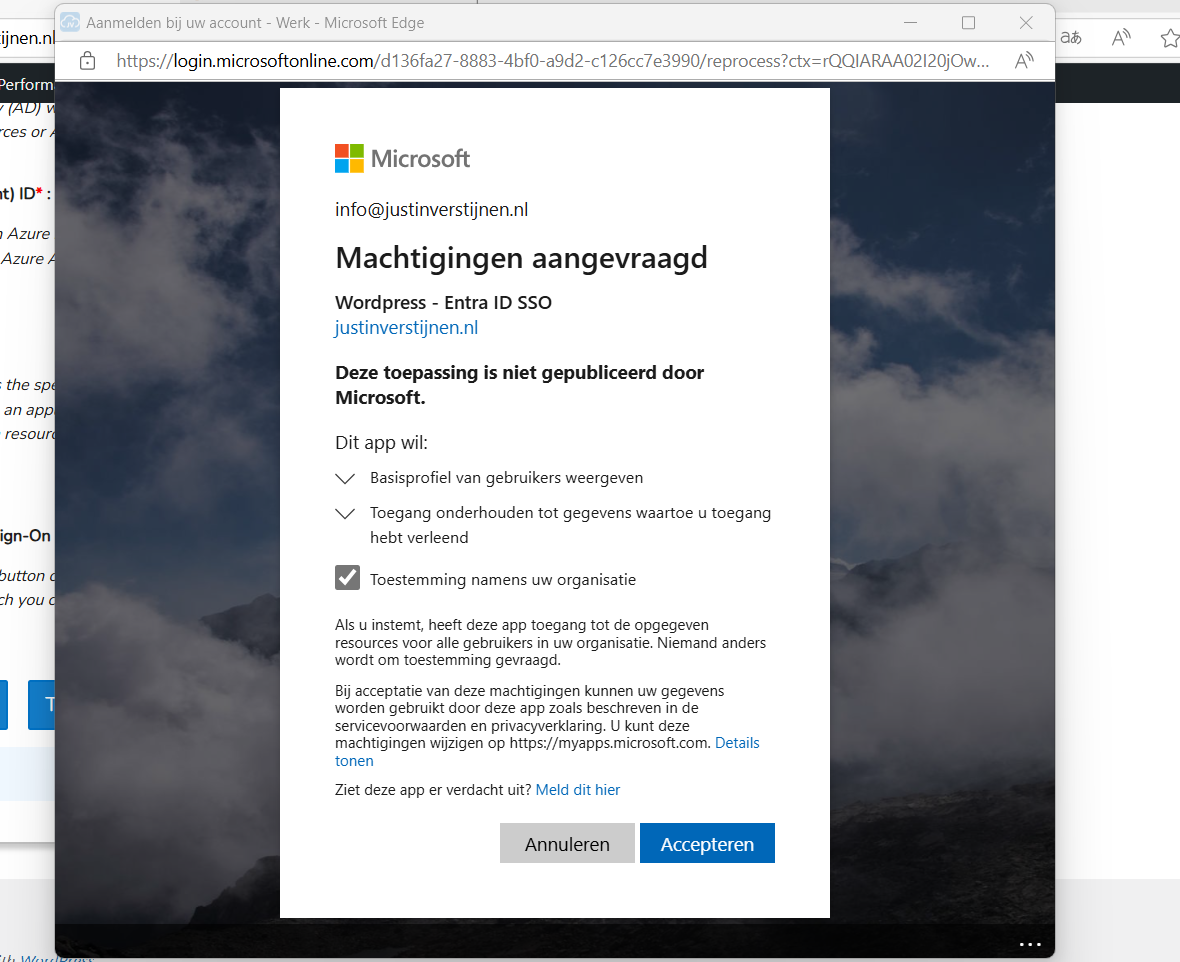

Now we have to accept the permissions the application requests and grant consent on behalf of the whole organization. For this step, you will need administrator rights in Entra ID (the Cloud Application Administrator or Application Administrator role, or higher).

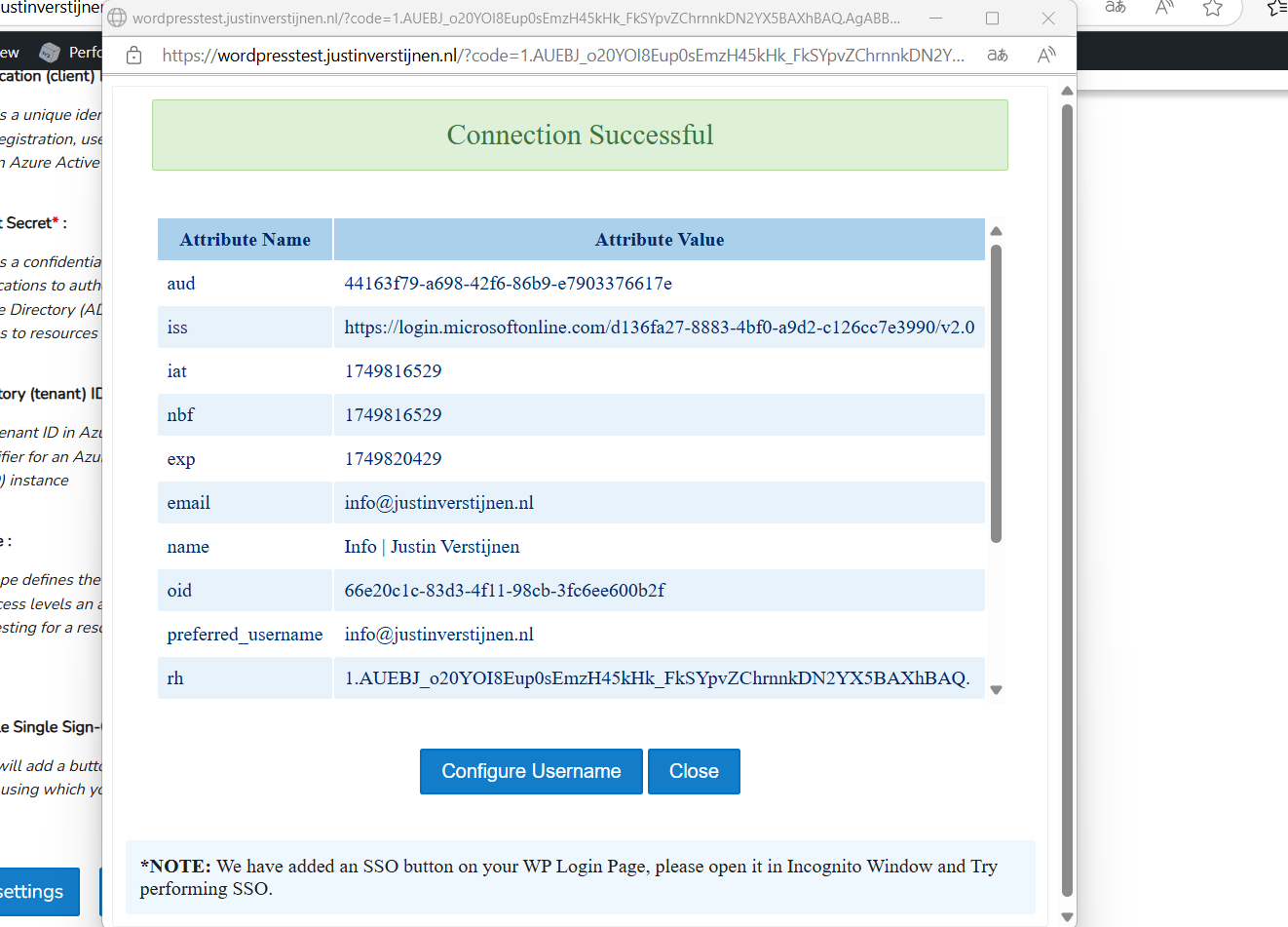

Accept the application and the plugin will tell you the information it got from Entra ID:

Now we have to click on the “Configure Username” button or go to the tab “Attribute/Role Mapping”.

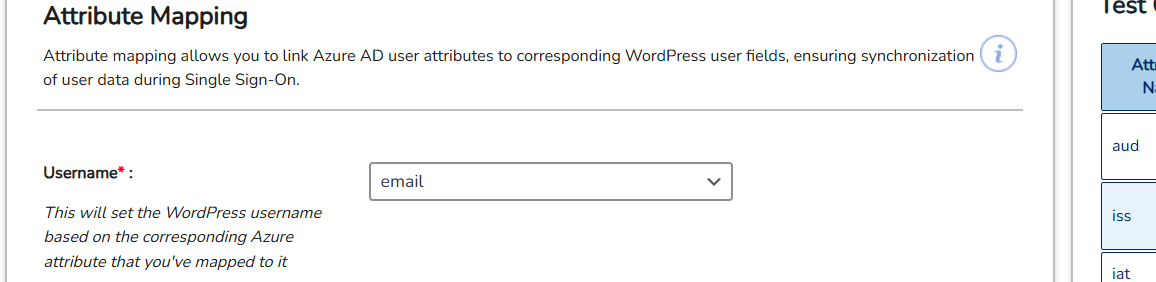

In Entra ID, a user has several properties which can be configured. In identity management, we call these attributes. We have to tell the plugin which attributes in Entra ID to use for what in the plugin.

Start by selecting “email” in the “Username” field:

Then click on “Save settings”.

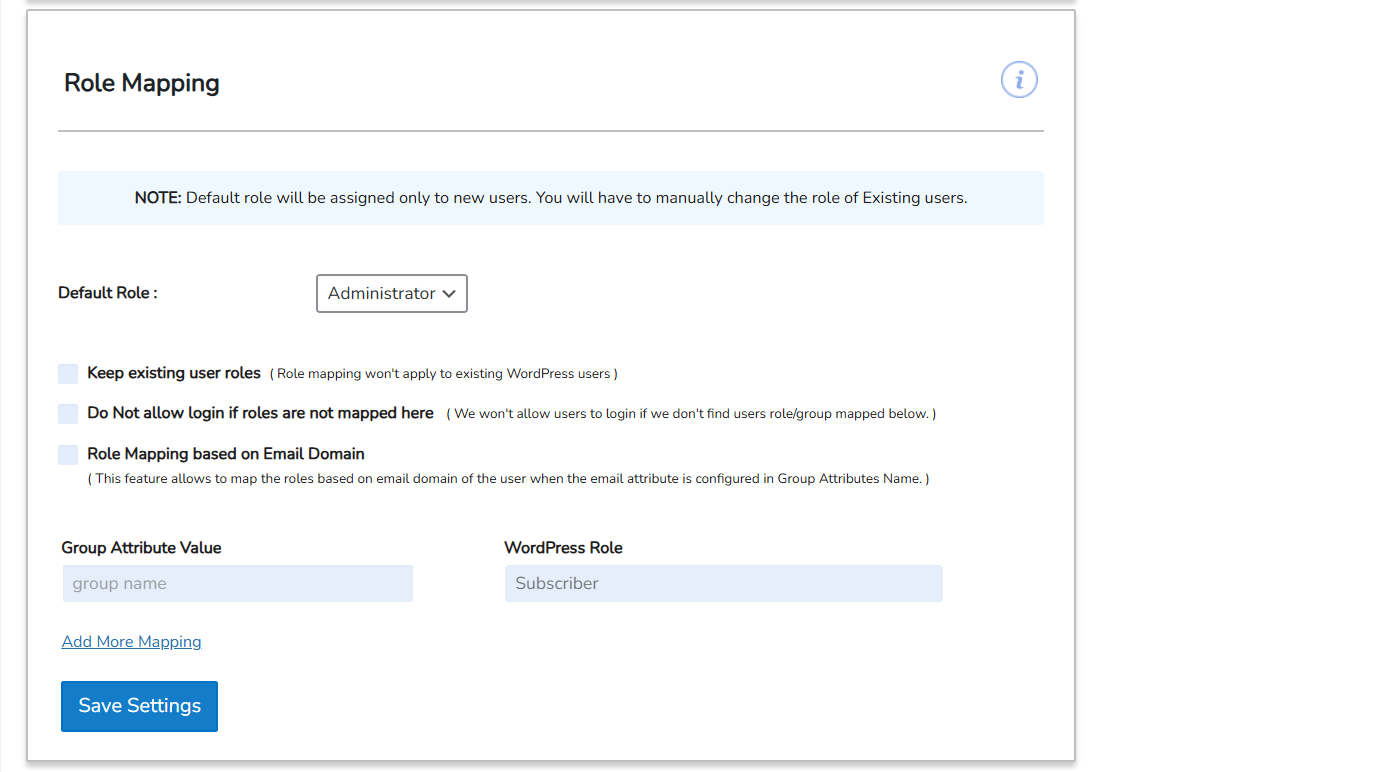

Configure Wordpress roles for SSO

Now we can configure which role we want to give users from this SSO configuration:

In my case, I selected “Administrator” to give myself the Administrator permissions, but you can also choose from all other built-in Wordpress roles. Be aware that all of the users who are able to SSO into Wordpress will get this role by default.

Test Wordpress SSO

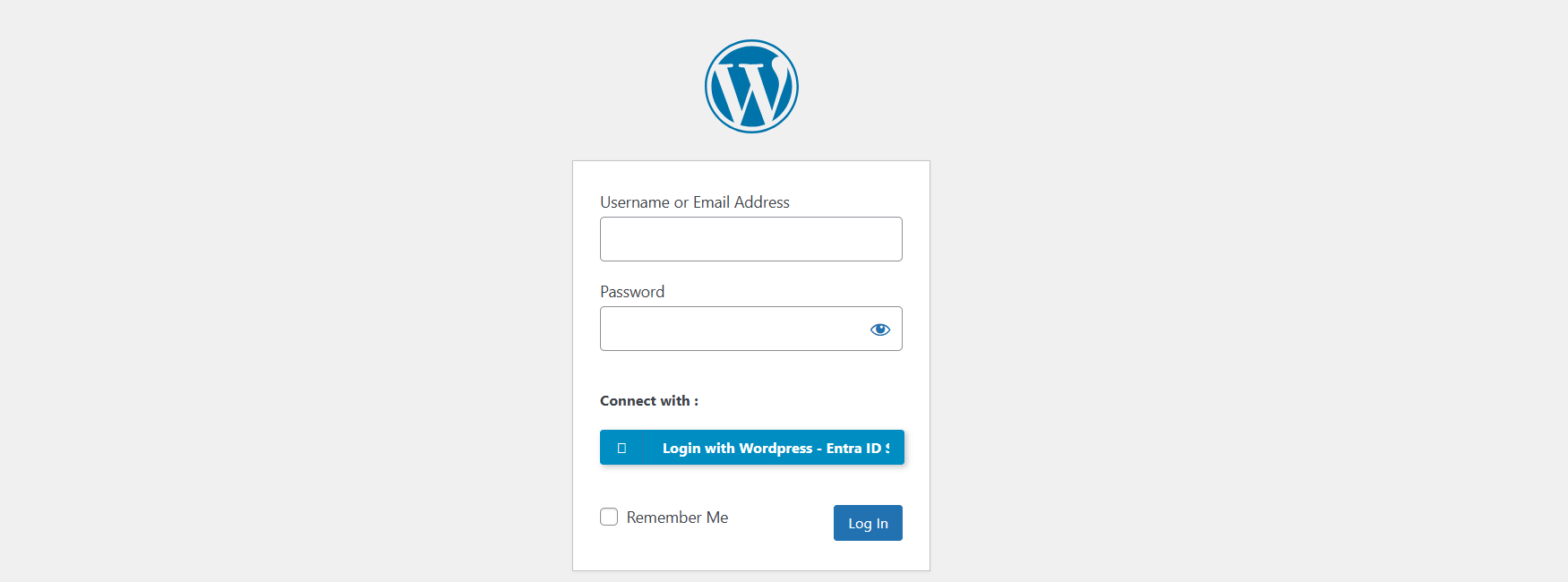

Now we can test SSO for Wordpress by logging out and going to our Wordpress admin panel again:

We have the option to do SSO now:

Click on the blue button with “Login with Wordpress - Entra ID”. You will now have to log in with your Microsoft account.

After that you will land on the homepage of the website. From there, you can go to the admin panel manually. Unfortunately, we cannot configure the login to go directly to the admin panel, as this is a paid plugin option.

Summary

Wordpress on Azure is a great way to host a Wordpress environment in a modern and scalable way. It’s highly available and secure by default, without the need for hosting a complete server which has to be maintained and patched regularly.

The setup takes a few steps but it is worth it. Pricing is something to consider beforehand, but I think with the Basic plan, you have a great self-hosted Wordpress environment for around 25 dollars a month, and that even includes an hourly backup. Overall, great value for money.

Thank you for reading this guide and I hope it was helpful.

End of the page 🎉

You have reached the end of the page. You can navigate through other blog posts as well, share this post on X, LinkedIn and Reddit or return to the blog posts collection page. Thank you for visiting this post.

If you think something is wrong with this post or you want to know more, you can send me a message to one of my social profiles at: https://justinverstijnen.nl/about/

If you find this page and blog very useful and you want to leave a donation, you can use the button below to buy me a beer. Hosting and maintaining a website takes a lot of time and money. Thank you in advance and cheers :)

The terms and conditions apply to this post.

New: Azure Service Groups

What are these new Service Groups in Azure?

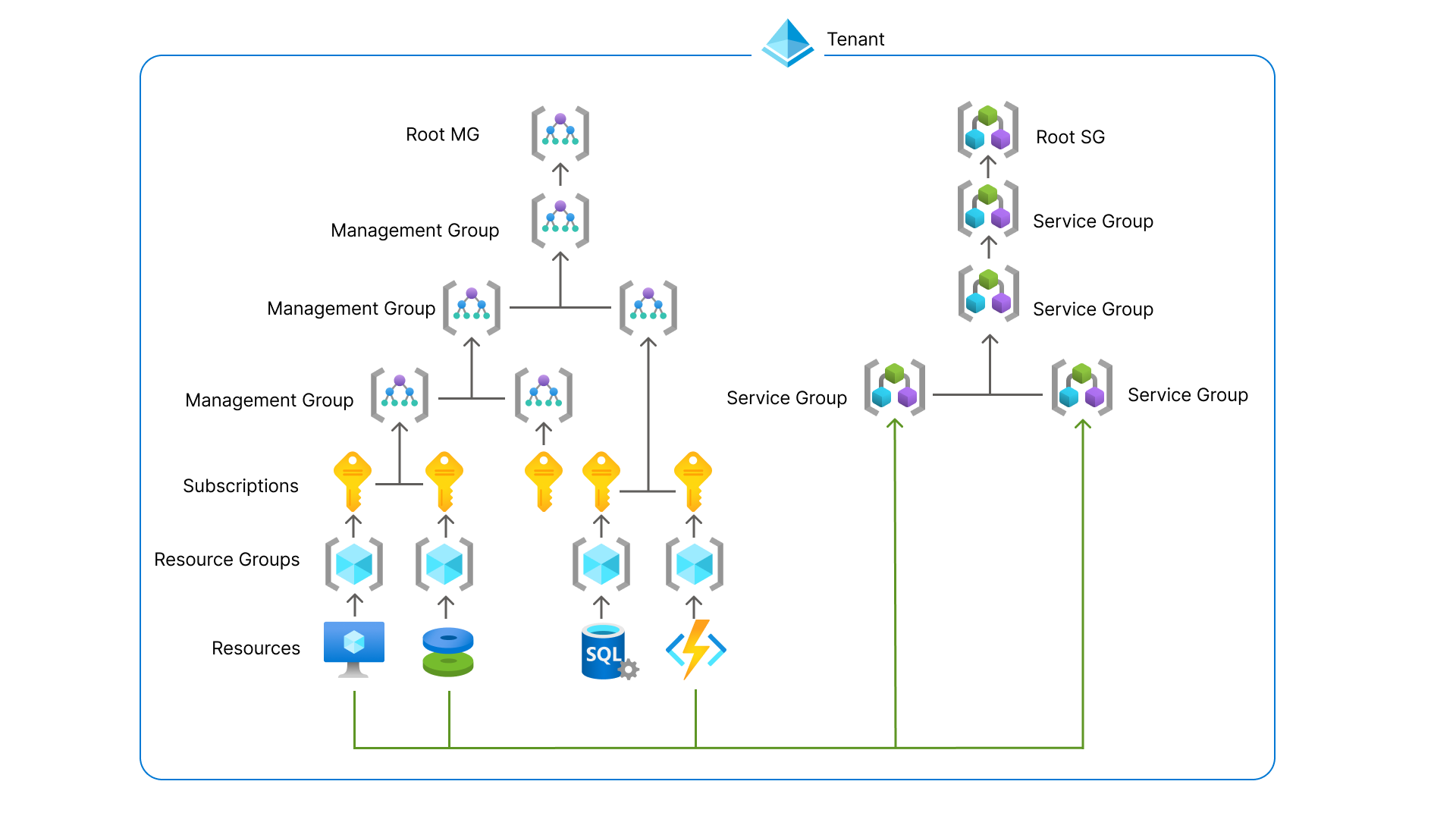

Service Groups are a parallel way of grouping resources and assigning permissions to them. We can take multiple resources from different resource groups and put them into an overarching Service Group to apply permissions. This eliminates the need to move resources into specific resource groups, with all the broken links that come with it.

This looks like this:

You can think of these new Service Groups as a parallel to Management Groups, but for resources.

Features

- Logical grouping of your Azure solutions

- Multiple hierarchies

- Flexible membership

- Least privileges

- Service Group Nesting (placing them in each other)

Service Groups in practice

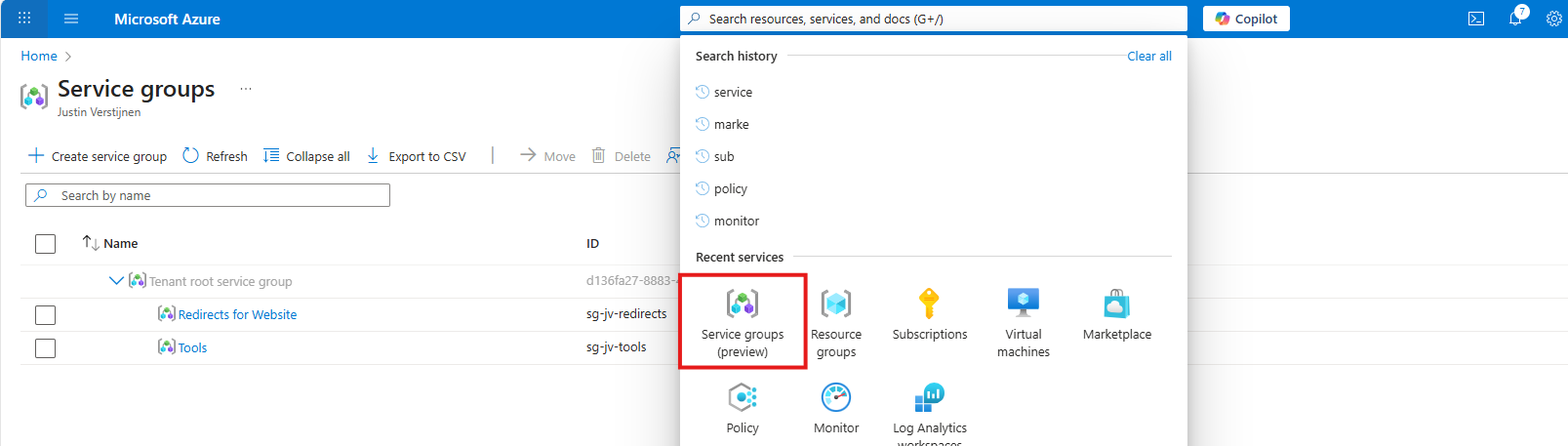

Update 1 September 2025: the feature is now in public preview, so I can give a short demonstration of it.

In the Azure Portal, go to “Service Groups”:

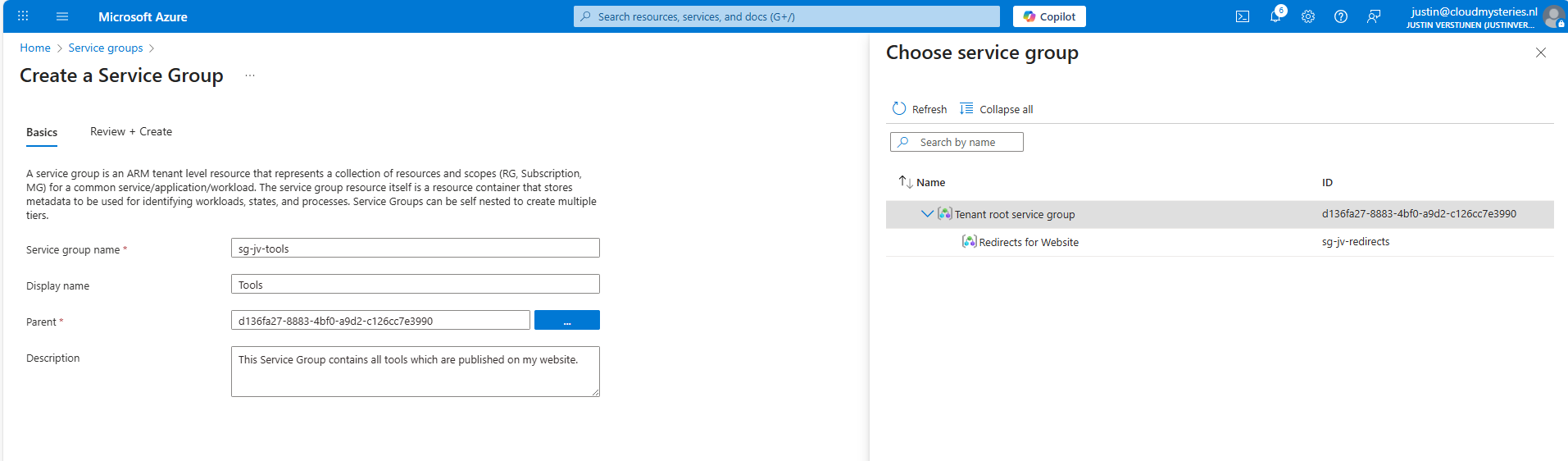

Then create a new Service Group.

Here I have created a service group for my tools which are on my website. These reside in different resource groups so it’s a nice candidate to test with. The parent service group is the tenant service group which is the top level.

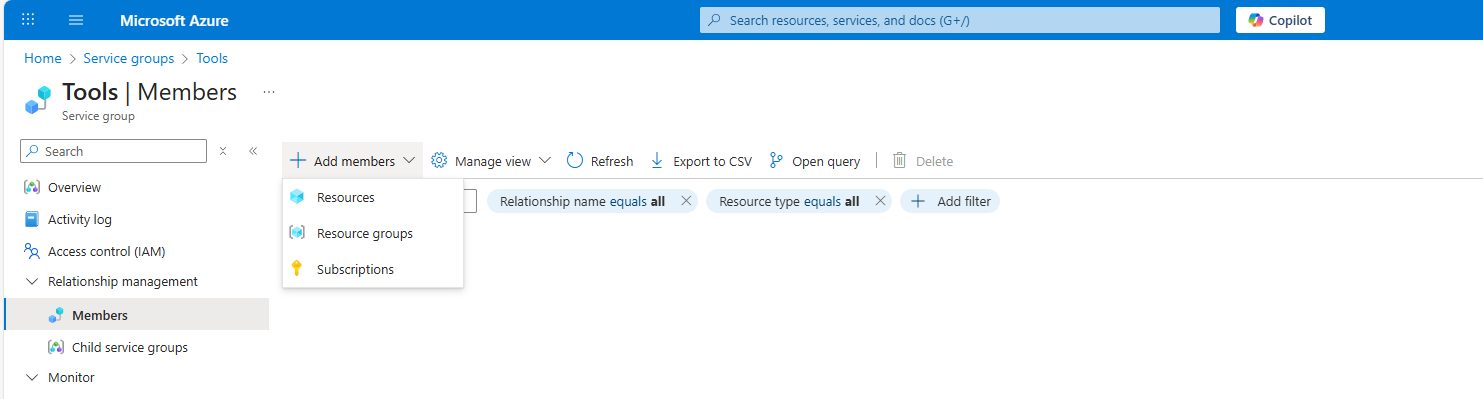

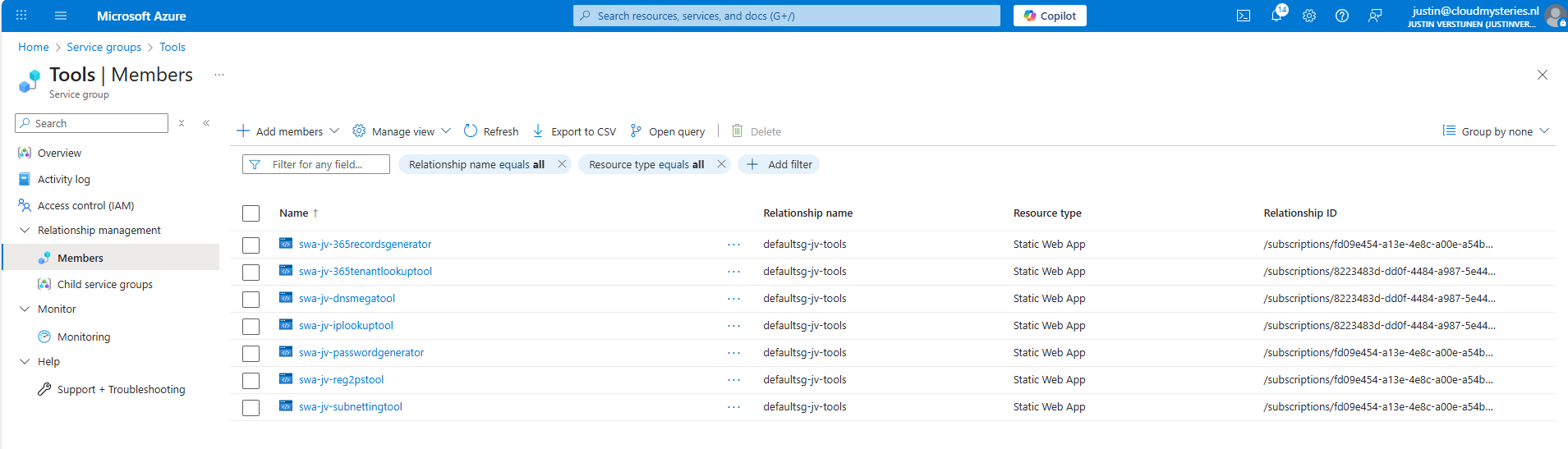

Now open your newly created service group and add members to it, which can be subscriptions, resource groups and resources:

Like I did here:

Summary

Service Groups are a great addition for managing permissions on our Azure resources. They give us a way to grant a person or group unified permissions across multiple resources that are not in the same resource group.

Until now, this could only be done with inherited permissions flowing down from a higher scope, which means big privileges on big scopes. With this new feature we can select only the underlying resources we want and grant a limited set of permissions. This provides much more granular permission assignments, and all of that free of charge!

In-Place upgrade to Windows Server 2025 on Azure

Once every 3 to 4 years you want to move to the latest version of Windows Server because of new features and of course to have the latest security updates. These security updates are the most important these days.

When having your server hosted on Microsoft Azure, this process can look a bit complicated, but it is relatively easy to upgrade your Windows Server to the latest version, and I will explain how on this page.

Because Windows Server 2025 has been out for almost a year and runs really stable, we will focus in this post on upgrading from 2022 to Windows Server 2025. If you don’t use Azure, you can skip steps 2 and 3, but the rest of the guide still tells you how to upgrade on other systems like Amazon/Google or on-premises/virtualization.

Requirements

- The Azure Powershell module

- A machine running an older version of Windows Server to upgrade

- A backup to successfully roll back to in case of emergency

- 45 minutes to 2 hours of your time

The process described

We will perform the upgrade by taking an eligible server and creating upgrade media for it. Then we will attach this upgrade media to the server, which effectively inserts the ISO. After that, we can perform the upgrade from the guest OS itself and wait for around an hour.

Before you start, it is recommended to plan this task in a maintenance window and to have a full server backup. Upgrading Windows Server is not always a watertight process, and errors tend to occur exactly when you have no plan B.

You’ll be happy to have followed my advice on this one if this goes wrong.

Step 1: Determine your upgrade-path

When you are planning an upgrade, it is good to determine your upgrade path beforehand. Check your current version and check which version you want to upgrade to.

The golden rule is that you can skip at most 1 version per upgrade. When you want to run Windows Server 2022 and you want to reach this in 1 upgrade, your minimum version is Windows Server 2016. To check all supported upgrade paths, check out the following table:

| Upgrade path (from / to) | Windows Server 2012 R2 | Windows Server 2016 | Windows Server 2019 | Windows Server 2022 | Windows Server 2025 |
|---|---|---|---|---|---|
| Windows Server 2012 | Yes | Yes | - | - | - |
| Windows Server 2012 R2 | - | Yes | Yes | - | - |
| Windows Server 2016 | - | - | Yes | Yes | - |
| Windows Server 2019 | - | - | - | Yes | Yes |
| Windows Server 2022 | - | - | - | - | Yes |

Horizontal: the version you upgrade to. Vertical: the version you upgrade from.

For more information about the supported upgrade paths, check this official Microsoft page: https://learn.microsoft.com/en-us/windows-server/get-started/upgrade-overview#which-version-of-windows-server-should-i-upgrade-to
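The table can also be expressed as a small lookup, which is handy when checking many servers at once. This is a sketch that only mirrors the table in this post. The function name `Test-UpgradePath` is hypothetical, and the official Microsoft page remains the authoritative source.

```powershell
# Supported target versions per source version, copied from the table in this step
$upgradePaths = @{
    "2012"    = @("2012 R2", "2016")
    "2012 R2" = @("2016", "2019")
    "2016"    = @("2019", "2022")
    "2019"    = @("2022", "2025")
    "2022"    = @("2025")
}

# Hypothetical helper: returns $true when the table lists a direct upgrade
function Test-UpgradePath {
    param(
        [Parameter(Mandatory)] [string] $From,
        [Parameter(Mandatory)] [string] $To
    )
    # Source versions that are not in the table return $false
    return $upgradePaths.ContainsKey($From) -and ($upgradePaths[$From] -contains $To)
}

Test-UpgradePath -From "2022" -To "2025"   # True
Test-UpgradePath -From "2016" -To "2025"   # False: not a direct path in the table
```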

Step 2: Create upgrade media in Microsoft Azure

When you have a virtual machine ready and you have determined your upgrade path, we have to create upgrade media in Azure. We need to have an ISO with the new Windows Server version to start the upgrade.

To create this media, first log in to Azure PowerShell by using the following command:

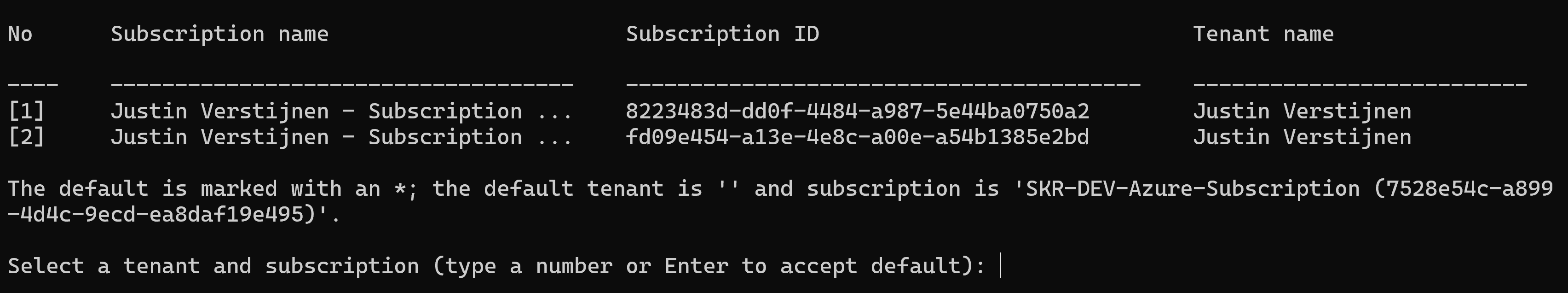

Connect-AzAccount

Log in with Azure credentials that have sufficient rights in the target resource group. This should be at least the Contributor role or a custom role.

Select a subscription if needed:
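If the login does not prompt for a subscription, it can also be selected explicitly. A short sketch using cmdlets from the Az.Accounts module. The subscription value is a placeholder.

```powershell
# List the subscriptions your account can access
Get-AzSubscription

# Switch the current session to the subscription that contains the VM
# "<subscription name or ID>" is a placeholder
Set-AzContext -Subscription "<subscription name or ID>"
```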

After logging in successfully, we need to create an upgrade disk. This can be done with this script:

# -------- PARAMETERS --------
$resourceGroup = "rg-jv-upgrade2025"
$location = "WestEurope"
$zone = ""
$diskName = "WindowsServer2025UpgradeDisk"
# Target version: server2025Upgrade, server2022Upgrade, server2019Upgrade, server2016Upgrade or server2012Upgrade
$sku = "server2025Upgrade"
# -------- END PARAMETERS --------

$publisher = "MicrosoftWindowsServer"
$offer = "WindowsServerUpgrade"
$managedDiskSKU = "Standard_LRS"

$versions = Get-AzVMImage -PublisherName $publisher -Location $location -Offer $offer -Skus $sku | Sort-Object -Descending { [version] $_.Version }
$latestString = $versions[0].Version

$image = Get-AzVMImage -Location $location `
    -PublisherName $publisher `
    -Offer $offer `
    -Skus $sku `
    -Version $latestString

if (-not (Get-AzResourceGroup -Name $resourceGroup -ErrorAction SilentlyContinue)) {
    New-AzResourceGroup -Name $resourceGroup -Location $location
}

if ($zone) {
    $diskConfig = New-AzDiskConfig -SkuName $managedDiskSKU `
        -CreateOption FromImage `
        -Zone $zone `
        -Location $location
} else {
    $diskConfig = New-AzDiskConfig -SkuName $managedDiskSKU `
        -CreateOption FromImage `
        -Location $location
}

Set-AzDiskImageReference -Disk $diskConfig -Id $image.Id -Lun 0

New-AzDisk -ResourceGroupName $resourceGroup `
    -DiskName $diskName `
    -Disk $diskConfig

View the script on my GitHub page

With the $sku parameter in the script, you can decide which version of Windows Server to upgrade to. Refer to the table in step 1 before choosing your version. Then run the script.

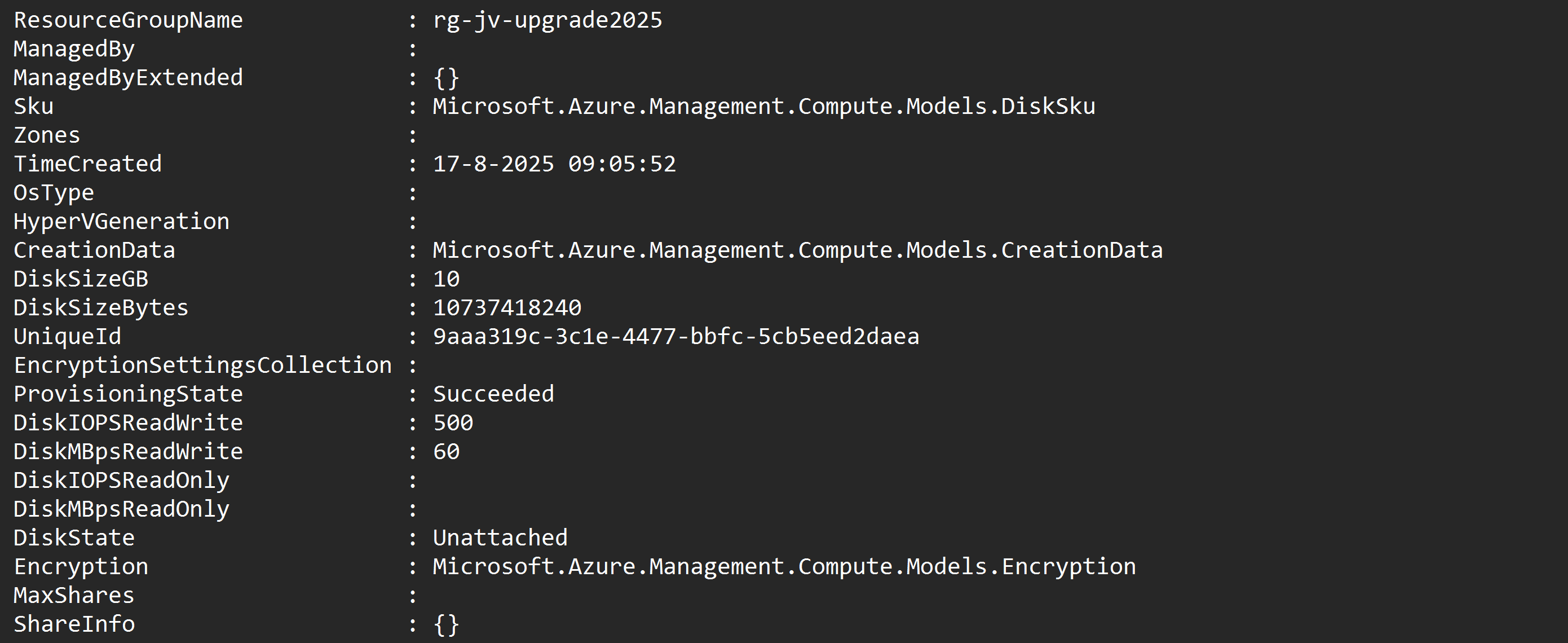

After the script has run successfully, it will give a summary of the performed action:

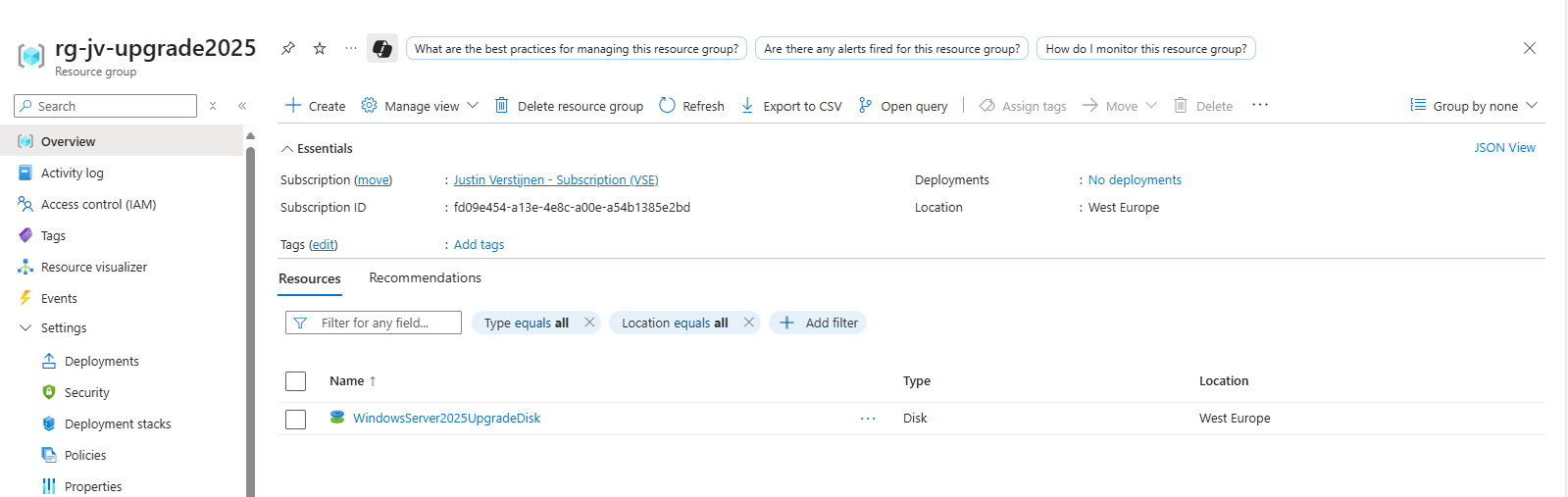

After running the script in the Azure Powershell window, the disk is available in the Azure Portal:

Step 3: Assign upgrade media to VM

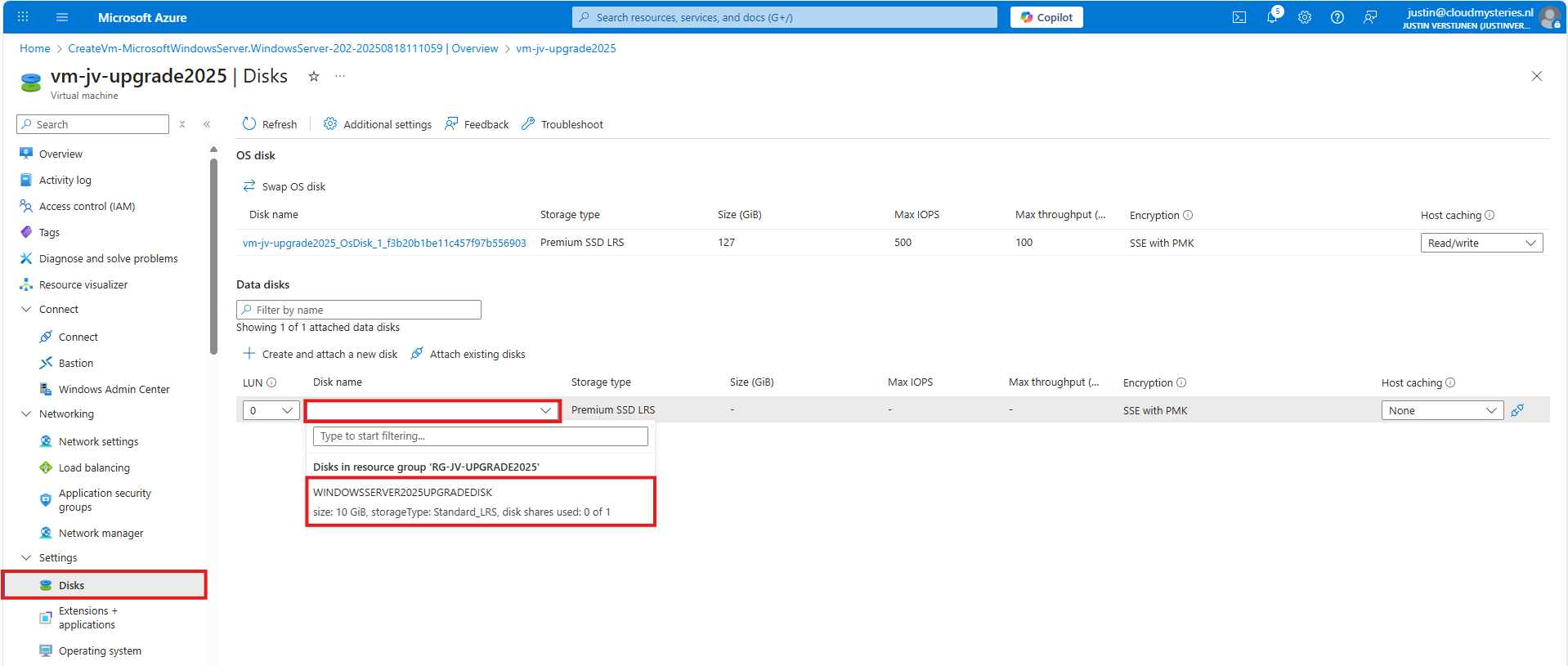

After creating the upgrade media we have to assign it to the virtual machine we want to upgrade. You can do this in the Azure Portal by going to the virtual machine. After that, hit Disks.

Then select to attach an existing disk, and select the upgrade media you have created through Powershell.

Note: The disk and virtual machine have to be in the same resource group to be attached.
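Attaching the disk can also be done from the same Azure PowerShell session. This is a minimal sketch, assuming the disk and the VM are in the resource group used in the script above. The VM name is a placeholder.

```powershell
# Names from this post, except the VM name, which is a placeholder
$resourceGroup = "rg-jv-upgrade2025"
$vmName        = "<name of the VM to upgrade>"
$diskName      = "WindowsServer2025UpgradeDisk"

$vm   = Get-AzVM   -ResourceGroupName $resourceGroup -Name $vmName
$disk = Get-AzDisk -ResourceGroupName $resourceGroup -DiskName $diskName

# Pick the lowest LUN that is not in use yet (LUN 0 when no data disks are attached)
$usedLuns = @($vm.StorageProfile.DataDisks | ForEach-Object { $_.Lun })
$lun = 0
while ($usedLuns -contains $lun) { $lun++ }

# Attach the existing managed disk as a data disk and push the change to Azure
$vm = Add-AzVMDataDisk -VM $vm -Name $diskName -ManagedDiskId $disk.Id -Lun $lun -CreateOption Attach
Update-AzVM -ResourceGroupName $resourceGroup -VM $vm
```

After the upgrade has finished, the disk can be detached again in the same “Disks” blade.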

Step 4: Start upgrade of Windows Server

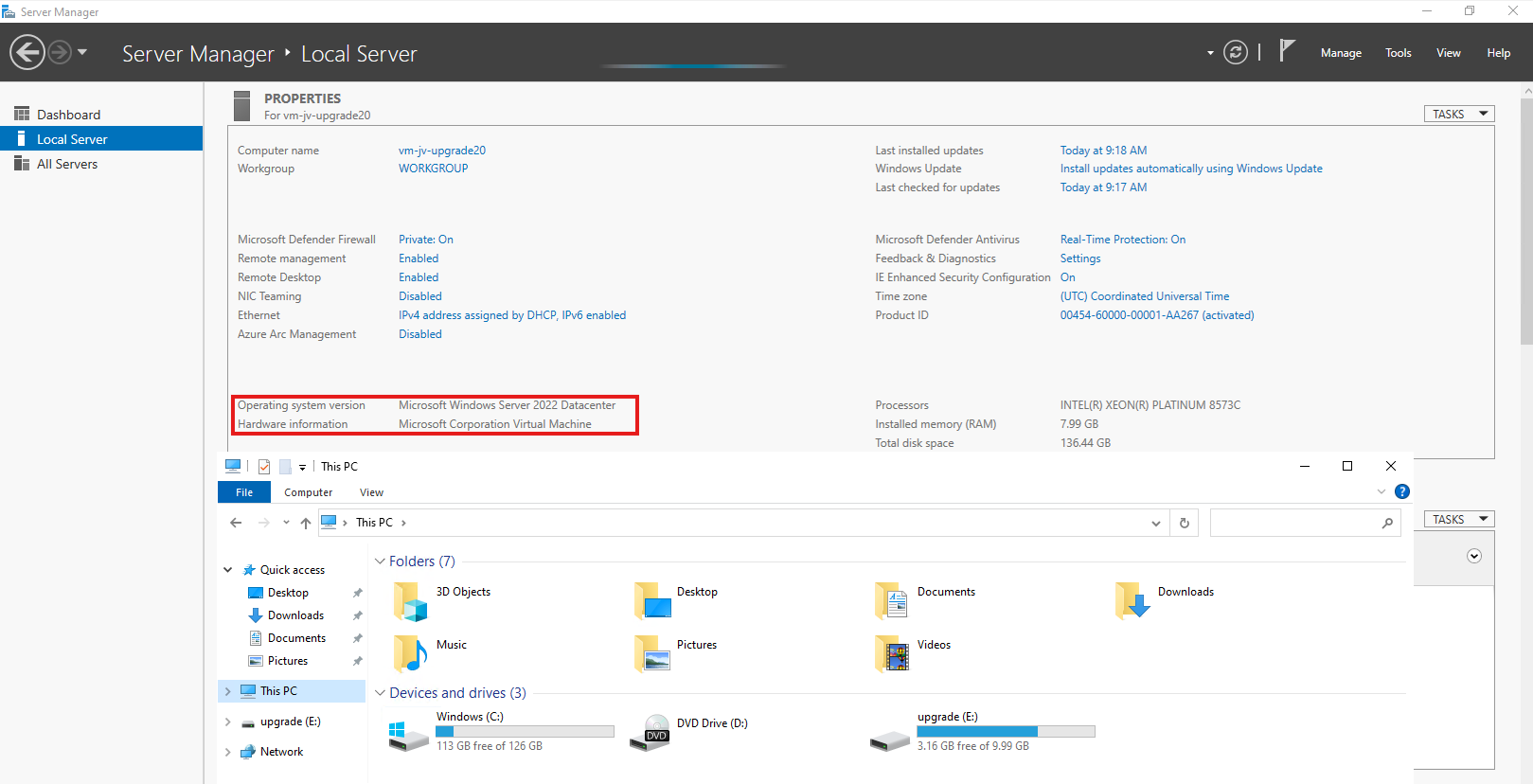

Now that we have prepared our environment for the upgrade of Windows Server, we can start the upgrade itself. For the purpose of this guide, I have quickly spun up a Windows Server 2022 machine to upgrade to Windows Server 2025.

Log in to the virtual machine and let’s do some pre-upgrade checks:

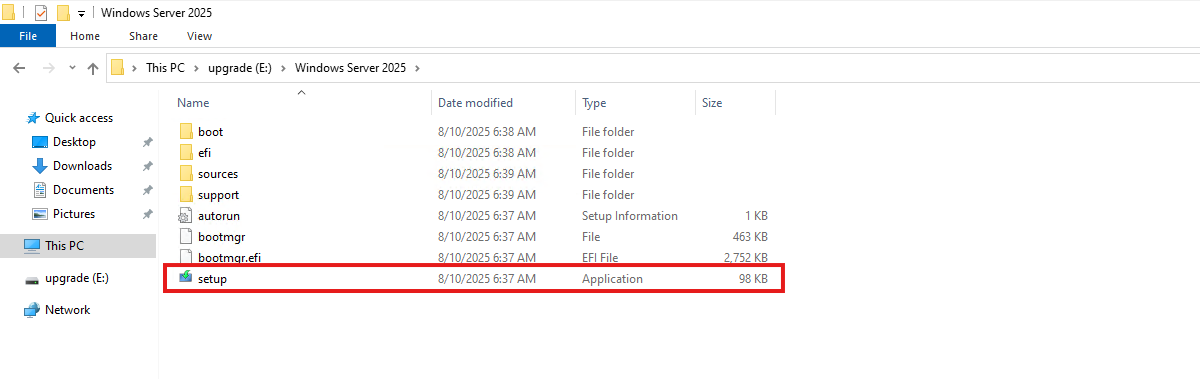

As you can see, the machine is on Windows Server 2022 Datacenter and we have enough disk space to perform this action. Now we can start the upgrade by opening Windows Explorer and going to the upgrade disk we just created and assigned:

When the volume is not available in Windows Explorer, you first have to initialize the disk in Disk Management (diskmgmt.msc) in Windows. Then it will be available.
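The pre-upgrade checks and the disk check can also be done from an elevated PowerShell session inside the VM. This sketch assumes the system drive is C: and that the upgrade disk shows up as offline. If the disk appears in a different state in your environment, use Disk Management as described above.

```powershell
# Current OS edition and version
Get-ComputerInfo -Property OsName, OsVersion

# Free space on the system drive, in GB
Get-Volume -DriveLetter C |
    Select-Object DriveLetter,
        @{ Name = "FreeGB"; Expression = { [math]::Round($_.SizeRemaining / 1GB, 1) } }

# Assumption: the upgrade disk is attached but offline.
# List offline disks first and bring one online only after confirming it is the upgrade disk.
Get-Disk | Where-Object IsOffline
# Set-Disk -Number <disk number> -IsOffline $false
```

The `Set-Disk` line is commented out on purpose, so nothing changes until you have filled in the right disk number.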

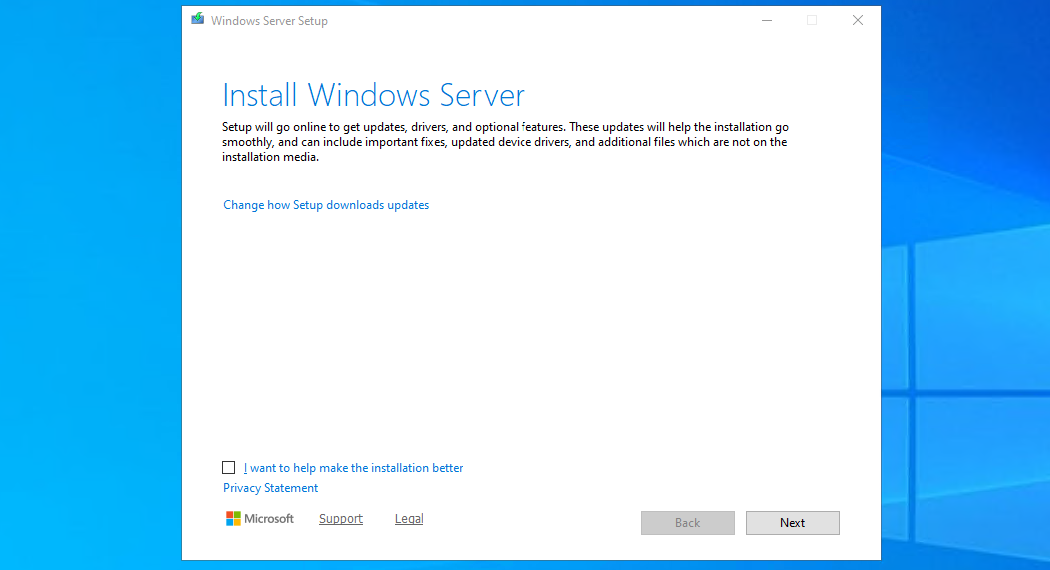

Open the upgrade volume and start setup.exe. The startup will take about 2 minutes.

Click “Next”. Then there will be a short break of around 30 seconds for searching for updates.

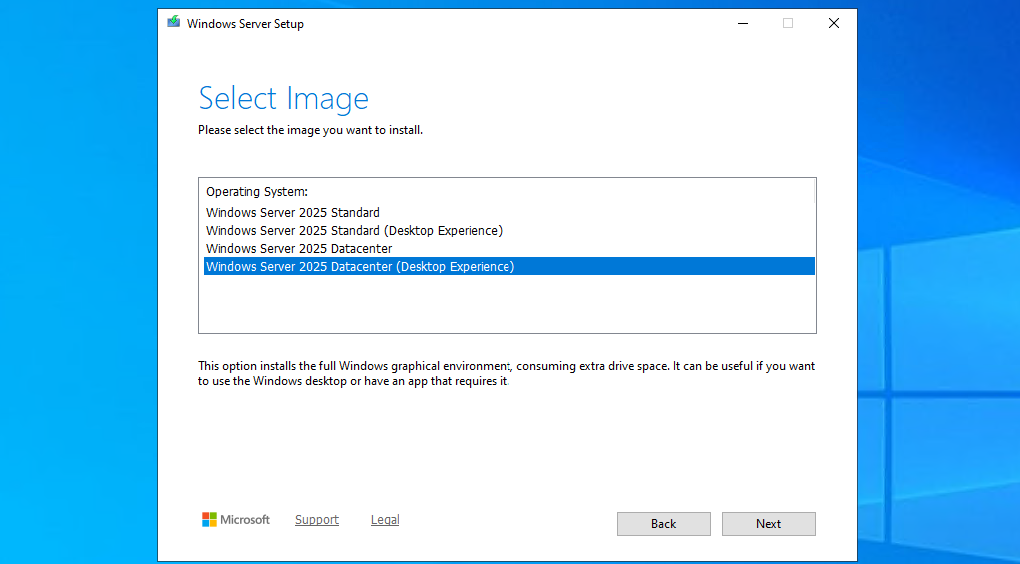

Then select your preferred version. Note that the default option is to install without graphical environment/Desktop Experience. Set this to your preferred version and click “Next”.

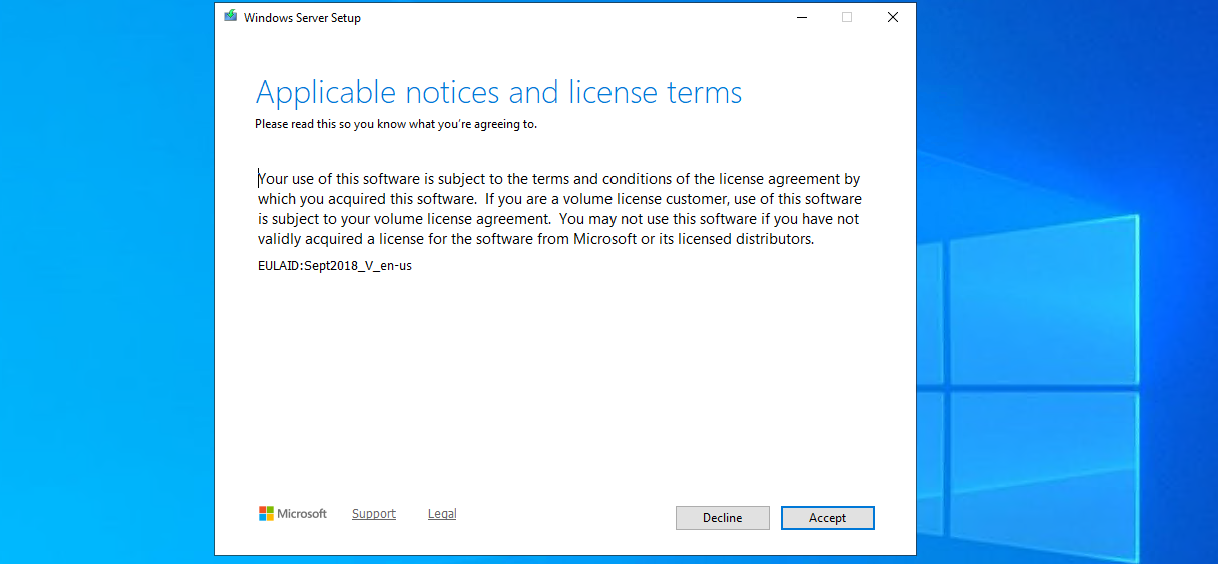

Of course we have read the license terms. Click “Accept”.

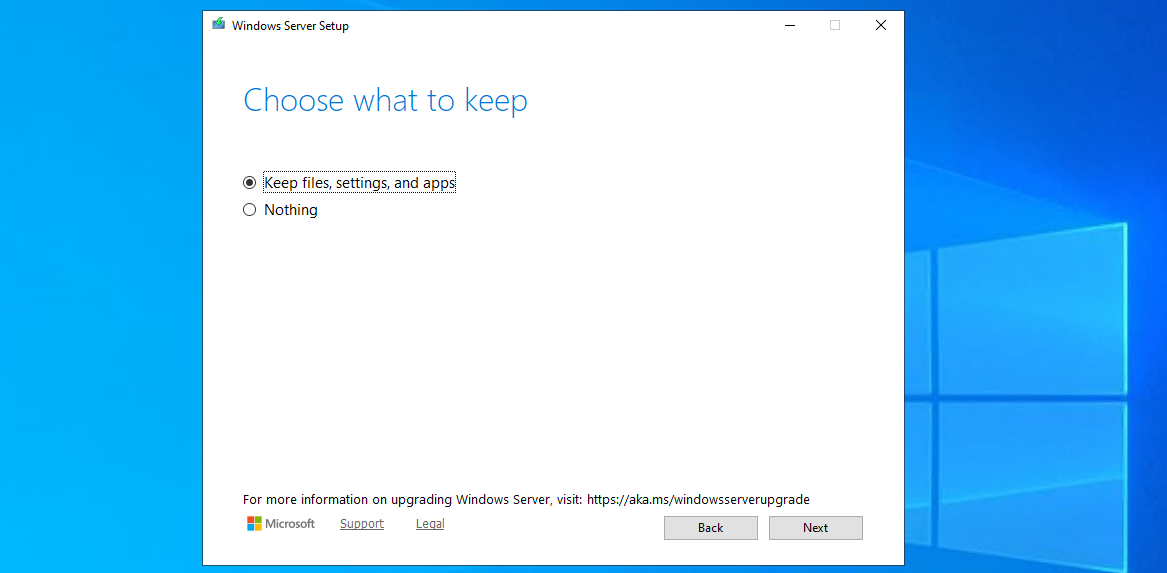

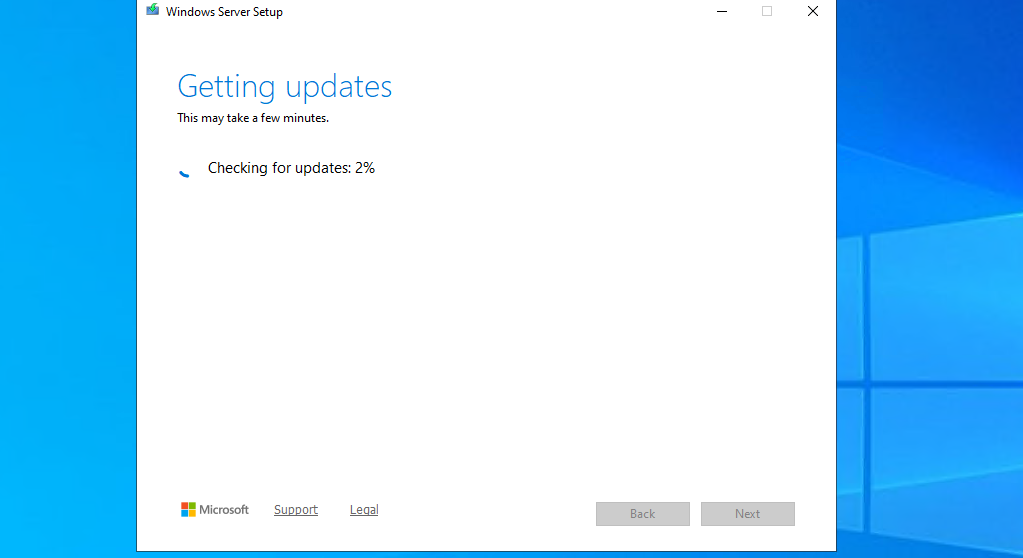

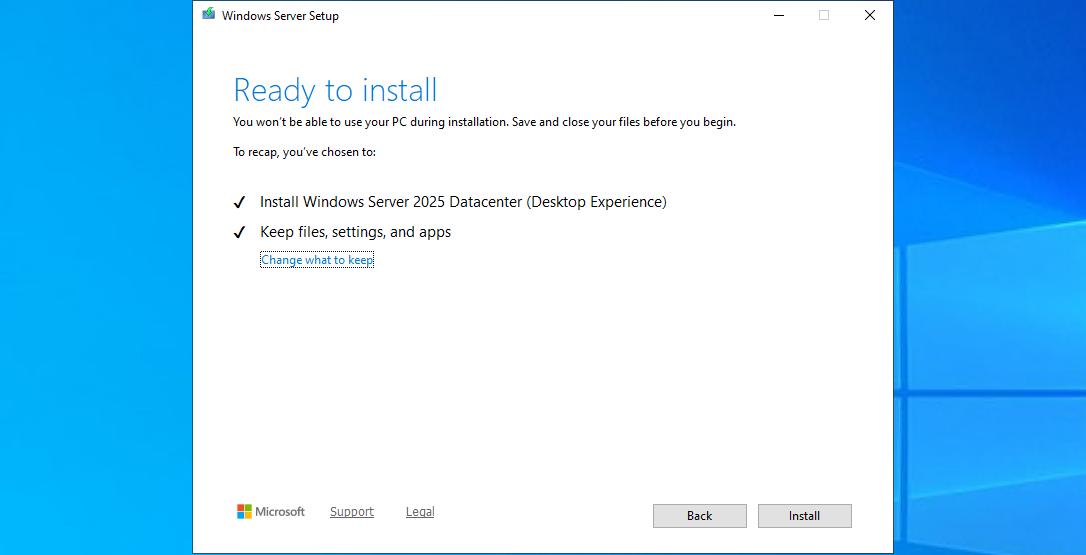

Choose here to keep files, settings and apps to make it an in-place upgrade. Click “Next”. There will be another short break of a few minutes for the setup to download some updates.

This process can take 45 minutes up to 2 hours, depending on the workload and the size of the virtual machine. Have a little patience during this upgrade.

Step 5: Check status during upgrade

When the machine restarts, the RDP connection will be lost. However, you can check the status of the upgrade using the Azure Portal.

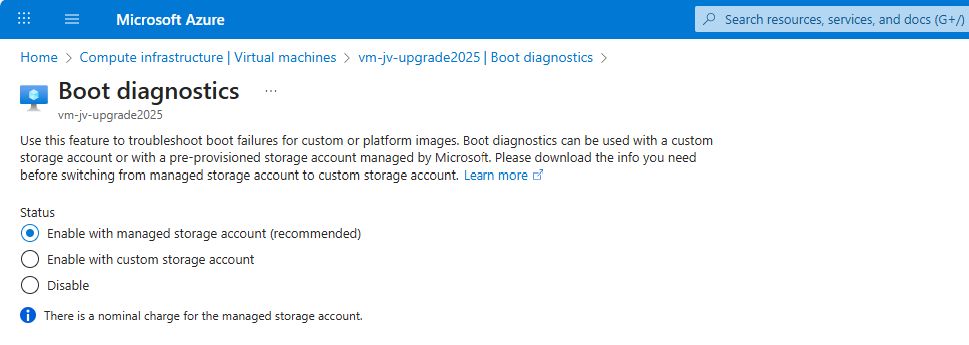

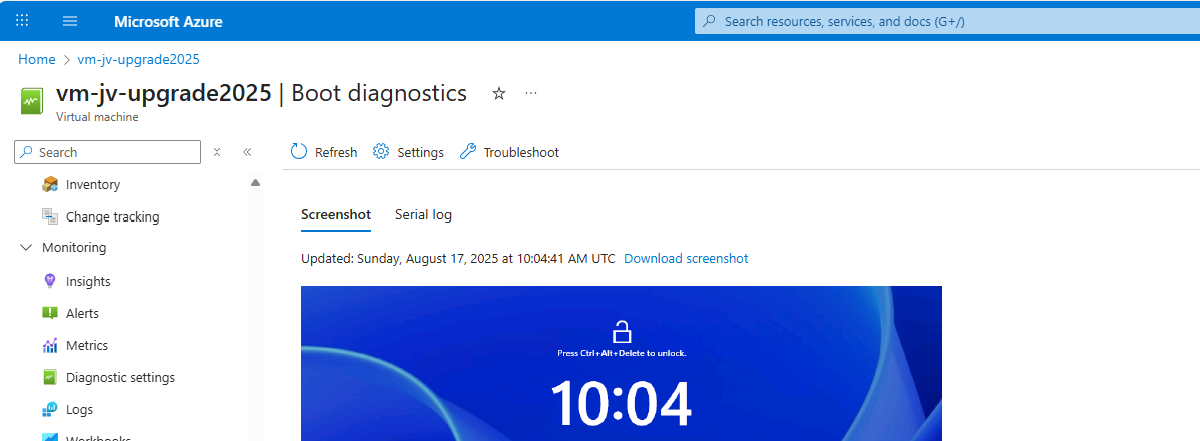

Go to the virtual machine you are upgrading, and go to: “Boot diagnostics”

Then configure this for the time being if not already done. Click on “Settings”.

By default, select a managed storage account. If you use a custom storage account for this purpose, select the custom option and then your custom storage account.

We can check the status in the Azure Portal after the OS has restarted.

The upgrade went very fast in my case, within 30 minutes.

Step 6: After upgrading checks

After the upgrade process is completed, I recommend testing the machine before putting it back into production. Every change to a machine can alter how it works, especially with production workloads.

A checklist I can recommend for testing is:

- Check all Services for 3rd party applications

- Check if all disks and volumes are present in disk management

- Check all processes

- Check an application client side (like CRM/ERP/SQL)

- Check event logs in the virtual machine for possible errors

After these things are checked and no errors occurred, the upgrade has succeeded.
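Part of this checklist can be run from an elevated PowerShell session on the upgraded server. This is a rough sketch using built-in cmdlets. The 2-hour window for the event logs is an arbitrary choice, and application-specific checks still have to be done by hand.

```powershell
# Confirm the new OS version
Get-ComputerInfo -Property OsName, OsVersion

# Services set to start automatically that are not running
Get-Service |
    Where-Object { $_.StartType -eq "Automatic" -and $_.Status -ne "Running" } |
    Select-Object Name, DisplayName, Status

# Disks and volumes present after the upgrade
Get-Disk   | Select-Object Number, FriendlyName, OperationalStatus, Size
Get-Volume | Select-Object DriveLetter, FileSystemLabel, HealthStatus, SizeRemaining

# Critical and error events from the last 2 hours in the System and Application logs
Get-WinEvent -FilterHashtable @{
    LogName   = "System", "Application"
    Level     = 1, 2
    StartTime = (Get-Date).AddHours(-2)
} -ErrorAction SilentlyContinue |
    Select-Object TimeCreated, LogName, Id, ProviderName, Message
```

Some services with a delayed or trigger start are listed as stopped even on a healthy machine, so compare the output with what was running before the upgrade.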

Summary

Upgrading a Windows Server to Server 2025 on Azure is relatively easy, although it can be somewhat challenging when starting out. It is no more than creating an upgrade disk, attaching it to the machine and starting the upgrade, just like before with on-premises solutions.

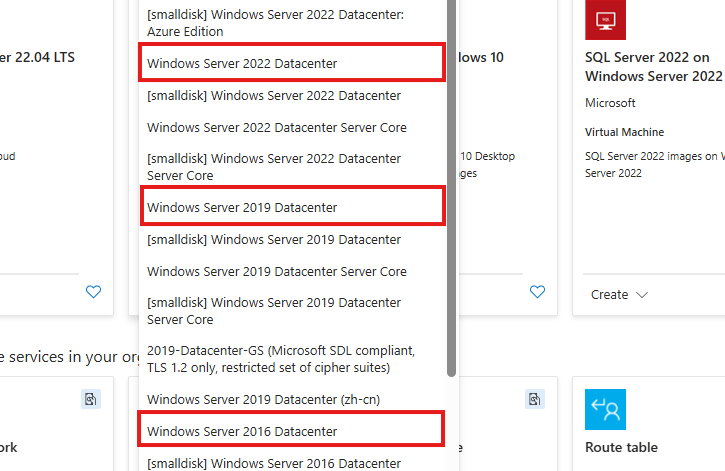

The only downside is that Microsoft does not support upgrading Windows Server Azure Editions (ServerTurbine) yet. We are waiting with high hopes for this. Upgrading only works on the default Windows Server versions:

Thank you for reading this guide and I hope it helped you upgrade your server to the latest and most secure version.

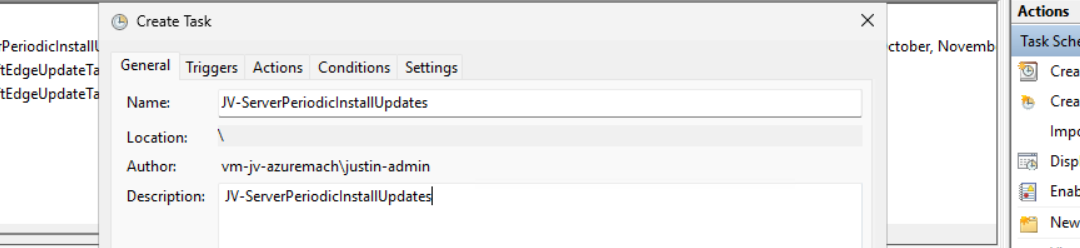

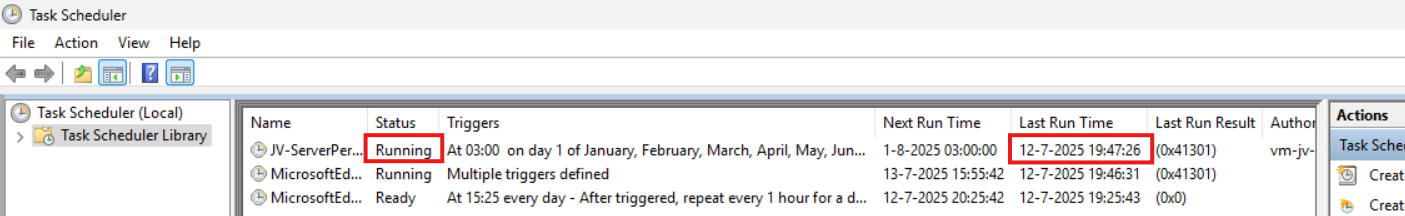

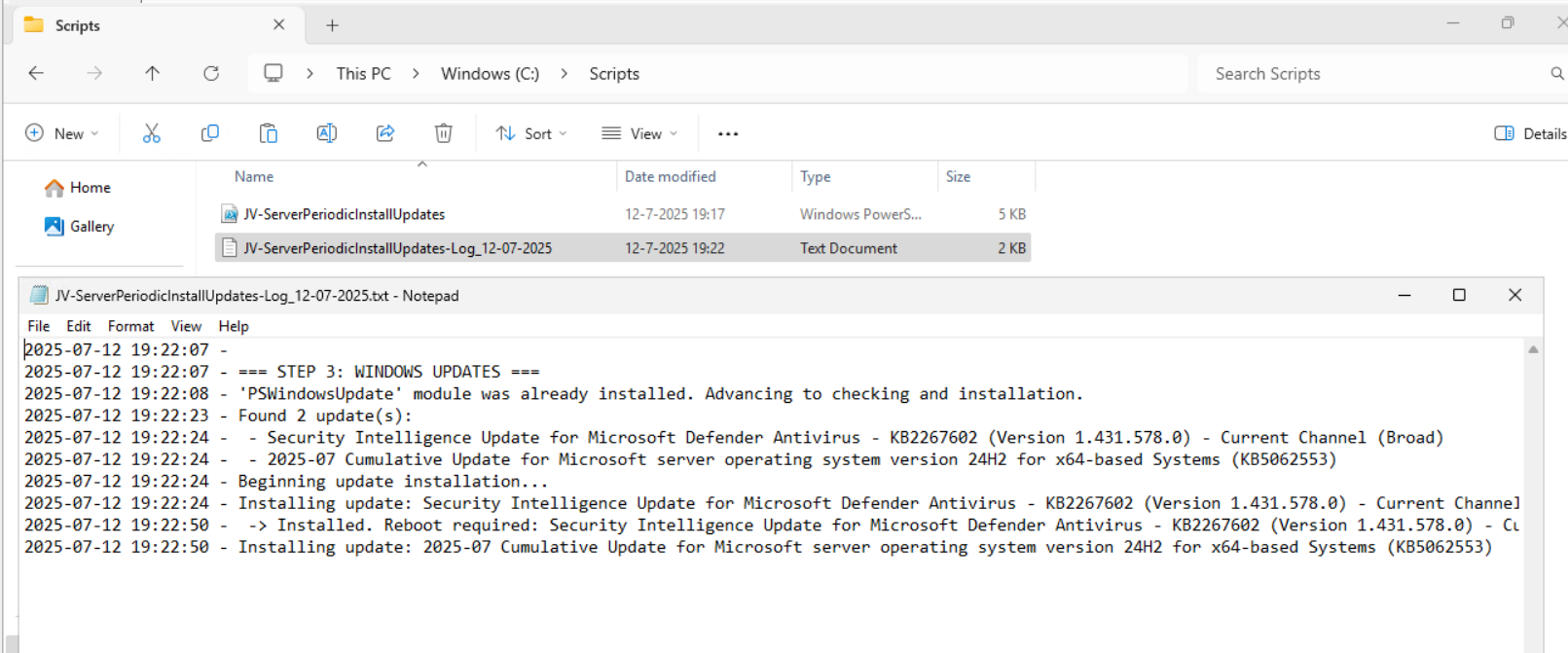

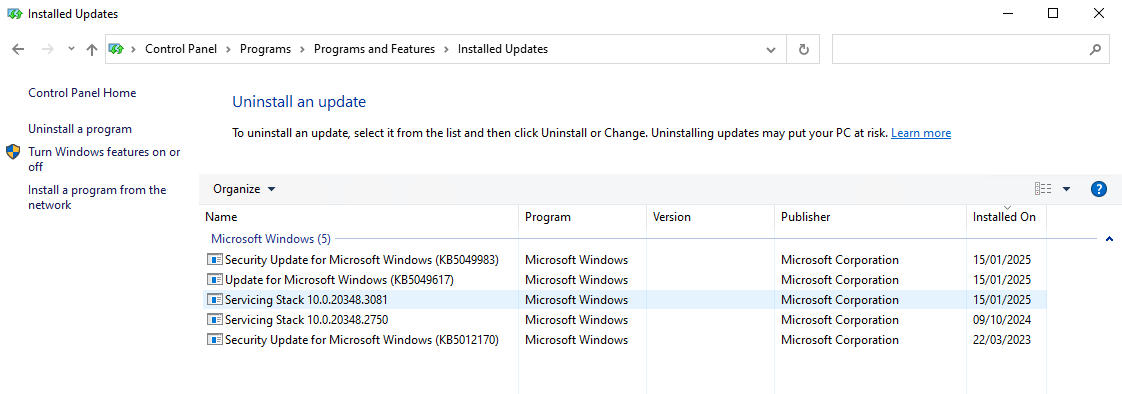

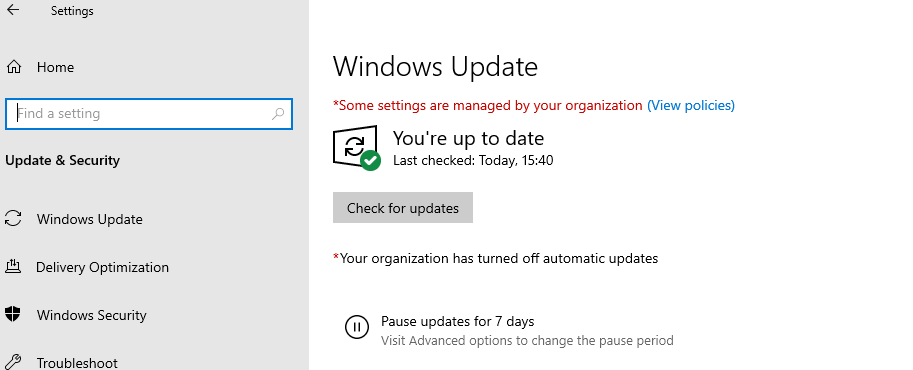

Installing Windows Updates through PowerShell (script)

Sometimes we want to install updates by hand because of the need for fast patching. But logging into every server and installing them manually is a hell of a task and takes a lot of time.

I have made a very simple script to install Windows Updates by hand using PowerShell, including logging, so you know exactly which updates were installed for monitoring later on.

The good part about this script/PowerShell module is that it supports both Windows Client and Windows Server installations.

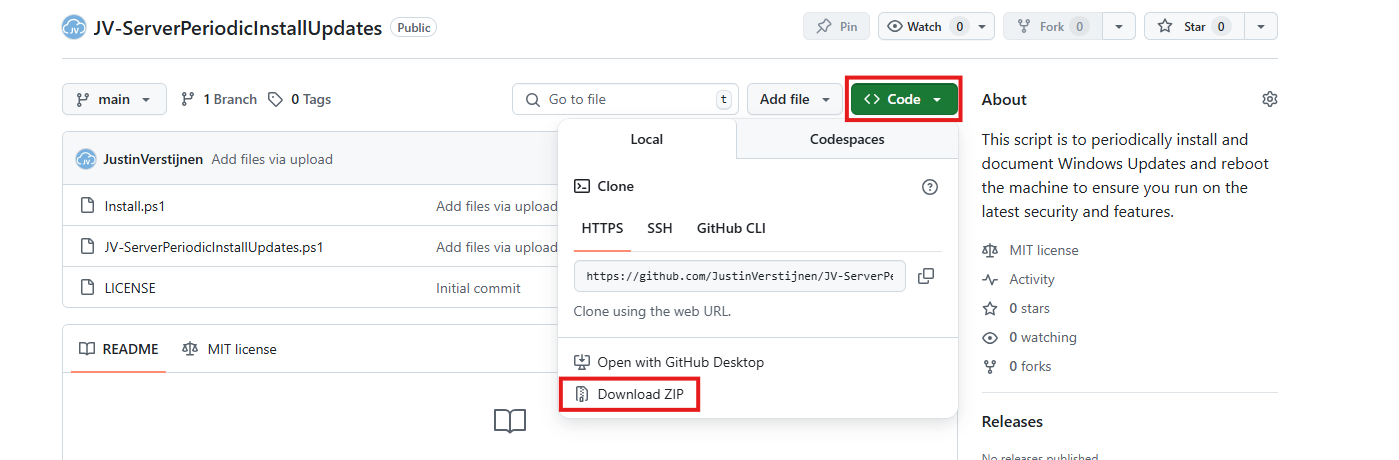

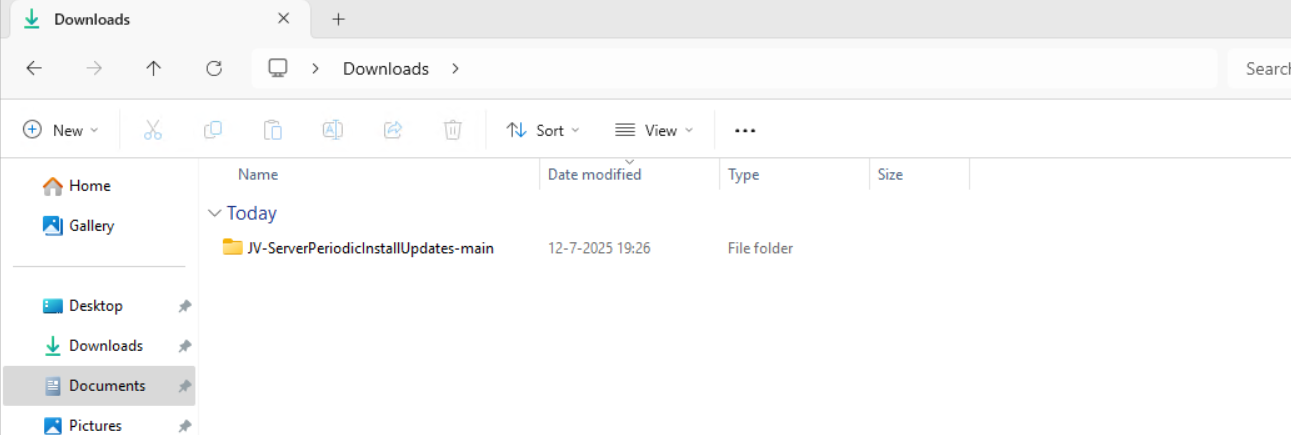

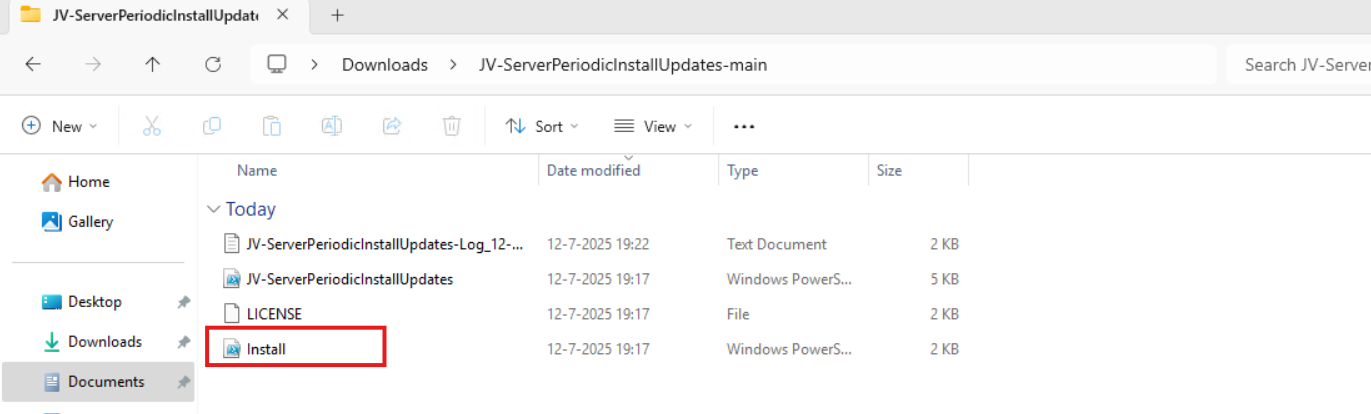

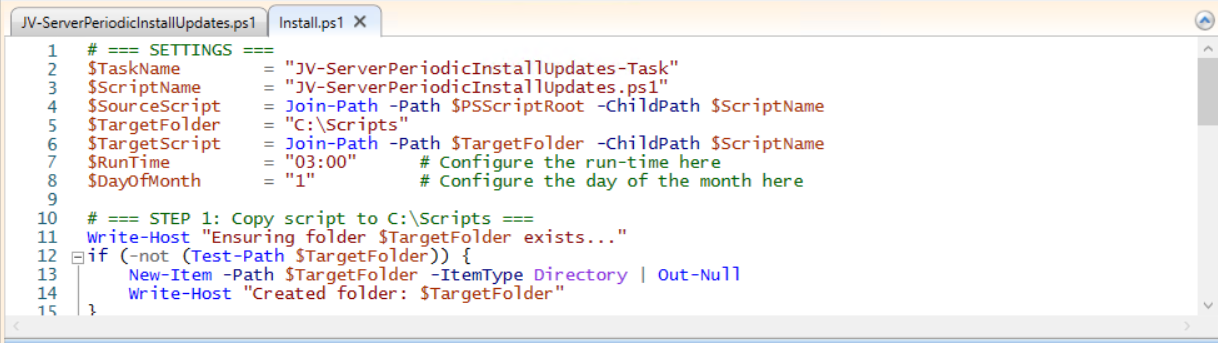

Where to download this script?

For the fast pass, my script can be downloaded here:

What does the Windows Updates script do?

The script I have made focusses primarily on searching for and installing the latest Windows Updates. It also creates a log file to exactly know what updates were installed for monitoring and documentation purposes.

The script itself has 6 steps:

- Ensuring it runs as Administrator

- Enable logging

- Saves a log file in the same directory and with 100KB limit

- Searching Windows Updates and installing them

- Rebooting server to apply latest updates
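To give an idea of what these steps can look like in PowerShell, here is a rough sketch. It is not the script from this post: it assumes the community module PSWindowsUpdate from the PowerShell Gallery, a hypothetical log file name, and a simple interpretation of the 100KB limit (start a new log when the file grows past it).

```powershell
# 1. Stop when the session is not elevated
$identity  = [Security.Principal.WindowsIdentity]::GetCurrent()
$principal = [Security.Principal.WindowsPrincipal]::new($identity)
if (-not $principal.IsInRole([Security.Principal.WindowsBuiltInRole]::Administrator)) {
    throw "Run this script as Administrator."
}

# 2. Log next to the script and start over when the file grows past 100 KB
$logFile = Join-Path $PSScriptRoot "WindowsUpdate.log"   # hypothetical file name
if ((Test-Path $logFile) -and ((Get-Item $logFile).Length -gt 100KB)) {
    Remove-Item $logFile
}
Start-Transcript -Path $logFile -Append

# 3. Search for updates and install them
# Assumption: the PSWindowsUpdate module is used for this
if (-not (Get-Module -ListAvailable -Name PSWindowsUpdate)) {
    Install-Module -Name PSWindowsUpdate -Force
}
Import-Module PSWindowsUpdate
Get-WindowsUpdate -Install -AcceptAll -IgnoreReboot

Stop-Transcript

# 4. Reboot to apply the updates
Restart-Computer -Force
```

Save the sketch as a .ps1 file before running it, because `$PSScriptRoot` is empty in an interactive session.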

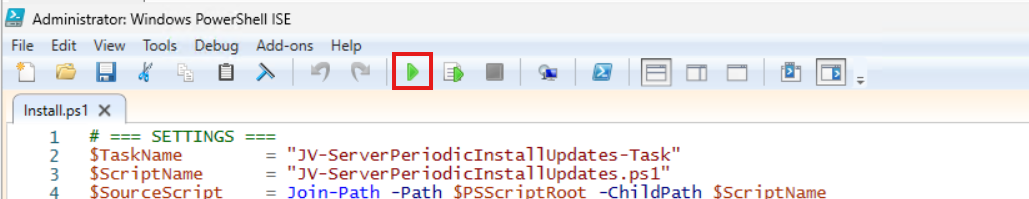

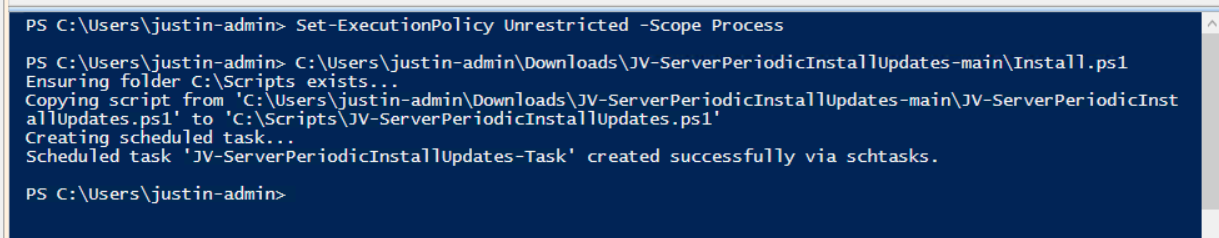

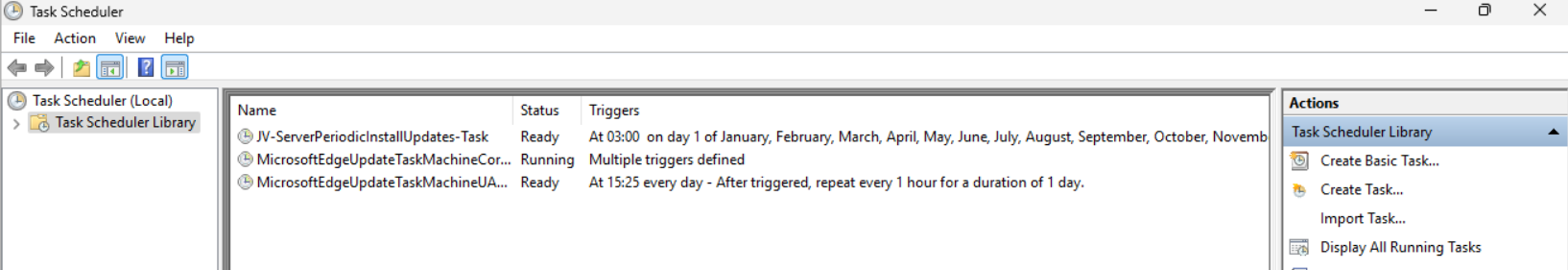

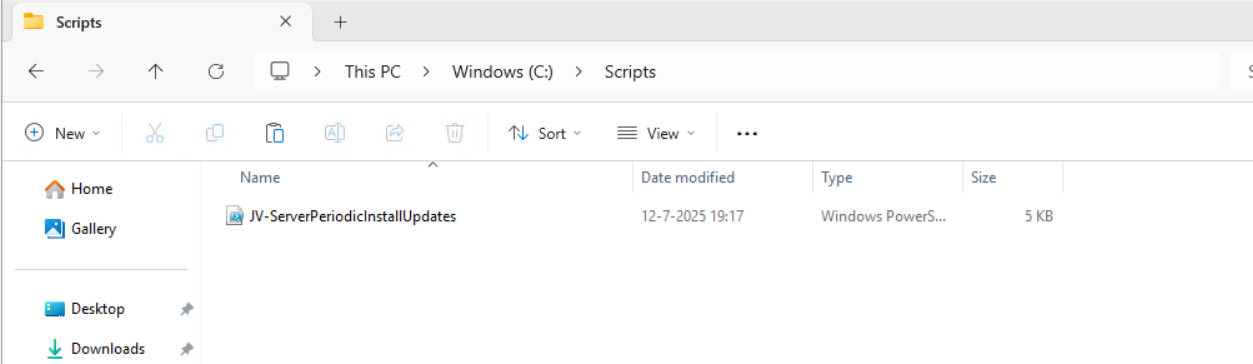

How to install the Windows Updates script automatically